Advanced Quant Trading: Mastering Mean Reversion and Volatility Regimes

A Data-Driven Framework for Institutional-Grade Quant Trading Performance

Download Source code using the button at the end of this article:

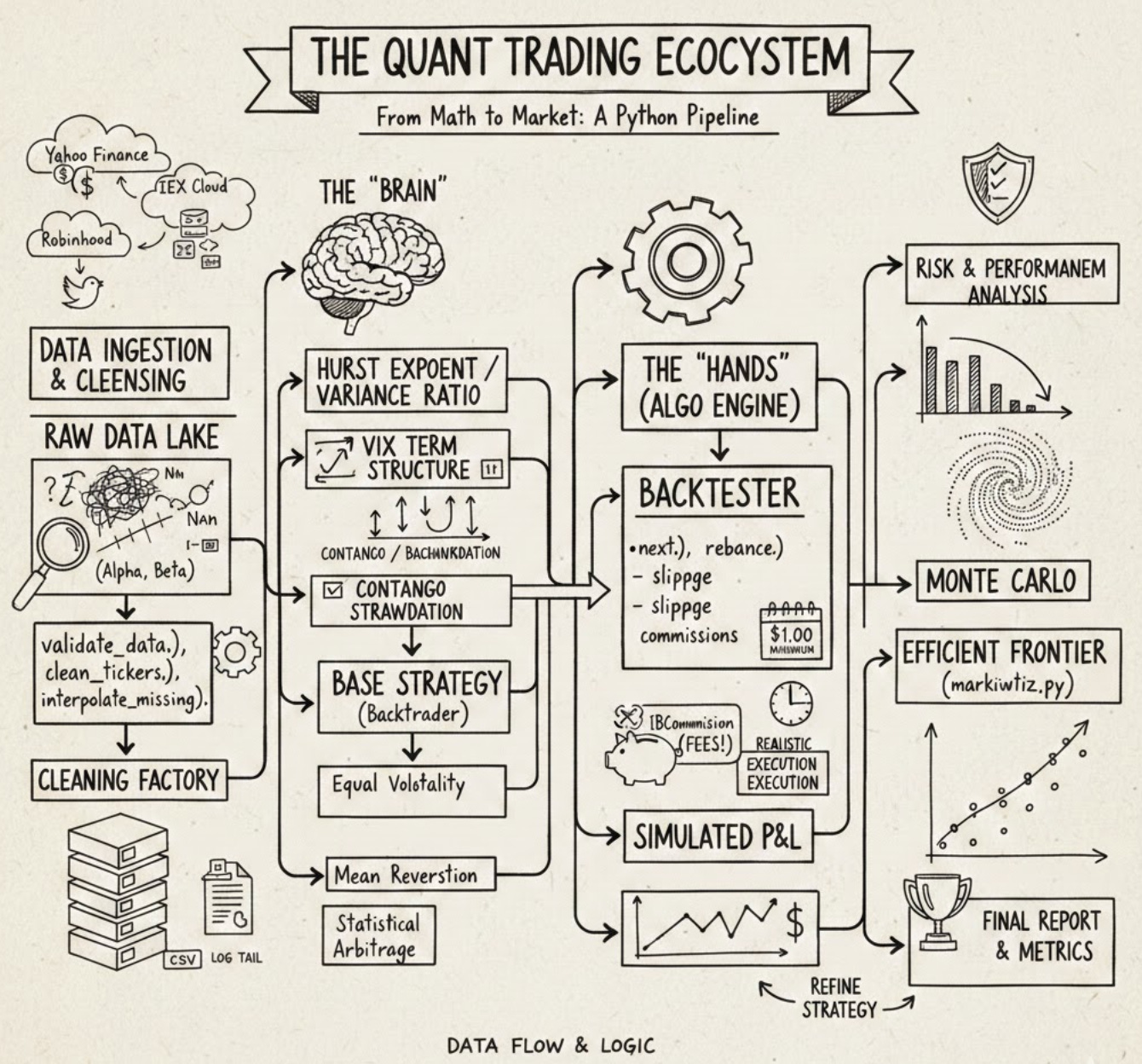

In the fast-evolving world of algorithmic finance, the line between a profitable strategy and a failed experiment often lies in the quality of the underlying framework. This article provides a comprehensive deep dive into a production-grade Quant Trading ecosystem, built from the ground up using Python and Backtrader. Whether you are an aspiring quantitative researcher or an experienced developer, you will explore the full lifecycle of systematic trading — ranging from multi-source data ingestion (Yahoo Finance, IEX, and Robinhood) to advanced statistical validation using Hurst Exponents and Variance Ratios. Readers can expect to find a modular architecture designed for real-world robustness, featuring custom risk analyzers like the Sortino Ratio, sophisticated rebalancing logic, and automated data cleaning routines. By the end of this guide, you will learn how to bridge the gap between abstract mathematical theory and a functional execution engine, gaining the tools necessary to build, backtest, and refine institutional-quality Quant Trading strategies with scientific rigor and engineering precision.

# file path: backtest/run.py

import datetime

import os.path

import argparse

import importlib

import dateutil.parser

import pandas as pd

import numpy as np

import backtrader as bt

from backtrader import TimeFrame

from .util import commission, observers, analyzers

from .util import universe as universe_utilThe import list assembles standard libraries, numeric/data tools, the backtesting engine, and our local utilities that the run entry point needs to orchestrate a backtest. datetime and os.path are used for handling date arithmetic and filesystem paths when locating price and VIX files; argparse and importlib enable command-line argument parsing and dynamic loading of a strategy module at runtime so the runner can invoke different strategies without hard-coding them; dateutil.parser is used to robustly convert user-supplied date strings into datetime objects for configuration of the backtest window. pandas and numpy provide the DataFrame/array operations used to shape raw market data and VIX term-structure series before they are passed into the engine. backtrader and the TimeFrame symbol are pulled in because the backtest runner constructs and configures a backtrader engine instance and needs timeframe semantics for resampling or feeding data. The local utilities commission, observers, and analyzers supply project-specific backtrader extensions for trade cost modeling, runtime observability, and performance measurement (including the Sortino analysis invoked later), while universe as universe_util centralizes ticker normalization and universe-group logic used to prepare the list of symbols the runner will request. This file’s imports mirror patterns elsewhere in the project—backtrader is a core dependency imported in multiple modules, and the util pieces match the same responsibilities seen in other files, with a small namespacing variance where some modules import those utilities from the package root while the runner references them through the util subpackage.

# file path: backtest/run.py

def clean_tickers(tickers, start, end):

data_path = os.path.join(os.path.dirname(__file__), ‘../data/price/’)

out_tickers = []

for ticker in tickers:

d = pd.read_csv(data_path + ticker + ‘.csv’, index_col=0, parse_dates=True)

if not (d.tail(1).index[0] < start or

d.head(1).index[0] > end):

out_tickers.append(ticker)

else:

print(’Data out of date range:’, ticker)

return out_tickersclean_tickers is the pre-filter the backtest runner uses to make sure the strategy only receives tickers with on-disk price series that actually overlap the requested backtest window. It computes a data directory relative to the module file, then loops over each ticker and reads its CSV into a pandas DataFrame with the first column as a datetime index. For each loaded series it inspects the earliest and latest timestamps (via head and tail) and applies a simple overlap test: if the series ends before the requested start or begins after the requested end the ticker is reported to the console as out of date range and is excluded; otherwise the ticker is appended to the output list. The function returns that filtered list of tickers. Because clean_tickers performs the date-range check up front, run_strategy downstream will only invoke analysis and plotting on tickers with at least some usable data; any file-read or parse errors are not handled inside clean_tickers and would propagate out.

# file path: tools/log/log.py

import os

from datetime import datetimeThe tools logging module needs basic filesystem access and timestamping so it can append and read time-stamped log lines; the two imports supply those capabilities. The os import brings in standard filesystem and path utilities the log, last, and tail helpers use to locate, open, and inspect the log file on disk. Importing the datetime class gives direct access to current timestamps so each log entry is created with an exact time and so recent entries can be identified when tail inspects activity. By contrast, the other import examples you’ve seen in the codebase are intra-package imports that pull the log module or combine log with fin_calc for higher-level functionality; here the module is simply pulling in core standard-library building blocks that enable writing and reading time-stamped files.

# file path: tools/log/log.py

def tail(count=5):

with open(LOG_FILE_PATH, ‘r’) as f:

total_lines_wanted = count

BLOCK_SIZE = 1024

f.seek(0, 2)

block_end_byte = f.tell()

lines_to_go = total_lines_wanted

block_number = -1

blocks = []

while lines_to_go > 0 and block_end_byte > 0:

if (block_end_byte - BLOCK_SIZE > 0):

f.seek(block_number*BLOCK_SIZE, 2)

blocks.append(f.read(BLOCK_SIZE))

else:

f.seek(0, 0)

blocks.append(f.read(block_end_byte))

lines_found = blocks[-1].count(’\n’)

lines_to_go -= lines_found

block_end_byte -= BLOCK_SIZE

block_number -= 1

all_read_text = ‘’.join(reversed(blocks))

for line in all_read_text.splitlines()[-total_lines_wanted:]:

print(line)Within the tools package’s simple logging utilities, the tail function reads and prints the last N log lines from the file referenced by LOG_FILE_PATH so clean_tickers can inspect recent activity during cleanup. It opens the log file for text reading, records the requested number of lines and a block size (1 KB), then moves the file cursor to the end to determine the file length. The function then walks backwards through the file in fixed-size blocks: for each iteration it seeks to the next block from the end (using negative block offsets) or, when the remaining bytes are smaller than a block, seeks to the file start and reads the remainder. Each read block is appended to a temporary list and the function counts how many newline characters that block contains to decrement how many more lines it needs. The loop continues until either the requested number of lines has been found or the start of the file is reached. After that it reverses the collected blocks to restore chronological order, joins them into one string, splits into lines, and prints the final requested slice of lines to the console. Control flow covers the happy path of finding enough lines via multiple block reads and the edge case where the entire file is smaller than the requested tail length, in which case the function simply prints whatever lines exist. Conceptually this implements a backward-chunk-reading pattern to avoid loading the whole log file into memory; it differs from the last and get_last_date helpers, which operate in binary mode and search for a single trailing newline by stepping backward byte-by-byte.

# file path: backtest/run.py

def run_strategy(strategy, tickers=None, start=’1900-01-01’, end=’2100-01-01’, cash=100000.0,

verbose=False, plot=False, plotreturns=False, universe=None, exclude=[],

kwargs=None):

start_date = dateutil.parser.isoparse(start)

end_date = dateutil.parser.isoparse(end)

tickers = tickers if (tickers or universe) else [’SPY’]

if universe:

u = universe_util.get(universe)()

tickers = [a for a in u.assets if a not in exclude]

tickers = clean_tickers(tickers, start_date, end_date)

module_path = f’.algos.{strategy}’

module = importlib.import_module(module_path, ‘backtest’)

strategy = getattr(module, strategy)

cerebro = bt.Cerebro(

stdstats=not plotreturns,

cheat_on_open=strategy.params.cheat_on_open

)

cerebro.addstrategy(strategy, verbose=verbose)

for ticker in tickers:

datapath = os.path.join(os.path.dirname(__file__), f’../data/price/{ticker}.csv’)

data = bt.feeds.YahooFinanceCSVData(

dataname=datapath,

fromdate=start_date,

todate=end_date,

reverse=False,

adjclose=False,

plot=not plotreturns)

cerebro.adddata(data)

cerebro.broker.setcash(cash)

if plotreturns:

cerebro.addobserver(observers.Value)

cerebro.addanalyzer(bt.analyzers.SharpeRatio,

riskfreerate=strategy.params.riskfreerate,

timeframe=TimeFrame.Days,

annualize=True)

cerebro.addanalyzer(analyzers.Sortino,

riskfreerate=strategy.params.riskfreerate,

timeframe=TimeFrame.Days,

annualize=True)

cerebro.addanalyzer(bt.analyzers.Returns)

cerebro.addanalyzer(bt.analyzers.DrawDown)

cerebro.addanalyzer(bt.analyzers.PositionsValue)

cerebro.addanalyzer(bt.analyzers.GrossLeverage)

results = cerebro.run(preload=False)

start_value = cash

end_value = cerebro.broker.getvalue()

print(’Starting Portfolio Value:\t{:.2f}’.format(cash))

print(’Final Portfolio Value:\t\t{:.2f}’.format(end_value))

drawdown = results[0].analyzers.drawdown.get_analysis()[’max’][’drawdown’]

cagr = results[0].analyzers.returns.get_analysis()[’rnorm100’]

sharpe = results[0].analyzers.sharperatio.get_analysis()[’sharperatio’]

sortino = results[0].analyzers.sortino.get_analysis()[’sortino’]

positions = results[0].analyzers.positionsvalue.get_analysis()

avg_positions = np.mean([sum(d != 0.0 for d in i) for i in positions.values()])

leverage = results[0].analyzers.grossleverage.get_analysis()

avg_leverage = np.mean([abs(i) for i in leverage.values()])

sharpe = ‘None’ if sharpe is None else round(sharpe, 5)

print(’ROI:\t\t{:.2f}%’.format(100.0 * ((end_value / start_value) - 1.0)))

analyzer_results = []

analyzer_results.append(’Max Drawdown:\t{:.2f}’.format(drawdown))

analyzer_results.append(’CAGR:\t\t{:.2f}’.format(cagr))

analyzer_results.append(’Sharpe:\t\t{}’.format(sharpe))

analyzer_results.append(’Sortino:\t{:.5f}’.format(sortino))

analyzer_results.append(’Positions:\t{:.5f}’.format(avg_positions))

analyzer_results.append(’Leverage:\t{:.5f}’.format(avg_leverage))

print(’\n’.join(analyzer_results))

if plot:

cerebro.plot()Back in run — continuing with run_strategy, the function orchestrates the end-to-end backtest run by preparing inputs, wiring them into Backtrader, executing the strategy, and summarizing the analyzers. It first turns the start and end strings into datetime objects and decides the ticker universe: if a universe name is provided it resolves that with universe_util.get and filters out any excluded tickers; if no tickers or universe are supplied it falls back to a single default asset. It then calls clean_tickers to validate each ticker’s local CSV price file against the requested date range, so only assets with data inside the window proceed. The strategy implementation is imported dynamically using importlib and the class object is retrieved so the test can instantiate the exact algorithm requested. A Backtrader Cerebro engine is created with standard statistics enabled or disabled depending on whether plotreturns is requested, and the cheat-on-open behavior is set from the strategy’s parameters

# file path: tools/plot.py

import os

import argparse

from datetime import datetime

import numpy as np

import pandas as pd

from pandas.plotting import register_matplotlib_converters

from matplotlib import pyplot as pltThe file brings in standard and third-party libraries needed to turn time-indexed strategy output into numerical results and visualizations: os is used for filesystem work such as creating or resolving paths and saving plot files; argparse provides CLI parsing so the plotting utilities can be invoked or parameterized from a command line; datetime.datetime supplies timestamp parsing and construction for axis labels and for converting string dates into temporal objects; numpy (aliased as np) supplies the vectorized numeric operations used to compute log returns and other array math; pandas (aliased as pd) is used to manipulate time-series data coming from Strategy.log, to hold the DataFrame/Series objects that feed the plots; pandas.plotting.register_matplotlib_converters ensures pandas datetime indices are correctly handled by the plotting backend so time axes render properly; and matplotlib.pyplot (aliased as plt) is the plotting API used to draw, format, and persist the performance charts. These imports match the project’s pattern where many modules pull in os, argparse, pandas and numpy for CLI-driven data workflows, but plot adds the datetime converter and pyplot imports specific to its standalone role of rendering time-series performance visuals (where some other modules import date instead of datetime or domain libraries like pypfopt for optimization).

# file path: tools/plot.py

def plot(data, plot_returns=False):

if plot_returns:

data = _log_returns(data)

plt.clf()

for y in data:

print(y.name)

plt.plot(y, label=y.name)

plt.legend()

plt.tight_layout()

plt.show()When run_strategy hands a collection of time series into plot, plot first decides whether those series should be converted into cumulative log returns by delegating to _log_returns; if plot_returns is True, _log_returns transforms each series by taking the log, differencing to get period returns, forcing the first value to zero, and then forming a cumulative return series. After that optional transformation, plot clears the current matplotlib figure, then iterates over the supplied series (it expects iterable objects like pandas Series that carry a name), prints each series name to the console so you can see which trace is being rendered, and plots each trace on the same axes. When all series are drawn it enables the legend, tightens the layout to avoid clipping, and displays the figure. The control flow is a simple guard on plot_returns followed by a loop over data; its observable side effects are console output for each series name and the interactive plot window used to visualize raw price series or cumulative log-return performance for strategy comparison.

# file path: tools/plot.py

def _log_returns(data):

log_d = []

for d in data:

r = np.log(d).diff()

r.iloc[0] = 0.0

r = np.cumprod(r + 1) - 1

log_d.append(r)

return log_dIn the backtester pipeline this helper prepares series for the performance plots by turning each incoming price-like series into a compounded return curve that the plot routine can draw. For each series in the provided iterable it takes the natural-log transform and differences it to get period log-returns, replaces the first-period result with zero to avoid a missing value at the start, then treats those period values as one-plus-returns and takes a cumulative product and subtracts one to produce a cumulative, compounded return series; each resulting series is collected and returned in the same order as the input. The loop is the simple accumulator that implements that sequence for every asset/strategy series you pass in; plot calls this when plot_returns is requested and then graphs each returned series (using the series name as the label). Compared with the standalone log_returns helper, which only returns the raw log-differences trimmed of the initial NaN, _log_returns produces ready-to-plot cumulative return curves anchored at zero.

# file path: backtest/algos/BaseStrategy.py

import backtrader as btThis file pulls in the external backtrader library so the BaseStrategy implementation can rely on backtrader’s primitives — lifecycle hooks, data feed and broker interfaces, order and notification objects, and the event-driven run loop — rather than reimplementing those concerns. In the context of the algo-trader-master_cleaned architecture, that single external import ties the project’s strategy layer into the backtesting engine: BaseStrategy will extend or call into backtrader types to receive ticks/candles, submit orders, and handle order callbacks. Other modules in the codebase follow the opposite direction: they import BaseStrategy (sometimes aliased as base) or import BaseStrategy together with concrete algorithms like BuyAndHold and CrossOver to assemble or register strategies for backtests. In short, this import brings the external framework into the strategy base so internal strategy classes can plug into the backtesting lifecycle, while the rest of the project imports the resulting BaseStrategy to build higher-level algorithm compositions.

# file path: backtest/algos/BaseStrategy.py

def log(self, txt, date=None):

if self.verbose:

date = date or self.data.datetime.date(0)

print(’{}, {}’.format(date.isoformat(), txt))Strategy.log is the small, centralized diagnostic helper the Strategy base class uses to emit human-readable lifecycle messages to the console when a backtest is running. It honors the verbose flag that Strategy.init copies from the strategy parameters, so logging only happens when the strategy was configured to be chatty. When called it either uses the date the caller provided or, if none was supplied, pulls the current bar date from the active data feed via the strategy’s primary data datetime accessor; it then prints a single line combining the ISO-formatted date and the textual message. Most concrete strategies and the notify_order handler call Strategy.log to report events like order creation, fills, costs and rejections, so this function centralizes that output behavior and keeps the rest of the lifecycle code free of repetitive console-formatting logic.

# file path: tools/vix_term.py

import pandas as pdImporting pandas as pd brings the project’s primary tabular and time‑series toolkit into VixTermStructure so the class can ingest, normalize and hold the VIX term‑structure as a DataFrame for downstream use. You already know pandas is used elsewhere to manipulate time series for plotting; here VixTermStructure relies on pandas to read the remote HTML table, to set a meaningful index, to slice out the front‑month columns and contango columns, and to convert the scraped string cells into numeric types for the get and contango methods to compute metrics. In short, pandas provides the read, transformation and storage primitives that turn the raw VIXCentral table into the normalized, time‑indexed DataFrame the rest of the auxiliary dataset and analysis tools expect.

# file path: tools/vix_term.py

def get(self, month, month2=None):

if month2 is None:

return float(self._term_structure.iloc[0, month-1])

else:

terms = self._term_structure.iloc[0, month-1: month2-1]

terms = terms.astype(float)

return termsVixTermStructure.get is the small accessor that pulls numeric forward-VIX values out of the term-structure DataFrame that VixTermStructure.init built from the download results. It treats the term columns F1..F12 as 1-based month indexes and always reads from the first (most recent) row of self._term_structure produced by download; when only month is supplied it converts the single cell into a Python float and returns that scalar, and when month2 is provided it slices the row across the requested forward-month range, coerces the slice to floats, and returns the resulting pandas Series. The simple guard on month2 lets callers such as VixTermStructure.contango receive either a single front/back value or a vector of term values for downstream calculations.

# file path: backtest/util/analyzers.py

import math

import numpy as np

from backtrader import Analyzer, TimeFrame

from backtrader.analyzers import TimeReturnThese imports bring in the numerical helpers and the backtrader analyzer primitives that the Sortino analyzer needs to produce its risk-adjusted metric when a backtest finishes. math supplies small, reliable scalar routines the analyzer will use for things like square roots and isnan checks that are convenient at single-value decision points; numpy provides the vectorized array operations and reductions used to turn a time series of period returns into downside deviations and aggregated statistics (you’ve already seen numpy used elsewhere for similar vector math). Analyzer is the backtrader base class that Sortino subclasses so it can hook into backtrader’s lifecycle, and TimeFrame supplies the timeframe constants used to configure how returns are bucketed. TimeReturn is pulled from backtrader.analyzers to produce the per-period return series that Sortino reads during stop/get_analysis; the init you saw earlier wires up a TimeReturn instance with the analyzer’s timeframe/compression so that the analyzer receives the correct time-indexed return stream. Compared with other modules that import a broader set of utilities and the full backtrader module, these imports are intentionally minimal and focused on the numerical work and analyzer plumbing required to compute the Sortino ratio from the strategy’s returns.

# file path: backtest/util/analyzers.py

def get_analysis(self):

return dict(sortino=self.ratio)Sortino.get_analysis is the analyzer’s export point that hands the computed Sortino metric back to the rest of the backtester; it returns the stored Sortino ratio under the sortino key so run_strategy and other orchestrating code can collect analyzer outputs uniformly. Remember that Sortino.init wires up a TimeReturn helper and initializes self.ratio, and that Sortino.stop performs the actual computation over the collected returns and assigns the result to self.ratio. get_analysis performs no computation or branching itself — it simply packages whatever value is currently on self.ratio (which may be a numeric ratio or None if stop could not produce a valid value) into a serializable dictionary for upstream aggregation and reporting.

# file path: backtest/run.py

if __name__ == ‘__main__’:

PARSER = argparse.ArgumentParser()

PARSER.add_argument(’strategy’, nargs=1)

PARSER.add_argument(’-t’, ‘--tickers’, nargs=’+’)

PARSER.add_argument(’-u’, ‘--universe’, nargs=1)

PARSER.add_argument(’-x’, ‘--exclude’, nargs=’+’)

PARSER.add_argument(’-s’, ‘--start’, nargs=1)

PARSER.add_argument(’-e’, ‘--end’, nargs=1)

PARSER.add_argument(’--cash’, nargs=1, type=int)

PARSER.add_argument(’-v’, ‘--verbose’, action=’store_true’)

PARSER.add_argument(’-p’, ‘--plot’, action=’store_true’)

PARSER.add_argument(’--plotreturns’, action=’store_true’)

PARSER.add_argument(’-k’, ‘--kwargs’, nargs=’+’)

ARGS = PARSER.parse_args()

ARG_ITEMS = vars(ARGS)

TICKERS = ARG_ITEMS[’tickers’]

KWARGS = ARG_ITEMS[’kwargs’]

EXCLUDE = ARG_ITEMS[’exclude’]

del ARG_ITEMS[’tickers’]

del ARG_ITEMS[’kwargs’]

del ARG_ITEMS[’exclude’]

STRATEGY_ARGS = {k: (v[0] if isinstance(v, list) else v) for k, v in ARG_ITEMS.items() if v}

STRATEGY_ARGS[’tickers’] = TICKERS

STRATEGY_ARGS[’kwargs’] = KWARGS

if EXCLUDE:

STRATEGY_ARGS[’exclude’] = [EXCLUDE] if len(EXCLUDE) == 1 else EXCLUDE

run_strategy(**STRATEGY_ARGS)When the module is invoked as a script it builds an argparse parser to accept the same set of backtest parameters that run_strategy expects from other callers: a positional strategy name plus optional flags for tickers, universe, exclude, start, end, cash, verbose, plot, plotreturns and a free-form kwargs list for strategy-specific parameters. The parsed Namespace is converted to a plain dict and the code pulls out the tickers, kwargs and exclude entries early because nargs settings produce list values for many options; the subsequent normalization step flattens single-element lists into scalars for the remaining parameters so that start, end, cash and similar fields become the simple values that run_strategy prefers. After normalization it reattaches the original tickers and kwargs to the arguments map and ensures exclude is represented as a list of strings (treating a single exclude token as a one-element list). Finally the prepared STRATEGY_ARGS mapping, which now contains cleaned and correctly-typed inputs coming from the CLI, is handed to run_strategy so the runner can perform ticker cleaning, VIX term-structure fetching and the Sortino analysis pipeline.

# file path: tools/log/log.py

LOG_FILE_PATH = os.path.join(os.path.dirname(__file__), ‘./log’)LOG_FILE_PATH is a module-level constant that establishes where the tools package writes and reads its persistent log; it builds a filesystem path by taking the directory that contains this module and joining it with a local filename called log, so the log file lives next to the module rather than depending on the process working directory. The logging helpers—log, tail and last—all reference LOG_FILE_PATH so that log appends timestamped lines to that file and tail/last read recent entries from the same location; clean_tickers in the tools workflow uses tail to inspect those recent lines during cleanup. This follows the same use of the os utilities imported earlier for filesystem work, but goes a step further by resolving an on-disk, module-anchored path rather than relying on relative paths at call time, making the log location a simple centralized configuration for the package.

# file path: tools/log/log.py

def log(log_type, message):

time = datetime.now()

out = ‘{} -- {}: {}\n’.format(time, log_type, message)

with open(LOG_FILE_PATH, ‘a’) as f:

f.write(out)log is the simple append-only file logger the tools package uses to persist runtime messages for later inspection. It takes a log_type and a message, captures the current timestamp with datetime.now (the datetime import we already covered), formats a single human-readable line that combines the timestamp, the log_type and the message, and then opens the file at LOG_FILE_PATH (the path constructed earlier with os.path.join) in append mode and writes that line with a trailing newline. Because it opens the file for appending, the file will be created if it doesn’t exist and each call adds one more chronologically-ordered entry; last and tail then read those entries back (last seeks from the file end to return the most recent line), so log and those readers form a minimal write/read logging pair used by tools such as clean_tickers to inspect recent activity.

# file path: tools/log/log.py

def last():

with open(LOG_FILE_PATH, ‘rb’) as f:

f.seek(-2, os.SEEK_END)

while f.read(1) != b’\n’:

f.seek(-2, os.SEEK_CUR)

last_line = f.readline().decode()

print(last_line)As part of the tools logging utilities, last is the simple helper that fetches and prints the most recent log entry so other maintenance scripts (for example clean_tickers which inspects recent log activity) can quickly observe the latest line without loading the full file. last opens the log file in binary mode so it can move the file cursor and compare raw bytes, positions the cursor very near the end of the file, then walks backwards one byte at a time until it encounters a newline byte; once that newline boundary is found it reads forward to capture the final line, decodes the bytes into a text string, and prints that string to the console. Conceptually it implements a backward-seek-and-read strategy focused on a single terminal line; that mirrors the bytewise backseek used by get_last_date (which parses a date from the same terminal line) while differing from tail, which reads the file in fixed-size blocks and assembles multiple trailing lines when more than one recent entry is requested. The function has the usual side effects for this utilities module: it performs file I/O against LOG_FILE_PATH and writes output to stdout.

# file path: tools/log/log.py

if __name__ == ‘__main__’:

print(”log imported”)The module contains a simple Python entry-point guard so that if you run the log module as a standalone script it prints a brief confirmation that the log module loaded. That behavior is only for ad-hoc, manual checks of the tools logging utilities and does not execute when other parts of the system import the log module (so callers like the cleanup helper that use the tail helper or other utilities will not trigger the print). This mirrors the lightweight pattern used elsewhere in the project to allow a module to be exercised directly for a quick sanity check without affecting normal import-driven operation.

# file path: tools/vix_term.py

class VixTermStructure:VixTermStructure encapsulates fetching and holding a snapshot of VIX futures term-structure so the rest of the platform can ask for individual contract levels or simple metrics like contango without re-parsing the source each time. When an instance is created it asserts a positive days value and immediately calls download to populate an in-memory DataFrame stored on the instance; the constructor then extracts the month-by-month term columns labeled F1 through F12 into a dedicated term-structure attribute and keeps the last three columns in a separate contango-related attribute for quick access. The download method performs a network fetch by asking pandas to read the HTML table from the VIXCentral historical page for the requested lookback, prints start/finish messages to the console, and normalizes the raw table into a tidy DataFrame by taking the first row as the header, trimming the first and last rows, setting the leftmost column as the index, and applying the header labels to the remaining columns. The get method exposes two access patterns: asking for a single month returns a numeric scalar taken from the front row of the stored term-structure (months are mapped from 1-based input to the internal zero-based positions), while asking for a month range returns a small series of floating values for the requested span (the method ensures numeric conversion for multi-value results). The contango method composes on get by retrieving the front and back contract levels for the two specified months and returning their relative spread computed as back divided by front minus one, so callers get a simple percent-style contango figure. Overall, VixTermStructure centralizes network retrieval, basic normalization and short-term in-memory caching of VIX term data so analysis and strategy code can request contract levels or the contango metric without repeating the HTML parsing logic; it mirrors other network-fetch helpers in the codebase by performing an external request and returning a structured pandas object, but it persists the downloaded table in instance attributes for reuse.

# file path: tools/vix_term.py

def download(self, days=1):

print(’Downloading VIX Term-Structure...’)

url = f’http://vixcentral.com/historical/?days={days}’

data = pd.read_html(url)[0]

header = data.iloc[0]

data = data[1:-1]

data = data.set_index(0)

del data.index.name

data.columns = header[1:]

print(”Term-Structure downloaded.”)

return dataThe download method fetches a snapshot of historical VIX futures term-structure from the external vixcentral service for a requested number of days, normalizes the HTML table into a predictable pandas DataFrame, and returns it for VixTermStructure.init to split into the F1–F12 front-month grid and the contango-related columns. It begins by printing a short status line and building the vixcentral URL with the days parameter, then performs a synchronous HTML table read using pandas so the first table on the page becomes the working DataFrame. Because the source embeds its column labels as the first data row and includes a trailing summary row, the method pulls that first row out as the column header, drops the top and bottom rows to remove the embedded header and summary, and then sets the table’s row index from the first column values while clearing the index name. Finally it assigns the extracted header entries (excluding the original index label) as the DataFrame’s column names, prints a completion message, and returns the cleaned DataFrame so the rest of VixTermStructure can rely on a consistent layout for month lookup and contango calculation.

# file path: tools/vix_term.py

def __init__(self, days=1):

assert days > 0

self._data = self.download(days)

self._term_structure = self._data.loc[:, ‘F1’:’F12’]

self._contango_data = self._data.iloc[:, -3:]VixTermStructure.init is the lightweight constructor that takes a positive days parameter and immediately fetches and caches a snapshot of the VIX futures table for later queries. It first guards against invalid input by asserting days is greater than zero, then calls VixTermStructure.download to retrieve and normalize the historical term-structure table from the remote source; the returned DataFrame is stored on the instance as _data so subsequent calls reuse the same snapshot instead of re-downloading. After caching the raw table, the constructor slices that DataFrame to produce two purpose-built views: _term_structure, which isolates the twelve front-month futures columns labeled F1 through F12 so callers can request individual or ranges of term points via VixTermStructure.get, and _contango_data, which takes the last three columns of the table and holds the subset used by contango calculations and related analysis. By centralizing download and these two derived attributes at construction, the rest of the pipeline—where strategies or analysis routines call get or contango—operates against a stable, pre-normalized in-memory snapshot rather than repeatedly parsing the source. This follows the project’s pattern of thin adapters that normalize external data into simple in-memory structures for downstream strategy execution and analysis; it also produces the side effects documented previously by writing the instance attributes _data, _term_structure, and _contango_data.

# file path: tools/vix_term.py

def contango(self, months=(1, 2)):

front = self.get(months[0])

back = self.get(months[1])

return (back / front - 1.0)VixTermStructure.contango is the tiny public accessor that turns the cached term-structure snapshot into the single metric other parts of the platform expect: the relative premium of a longer-dated VIX future versus a nearer-dated one. It takes a months pair (defaults to the first and second futures), asks VixTermStructure.get for the front-month level and then for the back-month level, and returns the fractional difference computed as the back level divided by the front level minus one. Because it delegates the retrieval to get, it operates against the in-memory term-

# file path: tools/vix_term.py

if __name__ == ‘__main__’:

vts = VixTermStructure()

print(vts._term_structure)The entry-point guard at the bottom acts as a lightweight self-test and developer convenience: when the module is executed as a script it constructs a VixTermStructure using the default parameters, which runs the class initialization path to download and normalize recent VIX futures into the internal _data, _term_structure and _contango_data attributes, and then prints the current _term_structure snapshot to standard output so a developer can inspect the F1–F12 matrix. Because the guard only executes on direct invocation, importing the module elsewhere in the backtester leaves behavior unchanged; compared with the log module’s simple confirmation guard, this guard actually triggers a data fetch and outputs the normalized term-structure rather than a status line. The printed table is the same term-structure that VixTermStructure.init prepares and that VixTermStructure.get and other consumers will subsequently read for single-month values, ranges, or contango calculations.

# file path: tools/vix_term.py

print(’F1:’, vts.get(1))

print(’F2:’, vts.get(2))

print()When run, these lines print a short, human-readable sanity check of the VIX futures snapshot by asking the VixTermStructure instance for its front-month and second-month levels and writing them to stdout with simple labels, then emit an empty line for visual separation. The calls rely on VixTermStructure.get to return numeric contract levels (recall get pulls a float from the term-structure columns assembled in VixTermStructure.init), so the printed values are the immediate front and next contract quotes that the rest of the platform can use for metrics like contango. Execution follows a straight sequential path: construct or reuse vts, call get for month 1 and month 2, and print the results; on the happy path an operator or maintenance script sees two labeled numbers, while if the underlying term-structure is missing or malformed the get method will raise and that exception will propagate, causing the script to fail rather than silently continue.

# file path: tools/vix_term.py

print(vts._contango_data)

print()Those two lines simply dump the VixTermStructure instance’s internal contango slice to the console and then emit a blank line to separate output visually. Because VixTermStructure builds its state by downloading raw VIX futures into _data and then deriving _term_structure and _contango_data in init, printing _contango_data gives a human-readable snapshot of the specific columns the class reserves for contango-related analysis (the three-column tail extracted during initialization). This is a runtime inspection step meant to let a developer or maintenance script confirm that the download and normalization produced the expected contango-related fields before any call to the contango method or get method uses them; contango computes a simple front/back ratio via get, while get returns specific month values from _term_structure, so the printed _contango_data shows the different, pre-sliced view that those computations rely on. The empty print that follows is just for readability when scanning console output.

# file path: tools/vix_term.py

print(’Contango (1/2):’, vts.contango((1, 2)))

print(’Contango (3/5):’, vts.contango((3, 5)))

print(’Contango (4/7):’, vts.contango((4, 7)))These three statements call VixTermStructure.contango on the live VIX term snapshot and print a short, human‑readable label together with the numeric contango value for three different maturity pairs. Each call invokes VixTermStructure.contango, which in turn asks VixTermStructure.get for the front and back contract levels from the preloaded term-structure snapshot and returns the proportional difference (back divided by front minus one). The three pairs exercise a very short spread (first vs second month), a mid-curve spread (third vs fifth month), and a longer spread (fourth vs seventh month), so the printed lines provide a quick demonstration of how contango varies across the curve without re-downloading or re-parsing the source data.

# file path: backtest/util/analyzers.py

class Sortino(Analyzer):

params = (

(’timeframe’, TimeFrame.Years),

(’compression’, 1),

(’riskfreerate’, 0.01),

(’factor’, None),

(’convertrate’, True),

(’annualize’, False),

)

RATEFACTORS = {

TimeFrame.Days: 252,

TimeFrame.Weeks: 52,

TimeFrame.Months: 12,

TimeFrame.Years: 1,

}Sortino is an Analyzer subclass that plugs into the backtest pipeline to produce a Sortino ratio at strategy teardown; it is constructed when run_strategy or universe builders like SP500 attach analyzers to a run, and its get_analysis is called by the framework to expose the final metric. The class-level params define how Sortino interprets periodicity and the risk-free baseline (timeframe, compression, riskfreerate, factor, convertrate, annualize), and RATEFACTORS maps TimeFrame symbols to their period counts so conversions between annual and periodic rates can be done. During init, Sortino creates a TimeReturn helper configured with the same timeframe and compression so that TimeReturn accumulates the per-period returns the analyzer needs, and it initializes the ratio state. When stop runs at the end of a backtest, Sortino pulls the collected returns from TimeReturn.get_analysis, looks up the appropriate conversion factor via RATEFACTORS, and either converts the configured risk-free rate to the returns’ periodicity or converts the returns to the chosen annual scale depending on convertrate; this alignment is why the class stores both a riskfreerate and a convertrate flag. It then computes the mean excess return over the risk-free level and the downside deviation by taking the square root of the mean of squared negative deviations below the target rate, and divides the excess mean by that downside deviation to yield the Sortino ratio; if conversion and annualization are requested it scales the ratio by the square root of the period factor. Empty-return inputs and arithmetic problems are guarded so the ratio becomes None on error, and get_analysis finally returns the stored ratio under the key sortino for other modules to consume. Remember VixTermStructure as an example of a reusable snapshot provider in the codebase; Sortino plays a similar role for performance metrics by encapsulating collection, conversion and final computation so callers like run_strategy receive a ready-to-use risk-adjusted number.

# file path: backtest/util/analyzers.py

def __init__(self):

super(Sortino, self).__init__()

self.ret = TimeReturn(

timeframe=self.p.timeframe,

compression=self.p.compression)

self.ratio = 0.0Sortino.init begins by delegating to the Analyzer base class so the analyzer parameter machinery (accessible via self.p) is initialized, then constructs a TimeReturn helper configured with the analyzer’s timeframe and compression so that trade returns are aggregated at the exact periodicity Sortino expects; that TimeReturn instance is stored on self.ret and is the source of the return series that Sortino.stop will later read to compute the metric. Finally, Sortino seeds self.ratio with a numeric placeholder of zero so get_analysis can return a stable value prior to teardown; the actual Sortino ratio is computed and assigned to self.ratio during stop. This setup follows the platform’s analyzer lifecycle used by run_strategy and related universe utilities (for example VixTermStructure): instantiate, accumulate via helpers, then compute and expose results at teardown.

# file path: backtest/util/analyzers.py

params = (

(’timeframe’, TimeFrame.Years),

(’compression’, 1),

(’riskfreerate’, 0.01),

(’factor’, None),

(’convertrate’, True),

(’annualize’, False),

)In the Sortino analyzer, params is the class-level configuration that Backtrader expects to define the analyzer’s default behavior and to expose tweakable options when the analyzer is attached to a strategy; it lists the named settings the rest of the class reads via self.p. The entries set a default sampling granularity (a TimeFrame enum with a default of years) and a compression of one bar per sample so the TimeReturn helper created in init knows how to aggregate returns, a baseline risk-free rate of one percent used to form excess returns, a placeholder for a period-conversion factor that may be filled from the RATEFACTORS mapping, a flag that controls whether the supplied risk-free rate should be converted to the returns’ per-period rate, and a flag that controls whether the computed Sortino ratio should be scaled to an annual basis. Those defaults drive the data flow: init passes the timeframe and compression into TimeReturn; stop pulls the period returns from TimeReturn and then uses the risk-free rate, the conversion flag, and the factor (derived, if available, from RATEFACTORS) to align rates and returns before computing downside deviation and the final ratio; get_analysis then exposes the stored result. This follows the Backtrader Analyzer parameter convention and ties into the broader pipeline by letting run_strategy and other modules configure how aggressively the analyzer converts and annualizes returns when producing Sortino-based risk-adjusted metrics.

# file path: backtest/util/analyzers.py

RATEFACTORS = {

TimeFrame.Days: 252,

TimeFrame.Weeks: 52,

TimeFrame.Months: 12,

TimeFrame.Years: 1,

}RATEFACTORS is a small, class-level lookup that maps the Backtrader TimeFrame enumeration values to the number of those periods that make up a year (trading days -> 252, weeks -> 52, months -> 12, years -> 1). In the context of the Sortino analyzer, this mapping is the bridge between the periodic return series produced by the TimeReturn helper (configured via the analyzer’s timeframe and compression parameters) and the annualized or period-converted rates the stop method needs to compute the Sortino ratio correctly. During teardown, Sortino consults RATEFACTORS to decide whether and how to convert the user-specified risk-free rate or the collected returns between periodic and annual bases (depending on the convertrate and annualize flags); if a factor exists, it will either convert the continuous rate into the corresponding periodic rate or lift periodic returns to an annual scale. Conceptually it behaves like a fixed, non‑tweakable piece of configuration (similar in role to the params tuple but not user-adjustable) and it depends on the TimeFrame enum imported earlier so the analyzer can reason about periods-per-year consistently across timeframes.

# file path: backtest/util/analyzers.py

def stop(self):

returns = list(self.ret.get_analysis().values())

rate = self.p.riskfreerate

factor = None

if self.p.timeframe in self.RATEFACTORS:

factor = self.RATEFACTORS[self.p.timeframe]

if factor is not None:

if self.p.convertrate:

rate = pow(1.0 + rate, 1.0 / factor) - 1.0

else:

returns = [pow(1.0 + x, factor) - 1.0 for x in returns]

if len(returns):

ret_free_avg = np.mean(returns) - rate

tdd = math.sqrt(np.mean([min(0, r - rate)**2 for r in returns]))

try:

ratio = ret_free_avg / tdd

if factor is not None and \

self.p.convertrate and self.p.annualize:

ratio = math.sqrt(factor) * ratio

except (ValueError, TypeError, ZeroDivisionError):

ratio = None

else:

ratio = None

self.ratio = ratioWhen the backtest framework calls Sortino.stop at strategy teardown, the method pulls the periodic return series that Sortino.init prepared on the TimeReturn instance stored at self.ret by calling its get_analysis and flattening the values into a list called returns; that list is the primary input for all subsequent calculations and is what links the TimeReturn aggregation logic to the Sortino metric. It then reads the configured risk free rate and looks up a period conversion factor from RATEFACTORS based on the analyzer’s timeframe; if a factor is present the code follows one of two mutually exclusive paths depending on the convertrate flag: when convertrate is true it converts the configured annual risk free rate into the same periodic rate as the returns, otherwise it converts the periodic returns into the equivalent multi-period return that matches the annual scale. With returns and rate aligned, the method computes the mean excess return by subtracting the periodic risk free rate from the mean of returns and computes the target downside deviation as the square root of the mean squared negative differences between each return and the risk free rate (i.e., the downside volatility only from shortfalls). It then attempts to form the Sortino ratio as the excess mean divided by the downside deviation and, if a factor was used and both convertrate and annualize are true, scales the ratio by the square root of the factor to present an annualized figure. If there are no returns or an arithmetic error occurs (bad types or division by zero), the ratio is set to None. Finally, the computed ratio is stored on self.ratio so that get_analysis can expose it to the rest of the pipeline.

# file path: tools/plot.py

if __name__ == ‘__main__’:

register_matplotlib_converters()

PARSER = argparse.ArgumentParser()

PARSER.add_argument(’tickers’, nargs=’+’)

PARSER.add_argument(’-r’, ‘--returns’, action=”store_true”)

PARSER.add_argument(’-s’, ‘--start’, nargs=1, type=int)

PARSER.add_argument(’-e’, ‘--end’, nargs=1, type=int)

ARGS = PARSER.parse_args()

TICKERS = ARGS.tickers

START = ARGS.start or [1900]

END = ARGS.end or [2100]

START_DATE = datetime(START[0], 1, 1)

END_DATE = datetime(END[0], 1, 1)

DATA = []

for ticker in TICKERS:

datapath = os.path.join(os.path.dirname(__file__), f’../data/price/{ticker}.csv’)

ticker_data = pd.read_csv(datapath, index_col=’Date’, parse_dates=True)[’Adj Close’].rename(ticker)

DATA.append(ticker_data.loc[START_DATE: END_DATE])

plot(DATA, plot_returns=ARGS.returns)When the module is executed as a script the main guard activates a small command‑line entrypoint that wires up matplotlib’s pandas date converters and then parses arguments so a developer can request one or more ticker plots from the local price store. The code registers the pandas/matplotlib converters to ensure date indices plot correctly, builds an ArgumentParser that accepts a positional list of tickers, a boolean flag to request returns plotting, and optional start and end year options that are supplied as single‑element integer lists; the start and end values fall back to year bounds of 1900 and 2100 when not provided and are then converted into datetime objects representing January 1 of the given years. For each ticker the routine constructs a path relative to the script directory pointing at the CSV under the data/price folder, reads the file with pandas.read_csv using the Date index and parsing dates, selects the adjusted close series, renames that Series to the ticker symbol, slices it to the requested date interval, and appends the series to a DATA list. Finally the DATA list is handed to the plot function with the plot_returns flag driven by the parsed --returns option; plot will call _log_returns when returns plotting is requested, otherwise it draws the price series so this entrypoint provides a convenient CLI for producing the same performance or return visualizations the backtester uses at run_strategy time.

# file path: backtest/algos/EqualVolatility.py

import numpy as np

import pandas as pd

from . import BaseStrategy as baseThe file pulls in numpy and pandas because EqualVolatility needs both dense numerical operations and series-based time series conveniences: pandas is used to materialize closing prices into a time-indexed Series and compute log returns and diffs over the lookback window, while numpy supplies vectorized array arithmetic and aggregation (for example, turning a list of per-asset volatilities into an array and calculating inverse-volatility weights). The local import of BaseStrategy as base brings in the project’s common Strategy machinery so EqualVolatility can subclass and reuse the backtester plumbing (parameter handling, order lifecycle, broker value access, etc.); EqualVolatility.init delegates to base.Strategy.init following the same Strategy-subclass pattern used elsewhere in the codebase. Compared with a similar file that only imported numpy and the BaseStrategy alias, the addition of pandas here signals that this strategy constructs and manipulates a pandas Series for lookback return calculation rather than relying solely on raw numeric lists. The aliasing of BaseStrategy to base mirrors the convention used across strategy implementations, keeping references to the shared Strategy API concise when the strategy later reads self.datas, computes vols, and issues target orders.

# file path: backtest/algos/EqualVolatility.py

class EqualVolatility(base.Strategy):

params = {

‘rebalance_days’: 21,

‘target_percent’: 0.95,

‘lookback’: 21

}EqualVolatility is a concrete Strategy subclass that implements an inverse‑volatility allocation rule and plugs into the backtester’s execution pipeline so the engine can run an equal‑volatility portfolio over whatever data feeds were loaded. It declares three tunable params: rebalance_days to control calendar cadence, target_percent to cap how much of portfolio value this strategy should allocate in total, and lookback to set the window used to estimate volatility. Its constructor simply delegates to base.Strategy.init so it inherits the order bookkeeping, verbose logging flag and rejection handling that Strategy.init sets up. The runtime heart is rebalance: it pulls the recent close series for each data feed by calling the data feed’s accessor, converts those prices to log returns over the configured lookback, computes each asset’s sample standard deviation as the volatility estimate, and collects those volatilities into a vector. It converts volatilities into normalized inverse‑volatility weights so lower‑vol assets receive larger weights, scales the total allocation by target_percent times the broker’s current portfolio value, and determines for each asset the difference between that notional target and the asset’s current position value (computed from getposition). It then issues target percent orders for each asset in an order determined by sorting those differences, using order_target_percent so the broker will move positions toward the inverse‑volatility targets. next implements the control flow: it prevents overlapping orders by returning early if there is a pending order, triggers rebalance on the configured periodic cadence (length modulo rebalance_days) or immediately after a rejected order, and clears the rejection flag after handling it. Because it calls Strategy.log and the base order/notify machinery, the usual console messages and order state transitions are emitted as the framework expects; and, as noted earlier, the file contains places where VixTermStructure data is printed (see the previously examined contango prints), so EqualVolatility sits alongside utilities that may query the VIX term structure to annotate behavior or logging even though the core allocation here is driven by per‑asset historical volatilities. In pattern terms, EqualVolatility follows the backtester’s Strategy subclass template used elsewhere (same init delegation and use of order_target_percent seen in other strategies) and implements a deterministic rebalance pipeline: data read → return/vol estimation → weight computation → target order issuance.

# file path: tools/std.py

import os

import argparse

import pandas as pd

import numpy as nptools/std.py brings in a small, focused set of standard libraries and data libraries to support the numerical helpers it provides to hurst_exp and the EqualVolatility strategy and to allow the module to be invoked from the shell. The os import supplies lightweight operating‑system and filesystem utilities that the helpers use when they need to read/write diagnostic files or resolve paths for optional persistence; argparse provides the command‑line parsing layer so the module can expose simple flags and parameters when executed as a script for ad‑hoc diagnostics. pandas is brought in as the primary time‑series container: the std and gap_L13_21 routines expect and return pandas Series/DataFrame semantics (resampling, indexing, NaN handling), so pandas is used for alignment, windowing and any Series-level operations. numpy is used for the underlying numeric work — vectorized math, efficient array computations, and the basic population/sample formulas that implement the standard deviation and gap calculations. Compared with other modules you’ve seen, tools/std.py intentionally keeps its dependency surface small: unlike files that also import datetime, matplotlib, or scipy for plotting or distribution tests, std.py focuses on pandas and numpy plus minimal OS/CLI support, matching its role as a low-level, fast utility library that records diagnostics via Strategy.log rather than producing plots or heavier statistical tests.

# file path: tools/std.py

def std(ticker, length=250, usereturns=False):

path = os.path.join(os.path.dirname(__file__), f’../data/price/{ticker}.csv’)

price = pd.read_csv(path, parse_dates=True, index_col=’Date’)[’Adj Close’].rename(’Price’)

s = price

if usereturns:

s = np.log(price).diff().iloc[1:]

print(f’{ticker} ${price.iloc[-1]} ({length})’ + (’ [Using Returns]’ if usereturns else ‘’))

print(’std:\t\t’, round(s.iloc[-length:].std(), 5))std is a small diagnostic helper that reads a ticker’s normalized adjusted‑close series from the local price repository, optionally converts that series to log returns, computes a recent-sample standard deviation and emits simple console diagnostics. It builds a filesystem path relative to the module directory to open the ticker CSV, loads the Adj Close column into a pandas Series indexed by Date and named Price, and assigns that series to a working variable s. If the user requests returns, s is replaced with the series of natural‑log differences (dropping the initial NaN), otherwise s remains the raw price series; that is the single control branch in the function. The function prints a one‑line header showing the ticker, the most recent price and the window length (and marks when returns are being used), then computes the standard deviation over the final length observations of s, rounds it to five decimals and prints it. Data flows from disk into pandas, through an optional log‑returns transform (the same transformation used elsewhere via log_returns), into a single numeric summary, and out to the console; the call performs file I/O and produces console output and is used by higher‑level pieces such as hurst_exp and the Equal

# file path: backtest/algos/BaseStrategy.py

def __init__(self, kwargs=None):

bt.Strategy.__init__(self)

self.order = None

self.buyprice = None

self.buycomm = None

self.order_rejected = False

self.verbose = self.params.verboseStrategy.init establishes the shared runtime state every concrete algorithm expects when the backtest starts. It first lets the backtesting framework initialize its internals, then it clears or initializes the core attributes used across the strategy lifecycle: a placeholder for a pending order, placeholders for the last executed buy price and commission, a boolean marker that an order was rejected, and a verbosity flag read from the strategy parameters. These fields are the plumbing that connects strategy decision logic to the diagnostic and ordering machinery: the verbosity flag gates Strategy.log (so printed diagnostics only show when enabled), the pending-order slot is checked by strategy next methods to avoid duplicate submits, and buyprice/buycomm plus the order_rejected flag are written by Strategy.notify_order to record execution outcomes that downstream next/rebalance logic consults. Concrete strategies call base.Strategy.init in their own initializers to inherit this common lifecycle and diagnostic behavior so order submission, rejection handling, and simple logging work uniformly across BuyAndHold, CrossOver, MeanReversion, and the others.

# file path: backtest/algos/EqualVolatility.py

def rebalance(self):

vols = []

for d in self.datas:

returns = pd.Series(d.close.get(size=self.params.lookback))

returns = np.log(returns).diff().iloc[1:]

vol = returns.std()

vols.append(vol)

vols = np.array(vols)

order_sort = []

weights = []

for v, d in zip(vols, self.datas):

weight = (1.0 / v) / sum(1.0 / vols)

weights.append(weight)

position = self.getposition(d)

position_value = position.size * position.price

order_target = self.params.target_percent * weight * self.broker.get_value()

order_sort.append(order_target - position_value)

for s, d, w in sorted(zip(order_sort, self.datas, weights), key=lambda pair: pair[0]):

self.order_target_percent(d, self.params.target_percent * w)EqualVolatility.rebalance is the routine that turns the live price feeds into equal‑volatility target weights and then issues the orders to move the portfolio toward those weights. When next calls rebalance on its scheduled cadence, rebalance iterates over each feed in self.datas and pulls the recent close series using the strategy lookback; it converts those prices into log returns, drops the initial NaN, and computes the sample standard deviation as each asset’s volatility. Those volatilities are grouped into an array and converted into inverse‑volatility weights by taking each asset’s reciprocal volatility and normalizing by the sum of reciprocals so the weights sum to one. For each asset it then reads the current position via getposition, computes the present position value from size and price, and builds a desired dollar target equal to params.target_percent of total portfolio value multiplied by the asset’s inverse‑vol weight. The difference between that dollar target and the current position value is recorded for each asset into order_sort; finally the assets are processed in order of that difference (smallest adjustment first) and the strategy issues order_target_percent calls to set each asset to params.target_percent times its computed weight. Conceptually, the data flows from the ingestion layer (data.close), through a volatility estimator (log returns → std), into a weight calculator (inverse‑vol normalization), and finally into the execution layer (order_target_percent using broker.get_value); sorting by required adjustment schedules sells before buys so the execution sequence aligns cash needs with the intended rebalancing.

# file path: backtest/algos/EqualVolatility.py

params = {

‘rebalance_days’: 21,

‘target_percent’: 0.95,

‘lookback’: 21

}The params class attribute on EqualVolatility declares the strategy’s default, tweakable runtime settings that the backtester exposes through the usual self.p machinery (the same pattern Sortino uses for its configuration). rebalance_days controls the cadence used by next to decide when to invoke rebalance; target_percent is the portfolio exposure the strategy aims to allocate across the instruments when it computes equal‑volatility weights; lookback sets how many recent bars are read inside rebalance to form the log‑return series used to estimate each instrument’s volatility. Compared with the nearby variants, the only difference in one is a longer lookback horizon (sixty bars instead of twenty‑one) which smooths volatility estimates, while the other variant replaces the single exposure parameter with an explicit leverage vector and flips the sign of the target exposure to produce inverse positioning; otherwise they follow the same class‑level param pattern. Together these defaults implement a roughly monthly rebalance using a three‑week volatility estimate and targeting about ninety‑five percent invested, feeding directly into rebalance’s weight calculation and the subsequent order_target_percent calls.

# file path: backtest/algos/EqualVolatility.py

def __init__(self):

base.Strategy.__init__(self)EqualVolatility.init simply hands control to base.Strategy.init, which establishes the shared runtime state the backtester and all concrete strategies expect (the order-tracking fields, buy price/commission holders, the order_rejected flag and the verbosity wiring from the L5 params). By delegating initialization this way, EqualVolatility is fully plugged into the framework’s logging and notify_order machinery so its rebalance and next methods can rely on the common state and console diagnostics without adding any extra per-instance fields at construction time; this mirrors the lightweight init pattern used by BuyAndHold and other strategies that only need the framework defaults, whereas strategies like CrossOver or MeanReversion augment initialization when they require indicators or extra counters.

# file path: backtest/algos/EqualVolatility.py

def next(self):

if self.order:

return

if len(self) % self.params.rebalance_days == 0:

self.rebalance()

elif self.order_rejected:

self.rebalance()

self.order_rejected = FalseEqualVolatility.next is the per-bar driver the backtester invokes to decide whether the strategy should act on the current tick; it controls the timing and simple retry semantics for rebalancing while preventing concurrent order activity. First it checks the self.order flag and returns immediately if an order is already outstanding so the strategy never issues overlapping orders. If no order is pending, it checks the elapsed bar count via len(self) against the cadence declared in self.params.rebalance_days and, when that modulo test hits, it calls EqualVolatility.rebalance to compute equal‑volatility weights and submit the required orders (rebalance performs the volatility calculations, consults VixTermStructure.get and emits orders and logs). If the scheduled trigger did not fire but an earlier order was rejected, next calls rebalance again to retry the allocation and then clears the retry marker by setting self.order_rejected to False. This flow is the same periodic-plus-retry pattern used by LeveragedEtfPair.next and the other strategy next methods (NCAV adds a filter step before rebalance, PairSwitching uses a one‑bar offset), and its side effects are limited to invoking rebalance (which can perform network/IO and portfolio mutations) and updating instance state such as order_rejected.

# file path: backtest/algos/MeanReversion.py

import pandas as pd

import numpy as np

from . import BaseStrategy as baseMeanReversion pulls in pandas and numpy to do the core data manipulation and numeric work that a mean‑reversion engine needs: pandas provides the Series/DataFrame primitives for aligning and windowing the normalized adjusted‑close time series that std reads and for the table‑level ranking and rolling statistics used by the ranking and filtering stages, while numpy supplies the fast array math for z‑score calculations, percentiles, mask logic and the numerical kernels behind the Kelly position‑sizing and order sizing. It also imports BaseStrategy from the local package under the name base so the mean‑reversion implementation can subclass and use the shared lifecycle, state and utility methods established by Strategy.init (the same runtime plumbing that EqualVolatility.rebalance and other strategies rely on). This import pattern matches other strategy modules that bring in numpy and the local BaseStrategy; the only notable difference is that MeanReversion explicitly brings pandas because it performs heavier DataFrame‑style ranking and filtering that the lighter strategies sometimes avoid.

# file path: backtest/algos/MeanReversion.py

class MeanReversion(base.Strategy):

params = {

‘target_percent’: 0.95,

‘riskfreerate’: 0,

‘quantile’: 0.10,

‘npositions’: 25,

‘quantile_std’: 0.10,

‘quantile_vol’: 1.0,

‘lookback’: 6,

‘offset’: 1,

‘order_frequency’: 5,

‘cheat_on_open’: False

}MeanReversion implements the mean‑reversion strategy that the backtester uses to decide which assets to long, short, or close at periodic rebalancing points, and it wires into the Strategy lifecycle so ranking, filtering, sizing and order issuance happen inside the usual prenext/next cadence. On construction MeanReversion invokes Strategy.init (so it inherits the shared runtime state like the order and order_rejected flags) and initializes its own counters and containers: a bar counter, an empty Pandas Series for ranking, a filter list, placeholders for the top/bottom ranked groups and the lists of longs/shorts/closes, an order_valid boolean and a rolling list of portfolio values used for adaptive sizing. At each bar prenext and next compute the same order_valid predicate from the bar count, the configured lookback and offset and the order_frequency; when order_valid is true the routine calls process and then closes and reissues orders, and when an order has been rejected it retries order submission and clears the rejection flag. process orchestrates filtering and ranking: add_filter scans every datafeed in self.datas and, after guarding on having enough history, computes a short lookback standard deviation

# file path: backtest/algos/MeanReversion.py

def add_rank(self):

for i, d in enumerate(self.datas):

if len(d) < self.params.lookback + self.params.offset:

continue

if i not in self.filter:

continue

prev = d.close.get(size=self.params.lookback, ago=self.params.offset)[0]

pct_ret = (d.close[0] / prev) - 1

self.rank.loc[i] = pct_ret

if self.params.npositions > 0:

self.top = list(self.rank.nlargest(self.params.npositions).index)

self.bottom = list(self.rank.nsmallest(self.params.npositions).index)

else:

quantile_top = self.rank.quantile(1 - self.params.quantile)

self.top = list(self.rank[self.rank >= quantile_top].index)

quantile_bottom = self.rank.quantile(self.params.quantile)

self.bottom = list(self.rank[self.rank <= quantile_bottom].index)MeanReversion.add_rank is the step that turns the raw per-symbol price feeds into a ranked signal the strategy can act on: it walks the universe held in self.datas, skips any series that lacks enough history for the configured lookback plus offset (protecting against indexing into incomplete windows), and also skips any asset index that the precomputed filter did not include (remember add_filter populates self.filter and performs the VIX/volatility screening). For each eligible symbol it pulls the close price from lookback periods ago (honoring the offset to avoid look‑ahead), computes the simple percentage return from that past close to the current close, and stores that return into the persistent pd.Series self.rank keyed by the asset’s integer index — this creates a stable mapping between rank entries and items in self.datas. After populating self.rank the routine chooses the candidates to trade: if a fixed number of positions is requested via params.npositions it selects the n largest and n smallest ranked indices as the top and bottom lists; otherwise it computes quantile thresholds from the rank distribution and assigns all assets above the top quantile to the top list and all assets below the bottom quantile to the bottom list. Those resulting top and bottom index lists are the inputs that process converts into longs, shorts, and closes, and that send_orders, set_kelly_weights, and close_positions use to size and execute trades in the mean‑reversion lifecycle.

# file path: backtest/algos/MeanReversion.py

def add_filter(self):

sd = pd.Series()

vol = pd.Series()

for i, d in enumerate(self.datas):

if len(d) < self.params.lookback + self.params.offset:

continue

lookback = d.close.get(size=self.params.lookback, ago=self.params.offset)

returns = np.diff(np.log(lookback))[1:]

sd.loc[i] = np.std(returns)

lookback = d.close.get(size=min(126, len(d)), ago=self.params.offset)

returns = np.diff(np.log(lookback))[1:]

vol.loc[i] = np.std(lookback)

quantile_std = sd.quantile(1 - self.params.quantile_std)

quantile_vol = vol.quantile(1 - self.params.quantile_vol)

sd = list(sd[sd <= quantile_std].index)

vol = list(vol[vol <= quantile_vol].index)

self.filter = list(set(sd) | set(vol))MeanReversion.add_filter builds the shortlist of asset indices the strategy will consider by measuring recent variability across the universe: it iterates over each data feed in self.datas, skipping any feed that does not yet have enough history to satisfy the configured lookback plus offset, and for each eligible feed it pulls a close-price window via the feed’s close.get accessor. It converts that short lookback window into log returns (using a differencing of the logged prices) and records the standard deviation of those returns into a pandas Series named sd keyed by the asset index; it then pulls a longer window (up to 126 bars or the available length), again turns it into returns, and records a second volatility measure into a pandas Series named vol keyed by the index. After collecting per-asset sd and vol numbers it computes cutoff thresholds by taking the (1 - quantile) tails defined by the parameters quantile_std and quantile_vol, selects assets whose recent-return sd is less than or equal to the sd cutoff and whose vol measure is less than or equal to the vol cutoff, and assigns the union of those index sets to self.filter. The guard that skips short histories prevents mis-sized windows and ensures only assets with sufficient data are measured; the resulting self.filter is then used by add_rank and the rest of process

# file path: backtest/algos/MeanReversion.py

def process(self):

self.add_filter()

self.add_rank()

self.longs = [d for (i, d) in enumerate(self.datas) if i in self.bottom]

self.shorts = [d for (i, d) in enumerate(self.datas) if i in self.top]

self.closes = [d for d in self.datas if (

(d not in self.longs) and

(d not in self.shorts)

)]MeanReversion.process is the orchestration step that turns raw instrument feeds into three actionable sets the rest of the strategy uses: longs, shorts and closes. It first invokes add_filter to prune the universe based on recent volatility and liquidity heuristics (you already saw that add_filter builds self.filter by scanning self.datas and computing short- and medium‑term standard deviations), then invokes add_rank to compute short‑term performance ranks for the filtered instruments and populate self.top and self.bottom (you already saw that add_rank writes into self.rank and chooses top/bottom either by fixed count or by quantile). After those two preparatory passes, process walks the strategy’s feed collection, mapping feed indices into concrete Backtrader data objects: any feed whose index appears in self.bottom becomes part of the longs list (mean‑reversion logic buys recent laggards), any feed whose index appears in self.top becomes part of the shorts list (it sells recent leaders), and any feed that is in neither list is collected into closes so existing positions in those instruments will be closed. The method therefore does not itself place orders or compute position sizes; it only establishes the candidate groups that next lifecycle steps use — send_orders will read longs and shorts to issue target percent orders and close_positions will iterate over closes to liquidate. Because next and prenext gate process with the order_valid condition you examined earlier, process runs only at rebalance times and relies on add_filter and add_rank to skip feeds lacking sufficient history, keeping those guard clauses centralized in the ranking/filtering stage.

# file path: backtest/algos/MeanReversion.py

def send_orders(self):

for d in self.longs:

if len(d) < self.params.lookback + self.params.offset:

continue

split_target = 1 * self.params.target_percent / len(self.longs)

self.order_target_percent(d, target=split_target)

for d in self.shorts:

if len(d) < self.params.lookback + self.params.offset:

continue

split_target = -1 * self.params.target_percent / len(self.shorts)

self.order_target_percent(d, target=split_target)send_orders is the routine that actually converts the candidate lists produced by process into live position targets for the backtester: when next or prenext sets order_valid and they have already run process and close_positions, send_orders iterates the long and short candidate lists that process populated from add_rank and add_filter and issues percent‑target orders through the engine. For each data feed in self.longs it first skips any feed that does not yet have at least lookback plus offset bars (the same guard used elsewhere to avoid acting on incomplete histories), then computes an equal split of the overall params.target_percent across the number of long candidates and calls the backtest API to move that instrument to the computed positive target weight. It repeats the same pattern for self.shorts but negates the split so the orders become short targets. Conceptually this implements a simple equal‑weight allocation across the chosen mean‑reversion longs and shorts (using the strategy’s configured target_percent), and relies on order_target_percent to translate those weight targets into the broker orders/position adjustments the engine executes. This behavior mirrors the rebalance pattern used elsewhere (NCAV.rebalance) but differs by operating separately on the long and short sets and applying a negative sign for short targets; the inputs to send_orders come from the ranking/filtering pipeline that add_rank and add_filter establish and from the timing control in next/prenext.

# file path: backtest/algos/MeanReversion.py

def set_kelly_weights(self):

value = self.broker.get_value()

self.values.append(value)

kelly_lookback = 20

if self.count > kelly_lookback:

d = pd.Series(self.values[-kelly_lookback:])

r = d.pct_change()

mu = np.mean(r)

std = np.std(r)

if std == 0.0:

return

f = (mu)/(std**2)

if f == np.nan:

return

self.params.target_percent = max(0.2, min(2.0, f / 2.0))