An introduction to neural networks using PyTorch

Artificial intelligence and machine learning implementations are increasingly based on deep neural networks.

Advanced modeling techniques based on neural networks will dominate the future of data mining. Neural networks have existed since the 1950s, so it is natural to wonder why they are only now becoming so popular.

Neural networks are parallel information-processing systems inspired by the human brain. Each unit is connected to others, much like neurons, and passes information along those connections. This structure makes it possible to perform tasks such as face recognition and image recognition. This article discusses neural network-based methods for various data mining tasks, including classification, regression, forecasting, and feature reduction. Artificial neural networks (ANNs) work in a way similar to the human brain, which processes information and generates insights through billions of connected neurons.

Activation functions

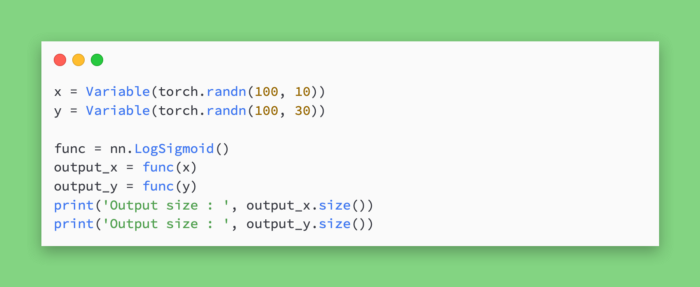

How are activation functions used in real projects, and how do they work? What are the steps for implementing an activation function in PyTorch?

An activation function transforms an input vector (binary, floating-point, or integer) into another form, depending on the mathematical transformation it applies. Activation functions link the neurons of the different layers (input, hidden, and output) by determining what each neuron passes on to the next layer. The following sections explain several activation functions. A clear understanding of them is crucial for implementing neural network models accurately.
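The following is a minimal sketch of where an activation function sits. A layer first computes a weighted sum of its inputs plus a bias. It then passes that sum through the activation, and the result becomes the next layer's input. The weights, bias, and input values are made up purely for illustration.

```python
import torch

# Hypothetical input vector with three features (made-up values)
x = torch.tensor([1.0, -2.0, 0.5])

# Hypothetical weights and bias for a layer with two neurons
W = torch.tensor([[0.2, -0.1, 0.4],
                  [0.5, 0.3, -0.2]])
b = torch.tensor([0.1, -0.1])

# Pre-activation: weighted sum of the inputs plus bias
z = W @ x + b

# Activation: a nonlinear function applied element-wise
a = torch.sigmoid(z)

print(z)  # values feeding the activation
print(a)  # values passed on to the next layer
```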

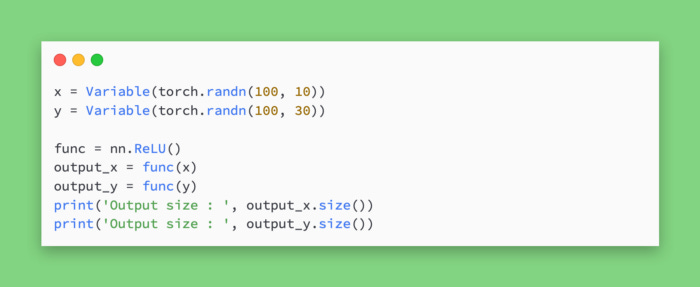

Neural network activation functions can be broadly divided into linear and nonlinear functions. PyTorch's torch.nn module lets you build any type of neural network model. It also provides ready-made activation functions such as nn.Sigmoid, nn.Tanh, and nn.ReLU.
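As a short sketch, PyTorch exposes each common activation in two forms. One is a module in torch.nn, which can be placed inside a model. The other is a plain function, such as torch.sigmoid or torch.nn.functional.relu. The layer sizes in the small model below are arbitrary.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

x = torch.linspace(-3.0, 3.0, steps=7)  # sample inputs: -3, -2, ..., 3

# Module form: activation objects that can be placed inside nn.Sequential
relu = nn.ReLU()
sigmoid = nn.Sigmoid()
tanh = nn.Tanh()

# Functional form: the same operations as plain function calls
relu_out = F.relu(x)
sigmoid_out = torch.sigmoid(x)
tanh_out = torch.tanh(x)

# Both forms compute identical values
assert torch.equal(relu(x), relu_out)
assert torch.allclose(sigmoid(x), sigmoid_out)
assert torch.allclose(tanh(x), tanh_out)

# Module form used inside a small model (arbitrary layer sizes)
model = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 1), nn.Sigmoid())
out = model(torch.randn(2, 4))
print(out.shape)  # torch.Size([2, 1])
```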

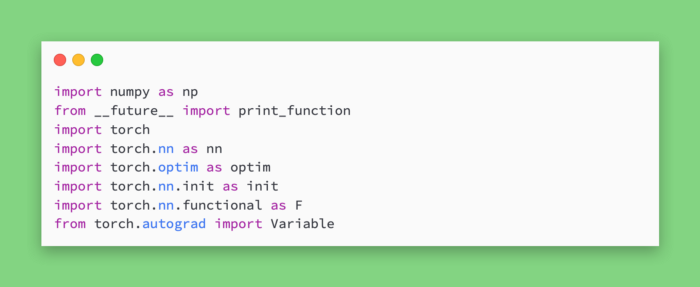

PyTorch and TensorFlow differ primarily in how computational graphs are defined, how calculations are performed, and how flexible the scripts are. In TensorFlow 1.x, the graph is defined before it runs. Variables and placeholders must be declared before the model is initialized, with placeholders standing in for data that will be fed in later. PyTorch instead builds the graph as operations execute, so the model can be defined as we go, without placeholders. Because of this, PyTorch is a dynamic framework. (TensorFlow 2.x also executes eagerly by default.)
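The following is a small sketch of what "dynamic" means in practice. Ordinary Python control flow decides how many operations run, and autograd records exactly the operations that actually executed. The function `repeated_doubling` is a hypothetical example written for illustration, not part of PyTorch.

```python
import torch

def repeated_doubling(x: torch.Tensor, limit: float = 20.0) -> torch.Tensor:
    # Hypothetical example: the number of operations depends on the data itself
    y = x
    while y < limit:
        y = y * 2
    return y

x = torch.tensor(2.0, requires_grad=True)
y = repeated_doubling(x)  # 2 -> 4 -> 8 -> 16 -> 32: four multiplications are recorded
y.backward()              # backpropagate through the graph that was just built
print(y.item(), x.grad.item())  # 32.0 16.0
```

No placeholder or separate graph-definition step is needed. If the starting value changes, a different graph is built on the next call.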

Linear Function

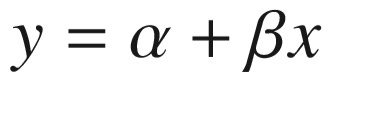

A linear function is a simple function that usually transfers information between the last hidden layer and the output layer of a deep learning model. We use it where the data shows little variation. A linear function does not squash its input, so its output is not limited to a fixed range. This makes it a natural choice for the output layer in linear regression and in deep learning models that predict numeric outcomes from input information. The formula is as follows.

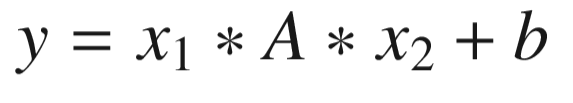
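In PyTorch, a linear transformation is available as nn.Linear, which computes y = xA^T + b. The pure identity f(x) = x, sometimes called a linear activation, is available as nn.Identity. The following minimal sketch checks nn.Linear against a manual computation. The layer sizes are chosen arbitrarily.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # reproducible random weights

linear = nn.Linear(in_features=3, out_features=2)  # weight A: (2, 3), bias b: (2,)
x = torch.randn(4, 3)  # a batch of 4 samples with 3 features each

# nn.Linear computes y = x A^T + b
y = linear(x)
y_manual = x @ linear.weight.T + linear.bias
assert torch.allclose(y, y_manual, atol=1e-6)

# A linear (identity) activation leaves values unbounded: large inputs pass through unchanged
identity = nn.Identity()
big = torch.tensor([1e6, -1e6])
print(identity(big))
```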

Bilinear Function

The bilinear function is another information transfer function. It applies a bilinear transformation to two incoming inputs, y = x1^T A x2 + b, and is available in PyTorch as nn.Bilinear.

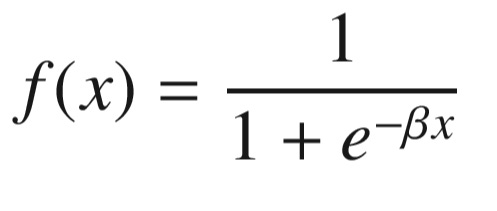

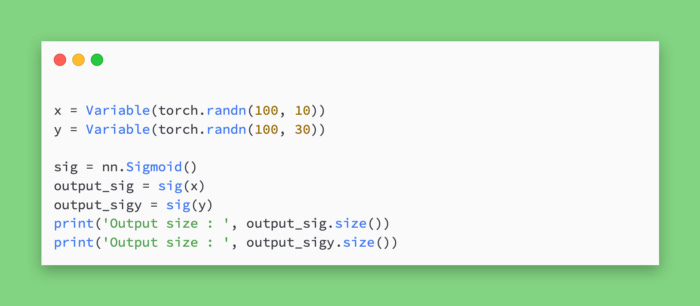

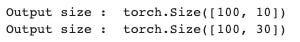
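The following sketch shows how nn.Bilinear combines two inputs. For each output feature o, it computes the sum over i and j of x1[i] * A[o, i, j] * x2[j], plus a bias. The weight tensor therefore has shape (out_features, in1_features, in2_features). The feature sizes are arbitrary, and torch.einsum is used only to spell out the same computation by hand.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# nn.Bilinear computes y = x1^T A x2 + b for each output feature
bilinear = nn.Bilinear(in1_features=3, in2_features=4, out_features=2)
print(bilinear.weight.shape)  # torch.Size([2, 3, 4])

x1 = torch.randn(5, 3)  # batch of 5 samples, first input
x2 = torch.randn(5, 4)  # batch of 5 samples, second input

y = bilinear(x1, x2)    # shape (5, 2)

# Manual version: for sample n and output o, sum_ij x1[n, i] * A[o, i, j] * x2[n, j]
y_manual = torch.einsum("ni,oij,nj->no", x1, bilinear.weight, x2) + bilinear.bias
assert torch.allclose(y, y_manual, atol=1e-5)
```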

Sigmoid Function

Data mining and analytics professionals often use sigmoid functions because they are easy to explain and implement. The sigmoid is nonlinear, and nonlinear functions are what allow a model to generalize beyond linear relationships in the dataset. We want the model to capture all types of nonlinearity in the data as weights pass from the input layer to the hidden layer, so we recommend using the sigmoid function in the hidden layer. Its gradient is also easy to compute, because the derivative can be written in terms of the function itself: σ'(x) = σ(x)(1 - σ(x)).

Because its output is confined to the range 0 to 1, the sigmoid function is mostly used for classification tasks. This is an advantage: the output can be read as the probability that an input is a member of the class. The sigmoid also has limitations. Training can get stuck in local minima, and the gradient becomes very small for large positive or negative inputs, which slows learning. Its equation is as follows.

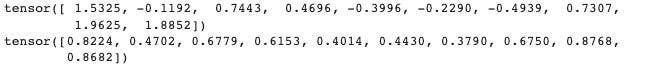
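The sigmoid is σ(x) = 1 / (1 + e^(-x)). The following sketch compares a hand-written version with torch.sigmoid and confirms that the output stays between 0 and 1. It also uses autograd to check that the derivative equals σ(x)(1 - σ(x)). The helper `sigmoid_manual` is written here for illustration only.

```python
import torch

def sigmoid_manual(x: torch.Tensor) -> torch.Tensor:
    # Illustrative helper: sigma(x) = 1 / (1 + exp(-x))
    return 1.0 / (1.0 + torch.exp(-x))

x = torch.linspace(-6.0, 6.0, steps=13, requires_grad=True)
s = torch.sigmoid(x)

assert torch.allclose(s, sigmoid_manual(x))
assert bool(((s > 0) & (s < 1)).all())  # output always lies in (0, 1)

# The derivative can be written in terms of the output itself
s.sum().backward()
assert torch.allclose(x.grad, (s * (1 - s)).detach())

print(torch.sigmoid(torch.tensor(0.0)))  # tensor(0.5000): a 50% "probability"
```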

The hyperbolic tangent function

The hyperbolic tangent (tanh) function is another type of transformation function. It is used to transform the mapping layer into the hidden layer, and neural network models typically use it between hidden layers. The tanh function ranges from -1 to +1.

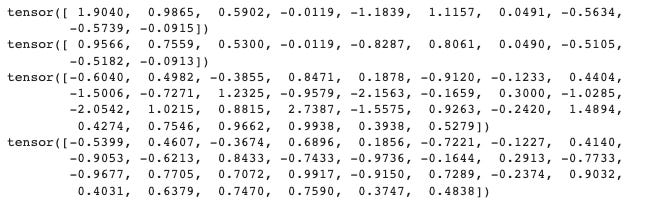

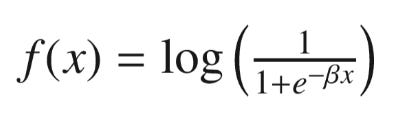
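As a sketch, tanh(x) = (e^x - e^(-x)) / (e^x + e^(-x)) can be checked against nn.Tanh. The output stays between -1 and +1 and is centered on zero. Tanh is also a rescaled, shifted sigmoid: tanh(x) = 2σ(2x) - 1.

```python
import torch
import torch.nn as nn

x = torch.linspace(-5.0, 5.0, steps=11)

# tanh(x) = (e^x - e^-x) / (e^x + e^-x)
tanh_manual = (torch.exp(x) - torch.exp(-x)) / (torch.exp(x) + torch.exp(-x))
tanh_builtin = nn.Tanh()(x)

assert torch.allclose(tanh_builtin, tanh_manual, atol=1e-6)
assert bool(((tanh_builtin > -1) & (tanh_builtin < 1)).all())  # range (-1, 1)

# Relationship to the sigmoid: tanh(x) = 2 * sigmoid(2x) - 1
assert torch.allclose(tanh_builtin, 2 * torch.sigmoid(2 * x) - 1, atol=1e-6)
```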

Log Sigmoid Transfer Function

We use the log sigmoid transfer function, log(1 / (1 + e^(-x))), to map the input layer to the hidden layer. Its output is always negative. We should use the log sigmoid transfer function when the input features contain many outliers (for example, very large numeric values).

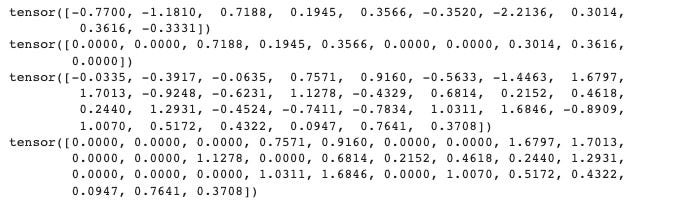
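The following sketch shows one practical reason to use the dedicated nn.LogSigmoid (or torch.nn.functional.logsigmoid) instead of taking the log of a sigmoid. For extreme inputs, the sigmoid underflows to 0 in float32, so the naive version returns -inf. The built-in version stays finite. The input values are chosen only to show this effect.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

x = torch.tensor([-200.0, -5.0, 0.0, 5.0])

# log sigmoid: log(1 / (1 + exp(-x))); output is always <= 0
stable = nn.LogSigmoid()(x)          # same values as F.logsigmoid(x)
naive = torch.log(torch.sigmoid(x))  # sigmoid(-200) underflows to 0 in float32

print(stable)  # finite everywhere; about -200 for x = -200
print(naive)   # -inf for x = -200
```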

ReLU Function

The rectified linear unit (ReLU) is another activation function. It transfers information from the input layer toward the output layer and is used most frequently in convolutional neural networks. ReLU computes max(0, x), so its output ranges from 0 to infinity. In a neural network model, it is used mostly between hidden layers.
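As a minimal sketch, ReLU keeps positive inputs and sets negative inputs to zero, which is the same rule as clamping at 0. The convolutional block at the end shows the common pattern of placing ReLU right after a convolution. Its channel counts and image size are arbitrary.

```python
import torch
import torch.nn as nn

x = torch.tensor([-3.0, -0.5, 0.0, 0.5, 3.0])

relu_out = nn.ReLU()(x)          # max(0, x), element-wise
manual = torch.clamp(x, min=0)   # same rule written with clamp

print(relu_out)  # negative inputs become 0; positive inputs pass through unchanged
assert torch.equal(relu_out, manual)

# A typical convolutional block places ReLU right after the convolution
conv_block = nn.Sequential(nn.Conv2d(1, 4, kernel_size=3, padding=1), nn.ReLU())
features = conv_block(torch.randn(1, 1, 8, 8))  # one 8x8 single-channel image
assert features.shape == (1, 4, 8, 8) and bool((features >= 0).all())
```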

Transfer functions are interchangeable within a neural network architecture. To increase the accuracy of the model, different functions can be used at different stages, such as the input, hidden, and output layers.
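The following sketch illustrates that interchangeability: the same architecture is built with different hidden-layer activations. `make_mlp` is a hypothetical helper written for this example, and the layer sizes are arbitrary. Which activation gives the best accuracy depends on the data and has to be checked experimentally.

```python
import torch
import torch.nn as nn

def make_mlp(activation: type[nn.Module], in_dim: int = 4, hidden: int = 16, out_dim: int = 1) -> nn.Sequential:
    # Hypothetical helper: the same architecture with a swappable hidden activation
    return nn.Sequential(
        nn.Linear(in_dim, hidden),
        activation(),
        nn.Linear(hidden, hidden),
        activation(),
        nn.Linear(hidden, out_dim),
    )

x = torch.randn(8, 4)  # batch of 8 samples, 4 features each
for act in (nn.Sigmoid, nn.Tanh, nn.ReLU, nn.LeakyReLU):
    model = make_mlp(act)
    print(act.__name__, model(x).shape)  # every variant produces shape (8, 1)
```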

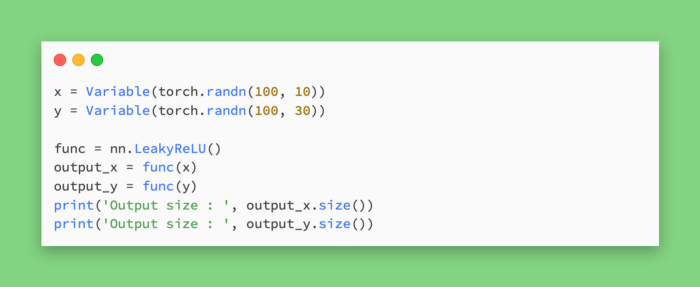

The leaky ReLU

Neural networks that use ReLU commonly suffer from the dying ReLU problem. A unit whose input stays negative outputs zero, receives a zero gradient, and stops learning. Leaky ReLU avoids this problem by allowing a small, non-zero gradient when the unit is not active.

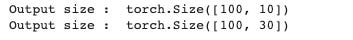

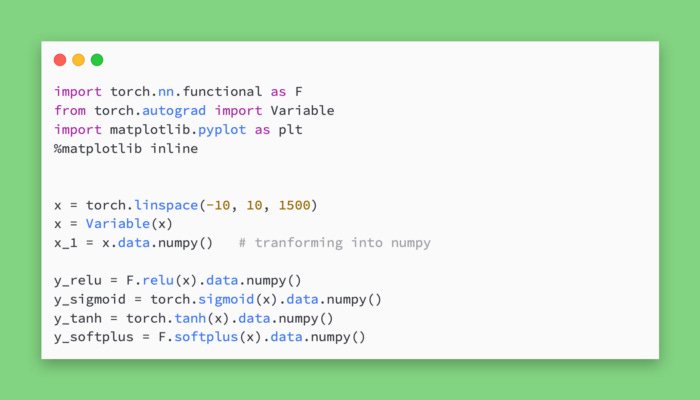
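The following sketch compares the gradients of ReLU and Leaky ReLU on the same inputs. For negative inputs, ReLU gives a gradient of exactly 0. Leaky ReLU gives a gradient equal to its negative_slope parameter, which defaults to 0.01 in PyTorch. The input values are arbitrary.

```python
import torch
import torch.nn as nn

x = torch.tensor([-2.0, -1.0, 1.0, 2.0], requires_grad=True)

# Standard ReLU: zero output and zero gradient for negative inputs
nn.ReLU()(x).sum().backward()
relu_grad = x.grad.clone()

# Leaky ReLU: a small slope (negative_slope) for negative inputs
x.grad = None  # reset the accumulated gradient
nn.LeakyReLU(negative_slope=0.01)(x).sum().backward()
leaky_grad = x.grad.clone()

print(relu_grad)   # 0 where x < 0: those units stop learning
print(leaky_grad)  # 0.01 where x < 0: the units can still be updated
```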

A visual representation of activation functions

How can activation functions be visualized? Visualizing them is important for building a neural network model correctly.

Activation functions transform data as it passes between layers. To visualize a function, plot the transformed data against the original tensor. Convert a sample tensor to a PyTorch variable, apply the function, and store the result as a new tensor. Then use matplotlib to plot the transformed tensor against the original one.

Besides improving accuracy, the right activation function also helps the model extract meaningful information from the data.

We created a linear array of 1,500 sample points between -10 and +10 and converted it to a Torch variable. We then calculated the activation functions and converted the results back to NumPy arrays for plotting. The activation functions are shown in the following images.
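The procedure above can be sketched as follows, assuming a recent PyTorch in which plain tensors replace the older torch.autograd.Variable wrapper. The helper names `compute_activations` and `plot_activations` are hypothetical. The selection of functions mirrors the sections above, and the plot layout is an arbitrary choice.

```python
import numpy as np
import torch
import torch.nn.functional as F

def compute_activations(n_points: int = 1500) -> tuple[np.ndarray, dict[str, np.ndarray]]:
    # Evenly spaced sample points between -10 and +10
    x_np = np.linspace(-10, 10, n_points, dtype=np.float32)
    x = torch.from_numpy(x_np)  # NumPy array -> Torch tensor

    # Apply each activation, then convert the results back to NumPy for plotting
    outputs = {
        "sigmoid": torch.sigmoid(x),
        "tanh": torch.tanh(x),
        "log sigmoid": F.logsigmoid(x),
        "ReLU": F.relu(x),
        "leaky ReLU": F.leaky_relu(x, negative_slope=0.01),
    }
    return x_np, {name: y.numpy() for name, y in outputs.items()}

def plot_activations() -> None:
    import matplotlib.pyplot as plt  # imported here so compute_activations works without matplotlib

    x_np, outputs = compute_activations()
    fig, axes = plt.subplots(1, len(outputs), figsize=(4 * len(outputs), 3))
    for ax, (name, y) in zip(axes, outputs.items()):
        ax.plot(x_np, y)  # transformed values against the original inputs
        ax.set_title(name)
        ax.set_xlabel("input")
        ax.set_ylabel("output")
        ax.grid(True)
    fig.tight_layout()
    plt.show()
```

Calling `plot_activations()` draws one panel per function and requires matplotlib. The numerical part in `compute_activations()` only needs NumPy and PyTorch.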