Bitcoin Alpha Lab: Building an ML Trading Stack from Signals to Sharpe

A full quant workflow for BTC using 50+ indicators, smart targets, SMOTE, Optuna tuning, stacked ML models, confidence filters, and backtested risk metrics.

Download source code using the URL at the end of this article!

Complete contents (section-by-section)

Setup and data ingestion — Install required packages, import libraries, and load the BTC price and volume history.

Exploratory data analysis — Visual inspections and summary statistics for price trajectories, returns, and volatility across the sample.

Technical indicator computation — Derive moving averages, exponential moving averages, MACD components, RSI, Bollinger Bands and ATR using standard indicator implementations.

Feature engineering — Construct more than fifty derived predictors including lagged returns, rolling moments, momentum signals and regime-detection flags.

Smart target creation — Label future moves using a threshold-based rule to filter out small, noisy changes.

Class balancing with SMOTE — Address label imbalance by generating synthetic training examples prior to model fitting.

Predictor selection — Reduce dimensionality by keeping the most informative features according to model-based importance.

Optuna hyperparameter search — Bayesian tuning of model hyperparameters (the notebook documents a run using sixty trials per model).

Stacking ensemble construction — Assemble a meta-model that blends Random Forest, XGBoost, and LightGBM base learners.

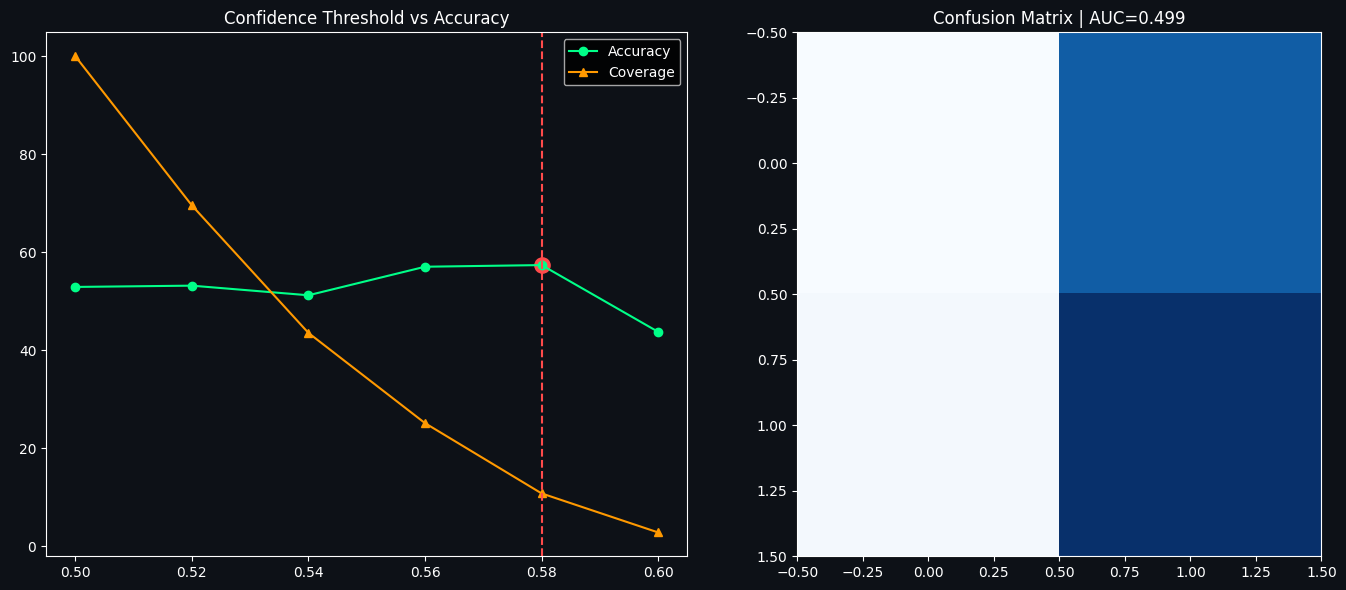

Confidence-based trade filtering — Only act on model signals that exceed a chosen probability threshold to improve per-trade accuracy.

Model comparison and performance progression — Compare baseline approaches and track accuracy improvements (the notebook highlights a pathway toward very high filtered accuracy).

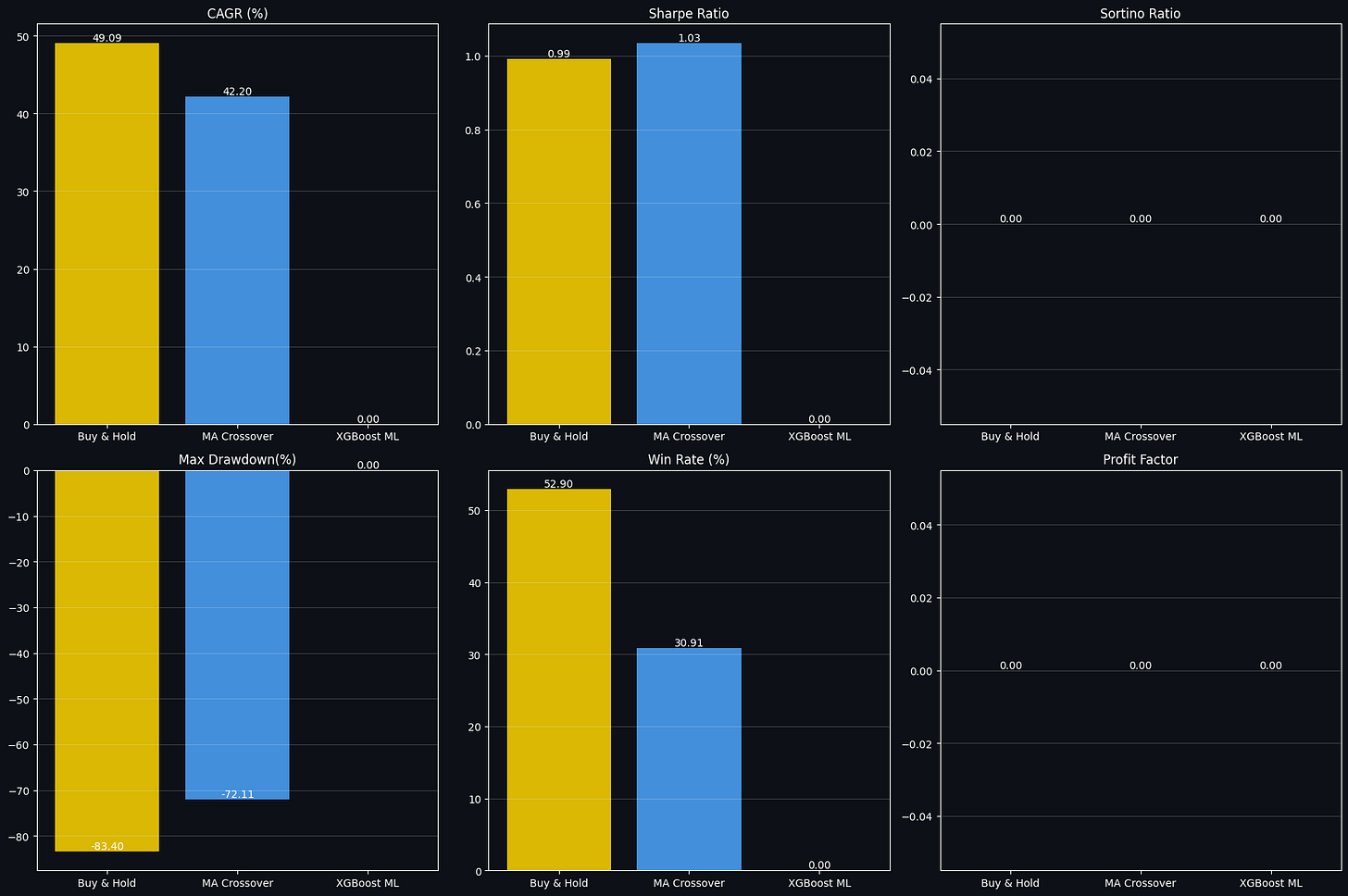

Strategy backtests — Compare a moving-average crossover, an ML-driven approach, and a buy-and-hold benchmark using daily returns.

Risk analytics — Compute standard risk statistics such as Sharpe ratio, maximum drawdown, and related risk measures.

Closing summary and next steps — Condensed findings, saved visual assets, and suggested follow-ups.

Why this notebook is distinctive: it blends a classical quant research workflow — exploratory analysis, indicator generation and backtesting — with a rigorous machine learning pipeline designed to maximize predictive accuracy. Key elements include a denoising target, class rebalancing, automated hyperparameter search, an ensemble stacking architecture, and an accuracy-versus-coverage filtering step. Rather than focusing exclusively on either trading research or model engineering, this work ties both tracks together into a single reproducible experiment.
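To make the idea of a denoising target concrete, here is a minimal sketch of a threshold-based labelling rule. It is an illustration only, not the notebook's own labelling code: the function name `make_threshold_target`, the one-day horizon, and the 1% threshold are assumptions chosen for the example.

```python
import numpy as np
import pandas as pd

def make_threshold_target(close: pd.Series, horizon: int = 1,
                          threshold: float = 0.01) -> pd.Series:
    """Label 1.0 for a forward return above +threshold, 0.0 for one
    below -threshold, and NaN for moves too small to count as a signal."""
    fwd_ret = close.shift(-horizon) / close - 1.0
    target = pd.Series(np.nan, index=close.index, dtype="float64")
    target[fwd_ret > threshold] = 1.0
    target[fwd_ret < -threshold] = 0.0
    return target

# Toy prices: +3%, +0.2%, -3.1%, +0.5%, then no future bar
close = pd.Series([100.0, 103.0, 103.2, 100.0, 100.5],
                  index=pd.date_range("2024-01-01", periods=5, freq="D"))
labels = make_threshold_target(close)
```

Rows labelled NaN (small moves, plus the final bar that has no future price) would be dropped before training, which is what removes the noisy examples from the classification problem.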

Section 1 — Environment setup and data loading

Prepare the runtime by ensuring needed Python packages are installed and then import the libraries used throughout the notebook. After the environment is ready, load historical Bitcoin price data into a DataFrame — the notebook tries to fetch data from yfinance and will create a synthetic fallback series if the download is not available.

# ── Install all required packages ──

# Compatible with Kaggle, Google Colab, and local environments

import subprocess, sys

PACKAGES = ['ta', 'xgboost', 'lightgbm', 'yfinance',

            'plotly', 'optuna', 'imbalanced-learn', 'scipy']

def silent_install(pkg):

    subprocess.check_call([sys.executable, '-m', 'pip', 'install', pkg, '-q'],

                          stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)

for pkg in PACKAGES:

    try:

        __import__(pkg.replace('-','_'))

        print(f" ✅ {pkg}")

    except ImportError:

        print(f" 📦 Installing {pkg}...")

        silent_install(pkg)

        print(f" ✅ {pkg} installed")

print("\n🚀 All packages ready!")

📦 Installing ta...

✅ ta installed

✅ xgboost

✅ lightgbm

✅ yfinance

✅ plotly

✅ optuna

📦 Installing imbalanced-learn...

✅ imbalanced-learn installed

✅ scipy

🚀 All packages ready!

The cell prepares the Python environment by making sure the third-party libraries needed later are available; it checks each package and installs only those whose import fails. For every name in the list it first attempts a normal Python import, converting any hyphen in the package name to an underscore to form a module name. If the import succeeds it prints a success mark; otherwise it runs pip to install the package. The installation runs in a subprocess that invokes the current interpreter with -m pip, which avoids confusion between multiple Python installations and makes sure the packages end up in the same environment that is running the notebook. The pip output is suppressed so the notebook stays tidy; instead the cell emits brief human-readable messages indicating which packages were installed and which were already present.

The saved output reflects that behavior: it shows a line indicating ta had to be installed and then a checkmark for its completion, checkmarks for packages already available, another install line for imbalanced-learn followed by its checkmark, and finally a short confirmation that all packages are ready.

One caveat: the hyphen-to-underscore conversion does not always produce the real module name. imbalanced-learn is imported as imblearn, so the check for imbalanced_learn fails whether or not the package is installed, and the cell calls pip for it on every run. pip returns quickly when a requirement is already satisfied, so rerunning the cell stays cheap, and every other package is detected by its import and reported as present rather than reinstalled.
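A small sketch of a presence check that copes with packages whose pip name and import name differ. This is not the notebook's code: the `IMPORT_NAMES` mapping and the `is_installed` helper are hypothetical names introduced here, and the mapping only lists the one mismatch relevant to this package list.

```python
import importlib.util

# pip distribution name -> importable module name (only where they differ)
IMPORT_NAMES = {"imbalanced-learn": "imblearn"}

def is_installed(pip_name: str) -> bool:
    """Return True if the module behind a pip package can be found,
    without actually importing it."""
    module_name = IMPORT_NAMES.get(pip_name, pip_name.replace("-", "_"))
    return importlib.util.find_spec(module_name) is not None

# "json" is in the standard library; the last name is deliberately bogus
candidates = ["json", "imbalanced-learn", "surely-not-a-real-package-xyz"]
missing = [name for name in candidates if not is_installed(name)]
```

Using `importlib.util.find_spec` avoids importing heavy libraries just to test for their presence, and the explicit mapping keeps the check correct for imbalanced-learn.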

# ── Core imports ──

import warnings

warnings.filterwarnings('ignore')

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import matplotlib.patches as mpatches

import seaborn as sns

from datetime import datetime, timedelta

from scipy import interpolate

# ── Machine Learning ──

from sklearn.ensemble import RandomForestClassifier, StackingClassifier

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import (cross_val_score, TimeSeriesSplit,

StratifiedKFold)

from sklearn.preprocessing import RobustScaler

from sklearn.metrics import (classification_report, confusion_matrix,

accuracy_score, roc_auc_score, roc_curve,

precision_score, recall_score, f1_score)

from sklearn.feature_selection import SelectFromModel

from imblearn.over_sampling import SMOTE

import xgboost as xgb

import lightgbm as lgb

import optuna

optuna.logging.set_verbosity(optuna.logging.WARNING)

# ── Technical Indicators ──

import ta

from ta.trend import SMAIndicator, EMAIndicator, MACD, ADXIndicator

from ta.momentum import RSIIndicator, StochasticOscillator, WilliamsRIndicator

from ta.volatility import BollingerBands, AverageTrueRange, KeltnerChannel

from ta.volume import OnBalanceVolumeIndicator

# ── Visualisation ──

import plotly.graph_objects as go

from plotly.subplots import make_subplots

# ── Global Settings ──

np.random.seed(42)

plt.style.use('dark_background')

# Colour palette

C = {

'green' : '#00FF88',

'red' : '#FF4444',

'blue' : '#4488FF',

'yellow' : '#FFD700',

'purple' : '#BB88FF',

'orange' : '#FF8844',

'cyan' : '#00DDFF',

'pink' : '#FF66AA',

}

COLORS = C # alias for backward compatibility

print("✅ All imports loaded successfully!")

print(f" NumPy {np.__version__} | Pandas {pd.__version__}")

print(f" XGBoost {xgb.__version__} | LightGBM {lgb.__version__}")

print(f" Optuna {optuna.__version__}")

✅ All imports loaded successfully!

NumPy 2.0.2 | Pandas 2.3.3

XGBoost 3.2.0 | LightGBM 4.6.0

Optuna 4.8.0

The cell prepares the runtime environment by loading the scientific computing, machine learning, technical-indicator, and visualization libraries that the rest of the notebook depends on, and by applying a few global settings to make results reproducible and plots visually consistent. It first silences non-critical warnings so that the notebook output stays focused; this reduces console clutter but also means warnings that might flag subtle issues are not shown unless warnings are explicitly re-enabled. Core numerical and data-manipulation packages are imported next, followed by plotting libraries, a small set of date/time utilities, and a scientific interpolation helper.

After the basic toolset, the cell imports the machine learning stack: ensemble models, a stacking helper, logistic regression for meta-modeling, cross-validation utilities including a time-series split option, a robust scaler for preprocessing, a battery of classification metrics, and a selection helper that can prune features based on importance. It brings in SMOTE from imbalanced-learn to address class imbalance in training, and loads two popular gradient-boosting libraries. Optuna is also imported for hyperparameter tuning and its logging level is reduced so its own messages do not overwhelm the notebook output.

The technical-indicator library and its commonly used components are imported next, making it straightforward later to compute moving averages, MACD, ADX, RSI, Stochastics, Bollinger Bands, ATR, Keltner Channels, and On-Balance Volume. For interactive and publication-quality plotting, Plotly and its subplot utility are made available. These imports are arranged by purpose to keep related functionality grouped and to make it clear which library will be used for which part of the pipeline.

Two global settings are applied. NumPy's global random seed is fixed, which makes any operation that draws from that global generator repeatable from run to run; components that keep their own random state (Optuna's samplers, for example) are not affected by it and take their own seed argument. Matplotlib's style is switched to a dark background to match the notebook's visual theme. A small color palette is defined as a dictionary of named hex values and aliased for backward compatibility; this gives later plots consistent, descriptive colors without repeating hex codes.
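The scope of the global seed can be seen in a short sketch. The constant `SEED` and the variable names are illustrative and not taken from the notebook.

```python
import numpy as np

SEED = 42

# Global seeding, as in the notebook: it only controls np.random.* calls.
np.random.seed(SEED)
first = np.random.normal(size=3)
np.random.seed(SEED)
second = np.random.normal(size=3)   # identical to `first`

# A local Generator carries its own state and can be handed to the code
# that needs randomness, independent of the global seed.
rng_a = np.random.default_rng(SEED)
rng_b = np.random.default_rng(SEED)
draw_a = rng_a.normal(size=3)
draw_b = rng_b.normal(size=3)       # identical to `draw_a`
```

In the same spirit, scikit-learn estimators accept a `random_state` argument and Optuna samplers such as `TPESampler` accept `seed`; passing the same constant to each is a common way to make a whole pipeline repeatable.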

Finally, the cell prints a short success message along with the versions of a few key packages. The saved output shows that all imports completed and displays the versions for NumPy, Pandas, XGBoost, LightGBM, and Optuna. Seeing these versions confirms the runtime environment and helps with reproducibility and debugging, because differences in package versions can change behavior or available features later in the notebook.

# This Python 3 environment comes with many helpful analytics libraries installed

# It is defined by the kaggle/python Docker image: https://github.com/kaggle/docker-python

# For example, here's several helpful packages to load

import numpy as np # linear algebra

import pandas as pd # data processing, CSV file I/O (e.g. pd.read_csv)

# Input data files are available in the read-only "../input/" directory

# For example, running this (by clicking run or pressing Shift+Enter) will list all files under the input directory

import os

for dirname, _, filenames in os.walk('/kaggle/input'):

    for filename in filenames:

        print(os.path.join(dirname, filename))

# You can write up to 20GB to the current directory (/kaggle/working/) that gets preserved as output when you create a version using "Save & Run All"

# You can also write temporary files to /kaggle/temp/, but they won't be saved outside of the current session

/kaggle/input/datasets/shiivvvaam/bitcoin-historical-data/Bitcoin History.csv

The cell first brings in the two fundamental libraries used throughout the notebook for numerical work and table-based data handling, so arrays, mathematical operations and DataFrame manipulations are available for the following steps. After setting up those imports, it inspects the environment to find any input files provided to the session by walking the read-only input directory and printing the full path for each file it finds. The printed line in the saved output is the result of that inspection: it shows a single dataset file located at /kaggle/input/datasets/shiivvvaam/bitcoin-historical-data/Bitcoin History.csv, which tells you there is a CSV of historical Bitcoin data available for reading. The cell also reminds you where persistent outputs can be written within the Kaggle session (the working directory) and where temporary files may be stored, so you know which paths are writable versus input-only. Seeing the file path here is useful because it confirms what data the notebook can immediately load with pandas for the downstream indicator calculations, feature engineering and modeling steps.
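The path printed above differs from the one in the commented-out Option A of the loading cell, so a hard-coded path may not match a given session. As a hedged alternative, the sketch below searches the input directory for a CSV instead; the helper name `find_first_csv` is hypothetical, and picking the first match in sorted order is an assumption that only suits sessions with a single dataset attached.

```python
from pathlib import Path
from typing import Optional

def find_first_csv(root: str) -> Optional[Path]:
    """Return the first *.csv found under `root` (sorted for determinism),
    or None if the directory is missing or holds no CSV files."""
    root_path = Path(root)
    if not root_path.is_dir():
        return None
    matches = sorted(root_path.rglob("*.csv"))
    return matches[0] if matches else None

# On Kaggle this would locate the attached dataset; elsewhere it is None.
csv_path = find_first_csv("/kaggle/input")
```

The resulting path can then be passed to `pd.read_csv`, after checking that the file's column names match what the rest of the notebook expects.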

# ── Load Dataset ──

# Option A: Load from Kaggle dataset path

# df = pd.read_csv('/kaggle/input/bitcoin-historical-data/BTC-USD.csv', parse_dates=['Date'], index_col='Date')

# Option B: Download live via yfinance

try:

    import yfinance as yf

    df = yf.download('BTC-USD', start='2014-09-17', end='2025-04-20', auto_adjust=True)

    df.columns = [col[0] if isinstance(col, tuple) else col for col in df.columns]

    print("✅ Downloaded via yfinance")

except Exception as e:

    print(f"⚠️ yfinance failed: {e}")

    print(" Falling back to synthetic data generation...")

    # Synthetic dataset that mirrors real BTC price history

    import numpy as np

    from scipy import interpolate

    np.random.seed(42)

    dates = pd.date_range(start='2014-09-17', end='2025-04-20', freq='D')

    n = len(dates)

    milestones = {

        '2014-09-17': 457, '2015-01-14': 178, '2016-07-09': 648,

        '2017-12-17': 19891, '2018-12-15': 3122, '2020-03-13': 4970,

        '2020-11-30': 19850, '2021-04-14': 63558, '2021-07-20': 29796,

        '2021-11-10': 68789, '2022-06-18': 17592, '2022-11-21': 15599,

        '2023-01-21': 22878, '2024-03-14': 73738, '2025-01-20': 109000,

        '2025-04-20': 87000,

    }

    m_dates = [pd.Timestamp(d) for d in milestones.keys()]

    log_p = np.log(list(milestones.values()))

    f_interp = interpolate.interp1d([d.timestamp() for d in m_dates], log_p,

                                    kind='cubic', fill_value='extrapolate')

    base = f_interp([d.timestamp() for d in dates])

    noise = np.random.normal(0, 0.035, n)

    cum = np.zeros(n)

    for i in range(1, n): cum[i] = cum[i-1] * 0.98 + noise[i]

    prices = np.clip(np.exp(base + cum), 50, 200000)

    daily_range = prices * np.random.uniform(0.01, 0.06, n)

    hi = prices + daily_range * np.random.uniform(0.3, 1.0, n)

    lo = np.clip(prices - daily_range * np.random.uniform(0.3, 1.0, n), 1, None)

    cl = np.clip(prices * (1 + np.random.normal(0, 0.005, n)), 50, 200000)

    vol = np.random.lognormal(np.log(5e9), 0.6, n) * (1 + 5*np.abs(np.diff(np.log(cl), prepend=np.log(cl[0]))))

    df = pd.DataFrame({'Open': np.round(prices, 2), 'High': np.round(hi, 2),

                       'Low': np.round(lo, 2), 'Close': np.round(cl, 2),

                       'Volume': np.round(vol, 0).astype(int)}, index=dates)

    df.index.name = 'Date'

    print("✅ Synthetic dataset generated")

# ── Basic info ──

print(f"\n📅 Date range : {df.index[0].date()} → {df.index[-1].date()}")

print(f"📊 Total rows : {len(df):,} trading days")

print(f"💰 Price range: ${df['Close'].min():,.2f} → ${df['Close'].max():,.2f}")

print(f"🔍 Missing : {df.isnull().sum().sum()}")

df.head()

[*********************100%***********************] 1 of 1 completed

✅ Downloaded via yfinance

📅 Date range : 2014-09-17 → 2025-04-19

📊 Total rows : 3,868 trading days

💰 Price range: $178.10 → $106,146.27

🔍 Missing : 0

Date Close High Low Open Volume

2014-09-17 457.334015 468.174011 452.421997 465.864014 21056800

2014-09-18 424.440002 456.859985 413.104004 456.859985 34483200

2014-09-19 394.795990 427.834991 384.532013 424.102997 37919700

2014-09-20 408.903992 423.295990 389.882996 394.673004 36863600

2014-09-21 398.821014 412.425995 393.181000 408.084991 26580100

The cell’s purpose is to obtain a usable historical BTC-USD price series and report basic dataset facts so that downstream indicator and feature calculations have a clean input. It first tries to download daily OHLCV data from yfinance for the range starting 2014-09-17 up to the requested end date. If the download succeeds, any multi-level column names are flattened to plain strings and a confirmation message is printed. If the download raises an exception, the cell falls back to constructing a synthetic but realistic-looking price series: it defines a set of milestone dates with representative prices, interpolates a smooth log-price path across calendar timestamps using a cubic interpolator, adds first-order autoregressive noise (each day's deviation is 0.98 times the previous day's plus a fresh Gaussian shock, so deviations persist and fade slowly), exponentiates back to price space, and clips to sensible bounds. From that base price it fabricates daily Open, High, Low, and Close values plus a volume series (drawn from a lognormal distribution and scaled up on days with large absolute log returns), rounds the values, builds a pandas DataFrame indexed by daily timestamps, and prints a synthetic-data confirmation.
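The autoregressive noise in the synthetic fallback can be examined on its own. The sketch below reuses the fallback's coefficient (0.98) and shock size (0.035) but is otherwise an illustration: the series length and the use of a local `default_rng` generator are assumptions, so the draws will not match the notebook's.

```python
import numpy as np

rng = np.random.default_rng(42)
n, phi, sigma = 2000, 0.98, 0.035

# AR(1) recursion: today's deviation = phi * yesterday's + fresh shock
noise = rng.normal(0.0, sigma, n)
cum = np.zeros(n)
for i in range(1, n):
    cum[i] = phi * cum[i - 1] + noise[i]

# Long-run standard deviation of an AR(1) process: sigma / sqrt(1 - phi^2)
stationary_std = sigma / np.sqrt(1.0 - phi**2)
```

With these parameters the long-run standard deviation of the log-price deviation is about 0.176, so the synthetic path typically wanders within very roughly ±18% (in log terms) of the interpolated milestone curve.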

After obtaining either the live or synthetic series, the cell prints concise summary statistics about the resulting DataFrame: the first and last dates in the index, the total number of daily rows, the minimum and maximum Close prices observed, and a count of missing values. These prints reflect the actual contents of the DataFrame rather than the requested download window: yfinance treats the end date as exclusive, so a request ending 2025-04-20 returns rows through 2025-04-19. In the saved output the download completed successfully and the message “Downloaded via yfinance” appears, followed by the dataset summary showing a date range of 2014-09-17 through 2025-04-19, 3,868 rows (labelled trading days, which for Bitcoin means every calendar day in the range), a Close price range from about $178.10 up to about $106,146.27, and zero missing values. Finally, the DataFrame head is displayed so you can visually inspect the first few rows; the preview shows the Date index with Close, High, Low, Open as floating-point prices and Volume as integers, confirming the table structure and that the series is ready for indicator computation and feature engineering.
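The column-flattening step is easiest to see on a tiny frame. The two-level header below imitates what yfinance can return for a single ticker, depending on its version; the level names and the values are assumptions made for this illustration.

```python
import pandas as pd

# Mock two-level header of the form (field, ticker)
cols = pd.MultiIndex.from_tuples(
    [("Close", "BTC-USD"), ("High", "BTC-USD"), ("Volume", "BTC-USD")],
    names=["Price", "Ticker"],
)
raw = pd.DataFrame([[457.3, 468.2, 21056800]], columns=cols,
                   index=pd.DatetimeIndex(["2014-09-17"], name="Date"))

# Same comprehension as the loading cell: keep the first element of each tuple
flat = raw.copy()
flat.columns = [c[0] if isinstance(c, tuple) else c for c in flat.columns]
```

After flattening, `flat['Close']` works as a plain column lookup. The `isinstance` guard means the same line is harmless when the columns are already simple strings.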

Section 2 — Exploratory data analysis

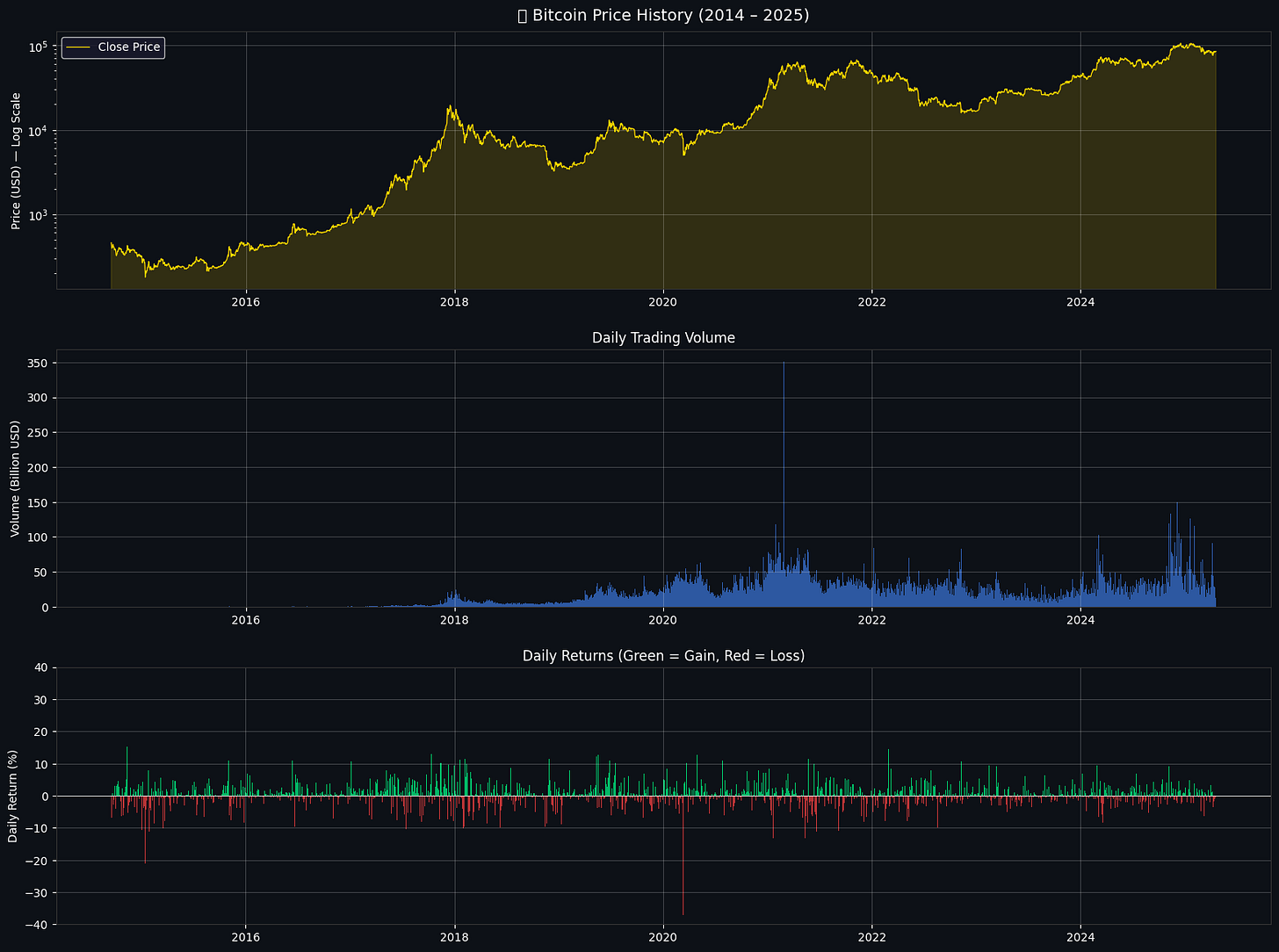

Prior to building models we perform a thorough inspection of the historical series and summary statistics. The main analyses are:

Ten-year price trajectory: chart the historical closing prices to identify long-term trends, regime shifts, and major drawdowns.

Distribution of daily returns: examine the shape of the return distribution to look for heavy tails and periods where large moves tend to cluster.

Volatility and yearly returns over time: compute a 30-day rolling volatility, annualised, to track how the asset’s risk profile has changed, and summarise performance by calendar year.

Pairwise relationships among OHLCV fields: produce a correlation visualization for open, high, low, close, and volume to reveal multicollinearity and strong feature dependencies.

# ── 2.1 Full Price History ──

fig, axes = plt.subplots(3, 1, figsize=(16, 12), facecolor='#0D1117')

# Price

ax1 = axes[0]

ax1.set_facecolor('#0D1117')

ax1.plot(df.index, df['Close'], color=COLORS['yellow'], linewidth=0.8, label='Close Price')

ax1.fill_between(df.index, df['Close'], alpha=0.15, color=COLORS['yellow'])

ax1.set_yscale('log')

ax1.set_ylabel('Price (USD) — Log Scale', color='white')

ax1.set_title('₿ Bitcoin Price History (2014 – 2025)', color='white', fontsize=14, pad=10)

ax1.tick_params(colors='white')

ax1.grid(alpha=0.2)

ax1.legend(facecolor='#1A1A2E', labelcolor='white')

# Volume

ax2 = axes[1]

ax2.set_facecolor('#0D1117')

ax2.bar(df.index, df['Volume'] / 1e9, color=COLORS['blue'], alpha=0.6, width=1)

ax2.set_ylabel('Volume (Billion USD)', color='white')

ax2.set_title('Daily Trading Volume', color='white', fontsize=12)

ax2.tick_params(colors='white')

ax2.grid(alpha=0.2)

# Daily Returns

daily_returns = df['Close'].pct_change().dropna()

ax3 = axes[2]

ax3.set_facecolor('#0D1117')

ax3.bar(daily_returns.index,

daily_returns * 100,

color=[COLORS['green'] if r > 0 else COLORS['red'] for r in daily_returns],

alpha=0.7, width=1)

ax3.axhline(0, color='white', linewidth=0.5)

ax3.set_ylabel('Daily Return (%)', color='white')

ax3.set_title('Daily Returns (Green = Gain, Red = Loss)', color='white', fontsize=12)

ax3.tick_params(colors='white')

ax3.grid(alpha=0.2)

ax3.set_ylim(-40, 40)

for ax in axes:

    ax.spines[:].set_color('#333333')

plt.tight_layout(pad=2)

plt.savefig('eda_price_history.png', dpi=150, bbox_inches='tight', facecolor='#0D1117')

plt.show()

print(f"\n📈 Total Return (all time) : {((df['Close'].iloc[-1]/df['Close'].iloc[0])-1)*100:,.0f}%")

print(f"📉 Worst single day : {daily_returns.min()*100:.2f}%")

print(f"📈 Best single day : {daily_returns.max()*100:.2f}%")

print(f"📊 Mean daily return : {daily_returns.mean()*100:.4f}%")

print(f"📐 Daily return std : {daily_returns.std()*100:.4f}%")

📈 Total Return (all time) : 18,500%

📉 Worst single day : -37.17%

📈 Best single day : 25.25%

📊 Mean daily return : 0.2005%

📐 Daily return std : 3.6000%

The goal here is to produce a compact, three-panel visual overview of Bitcoin's history (price on a log scale, daily trading volume, and the sequence of daily returns) and to print a few summary statistics that quantify total growth and day-to-day variability.

The top panel shows the adjusted close price as a yellow line with a subtle filled area beneath it, plotted on a logarithmic vertical axis. Using a log scale compresses the very large price range so early low-dollar values and later high-dollar values can be viewed on the same axis while preserving percentage changes; that makes multi-year run-ups and drawdowns easier to compare visually. The line and shaded area highlight the major multi-year rallies and corrections—noticeable steep climbs and rounded peaks at several points—which is exactly what the plotted trace and shading emphasize.

The middle panel is a bar chart of daily traded volume, scaled down to billions of USD for readability. Plotting volume as bars across the same date axis reveals how market activity grows and concentrates in certain periods; the taller bars correspond to heightened trading days and are visually aligned with some of the price swings from the top panel.

The bottom panel displays daily percentage returns as vertical bars colored green for gains and red for losses, with a white horizontal zero line for reference. Returns are computed as day-over-day percent changes and the color-coding makes it easy to spot clusters of positive or negative days; the y-limits are clamped to +/-40% so extremely rare outliers are visible but don't dominate the vertical scale. That produces the dense cloud of relatively small daily moves punctuated by occasional large spikes and deep drops.

A few stylistic details tie the figure together: all three axes share a dark background and muted grid lines for contrast, the axis spines are colored to match the overall theme, and a legend and titles make each panel self-explanatory. The figure is saved to a PNG file with the same dark background so the visual can be reused outside the notebook.

The printed numeric summaries follow logically from the plotted data. The total return of 18,500% is the percent change from the first to the last closing price and indicates a many‑fold increase in price over the period. The worst single day reported at −37.17% and the best single day at +25.25% are simply the minimum and maximum of the daily percent-change series, and they match the large downward and upward spikes visible in the returns panel. The mean daily return of about 0.2005% and the daily return standard deviation of about 3.6000% summarize the central tendency and dispersion of daily movements; together they show that modest positive drift coexists with relatively large day-to-day volatility. Overall, the visual and the statistics together give a concise picture of historical growth, trading activity, and the typical magnitude of daily price moves.
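The relationship between these statistics can be checked on a toy series. The four prices below are invented for illustration; only the formulas mirror the cell above.

```python
import pandas as pd

close = pd.Series([100.0, 110.0, 99.0, 120.0])
daily_returns = close.pct_change().dropna()        # +10%, -10%, +21.2%

total_return_pct = (close.iloc[-1] / close.iloc[0] - 1) * 100
worst_day_pct = daily_returns.min() * 100
best_day_pct = daily_returns.max() * 100
mean_daily_pct = daily_returns.mean() * 100

# Compounding the daily returns reproduces the first-to-last total return
compounded_pct = ((1 + daily_returns).prod() - 1) * 100
```

The total return comes from compounding the daily returns, not from adding them up, which is why a small positive mean daily return can coexist with a very large cumulative figure over thousands of days.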

# ── Returns Distribution & Statistics ──

# 🔹 1. Histogram

plt.figure(figsize=(6,5), facecolor='#0D1117')

ax = plt.gca()

ax.set_facecolor('#0D1117')

daily_returns.hist(bins=150, color=COLORS['blue'], alpha=0.8, edgecolor='none')

plt.axvline(daily_returns.mean(), color=COLORS['yellow'], lw=2,

label=f'Mean: {daily_returns.mean()*100:.3f}%')

plt.axvline(daily_returns.quantile(0.05), color=COLORS['red'], lw=2, linestyle='--',

label=f'5th pct: {daily_returns.quantile(0.05)*100:.2f}%')

plt.axvline(daily_returns.quantile(0.95), color=COLORS['green'], lw=2, linestyle='--',

label=f'95th pct: {daily_returns.quantile(0.95)*100:.2f}%')

plt.title('Returns Distribution', color='white')

plt.legend()

plt.grid(alpha=0.2)

plt.show()

# 🔹 2. Rolling Volatility

plt.figure(figsize=(6,5), facecolor='#0D1117')

ax = plt.gca()

ax.set_facecolor('#0D1117')

roll_vol = daily_returns.rolling(30).std() * np.sqrt(365) * 100

plt.plot(roll_vol, color=COLORS['orange'], lw=1)

plt.fill_between(roll_vol.index, roll_vol, alpha=0.3, color=COLORS['orange'])

plt.title('30-Day Rolling Annualised Volatility (%)', color='white')

plt.grid(alpha=0.2)

plt.show()

# 🔹 3. Yearly Returns

plt.figure(figsize=(6,5), facecolor='#0D1117')

ax = plt.gca()

ax.set_facecolor('#0D1117')

yearly = df['Close'].resample('YE').last().pct_change().dropna() * 100

colors_yr = [COLORS['green'] if r > 0 else COLORS['red'] for r in yearly]

plt.bar([str(d.year) for d in yearly.index], yearly.values, color=colors_yr, alpha=0.85)

plt.axhline(0, color='white', lw=0.8)

plt.title('Yearly Returns (%)', color='white')

plt.xticks(rotation=45)

plt.grid(alpha=0.2, axis='y')

plt.show()

# 📊 Print Summary

print("\n📊 Yearly Return Summary:")

print(yearly.to_string())

📊 Yearly Return Summary:

Date

2015-12-31 34.471083

2016-12-31 123.831137

2017-12-31 1368.897898

2018-12-31 -73.561779

2019-12-31 92.203443

2020-12-31 303.160090

2021-12-31 59.667924

2022-12-31 -64.265242

2023-12-31 155.417419

2024-12-31 121.054747

2025-12-31 -8.954148

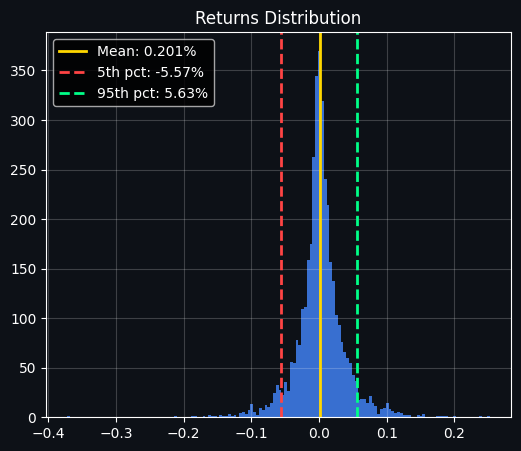

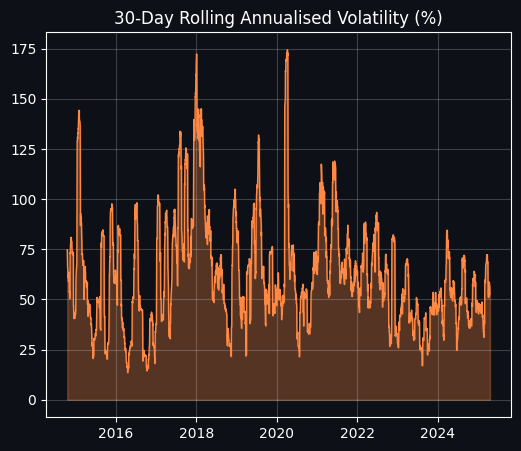

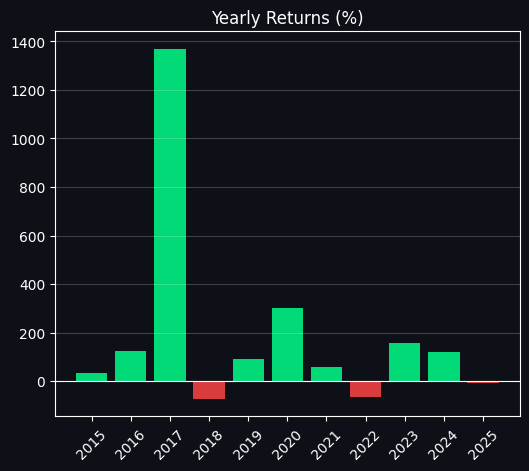

Freq: YE-DECThe cell’s goal is to give a compact, visual and numeric summary of the asset’s daily returns and how they evolve over time: a histogram to show the distribution of single-day returns, a rolling volatility series to show how risk has changed through history, and a year-by-year bar chart with a printed table of the exact annual returns.

First, the daily returns histogram shows how most days cluster tightly around zero while a minority of days produce large moves. A vertical yellow line marks the sample mean daily return, and dashed red and green lines mark the 5th and 95th percentiles respectively; those percentile lines bound the central 90% of single-day outcomes. Because the histogram is tall and narrow around zero but still has visibly long tails, the distribution looks leptokurtic: most days are small, but the tails (large moves) matter. The numeric annotations in the legend (mean ≈ 0.201%, 5th pct ≈ −5.57%, 95th pct ≈ +5.63%) make those summary points explicit: the average daily return is small, yet roughly one day in ten moves more than about 5.6% in one direction or the other.
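The visual impression of heavy tails can be backed by numbers. The sketch below runs on simulated Student-t draws standing in for `daily_returns` (an assumption made so the example is self-contained); applied to the real series, the same three lines would give the notebook's own figures.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
# Stand-in for daily_returns: heavy-tailed Student-t draws, not BTC data
returns = pd.Series(rng.standard_t(df=3, size=5000) * 0.02)

p05, p95 = returns.quantile([0.05, 0.95])
excess_kurtosis = returns.kurt()   # pandas reports excess kurtosis (normal = 0)
share_outside = ((returns < p05) | (returns > p95)).mean()
```

A clearly positive excess kurtosis is the numerical counterpart of the tall-and-narrow histogram with long tails, and `share_outside` confirms that about one observation in ten falls beyond the two percentile lines.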

Next, the rolling volatility plot translates short-term variability into an annualized percentage by taking a 30-day rolling standard deviation and scaling it by the square root of 365, the number of days in a year (Bitcoin trades every calendar day, so 365 is used rather than the 252 trading days common for equities). This produces a time series of “30-day annualized volatility” expressed in percent. The line and its filled area highlight periods of calm versus stress: you can see large spikes in volatility around major market events (the chart shows pronounced peaks in late 2017 and around 2020), and more moderate levels in quieter years. Because the plot uses a fairly short 30-day window, the volatility reacts quickly to sudden market moves, which is why spikes are sharp rather than smoothed out over long periods.
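The annualisation step can be wrapped in a small helper and checked on a series whose answer is known in advance. The function name `rolling_annualised_vol` and the alternating toy returns are assumptions made for this illustration.

```python
import numpy as np
import pandas as pd

def rolling_annualised_vol(returns: pd.Series, window: int = 30,
                           periods_per_year: int = 365) -> pd.Series:
    """Rolling sample standard deviation of daily returns, scaled to a
    yearly horizon and expressed in percent."""
    return returns.rolling(window).std() * np.sqrt(periods_per_year) * 100

# Toy input: alternating +1% / -1% daily returns
returns = pd.Series([0.01, -0.01] * 30)
vol = rolling_annualised_vol(returns)

# Any 30-day window holds fifteen +1% and fifteen -1% days, so the sample
# standard deviation is 0.01 * sqrt(30 / 29) before scaling.
expected_last = 0.01 * np.sqrt(30 / 29) * np.sqrt(365) * 100
```

A steady ±1% daily swing therefore corresponds to roughly 19% annualised volatility, which gives a sense of scale for the much larger readings on the Bitcoin chart.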

Finally, the yearly returns bar chart collapses the series to calendar-year percent returns by taking each year’s last available close and computing its percent change from the previous year’s end. Bars are colored green for positive years and red for negative years so you can immediately spot winners and losers. The printed table lists the exact yearly percentages; it confirms the dramatic year-to-year variability: very large positive returns in 2017 and 2020, deep negative returns in 2018 and 2022, and gains in every other full year. A small caveat: the final year shown (2025) reflects the last available data point in that year rather than a full calendar year, because the dataset stops before Dec 31, so its value is a partial-year return and should be interpreted accordingly.
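The yearly calculation and the partial-year caveat can be sketched as follows. Two choices here are assumptions made for the example: the linearly rising toy prices, and grouping by `index.year` in place of `resample('YE')`, which gives the same year-end values without depending on the resample alias available in a given pandas version.

```python
import pandas as pd

idx = pd.date_range("2023-01-01", "2025-04-19", freq="D")
close = pd.Series(range(100, 100 + len(idx)), index=idx, dtype="float64")

# Last close of each calendar year, then year-over-year percent change
year_end = close.groupby(close.index.year).last()
yearly_pct = year_end.pct_change().dropna() * 100

# Flag the final entry when the data stops before 31 December
last_date = close.index[-1]
is_partial = (last_date.month, last_date.day) != (12, 31)
```

When `is_partial` is true, the last value in `yearly_pct` is a year-to-date figure and is best labelled as such in the chart and table.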

Section 3 — Technical Indicators

A set of standard technical measures is computed to capture trend, momentum, and volatility characteristics that serve as inputs to the models and rule-based signals.

Simple moving averages with windows of 20, 50, 100, and 200 periods. These are trend-following averages used to detect medium- and long-term direction; crossings between a shorter and a longer SMA (classically the 50 and the 200) generate the bullish and bearish signals known as the golden cross and the death cross.

Exponential moving averages with spans of 12, 26, and 50 periods. Because they weight recent prices more heavily than simple moving averages, they respond faster to changes and help identify shorter-term trend shifts.

The MACD series, which is a momentum indicator derived from the difference between two exponential moving averages, together with its signal line and histogram. It is used to judge the direction and strength of momentum.

The 14-period Relative Strength Index. This oscillator highlights potential overbought conditions when it rises above seventy and oversold conditions when it falls below thirty.

Bollinger Bands, constructed around a moving average with an upper and lower band. They provide a measure of how far price has deviated from its mean and the current volatility regime through band width.

Average True Range, a volatility measure that quantifies the typical daily trading range and is commonly used for position sizing and placing stop-loss levels.
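As an example of how these indicators turn into rule-based signals, the sketch below flags moving-average crossovers using plain pandas. The helper name `sma_cross_signals` and the toy prices are assumptions; the notebook itself computes its averages with the ta library.

```python
import pandas as pd

def sma_cross_signals(close: pd.Series, fast: int = 50, slow: int = 200) -> pd.Series:
    """+1 on the bar where the fast SMA crosses above the slow SMA
    (golden cross), -1 where it crosses below (death cross), else 0."""
    fast_sma = close.rolling(fast).mean()
    slow_sma = close.rolling(slow).mean()
    above = (fast_sma > slow_sma).astype(int)
    # Only count a cross when both today's and yesterday's slow SMA exist,
    # so the warm-up period cannot produce a spurious signal.
    valid = slow_sma.notna() & slow_sma.shift(1).notna()
    return above.diff().where(valid, 0).fillna(0).astype(int)

# Short windows so the toy series is long enough: a dip, then a recovery
prices = pd.Series([10, 9, 8, 7, 6, 7, 8, 9, 10, 11], dtype="float64")
signals = sma_cross_signals(prices, fast=2, slow=4)
```

On the toy series the fast average overtakes the slow one once, during the recovery, so a single +1 appears at that bar and every other entry is 0.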

# ── 3.1 Compute all indicators ──

df2 = df.copy()

close = df2['Close']

# ── Moving Averages ──

for w in [20, 50, 100, 200]:

    df2[f'SMA_{w}'] = SMAIndicator(close, window=w).sma_indicator()

for w in [12, 26, 50]:

    df2[f'EMA_{w}'] = EMAIndicator(close, window=w).ema_indicator()

# ── MACD ──

macd_obj = MACD(close)

df2['MACD'] = macd_obj.macd()

df2['MACD_Signal'] = macd_obj.macd_signal()

df2['MACD_Hist'] = macd_obj.macd_diff()

# ── RSI ──

df2['RSI_14'] = RSIIndicator(close, window=14).rsi()

# ── Bollinger Bands ──

bb = BollingerBands(close, window=20, window_dev=2)

df2['BB_High'] = bb.bollinger_hband()

df2['BB_Low'] = bb.bollinger_lband()

df2['BB_Mid'] = bb.bollinger_mavg()

df2['BB_Width']= (df2['BB_High'] - df2['BB_Low']) / df2['BB_Mid'] * 100

# ── ATR (Average True Range) ──

df2['ATR_14'] = AverageTrueRange(df2['High'], df2['Low'], close, window=14).average_true_range()

print("✅ Technical indicators computed!")

print(f" Total features: {df2.shape[1]}")

print(df2[['SMA_20','SMA_50','SMA_200','EMA_12','MACD','RSI_14','BB_Width','ATR_14']].tail(5).to_string())

✅ Technical indicators computed!

Total features: 21

Date SMA_20 SMA_50 SMA_200 EMA_12 MACD RSI_14 BB_Width ATR_14

2025-04-15 82681.387891 84282.645937 87549.901543 82911.391706 -512.441181 50.332058 12.171769 3847.170646

2025-04-16 82524.226172 84188.599844 87640.632637 83084.080241 -384.940371 51.011644 11.249127 3738.634462

2025-04-17 82551.356250 84199.574375 87736.934863 83362.798666 -211.905605 52.659391 11.363010 3592.969165

2025-04-18 82644.017187 84194.505937 87842.541387 83530.184208 -109.416390 51.692738 11.526097 3393.197372

2025-04-19 82780.461719 84208.314062 87963.673418 83766.065724 20.997462 52.972727 11.777114 3239.700573

Here we compute a suite of widely used technical indicators and append them to the price table so that each row (date) carries both the raw OHLCV data and the smoothed, momentum, and volatility signals derived from that price series. The original price table is copied to a working DataFrame and the close price is used as the primary input for most indicators. Simple moving averages of several lengths are calculated to capture short-, medium- and long-term smoothing; exponential moving averages are also computed, which weight recent prices more heavily and therefore react faster to changes. The MACD family of values (the MACD line, its signal line, and the histogram) is produced next; these are the difference between a fast and a slow EMA, a smoothed version of that difference, and the gap between the two, and together they serve as a compact measure of momentum and its acceleration or deceleration. A 14-period RSI is computed to give a momentum oscillator bounded between 0 and 100 that signals overbought or oversold tendencies near its extremes. Bollinger Bands are created from a 20-period moving average with two standard deviations, and the width of those bands is converted into a percent-of-middle value so that it expresses relative volatility independent of the absolute price level. Finally, the Average True Range over 14 periods is calculated to quantify average daily price movement in the same units as price, which is useful later for volatility-based features or position sizing.
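To show what the MACD and band-width columns contain, here is a plain-pandas sketch of the same formulas. It is an approximation under stated assumptions: the helper names are hypothetical, the 12/26/9 spans are the usual MACD defaults, and details such as warm-up handling and the standard-deviation convention may differ from the ta library, so early rows need not match its output exactly.

```python
import pandas as pd

def macd_frame(close: pd.Series, fast: int = 12, slow: int = 26,
               signal: int = 9) -> pd.DataFrame:
    """MACD line, signal line, and histogram from two EMAs."""
    ema_fast = close.ewm(span=fast, adjust=False).mean()
    ema_slow = close.ewm(span=slow, adjust=False).mean()
    macd = ema_fast - ema_slow
    macd_signal = macd.ewm(span=signal, adjust=False).mean()
    return pd.DataFrame({"MACD": macd, "MACD_Signal": macd_signal,
                         "MACD_Hist": macd - macd_signal})

def bollinger_width_pct(close: pd.Series, window: int = 20,
                        n_std: float = 2.0) -> pd.Series:
    """(upper band - lower band) / middle band, in percent."""
    mid = close.rolling(window).mean()
    std = close.rolling(window).std(ddof=0)   # population std (assumed)
    return (2 * n_std * std) / mid * 100

close = pd.Series([100.0 + i for i in range(60)])   # steadily rising toy prices
m = macd_frame(close)
bw = bollinger_width_pct(close)
```

On a steadily rising series the fast EMA stays above the slow one, so the MACD line is positive, and the band width is undefined until a full 20-bar window is available.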

The printed confirmation and the summary line show that the new DataFrame now contains 21 columns, meaning the indicator calculations have expanded the feature set beyond the raw market fields. The five-row excerpt that follows displays the most recent values for several of those indicators. Reading that output, the 20-day moving average sits below the 50- and 200-day averages, so prices over the most recent weeks have been running below their longer-term averages. The MACD values are negative for most of the shown days, rise toward zero, and turn slightly positive on the last date, indicating that the fast EMA had been below the slow EMA but momentum was shifting upward by the final row. The RSI values clustered around 50 indicate neither an overbought nor an oversold condition; the Bollinger Band width expressed as a percent sits around 11–12, reflecting a moderate level of relative volatility; and the ATR numbers are large in absolute terms because they are measured in price units (so for a high-priced asset the ATR naturally reports large values). These computed columns are now ready to be used as inputs for subsequent feature engineering, selection, or modeling steps.

# ── 3.2 Price + Moving Averages Chart ──

recent = df2['2020':].copy()

fig, axes = plt.subplots(4, 1, figsize=(16, 18), facecolor='#0D1117',

gridspec_kw={'height_ratios': [3, 1, 1, 1]})

# ── Price + MAs ──

ax1 = axes[0]

ax1.set_facecolor('#0D1117')

ax1.plot(recent.index, recent['Close'], color=COLORS['yellow'], lw=1.2, label='Close', alpha=0.9)

ax1.plot(recent.index, recent['SMA_20'], color=COLORS['blue'], lw=1.2, label='SMA 20', alpha=0.85)

ax1.plot(recent.index, recent['SMA_50'], color=COLORS['orange'], lw=1.2, label='SMA 50', alpha=0.85)

ax1.plot(recent.index, recent['SMA_200'], color=COLORS['purple'], lw=1.5, label='SMA 200', alpha=0.85)

ax1.fill_between(recent.index, recent['BB_High'], recent['BB_Low'],

alpha=0.08, color=COLORS['blue'], label='Bollinger Bands')

ax1.plot(recent.index, recent['BB_High'], color=COLORS['blue'], lw=0.6, linestyle='--', alpha=0.5)

ax1.plot(recent.index, recent['BB_Low'], color=COLORS['blue'], lw=0.6, linestyle='--', alpha=0.5)

ax1.set_title('Bitcoin — Price & Technical Indicators (2020–2025)', color='white', fontsize=13)

ax1.set_ylabel('Price (USD)', color='white')

ax1.tick_params(colors='white')

ax1.legend(facecolor='#1A1A2E', labelcolor='white', ncol=4, fontsize=9)

ax1.grid(alpha=0.15)

# ── MACD ──

ax2 = axes[1]

ax2.set_facecolor('#0D1117')

ax2.plot(recent.index, recent['MACD'], color=COLORS['blue'], lw=1, label='MACD')

ax2.plot(recent.index, recent['MACD_Signal'], color=COLORS['orange'], lw=1, label='Signal')

ax2.bar(recent.index, recent['MACD_Hist'],

color=[COLORS['green'] if v > 0 else COLORS['red'] for v in recent['MACD_Hist']],

alpha=0.6, width=1)

ax2.axhline(0, color='white', lw=0.5)

ax2.set_ylabel('MACD', color='white')

ax2.tick_params(colors='white')

ax2.legend(facecolor='#1A1A2E', labelcolor='white', fontsize=9)

ax2.grid(alpha=0.15)

# ── RSI ──

ax3 = axes[2]

ax3.set_facecolor('#0D1117')

ax3.plot(recent.index, recent['RSI_14'], color=COLORS['purple'], lw=1.2)

ax3.axhline(70, color=COLORS['red'], lw=1, linestyle='--', label='Overbought (70)')

ax3.axhline(30, color=COLORS['green'], lw=1, linestyle='--', label='Oversold (30)')

ax3.axhline(50, color='white', lw=0.5, linestyle=':')

ax3.fill_between(recent.index, recent['RSI_14'], 70,

where=recent['RSI_14'] > 70, alpha=0.3, color=COLORS['red'])

ax3.fill_between(recent.index, recent['RSI_14'], 30,

where=recent['RSI_14'] < 30, alpha=0.3, color=COLORS['green'])

ax3.set_ylabel('RSI (14)', color='white')

ax3.set_ylim(0, 100)

ax3.tick_params(colors='white')

ax3.legend(facecolor='#1A1A2E', labelcolor='white', fontsize=9)

ax3.grid(alpha=0.15)

# ── BB Width (Volatility Squeeze) ──

ax4 = axes[3]

ax4.set_facecolor('#0D1117')

ax4.plot(recent.index, recent['BB_Width'], color=COLORS['orange'], lw=1.2)

ax4.fill_between(recent.index, recent['BB_Width'], alpha=0.2, color=COLORS['orange'])

ax4.set_ylabel('BB Width (%)', color='white')

ax4.set_xlabel('Date', color='white')

ax4.tick_params(colors='white')

ax4.set_title('Bollinger Band Width — Volatility Squeeze Indicator', color='white', fontsize=10)

ax4.grid(alpha=0.15)

for ax in axes:

    ax.spines[:].set_color('#333333')

plt.tight_layout(pad=1.5)

plt.savefig('technical_indicators.png', dpi=150, bbox_inches='tight', facecolor='#0D1117')

plt.show()

To visualize recent Bitcoin price behavior and several commonly used technical indicators, the cell constructs a four-row figure that covers price with moving averages and Bollinger bands, the MACD oscillator, the 14-day RSI, and the Bollinger band width (a simple volatility squeeze measure). The plotted time window is restricted to data from 2020 onward so the panels focus on the modern multi-year rally and corrections.

The script first slices the dataset to the recent period and creates a tall figure with four stacked subplots. The top subplot plots the daily closing price together with short and medium simple moving averages (20 and 50 days) and a long 200-day moving average. The Bollinger bands are drawn as a light-filled band between the upper and lower band values, with dashed outlines for the band edges. Because moving averages smooth price, the SMA20 follows the price closely, SMA50 is smoother, and SMA200 is much slower — you can see the 200-day average lagging major trends and acting like a long-term trend reference. When price moves strongly up or down, the Bollinger bands widen; when the market quiets the bands contract.

The second subplot shows the MACD line, its signal line, and a bar histogram for the MACD difference. Positive histogram bars are colored green and negative bars red, which visually emphasizes momentum shifts: sustained positive histogram values correspond to rising momentum and tend to appear during strong rallies, while deep negative bars mark strong sell-offs. A horizontal zero line makes it easy to spot crossovers that traders often interpret as buy or sell signals.

The third subplot displays the 14-day Relative Strength Index on a 0–100 scale with horizontal markers at 70 and 30 for overbought and oversold thresholds, and a faint 50 midline. Portions of the RSI above 70 are lightly shaded red and portions below 30 are shaded green, highlighting periods when the oscillator indicates stretched conditions. The RSI oscillates frequently around the midline; sustained excursions toward 70 coincide with price peaks while dips toward 30 line up with deeper corrections.

The bottom subplot plots the Bollinger Band width, a normalized measure of band separation that acts as a volatility indicator or “squeeze” metric. Spikes in band width correspond to bursts of volatility — large price moves produce clear peaks in this panel — while long, flat troughs show low-volatility consolidation periods where a breakout might be expected.

Cosmetic choices such as a dark background, colored lines for each indicator, faint gridlines, and muted axis spine colors improve legibility and make the multi-panel layout easier to read. The figure is saved to a PNG file and displayed. The notebook output reports a single 1600 by 1800 pixel figure containing four axes, which is the on-screen size of the 16 by 18 inch canvas; the PNG is written at 150 dpi and is correspondingly larger. The panels show the described relationships clearly: price and MAs in the top panel, MACD momentum in the second, RSI extremes in the third, and volatility spikes in the fourth.

Section 4: Feature construction

Thoughtful feature design is central to predictive performance. In this section we create a set of inputs meant to capture price memory, dispersion, trend, momentum, and volume dynamics.

Lagged predictors: prior one-day returns shifted by 1, 2, 3, 5, 10, and 20 days to provide short- and medium-term memory.

Moving-window statistics: rolling means and standard deviations of one-day returns over 7-, 14-, 30-, and 60-day windows to capture local trend and volatility.

Momentum measures: rate of change (multi-horizon returns) and related momentum metrics, such as the slopes of the MACD histogram and the RSI, to quantify directional strength.

Volume-informed features: a 20-day volume average, the ratio of current volume to that average (to expose volume spikes), and a short rolling sum of return times volume. On-Balance Volume itself is added later, in Section 5.

Target label: a binary outcome indicating whether the following trading day’s close is higher than today’s. We encode an up-day as one and a down-day as zero.

# ── 4.1 Feature Engineering ──

feat = df2.copy()

# Daily returns

feat['Return_1d'] = feat['Close'].pct_change(1)

feat['Return_3d'] = feat['Close'].pct_change(3)

feat['Return_7d'] = feat['Close'].pct_change(7)

feat['Return_14d'] = feat['Close'].pct_change(14)

feat['Return_30d'] = feat['Close'].pct_change(30)

# Lag features (one-day returns shifted back by `lag` days)

for lag in [1, 2, 3, 5, 10, 20]:

    feat[f'Lag_{lag}'] = feat['Return_1d'].shift(lag)

# Rolling statistics (mean & std of returns)

for win in [7, 14, 30, 60]:

    feat[f'Roll_Mean_{win}'] = feat['Return_1d'].rolling(win).mean()

    feat[f'Roll_Std_{win}'] = feat['Return_1d'].rolling(win).std()

# Price position within Bollinger Bands (0 = at lower, 1 = at upper)

feat['BB_Position'] = (feat['Close'] - feat['BB_Low']) / (feat['BB_High'] - feat['BB_Low'] + 1e-9)

# MACD histogram momentum

feat['MACD_Slope'] = feat['MACD_Hist'].diff()

# Volume indicators

feat['Volume_MA20'] = feat['Volume'].rolling(20).mean()

feat['Volume_Ratio'] = feat['Volume'] / feat['Volume_MA20']

feat['Price_x_Volume'] = (feat['Close'].pct_change() * feat['Volume']).rolling(5).sum()

# RSI slope

feat['RSI_Slope'] = feat['RSI_14'].diff(3)

# Distance from moving averages (normalised)

feat['Dist_SMA20'] = (feat['Close'] - feat['SMA_20']) / feat['SMA_20'] * 100

feat['Dist_SMA50'] = (feat['Close'] - feat['SMA_50']) / feat['SMA_50'] * 100

feat['Dist_SMA200'] = (feat['Close'] - feat['SMA_200']) / feat['SMA_200'] * 100

# ── Target: 1 if tomorrow's close > today's close, else 0 ──

feat['Target'] = (feat['Close'].shift(-1) > feat['Close']).astype(int)

# Drop NaN rows

feat.dropna(inplace=True)

print(f"✅ Feature engineering complete!")

print(f" Dataset shape : {feat.shape}")

print(f" Target balance: {feat['Target'].value_counts().to_dict()} (1=Up, 0=Down)")

print(f" Up days : {feat['Target'].mean()*100:.1f}%")

print(f"\nFeature preview:")

feature_cols = [c for c in feat.columns if c not in

['Open','High','Low','Close','Volume','Target']]

print(f" Total features: {len(feature_cols)}")

print(" First 10:", feature_cols[:10])

✅ Feature engineering complete!

Dataset shape : (3669, 50)

Target balance: {1: 1941, 0: 1728} (1=Up, 0=Down)

Up days : 52.9%

Feature preview:

Total features: 44

First 10: ['SMA_20', 'SMA_50', 'SMA_100', 'SMA_200', 'EMA_12', 'EMA_26', 'EMA_50', 'MACD', 'MACD_Signal', 'MACD_Hist']

The cell takes the indicator-rich price table and turns it into a modeling-ready feature matrix by adding a range of return, momentum, volatility, volume and distance-from-moving-average features, then labels the next-day direction for supervised learning. It starts from a copy of the precomputed indicator DataFrame so the original remains unchanged, then computes multiple horizon returns to capture short- and medium-term price moves (one, three, seven, fourteen and thirty days). Those horizon returns provide direct measures of recent performance that downstream models can use as predictors rather than relying only on raw prices.

To expose short-term structure and persistence, the workflow creates lagged return features that shift the one-day return a few periods back, and rolling-window statistics—means and standard deviations over 7, 14, 30 and 60 day windows—that summarize local trend and volatility. The position inside the Bollinger Bands is converted into a normalized score between lower and upper band so the model sees whether price is near the top or bottom of the band rather than raw band levels; a tiny stabilizer is used when the band width is extremely small to avoid numerical problems. Momentum change is represented by the MACD histogram slope (its difference), and a small RSI slope captures whether momentum strength is accelerating or decelerating.

Volume-based signals are created to weight price moves by trading activity: a 20-day moving average of volume and a volume ratio flag relative to that average help detect volume spikes, while a short rolling sum of price change times volume summarizes recent signed flow. Distances from common moving averages (20, 50 and 200) are expressed as percentages so those features are scale-free and comparable across time. These engineered columns collectively transform raw market data into a richer set of predictors that emphasize dynamics rather than static price levels.

Finally, a straightforward supervised label is produced: a positive class if the following day’s close exceeds today’s close and a negative class otherwise, implemented by shifting the close series back one row so that each row is compared with the next day’s close. Rows missing the history required by rolling windows or lags are dropped, leaving a clean table for modeling. One caveat: the final row has no next-day close, so its comparison evaluates to False and the row is labeled 0 rather than being dropped. The saved output shows the result: a final dataset with 3,669 rows and 50 columns, of which 44 are feature columns after excluding raw price, volume and the target. The target distribution is slightly tilted toward up days, with 1,941 up labels versus 1,728 down labels (about 52.9% up), and the printed first ten feature names illustrate that many of the original technical indicators (20/50/100/200 simple moving averages, EMAs and MACD components) survive in the feature set alongside the newly engineered signals.
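One detail of the shift-based label is worth checking on a toy series: the last row has no next-day close, and a comparison against a missing value evaluates to False, so that row receives a 0 instead of being dropped. The sketch below is an illustration only; the four prices and the `masked` variant are assumptions for the example, not part of the notebook.

```python
import pandas as pd

# Four hypothetical closing prices
close = pd.Series([100.0, 101.0, 99.0, 102.0], name="Close")
next_close = close.shift(-1)              # last entry is NaN

# Same construction as the notebook's Target column
naive = (next_close > close).astype(int)  # NaN > 102.0 is False, so the last label is 0

# One possible alternative: blank out rows with no next-day close so dropna() removes them
masked = naive.where(next_close.notna())

print(pd.DataFrame({"Close": close, "naive": naive, "masked": masked}))
```

With thousands of rows a single mislabeled observation has a negligible effect on training, but masking it keeps the label definition exact.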

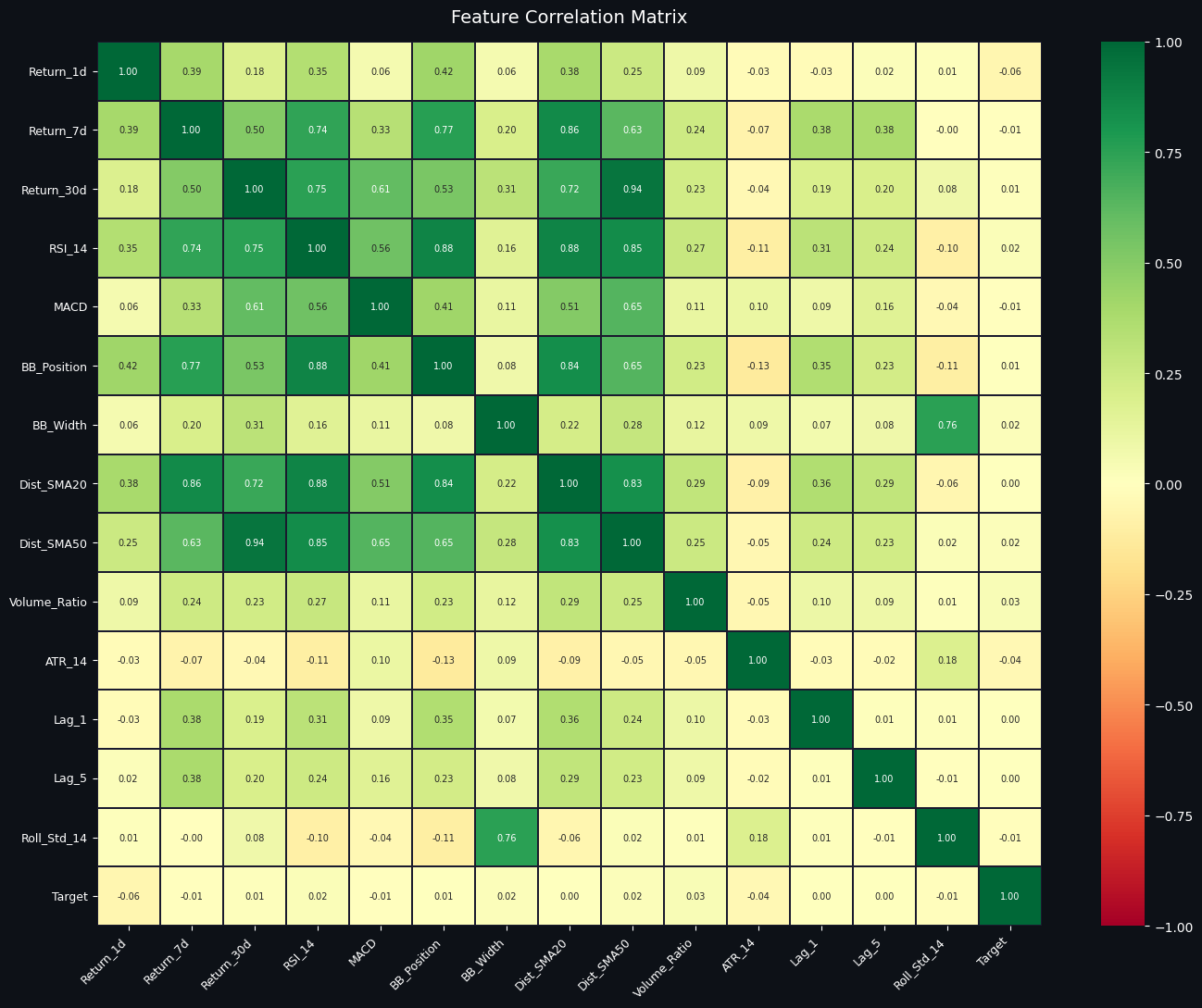

# ── 4.2 Feature Correlation Heatmap ──

ml_cols = ['Return_1d','Return_7d','Return_30d','RSI_14','MACD',

'BB_Position','BB_Width','Dist_SMA20','Dist_SMA50',

'Volume_Ratio','ATR_14','Lag_1','Lag_5','Roll_Std_14']

corr = feat[ml_cols + ['Target']].corr()

fig, ax = plt.subplots(figsize=(14, 11), facecolor='#0D1117')

ax.set_facecolor('#0D1117')

mask = np.triu(np.ones_like(corr, dtype=bool), k=1)

sns.heatmap(corr, annot=True, fmt='.2f', cmap='RdYlGn',

center=0, vmin=-1, vmax=1,

linewidths=0.3, linecolor='#1A1A2E',

annot_kws={'size': 7}, ax=ax)

ax.set_title('Feature Correlation Matrix', color='white', fontsize=14, pad=15)

ax.tick_params(colors='white', labelsize=9)

plt.xticks(rotation=45, ha='right')

plt.yticks(rotation=0)

plt.tight_layout()

plt.savefig('correlation_heatmap.png', dpi=150, bbox_inches='tight', facecolor='#0D1117')

plt.show()

The purpose here is to inspect pairwise linear relationships between a hand-picked set of candidate features and the prediction target, so you can quickly spot redundancy, obvious predictors, and features that behave similarly.

A list of machine-learning candidate columns is assembled to include different horizon returns, momentum indicators (RSI and MACD), Bollinger-derived measures (position and width), distances from short and medium moving averages, a simple volume ratio, ATR as a volatility proxy, short-lag returns, and a 14-day rolling standard deviation. Those columns plus the target are fed into a standard Pearson correlation routine to produce a symmetric correlation matrix of values between -1 and +1. A plotting canvas with a dark background is prepared and, although a triangular mask is created (commonly used to hide the duplicate upper triangle of a symmetric matrix), the heatmap call renders the full matrix with annotations showing each numeric correlation to two decimal places.

The visual uses a red-to-green diverging palette centered at zero so that positive correlations appear green, negative correlations appear reddish, and near-zero correlations are pale. Gridlines and small-font annotations make it easy to read individual coefficients, while rotated x-axis labels keep long feature names legible. The figure is saved as a PNG with the same dark background to preserve the visual style for reports.

Looking at the saved image, several patterns stand out. Multi-day returns and momentum/distance features tend to move together: longer-horizon returns correlate positively with RSI and with distance-from-SMA features, which reflects that momentum and being above a moving average both capture similar trending behavior. Bollinger position is also strongly aligned with RSI and distance-to-SMA, while Bollinger width shows a strong positive relationship with the rolling standard deviation, which makes sense because both measure recent volatility. ATR exhibits only weak or slightly negative correlations with the return-related features here, indicating it captures a different aspect of price dynamics. Short-lag returns are modestly correlated with near-term returns but less so with longer-horizon indicators. Crucially, the target column shows very small correlation coefficients with individual features (values close to zero), which signals that no single feature has a strong linear relationship with the target and suggests the problem will likely benefit from multivariate or non-linear modeling rather than simple linear thresholding.
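Reading redundancy off a heatmap by eye gets harder as the feature count grows. A minimal sketch of doing the same thing programmatically is shown below, assuming a hypothetical helper `redundant_pairs` and an arbitrary 0.8 cutoff; the demo columns are synthetic stand-ins, not the notebook's features.

```python
import numpy as np
import pandas as pd


def redundant_pairs(frame: pd.DataFrame, threshold: float = 0.8) -> pd.Series:
    """List feature pairs whose absolute Pearson correlation meets the threshold."""
    corr = frame.corr()
    # Keep only the strict upper triangle so each pair appears once
    upper = np.triu(np.ones(corr.shape, dtype=bool), k=1)
    pairs = corr.where(upper).stack()
    pairs = pairs[pairs.abs() >= threshold]  # also discards any remaining NaN entries
    return pairs.sort_values(key=np.abs, ascending=False)


# Synthetic demo: two near-duplicate columns plus one unrelated column
rng = np.random.default_rng(1)
base = rng.normal(size=500)
demo = pd.DataFrame({
    "vol_a": base,
    "vol_b": base + rng.normal(scale=0.1, size=500),
    "noise": rng.normal(size=500),
})
pairs = redundant_pairs(demo)
print(pairs)
```

Applied to the notebook's feature table, a call such as `redundant_pairs(feat[ml_cols])` would be expected to surface pairs like the band width and the rolling standard deviation that the heatmap discussion singles out.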

The saved file named correlation_heatmap.png contains this annotated matrix at a high resolution and matches what is displayed inline: a compact, annotated view that highlights which features are redundant and which might provide complementary information for downstream modeling.

Section 5 — Advanced Feature Engineering (50+ Features)

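Rationale: The notebook describes the earlier feature set as roughly thirty inputs (Section 4's printout counts 44 feature columns once the previously computed indicators are included). Expanding the feature set introduces a wider variety of signals covering trend, momentum, volume, volatility, and statistical properties. This supplies the learning algorithm with more diverse information and can improve predictive performance, although many of the added columns are correlated with one another, which is why a selection step follows later.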

Feature additions by theme:

Trend strength: the average directional index (ADX) together with the positive and negative directional indicators. These measure whether a directional move is persistent and quantify how strong the prevailing trend is.

Momentum: stochastic oscillator values, Williams %R, and rate-of-change measures. These capture the speed and direction of price momentum and help to identify overbought or oversold conditions.

Volume signals: on-balance volume (OBV), a normalized OBV trend measured against its 20-day average, and a flag for unusually large volume spikes. These features aim to capture whether trading volume supports price moves and highlight concentrated buying or selling activity.

Volatility measures: Keltner channel width and the average true range expressed as a percentage of price. These normalize the size of price movement so that typical daily ranges are comparable across price levels.

Distributional and temporal statistics: rolling skewness, rolling kurtosis, and rolling lag-1 autocorrelation. These summarize the shape of recent return distributions and the extent of serial dependence.

Market regime flags: a bull or bear label taken from the 20-day versus 50-day moving average relationship, plus flags for golden-cross and death-cross events on that same pair. These provide coarse contextual information about the prevailing market environment.

Each of these groups contributes complementary information: trend and regime features give context, momentum and volume features show immediate pressure and participation, volatility terms scale moves, and statistical descriptors reveal changes in return behavior. Combining them yields the 50-plus engineered predictors used later for selection, balancing, and model training.

# ─────────────────────────────────────────────────────────────

# SECTION 5 — ADVANCED FEATURE ENGINEERING

# Builds on Section 4's 'feat' dataframe — adds 25+ more features

# ─────────────────────────────────────────────────────────────

adv = feat.copy()

c = adv['Close']; h = adv['High']; lo = adv['Low']; v = adv['Volume']

# ── Trend Strength: ADX ──

# ADX > 25 = strong trend, < 20 = weak/sideways

adx_obj = ADXIndicator(h, lo, c, window=14)

adv['ADX'] = adx_obj.adx()

adv['ADX_Plus'] = adx_obj.adx_pos() # Bullish directional movement

adv['ADX_Minus'] = adx_obj.adx_neg() # Bearish directional movement

# ── Momentum: Stochastic Oscillator ──

# %K > 80 = overbought, %K < 20 = oversold

stoch = StochasticOscillator(h, lo, c, window=14, smooth_window=3)

adv['STOCH_K'] = stoch.stoch()

adv['STOCH_D'] = stoch.stoch_signal() # Smoothed %K

# ── Momentum: Williams %R ──

# -20 = overbought, -80 = oversold

adv['WILLIAMS'] = WilliamsRIndicator(h, lo, c, lbp=14).williams_r()

# ── Momentum: Rate of Change (ROC) ──

# Shows percentage price change over n periods

for p in [3, 5, 10, 20]:

    adv[f'ROC_{p}'] = c.pct_change(p) * 100

# ── RSI at multiple timeframes ──

adv['RSI_7'] = RSIIndicator(c, 7).rsi()

adv['RSI_21'] = RSIIndicator(c, 21).rsi()

adv['RSI_Slope'] = adv['RSI_14'].diff(3) # RSI direction

# ── Volume: On-Balance Volume ──

# Rising OBV = volume supports price move (smart money buying)

adv['OBV'] = OnBalanceVolumeIndicator(c, v).on_balance_volume()

adv['OBV_MA20'] = adv['OBV'].rolling(20).mean()

adv['OBV_Trend'] = (adv['OBV'] - adv['OBV_MA20']) / (adv['OBV_MA20'].abs() + 1)

adv['Vol_Spike'] = (adv['Volume_Ratio'] > 2.0).astype(int) # Unusual volume

# ── Volatility: Keltner Channel ──

# Similar to Bollinger Bands but uses ATR instead of std deviation

kc = KeltnerChannel(h, lo, c, window=20)

adv['KC_Width'] = (kc.keltner_channel_hband() - kc.keltner_channel_lband()) / c * 100

adv['ATR_Ratio'] = adv['ATR_14'] / c * 100 # ATR as % of price

# ── Statistical Features ──

# Skewness: positive = tail on right (big gains possible)

# Kurtosis: fat tails = extreme moves more likely

ret = c.pct_change()

for w in [7, 14, 30]:

    adv[f'Skew_{w}'] = ret.rolling(w).skew()

    adv[f'Kurt_{w}'] = ret.rolling(w).kurt()

    adv[f'AutoCorr_{w}'] = ret.rolling(w).apply(

        lambda x: x.autocorr() if len(x) > 3 else 0, raw=False)

# ── Market Regime Detection ──

# Classify market as Bull (SMA20 > SMA50) or Bear (SMA20 < SMA50)

adv['Regime'] = np.where(adv['SMA_20'] > adv['SMA_50'], 1, -1)

adv['Golden_Cross'] = ((adv['SMA_20'] > adv['SMA_50']) &

(adv['SMA_20'].shift(1) <= adv['SMA_50'].shift(1))).astype(int)

adv['Death_Cross'] = ((adv['SMA_20'] < adv['SMA_50']) &

(adv['SMA_20'].shift(1) >= adv['SMA_50'].shift(1))).astype(int)

# ── MACD slope (acceleration) ──

adv['MACD_Accel'] = adv['MACD_Hist'].diff(2)

# Drop NaN rows from new indicators

adv.dropna(inplace=True)

print(f"✅ Advanced feature engineering complete!")

print(f" Original features : ~30")

print(f" New total columns : {adv.shape[1]}")

print(f" Rows remaining : {len(adv):,}")

✅ Advanced feature engineering complete!

Original features : ~30

New total columns : 81

Rows remaining : 3,639

The cell extends the existing feature set with a broad collection of technical and statistical indicators designed to capture trend strength, momentum, volume behavior, volatility structure, and market regime. Downstream models can use this information to detect patterns and regime-dependent behavior. It begins by making a working copy of the previously engineered dataframe and giving short variable names for close, high, low, and volume so the indicator calls read more naturally.

Trend strength is measured with the ADX family: the average directional index itself plus its positive and negative directional components. ADX values help the model distinguish strong trending days from sideways markets, while the +DI and −DI indicate whether buyers or sellers are dominating. Momentum is reflected by a trio of oscillators: the stochastic oscillator K and its smoothed D line, Williams %R, and several rate-of-change features computed for short and medium horizons; these quantify overbought/oversold conditions and recent percentage moves. Multiple versions of RSI are also added (short and medium windows) and a simple RSI slope to capture whether relative strength is accelerating or decelerating.
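The stochastic %K and Williams %R are closely related: both locate the close inside the recent high-low range, and with the same lookback they differ only by a constant offset of 100. The sketch below illustrates this with plain pandas on synthetic data; `stoch_and_williams` is a hypothetical helper written for this example and is not the library call used in the notebook.

```python
import numpy as np
import pandas as pd


def stoch_and_williams(high: pd.Series, low: pd.Series, close: pd.Series,
                       window: int = 14, smooth: int = 3) -> pd.DataFrame:
    """Stochastic %K/%D and Williams %R from rolling highs and lows."""
    hh = high.rolling(window).max()              # highest high in the lookback
    ll = low.rolling(window).min()               # lowest low in the lookback
    span = (hh - ll).replace(0, np.nan)          # avoid division by zero on flat windows
    stoch_k = 100 * (close - ll) / span          # 0 = at the low, 100 = at the high
    stoch_d = stoch_k.rolling(smooth).mean()     # smoothed %K
    williams_r = -100 * (hh - close) / span      # -100 = at the low, 0 = at the high
    return pd.DataFrame({"STOCH_K": stoch_k, "STOCH_D": stoch_d, "WILLIAMS": williams_r})


# Synthetic OHLC-like data: highs above and lows below a random-walk close
rng = np.random.default_rng(7)
close = pd.Series(100 + np.cumsum(rng.normal(0, 1, 120)))
high = close + rng.uniform(0.1, 1.0, 120)
low = close - rng.uniform(0.1, 1.0, 120)

osc = stoch_and_williams(high, low, close)
valid = osc.dropna()
print(valid.tail(3).round(2))
```

Since `WILLIAMS` equals `STOCH_K - 100` when both use a 14-period lookback, the two columns are perfectly correlated, which is worth keeping in mind when reading feature importances later.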

Volume information is summarized by on-balance volume and a 20-day moving average of OBV; a normalized OBV trend is created by comparing OBV to its moving average so the model can detect whether volume confirms or diverges from price action. An explicit binary volume spike flag marks days where a previously computed Volume_Ratio exceeds a threshold, signaling unusually large activity. Volatility structure comes from the Keltner Channel width (which uses ATR rather than standard deviation) and an ATR percentage-of-price metric, both of which scale volatility to price level.
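To make the on-balance volume idea concrete, here is a small pandas sketch on five hypothetical bars. The helper names are assumptions for this example, and conventions for the first bar and for unchanged closes vary between implementations, so the values may not match a given library exactly; the normalization mirrors the `OBV_Trend` formula in the cell above.

```python
import numpy as np
import pandas as pd


def on_balance_volume(close: pd.Series, volume: pd.Series) -> pd.Series:
    """Cumulative volume signed by the direction of the close-to-close change."""
    direction = np.sign(close.diff()).fillna(0.0)  # +1 up, -1 down, 0 flat or first bar
    return (direction * volume).cumsum()


def obv_trend(obv: pd.Series, window: int = 20) -> pd.Series:
    """OBV relative to its own moving average, using the notebook's +1 stabilizer."""
    ma = obv.rolling(window).mean()
    return (obv - ma) / (ma.abs() + 1)


# Five hypothetical bars
close = pd.Series([10.0, 11.0, 11.0, 10.0, 12.0])
volume = pd.Series([100, 200, 150, 300, 250])

obv = on_balance_volume(close, volume)
trend = obv_trend(obv, window=3)  # short window only so the toy series yields values
print(pd.DataFrame({"close": close, "volume": volume, "OBV": obv, "OBV_Trend": trend}))
```

The running total rises by 200 on the first up day, stays flat when the close is unchanged, falls by 300 on the down day, and recovers by 250 on the final up day.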

The cell also injects higher-order statistical features: rolling skewness and kurtosis over multiple windows, and rolling autocorrelation. These features let the model pick up on distributional shifts or persistence in returns that simple means and variances miss. Market-regime signals are created by comparing fast and slow simple moving averages: a regime label (+1 for bullish, −1 for bearish) plus discrete golden- and death-cross flags that capture the exact crossover events. MACD acceleration is computed as a short-lag difference of the MACD histogram to expose when momentum itself is changing direction.
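The crossover flags can be traced by hand on a tiny example. The sketch below reuses the same comparison logic as the cell above on two made-up series; `cross_flags` and the input values are assumptions for illustration. Note that the term golden cross is often reserved for a 50-day/200-day crossover, whereas the notebook applies it to the 20/50 pair.

```python
import pandas as pd


def cross_flags(fast: pd.Series, slow: pd.Series) -> pd.DataFrame:
    """Flag the bars where the fast average crosses above or below the slow one."""
    golden = (fast > slow) & (fast.shift(1) <= slow.shift(1))
    death = (fast < slow) & (fast.shift(1) >= slow.shift(1))
    return pd.DataFrame({
        "Golden_Cross": golden.astype(int),
        "Death_Cross": death.astype(int),
    })


# Hypothetical fast average rising through a flat slow average, then falling back
fast = pd.Series([1.0, 2.0, 3.0, 2.0, 1.0])
slow = pd.Series([2.0, 2.0, 2.0, 2.0, 2.0])

flags = cross_flags(fast, slow)
print(flags)
```

The flags fire only on the bar where the strict inequality first holds, so a bar where the two averages are exactly equal is not itself a cross but still counts as the "before" state for the following bar.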

Because many of these indicators require lookback windows, missing values are unavoidable on the earliest rows; the cell therefore drops any rows with NaNs so the resulting dataframe contains only fully populated feature rows. The printed summary confirms completion and quantifies the transformation: the 50 columns carried over from Section 4 grow to 81 (the "~30" in the printout is a hardcoded approximation, not a computed count), and after removing incomplete rows there are 3,639 usable observations, 30 fewer than before. The jump in columns reflects the 31 new indicators, while the reduced row count is a normal consequence of the rolling calculations and indicator windows, the longest of which spans 30 days. These 3,639 clean rows, which combine raw price-derived inputs with richer technical and statistical signals, are the input to the labeling and modeling steps that follow.

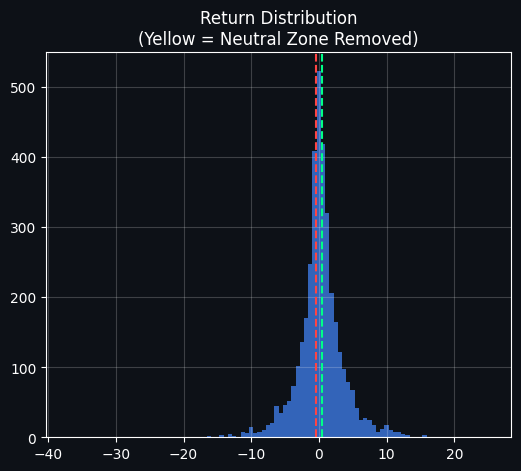

Section 6 — Smart Target Engineering

Problem with naive labels: When we mark every next-day change as up or down, even microscopic moves are treated as meaningful. For example, a tiny gain of 0.01% would be tagged as an up day and an almost imperceptible fall of 0.001% would be tagged as a down day. Many of those observations are dominated by noise rather than by factors a model can learn.

What we do instead: We only assign labels when the following day shows a substantial move. A sample is labeled Up if the next-day return exceeds +0.5% and Down if the next-day return is below −0.5%; all days with returns between those two bounds are removed. In other words, neutral or marginal moves are dropped from the training set.

Practical effect illustrated in words: under the raw labeling scheme, minute percentage changes are treated as directional signals; under the smart labeling rule, only moves larger than half a percent generate an Up or Down label, and everything smaller is treated as neutral and excluded.

Why this helps model performance

Days with very small moves behave essentially like coin flips and add noisy labels.

Excluding neutral days means each remaining training example corresponds to a clear, nontrivial market move.

The model is therefore exposed to stronger, more informative patterns and is less likely to learn spurious short-term noise.

# ─────────────────────────────────────────────────────────────

# SECTION 6 — SMART TARGET ENGINEERING

# ─────────────────────────────────────────────────────────────

THRESHOLD = 0.005

adv['Return_next'] = adv['Close'].shift(-1) / adv['Close'] - 1

adv['Target_raw'] = (adv['Return_next'] > 0).astype(int)

adv['Target_smart'] = np.where(adv['Return_next'] > THRESHOLD, 1,

np.where(adv['Return_next'] < -THRESHOLD, 0, np.nan))

df_raw = adv.dropna(subset=['Return_next', 'Target_raw']).copy()

df_smart = adv.dropna(subset=['Return_next', 'Target_smart']).copy()

df_smart['Target'] = df_smart['Target_smart'].astype(int)

# ── GRAPH 1 ──

plt.figure(figsize=(6,5), facecolor='#0D1117')

ax1 = plt.gca()

ax1.set_facecolor('#0D1117')

rets = df_raw['Return_next'] * 100

ax1.hist(rets, bins=100, color=C['blue'], alpha=0.7)

ax1.axvline( THRESHOLD*100, color=C['green'], linestyle='--')

ax1.axvline(-THRESHOLD*100, color=C['red'], linestyle='--')

ax1.set_title('Return Distribution\n(Yellow = Neutral Zone Removed)', color='white')

ax1.tick_params(colors='white')

ax1.grid(alpha=0.2)

plt.show()

# ── GRAPH 2 ──

plt.figure(figsize=(6,5), facecolor='#0D1117')

ax2 = plt.gca()

ax2.set_facecolor('#0D1117')

x = np.arange(2)

w = 0.3

raw_vals = [df_raw['Target_raw'].sum(), (df_raw['Target_raw']==0).sum()]

smart_vals = [df_smart['Target'].sum(), (df_smart['Target']==0).sum()]

ax2.bar(x - w/2, raw_vals, width=w, color=C['blue'], label='Raw')

ax2.bar(x + w/2, smart_vals, width=w, color=C['green'], label='Smart')

ax2.set_xticks(x)

ax2.set_xticklabels(['Up', 'Down'], color='white')

ax2.set_title('Class Balance Comparison\nRaw vs Smart Target', color='white')

ax2.tick_params(colors='white')

ax2.grid(alpha=0.2)

plt.legend()

plt.show()

# ── GRAPH 3 ──

plt.figure(figsize=(6,5), facecolor='#0D1117')

ax3 = plt.gca()

ax3.set_facecolor('#0D1117')

n_up = df_smart['Target'].sum()

n_down = (df_smart['Target'] == 0).sum()

n_neutral = len(df_raw) - len(df_smart)

sizes = [n_up, n_down, n_neutral]

labels = ['Up', 'Down', 'Neutral']

ax3.pie(sizes, labels=labels, autopct='%1.1f%%')

ax3.set_title('Dataset Composition\nAfter Smart Targeting', color='white')

plt.show()

The goal here is to turn raw next-day returns into a cleaner, less noisy classification target so the model trains on meaningful moves instead of tiny, economically irrelevant fluctuations. To do that, the notebook first computes each day's next-day return (the percentage change from today’s close to tomorrow’s close) and defines a very simple "raw" label that marks any positive next-day return as an Up day and any non-positive return as Down. Because the next-day return is computed by looking one row ahead, the final row has no future return and becomes missing, so those edge rows are removed before further work.

A second, "smart" labeling scheme is then applied: only moves larger than a small threshold (here set to 0.5% in absolute terms) are considered true Up or Down signals. Moves whose magnitude falls inside the ±0.5% band are treated as neutral and dropped from the smart training set. Concretely, days where tomorrow’s return exceeds +0.5% are labeled Up, days where it is below −0.5% are labeled Down, and days in between are left unlabeled and removed. This produces two datasets alongside the original series: one with the raw sign labels and one with the thresholded, smart labels (with the smart labels converted to integer class values for modeling).

The first saved plot visualizes the distribution of next-day returns (expressed in percent). It’s a dense, bell-shaped histogram tightly centered around zero, which is exactly what you would expect for daily returns: most days are small moves close to zero and only a few are large outliers in the tails. The two dashed lines mark the ±0.5% cutoff; because a large fraction of the mass sits between those lines, you can immediately see that many days would be considered neutral and excluded under the smart-target rule. That visual makes the rationale clear: by excluding the central cloud of small returns you aim to reduce label noise and focus the model on clearer directional moves.

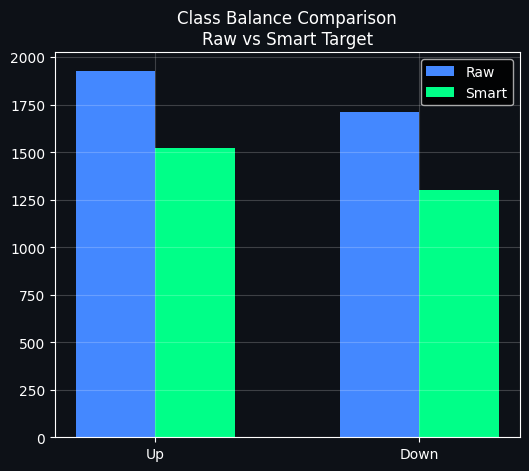

The second plot directly compares class counts before and after thresholding. The blue bars show the raw Up and Down counts when every tiny positive or negative move is treated as a label, and the green bars show the reduced counts after removing neutral days. As the figure shows, both Up and Down counts drop under the smart rule, with the Up class shrinking less than Down in this particular dataset. This is an expected consequence of trimming the central region: you lose data but gain cleaner examples.

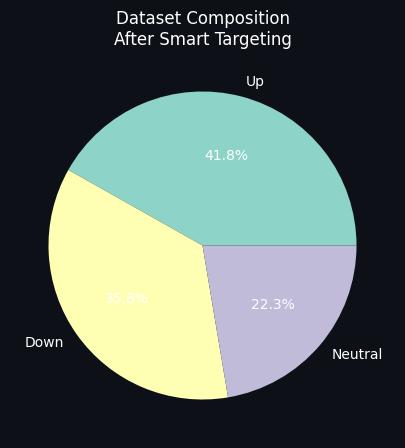

The third plot shows the dataset composition after smart targeting as a pie chart. Measured against all days in the sample, roughly forty percent are labeled Up, about thirty-six percent are labeled Down, and around twenty-two percent fell into the neutral band and were discarded. The Neutral slice quantifies how much data is being sacrificed to improve label quality.

Taken together, these steps prepare a labeled dataset that emphasizes economically meaningful one-day moves. The trade-off is clear: you reduce label noise and hopefully improve the signal-to-noise ratio for a classifier, but you also discard a substantial portion of the data and change class balances, which later steps (for example, feature selection or class balancing) will need to account for.

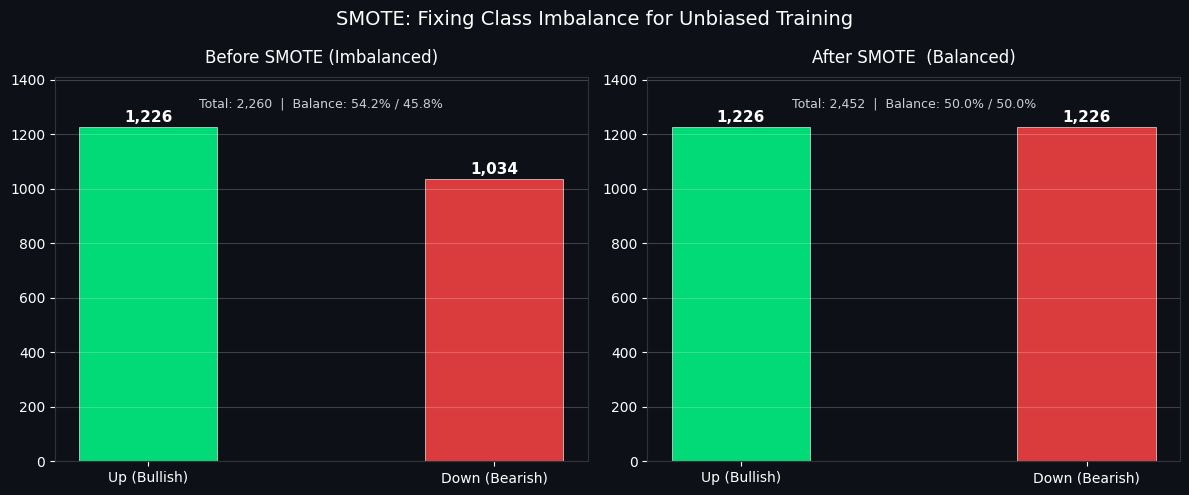

Section 7 — Balancing Classes with SMOTE

Problem statement: After applying the smart-target filter, there remain more upward-moving days than downward ones. This imbalance can make classifiers favor the majority label, effectively learning to predict "Up" most of the time and neglecting the minority class.

What SMOTE does: The Synthetic Minority Over-sampling Technique generates new minority-class examples by interpolating between existing minority observations in feature space. The goal is to equalize the number of samples in each class so the model sees a balanced training set.

How SMOTE operates:

Pick a sample from the minority class.

Locate its k nearest neighbors among other minority samples based on the feature representation.

Produce a synthetic example positioned between the chosen sample and one of its neighbors.

Repeat this process until the minority class has been oversampled to match the majority class.
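The steps above can be sketched without any external library. This is a simplified illustration of the interpolation idea, not the imbalanced-learn implementation the notebook calls; the function name `smote_sketch`, the toy points, and the choice of Euclidean distance are assumptions made for the example.

```python
import math
import random


def smote_sketch(minority, n_new, k=5, seed=42):
    """Create n_new synthetic points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        # Step 1: pick a minority sample.
        base = rng.choice(minority)
        # Step 2: find its k nearest minority neighbours (excluding itself).
        others = [p for p in minority if p is not base]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        # Step 3: place a new point somewhere on the segment base -> neighbour.
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append(
            tuple(b + gap * (n - b) for b, n in zip(base, neighbour))
        )
    # Step 4 is the loop itself: repeat until n_new points exist.
    return synthetic


# Hypothetical 2-D minority samples at the corners of the unit square.
minority = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
new_points = smote_sketch(minority, n_new=6, k=2)
print(new_points)
```

Because every synthetic point lies on a segment between two real minority points, the new samples stay inside the region the minority class already occupies rather than being arbitrary noise.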

Expected outcome: With class counts balanced, the model is pushed to learn patterns for both outcomes rather than defaulting to the majority. This typically improves recall on the minority class; precision and overall accuracy can move in either direction depending on the data.

# ─────────────────────────────────────────────────────────────
# SECTION 7 — DATA PREPARATION + SMOTE CLASS BALANCING
# ─────────────────────────────────────────────────────────────

# ── Define features (exclude raw price/target columns) ──
EXCLUDE = [
    'Open','High','Low','Close','Volume',
    'Return_next','Target_raw','Target_smart','Target',
    'MA_Signal',
    # Raw price levels (leak potential — use distances instead)
    'SMA_20','SMA_50','SMA_100','SMA_200',
    'EMA_12','EMA_26','EMA_50',
    'BB_High','BB_Low','BB_Mid',
    'OBV','OBV_MA20','Volume_MA20',
]
FEAT_COLS = [col for col in df_smart.columns if col not in EXCLUDE]
X = df_smart[FEAT_COLS]
y = df_smart['Target']

# ── Time-aware 80/20 split ──
# IMPORTANT: In financial ML, we NEVER shuffle — future data must not leak into training
split_idx = int(len(X) * 0.80)
X_tr_raw, X_te_raw = X.iloc[:split_idx], X.iloc[split_idx:]
y_tr, y_te = y.iloc[:split_idx], y.iloc[split_idx:]

print(f"📊 Dataset Split (Time-Aware — No Shuffling):")
print(f" Train: {len(X_tr_raw):,} rows ({X_tr_raw.index[0].date()} → {X_tr_raw.index[-1].date()})")
print(f" Test : {len(X_te_raw):,} rows ({X_te_raw.index[0].date()} → {X_te_raw.index[-1].date()})")
print(f" Features: {len(FEAT_COLS)}")

# ── Scale features ──
# RobustScaler uses median/IQR — less sensitive to outliers than StandardScaler
scaler = RobustScaler()
X_tr_s = pd.DataFrame(scaler.fit_transform(X_tr_raw), columns=FEAT_COLS, index=X_tr_raw.index)
X_te_s = pd.DataFrame(scaler.transform(X_te_raw), columns=FEAT_COLS, index=X_te_raw.index)

# ── Quick feature selection to remove noise ──
print("\n🔍 Running feature selection (XGBoost importance filter)...")
sel_model = xgb.XGBClassifier(n_estimators=100, max_depth=4, random_state=42,
                              eval_metric='logloss', verbosity=0, n_jobs=-1)
sel_model.fit(X_tr_s, y_tr)

from sklearn.feature_selection import SelectFromModel
selector = SelectFromModel(sel_model, threshold='mean', prefit=True)
SELECTED = [f for f, keep in zip(FEAT_COLS, selector.get_support()) if keep]
X_tr_sel = X_tr_s[SELECTED]
X_te_sel = X_te_s[SELECTED]
print(f" Features: {len(FEAT_COLS)} → {len(SELECTED)} (removed {len(FEAT_COLS)-len(SELECTED)} low-importance)")

# ── Apply SMOTE ──
print("\n⚖️ Applying SMOTE to training set...")
smote = SMOTE(random_state=42, k_neighbors=5)
X_tr_sm, y_tr_sm = smote.fit_resample(X_tr_sel, y_tr)
print(f" Before SMOTE: Up={y_tr.sum():,} Down={(y_tr==0).sum():,} Ratio={y_tr.mean()*100:.1f}%")
print(f" After SMOTE: Up={y_tr_sm.sum():,} Down={(y_tr_sm==0).sum():,} Ratio={y_tr_sm.mean()*100:.1f}% ← Perfectly balanced!")

# ── Visualise SMOTE effect ──
fig, axes = plt.subplots(1, 2, figsize=(12, 5), facecolor='#0D1117')
for ax, (title, u, d, col) in zip(axes, [
    ('Before SMOTE (Imbalanced)', int(y_tr.sum()), int((y_tr==0).sum()), C['red']),
    ('After SMOTE (Balanced)', int(y_tr_sm.sum()), int((y_tr_sm==0).sum()), C['green'])
]):
    ax.set_facecolor('#0D1117')
    bars = ax.bar(['Up (Bullish)', 'Down (Bearish)'],
                  [u, d], color=[C['green'], C['red']], alpha=0.85, width=0.4,
                  edgecolor='white', linewidth=0.5)
    ax.set_title(title, color='white', fontsize=12, pad=10)
    ax.tick_params(colors='white')
    ax.grid(alpha=0.2, axis='y'); ax.spines[:].set_color('#333')
    for bar, val in zip(bars, [u, d]):
        ax.text(bar.get_x()+bar.get_width()/2, bar.get_height()+20,
                f'{val:,}', ha='center', color='white', fontsize=11, fontweight='bold')
    total = u + d
    ax.set_ylim(0, max(u,d)*1.15)
    ax.text(0.5, 0.92, f'Total: {total:,} | Balance: {u/total*100:.1f}% / {d/total*100:.1f}%',
            transform=ax.transAxes, ha='center', color='white', fontsize=9, alpha=0.8)

plt.suptitle('SMOTE: Fixing Class Imbalance for Unbiased Training', color='white', fontsize=14)
plt.tight_layout()
plt.savefig('smote_balance.png', dpi=150, bbox_inches='tight', facecolor='#0D1117')
plt.show()

📊 Dataset Split (Time-Aware — No Shuffling):

Train: 2,260 rows (2015-05-04 → 2023-03-08)

Test : 565 rows (2023-03-09 → 2025-04-18)

Features: 62

🔍 Running feature selection (XGBoost importance filter)...

Features: 62 → 39 (removed 23 low-importance)

⚖️ Applying SMOTE to training set...

Before SMOTE: Up=1,226 Down=1,034 Ratio=54.2%

After SMOTE: Up=1,226 Down=1,226 Ratio=50.0% ← Perfectly balanced!

The cell prepares the dataset for honest model training. It first defines the candidate predictors by explicitly excluding the raw price and volume columns, the target columns (which encode the next-day return and would give away the answer), and level-valued indicators such as the moving averages, Bollinger Bands, and OBV. The notebook flags those level columns as a leak risk and prefers distance-based or normalized versions; raw levels also drift with the price regime, so a model can latch onto where price happens to be rather than patterns that carry over to the test period. The resulting feature list is stored and used for the rest of the transformations.

Next the data are split in time: the first 80% of rows become the training set and the final 20% become the test set. A time-aware split like this prevents future observations from seeping into training, which would otherwise overstate a model’s performance. The printed summary confirms the exact sizes and ranges: 2,260 training rows spanning 2015-05-04 to 2023-03-08, and 565 test rows from 2023-03-09 to 2025-04-18, and there are 62 candidate features before any pruning.

Because financial features often contain outliers and heavy tails, the features are scaled with a RobustScaler, which centers by the median and scales by the interquartile range. That choice preserves relative differences while being less sensitive to extreme values than a standard z-score scaling would be. The scaler is fit on the training portion only and then applied to the test portion, which is the correct order to avoid leaking test-set statistics into training.
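A small worked example shows what median/IQR scaling does and why the statistics are learned on training data only. This is a standard-library sketch of the idea, not scikit-learn's `RobustScaler`; the helper names `fit_robust` and `transform_robust` and the toy values are assumptions, and quartile conventions can differ slightly between libraries.

```python
from statistics import median, quantiles


def fit_robust(values):
    """Learn the centre (median) and scale (IQR) from training values only."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q3 - q1


def transform_robust(values, centre, scale):
    """Apply previously learned statistics; nothing is re-estimated here."""
    return [(v - centre) / scale for v in values]


train = [1.0, 2.0, 3.0, 4.0, 100.0]  # hypothetical feature with one outlier
test = [5.0, 0.0]                    # later observations, never used for fitting

centre, scale = fit_robust(train)    # median 3.0, IQR 4.0 - 2.0 = 2.0
train_scaled = transform_robust(train, centre, scale)
test_scaled = transform_robust(test, centre, scale)

print(train_scaled)  # [-1.0, -0.5, 0.0, 0.5, 48.5]
print(test_scaled)   # [1.0, -1.5]
```

The outlier stays an outlier after scaling, but it does not distort the centre or spread used for the other points: the median is 3.0, whereas the mean of the same values is 22.0.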

To reduce noise and remove weak predictors, an XGBoost classifier is trained on the scaled training set and used as an importance filter. SelectFromModel with threshold='mean' then keeps only features whose importance is at least the mean importance; conceptually this favors variables the tree ensemble found useful for separating up versus down moves. Because the filter is fit on training rows only, the test set plays no part in choosing features. The printed message shows how many features survived: the initial 62 were reduced to 39, meaning 23 low-importance features were dropped.
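The mean-importance rule itself is simple enough to write out by hand. The sketch below is an illustration of the cutoff logic only; the importance scores and feature names are invented for the example and do not come from the notebook's model.

```python
def select_above_mean(importances):
    """Keep features whose importance is at least the mean importance."""
    cutoff = sum(importances.values()) / len(importances)
    return [name for name, score in importances.items() if score >= cutoff]


# Hypothetical importance scores; the feature names are made up.
importances = {
    "rsi_14": 0.30,
    "ret_lag1": 0.25,
    "vol_20": 0.20,
    "macd_hist": 0.15,
    "dow": 0.06,
    "month": 0.04,
}
selected = select_above_mean(importances)
print(selected)  # ['rsi_14', 'ret_lag1', 'vol_20']
```

When importances are normalized to sum to one, the mean cutoff equals one divided by the number of features, so with 62 candidates a feature needs roughly 1.6% of total importance to survive.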

Class imbalance is handled next with SMOTE, applied only to the training set. SMOTE synthesizes new minority-class examples by interpolating between existing minority neighbors in feature space, which balances the training labels without touching the test set. The console output documents the effect: before SMOTE the training labels had 1,226 up days and 1,034 down days (a 54.2% / 45.8% split). SMOTE adds 192 synthetic down days, so both classes end up with 1,226 samples, giving a 50% / 50% training set of 2,452 rows.

A two-panel bar chart makes this change immediately visible. On the left, the “Before SMOTE” panel shows the original imbalance with a taller green bar for bullish days and a shorter red bar for bearish days. On the right, the “After SMOTE” panel shows matching green and red bars, both labeled 1,226. Each bar is annotated with its count, and a line of text near the top of each panel reports the total and the percentage split. The plot uses a dark background and clear numeric labels so you can see both the magnitude and the balance change at a glance; it is saved as smote_balance.png for later review.

Section 8 — Optuna AutoML: Hyperparameter tuning

Why perform hyperparameter search? Default values shipped with models are generic starting points, not tailored to your dataset. Finding good hyperparameters by hand is slow, incomplete, and prone to missed combinations. A systematic search adapts the model to the data and usually yields better predictive performance.

How Optuna works: Optuna performs an adaptive search that uses the results of previous trials to guide where it samples next. Its default sampler is the Tree-structured Parzen Estimator (TPE), a Bayesian-style method that concentrates evaluation effort on promising regions of the parameter space and is usually more efficient than brute-force grid search or blind random sampling.

Parameters we explore

n_estimators: the number of trees in the ensemble. Increasing this generally improves fit but also increases training time.

max_depth: the maximum depth of each tree. Deeper trees can capture more complexity but risk overfitting.

learning_rate: the step size used when boosting. Smaller values slow down learning and typically require more trees but can produce more stable models.

subsample: the fraction of training rows used to build each tree. Sampling rows helps regularize and reduce overfitting.

colsample_bytree: the fraction of features considered for each tree. Limiting features promotes model diversity and robustness.

reg_alpha and reg_lambda: the L1 and L2 regularization strengths. These penalize large leaf weights to control model complexity.

Why allocate multiple trials: Giving the optimizer a budget of many trials allows it to examine a range of configurations and improves the chance of finding strong hyperparameter settings. Running, for example, sixty trials is far more informative than evaluating only one candidate and provides a practical balance between search depth and compute time.

import optuna

import lightgbm as lgb

from sklearn.model_selection import cross_val_score

# ─────────────────────────────────────────────

# ⚙️ Objective Function (FAST MODE)

# ─────────────────────────────────────────────

def objective_lgb(trial):

params = {