What is a Language Model in NLP?

“You shall know a word by the company it keeps.” – John Rupert Firth

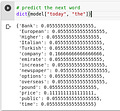

A language model learns to assign a probability to a sequence of words. But why do we need these probabilities? Let’s understand that with an example.

I’m sure you have used Google Translate at some point. We use it to translate text from one language to another. This is an example of a popular NLP application called Machine Translation.

In Machine Translation, you take a sequence of words in one language and convert it into another language. A system can produce many candidate translations, so you want to compute the probability of each candidate to decide which one is the most plausible.

In the above example, we know that the probability of the first sentence will be higher than that of the second, right? That’s how we arrive at the right translation.
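To make the idea of ranking candidates by probability concrete, here is a minimal sketch. It is not the method a production translation system uses: the three-sentence corpus, the `<s>` and `</s>` boundary markers, the helper name `sentence_probability`, and the add-one smoothing are all assumptions made for illustration. It estimates how likely each word is given the previous one and multiplies those estimates along the sentence.

```python
from collections import Counter

# Hypothetical toy corpus; a real system would learn from far more text.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "the cat ate the fish",
]

pair_counts = Counter()     # how often `cur` follows `prev`
context_counts = Counter()  # how often `prev` is followed by anything
vocab = set()

for line in corpus:
    # <s> and </s> are assumed sentence-boundary markers
    words = ["<s>"] + line.split() + ["</s>"]
    vocab.update(words)
    for prev, cur in zip(words, words[1:]):
        pair_counts[(prev, cur)] += 1
        context_counts[prev] += 1


def sentence_probability(sentence):
    """Product of add-one-smoothed P(cur | prev) over adjacent word pairs."""
    words = ["<s>"] + sentence.split() + ["</s>"]
    prob = 1.0
    for prev, cur in zip(words, words[1:]):
        # add-one smoothing (an assumption) keeps unseen pairs from zeroing the product
        prob *= (pair_counts[(prev, cur)] + 1) / (context_counts[prev] + len(vocab))
    return prob


candidates = ["the cat sat on the mat", "mat the on sat cat the"]
best = max(candidates, key=sentence_probability)
print(best)
```

The fluent word order wins because every adjacent pair in it was seen in the toy corpus, while the scrambled candidate is built entirely from unseen pairs.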

Types of Language Models

There are primarily two types of Language Models:

Statistical Language Models: These models use traditional statistical techniques like N-grams, Hidden Markov Models (HMM) and certain linguistic rules to learn the probability distribution of words.

Neural Language Models: These are the new players in NLP town and have surpassed statistical language models in effectiveness. They use different kinds of neural networks to model language.
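For the N-gram family mentioned above, the underlying idea can be written down compactly. This is a sketch in standard textbook notation, not something specific to this article: the symbols $w_i$ (the i-th word of a sentence) and $C(\cdot)$ (a raw count in the training corpus) are introduced here for illustration.

```latex
\begin{align}
% Chain rule: exact factorization of a sentence probability
P(w_1, \dots, w_n) &= \prod_{i=1}^{n} P(w_i \mid w_1, \dots, w_{i-1}) \\
% Trigram (N = 3) approximation: condition only on the two previous words
P(w_1, \dots, w_n) &\approx \prod_{i=1}^{n} P(w_i \mid w_{i-2}, w_{i-1}) \\
% Maximum-likelihood estimate of each factor from corpus counts
P(w_i \mid w_{i-2}, w_{i-1}) &= \frac{C(w_{i-2}, w_{i-1}, w_i)}{C(w_{i-2}, w_{i-1})}
\end{align}
```

Neural language models estimate the same kind of conditional probability, but with a network in place of a table of counts.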

Now that you have a pretty good idea about Language Models, let’s start building one!

Building a Basic Language Model

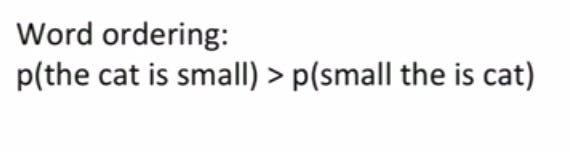

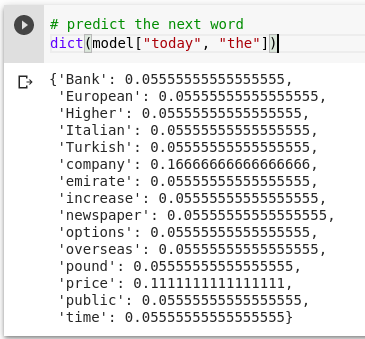

Now that we understand what an N-gram is, let’s build a basic language model using trigrams of the Reuters corpus. The Reuters corpus is a collection of 10,788 news documents totalling 1.3 million words. We can build a language model in a few lines of code using the NLTK package:


    from collections import defaultdict

    from nltk import trigrams
    from nltk.corpus import reuters

    # The corpus must be available locally, e.g. via nltk.download('reuters');
    # sentence splitting may also need NLTK's Punkt tokenizer data.

    # Create a placeholder for the model: model[(w1, w2)][w3] holds a count, later a probability
    model = defaultdict(lambda: defaultdict(lambda: 0))

    # Count frequency of co-occurrence
    for sentence in reuters.sents():
        for w1, w2, w3 in trigrams(sentence, pad_right=True, pad_left=True):
            model[(w1, w2)][w3] += 1

    # Let's transform the counts to probabilities
    for w1_w2 in model:
        total_count = float(sum(model[w1_w2].values()))
        for w3 in model[w1_w2]:
            model[w1_w2][w3] /= total_count

Let’s make simple predictions with this language model. We will start with two simple words, “today the”. We want our model to tell us what the next word will be:

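    import random

    # starting words
    text = ["today", "the"]
    sentence_finished = False

    while not sentence_finished:
        # distribution over next words for the last two words generated
        candidates = model[tuple(text[-2:])]
        # stop if this context never occurred in the corpus (avoids an endless loop)
        if not candidates:
            break

        # draw a random threshold in [0, 1)
        r = random.random()
        accumulator = 0.0

        for word, prob in candidates.items():
            accumulator += prob
            # pick the first word at which the cumulative probability reaches the threshold
            if accumulator >= r:
                text.append(word)
                break

        # two consecutive None padding tokens mark the end of the sentence
        if text[-2:] == [None, None]:
            sentence_finished = True

    print(' '.join([t for t in text if t]))

Building Neural N-Gram Model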