Deep Learning and Neural Networks

An artificial neural network (ANN) is a computing model loosely inspired by biological neural networks. Dr. Robert Hecht-Nielsen, the inventor of one of the first neurocomputers, defined a neural network as follows:

“A computing system made up of a number of simple, highly interconnected processing elements, which process information by their dynamic state response to external inputs.”

Neural networks are usually organized in layers. A layer consists of a number of interconnected nodes, each of which contains an activation function. Patterns are presented to the network through the input layer, which communicates with one or more hidden layers where the processing takes place through weighted connections. The hidden layers then link to an output layer.

A neural network can be used for a variety of purposes. In the airline domain, for instance, data from month t-12 is more useful for predicting passenger loads in month t than data from t-1 or t-2, because of yearly seasonality. In such cases a neural network often produces better results than a classical time-series model. Neural networks are also widely used for image classification. Chatbot dialogue systems often use memory networks, which are neural networks that store a bag of words from previous conversations. Neural networks can be implemented in many ways.
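To make the airline example concrete, here is a minimal sketch of how the value from twelve months earlier could be paired with the value to predict. The function name `make_lagged_pairs` and the passenger numbers are hypothetical and chosen only for illustration.

```python
def make_lagged_pairs(series, lag=12):
    """Pair each value with the value observed `lag` steps earlier.

    Returns a list of (input, target) tuples: the input is the value at
    t - lag and the target is the value at t.
    """
    return [(series[t - lag], series[t]) for t in range(lag, len(series))]


# Hypothetical monthly passenger loads for 15 consecutive months.
loads = [100, 90, 95, 110, 120, 140, 160, 165, 130, 115, 105, 125, 104, 93, 99]

# Each pair is (load twelve months earlier, load in the current month).
pairs = make_lagged_pairs(loads, lag=12)
print(pairs)
```

Pairs of this kind could then serve as training examples for a network that predicts month t from month t-12.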

The backpropagation theory

A common method of training artificial neural networks is backpropagation, which is usually combined with an optimization method such as gradient descent. The error is measured at the output layer and propagated backward, layer by layer, toward the input layer, and the weights of each layer are updated along the way. Ultimately, the goal is to minimize the error.
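The idea of minimizing the error by gradient descent can be illustrated on the smallest possible case: a single linear unit with one weight. This is only a sketch under simplifying assumptions (one weight, no bias, squared error); the function name `train_single_weight` is hypothetical.

```python
def train_single_weight(x, target, w=0.0, learning_rate=0.1, steps=50):
    """Fit output = w * x to a single target value by gradient descent."""
    for _ in range(steps):
        output = w * x
        error = output - target
        # Gradient of 0.5 * (w * x - target) ** 2 with respect to w.
        gradient = error * x
        # Move the weight a small step against the gradient.
        w -= learning_rate * gradient
    return w


# With x = 2 and target = 4, the error is zero when w = 2.
w = train_single_weight(x=2.0, target=4.0)
print(w)
```

Backpropagation applies the same kind of update to every weight in a multilayer network, using the chain rule to obtain each gradient.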

The backpropagation approach

Problems such as the noisy-image-to-ASCII example are hard to solve by computer because of a basic mismatch between the machine and the problem. Modern computers are designed to perform mathematical and logical operations at speeds far beyond human capability. Even the most basic desktop computers can perform a very large number of numerical comparisons and combinations per second.

The problem lies in the sequential nature of the computer. In the von Neumann architecture, the “fetch-execute” cycle allows only one operation to be performed at a time. Because each instruction takes so little time, even a large program usually runs in a negligible amount of time. For tasks that must search a large space or correlate many possible patterns, however, the sequential cost adds up quickly.

What is needed is a processing system that evaluates all pixels in an image in parallel. The backpropagation network (BPN) is such a system.

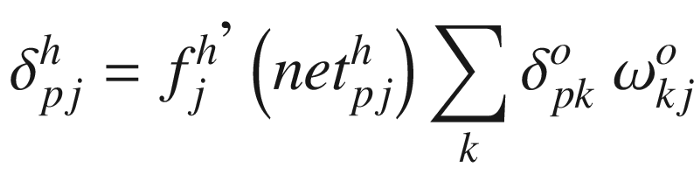

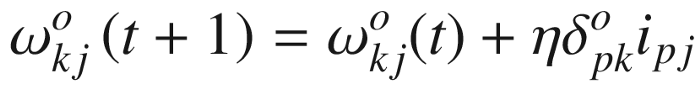

The generalized delta rule

I will now introduce the backpropagation learning procedure for learning internal representations. A neural network is called a mapping network if it can compute some functional relationship between its inputs and its outputs.

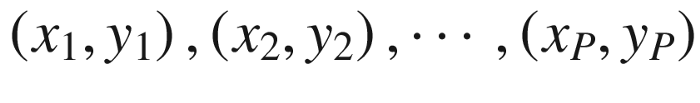

Suppose a set of P vector pairs,

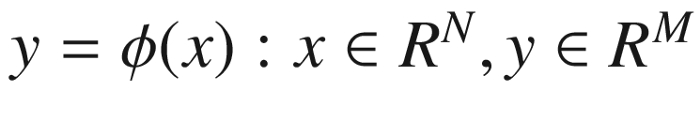

which are examples of the functional mapping that the network should learn.

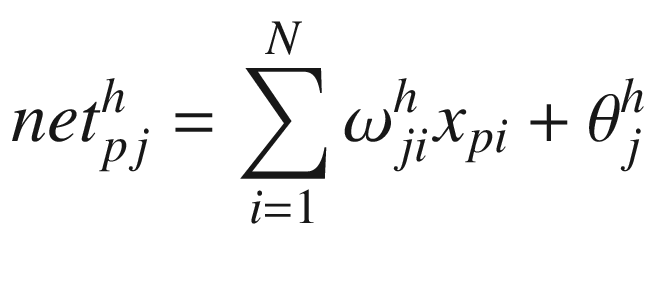

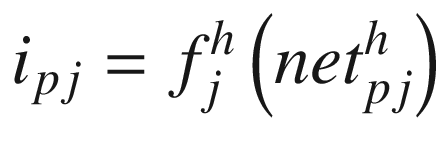

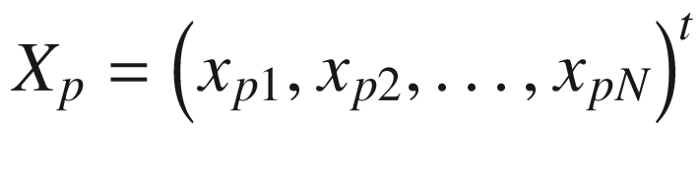

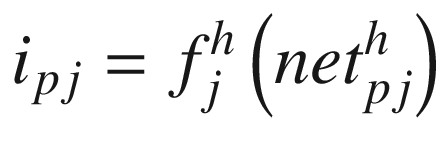

The following equations describe the three-layer network’s information processing. Here is an example of an input vector:

Input nodes are as follows:

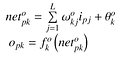

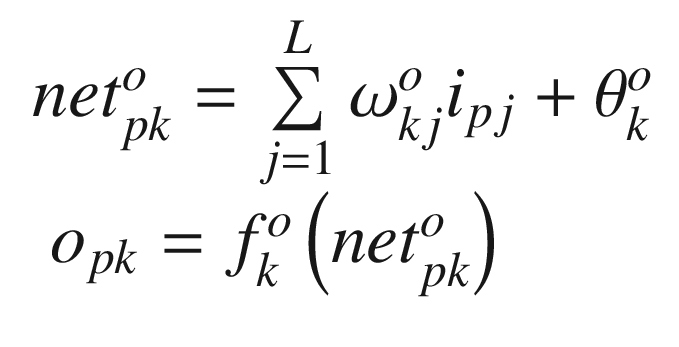

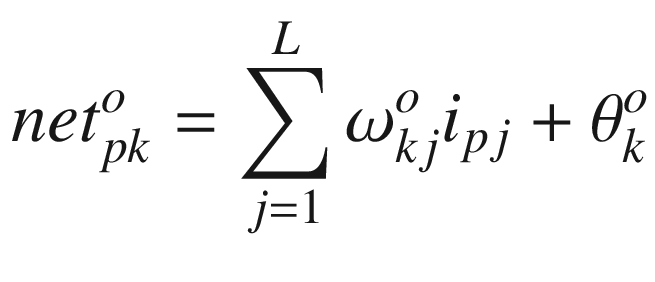

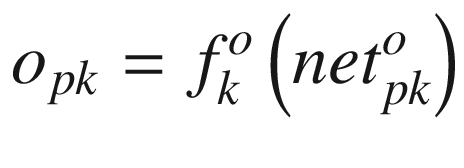

Output nodes are described by the following equations:

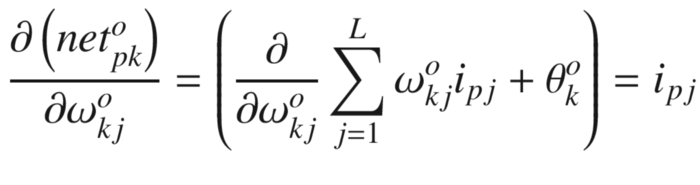

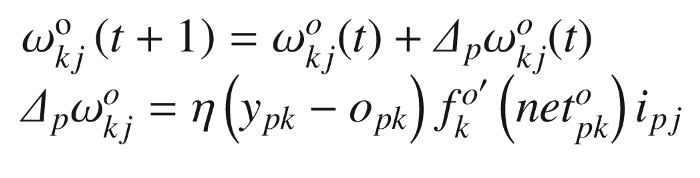
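The information flow through a three-layer network can be sketched in plain Python as follows. This is an illustration under assumptions, not a transcription of the equations above: every unit is assumed to compute a weighted sum of its inputs plus a bias and to pass it through a sigmoid, and the names `layer_forward` and `forward` as well as the weights are hypothetical.

```python
import math


def sigmoid(net):
    """Logistic activation function."""
    return 1.0 / (1.0 + math.exp(-net))


def layer_forward(inputs, weights, biases, activation):
    """Compute the outputs of one layer.

    weights[j] holds the incoming weights of unit j, one per input.
    """
    outputs = []
    for unit_weights, bias in zip(weights, biases):
        # Net input: weighted sum of the inputs plus the bias term.
        net = sum(w * x for w, x in zip(unit_weights, inputs)) + bias
        outputs.append(activation(net))
    return outputs


def forward(x, hidden_w, hidden_b, output_w, output_b):
    """Propagate an input vector through the hidden and output layers."""
    hidden = layer_forward(x, hidden_w, hidden_b, sigmoid)
    output = layer_forward(hidden, output_w, output_b, sigmoid)
    return hidden, output


# Hypothetical network: 2 inputs, 2 hidden units, 1 output unit.
hidden_w = [[1.0, -1.0], [0.5, 0.5]]
hidden_b = [0.0, 0.0]
output_w = [[2.0, 0.0]]
output_b = [-1.0]

hidden, output = forward([1.0, 1.0], hidden_w, hidden_b, output_w, output_b)
print(hidden, output)
```

The input units only distribute the values of the input vector; all computation happens in the hidden and output layers.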

Updating the output layer weights

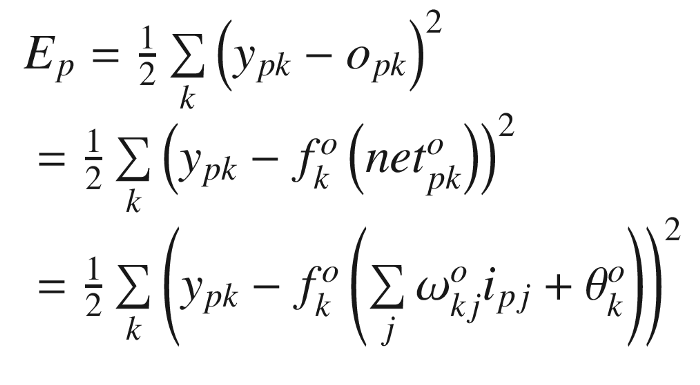

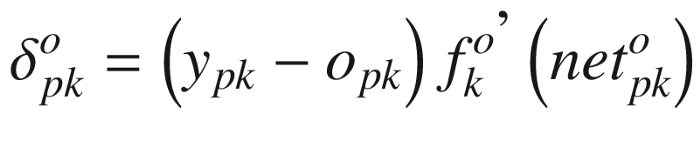

The error term is as follows:

Output layer weight is determined by the following factor:

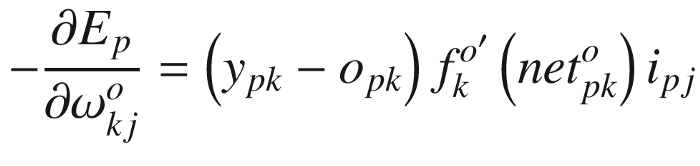

As a result of the negative gradient, we have:

The output layer weights are then updated as follows:

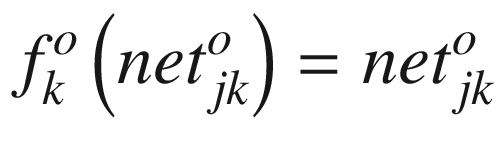

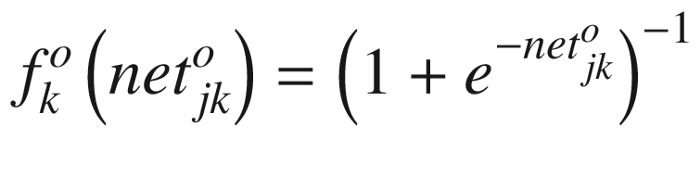

The output function can take two forms.

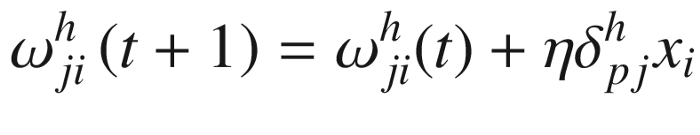
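As a hedged sketch, assume the two forms are the linear (identity) function and the logistic sigmoid, which are the usual choices in the classical presentation of backpropagation. Under that assumption, the error term of an output unit and the resulting weight update could look like this. All function names are hypothetical.

```python
import math


def linear(net):
    return net


def linear_derivative(net):
    return 1.0


def sigmoid(net):
    return 1.0 / (1.0 + math.exp(-net))


def sigmoid_derivative(net):
    s = sigmoid(net)
    return s * (1.0 - s)


def output_delta(target, net, f, f_prime):
    """Error term of one output unit: (target - output) * f'(net)."""
    return (target - f(net)) * f_prime(net)


def update_output_weights(weights, delta, hidden_outputs, learning_rate):
    """Move each weight along the negative gradient of the error."""
    return [w + learning_rate * delta * i
            for w, i in zip(weights, hidden_outputs)]


# Same target and net input, two different output functions.
delta_sigmoid = output_delta(1.0, 0.0, sigmoid, sigmoid_derivative)
delta_linear = output_delta(1.0, 0.0, linear, linear_derivative)
new_weights = update_output_weights([0.0, 0.0], delta_sigmoid, [1.0, 0.5], 0.8)
print(delta_sigmoid, delta_linear, new_weights)
```

The only difference between the two forms is the derivative factor: it is 1 for the linear unit and output times (1 - output) for the sigmoid unit.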

Weight update for hidden layers

Look again at the network with one layer of hidden neurons and one layer of output neurons. When an input vector is propagated through the network, the current set of weights produces an output prediction. The total error depends on the output values of the hidden layer, as follows:

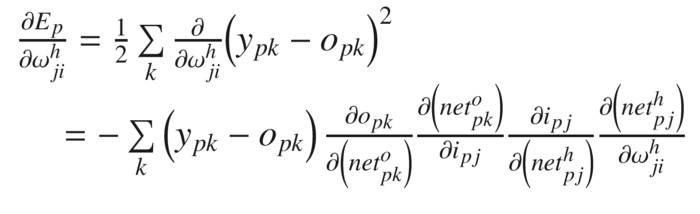

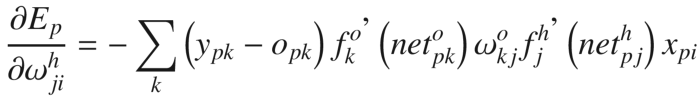

You can then calculate the gradient of Ep with respect to the hidden layer weights.

From the previous equation, each factor can be calculated explicitly. Accordingly, the results are as follows:
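The hidden-layer calculation can be sketched in code under the assumption that the hidden units use a sigmoid, whose derivative is the unit output times one minus the output. Each hidden unit collects the error terms of the output units it feeds, weighted by the connecting weights. The function name `hidden_deltas` and the numbers are hypothetical.

```python
def hidden_deltas(hidden_outputs, output_deltas, output_weights):
    """Error term of each sigmoid hidden unit.

    output_weights[k][j] is the weight from hidden unit j to output unit k.
    """
    deltas = []
    for j, i_j in enumerate(hidden_outputs):
        # Error propagated back from every output unit fed by hidden unit j.
        back = sum(d_k * output_weights[k][j]
                   for k, d_k in enumerate(output_deltas))
        # Multiply by the sigmoid derivative at the hidden unit.
        deltas.append(i_j * (1.0 - i_j) * back)
    return deltas


# Hypothetical values: 2 hidden units feeding 2 output units.
deltas = hidden_deltas(
    hidden_outputs=[0.5, 0.5],
    output_deltas=[0.2, -0.1],
    output_weights=[[1.0, 2.0], [3.0, 4.0]],
)
print(deltas)
```

The hidden error terms depend on the output error terms, which is why the errors are said to propagate backward.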

BPN Summary

Apply the input vector

to the input units.

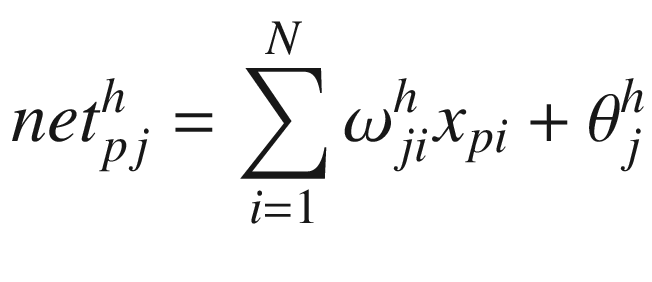

The net input values to the hidden layer units should be calculated.

Using the hidden layer, calculate the outputs.

The net input values for each unit should be calculated.

Produce the outputs by calculating them.

For each output unit, calculate the error terms.

The error terms for the hidden units should be calculated.

The output layer’s weights need to be updated.

Weights on the hidden layer should be updated.

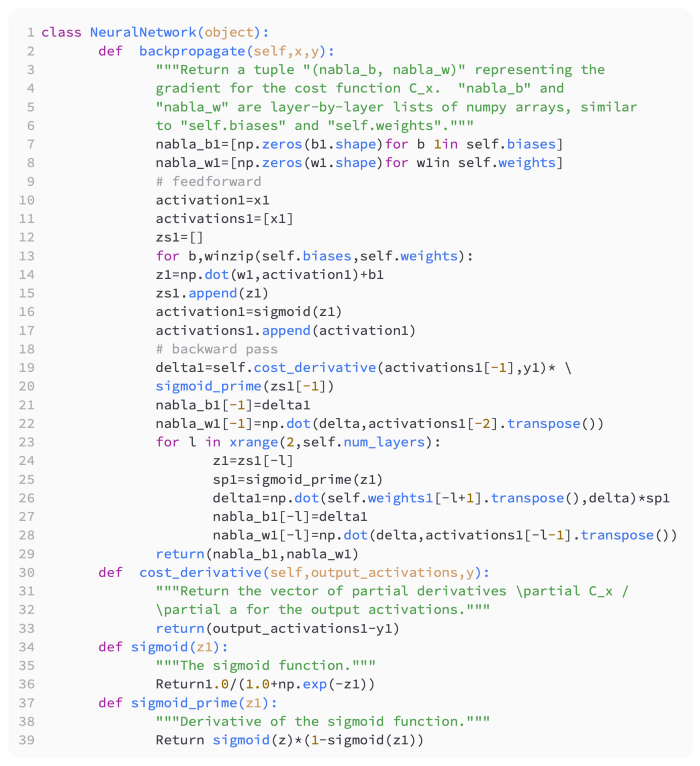
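The steps summarized above can be sketched as a single training step in plain Python. This is a minimal illustration, not the listing from this article: it assumes sigmoid units in both layers, a squared-error measure, and a network stored in a dictionary. The names `forward` and `train_step` and the initial weights are hypothetical.

```python
import math


def sigmoid(net):
    return 1.0 / (1.0 + math.exp(-net))


def forward(x, net):
    """Net inputs and outputs of the hidden layer, then of the output layer."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(net["hidden_w"], net["hidden_b"])]
    output = [sigmoid(sum(w * h for w, h in zip(ws, hidden)) + b)
              for ws, b in zip(net["output_w"], net["output_b"])]
    return hidden, output


def train_step(x, target, net, learning_rate=0.5):
    """Run one backpropagation update in place.

    Returns the squared error measured before the weights were changed.
    """
    hidden, output = forward(x, net)
    # Error terms for the output units (sigmoid derivative is o * (1 - o)).
    out_delta = [(t - o) * o * (1.0 - o) for t, o in zip(target, output)]
    # Error terms for the hidden units, computed before any weight changes.
    hid_delta = [h * (1.0 - h) * sum(d * net["output_w"][k][j]
                                     for k, d in enumerate(out_delta))
                 for j, h in enumerate(hidden)]
    # Update the output layer weights and biases.
    for k, d in enumerate(out_delta):
        for j, h in enumerate(hidden):
            net["output_w"][k][j] += learning_rate * d * h
        net["output_b"][k] += learning_rate * d
    # Update the hidden layer weights and biases.
    for j, d in enumerate(hid_delta):
        for i, xi in enumerate(x):
            net["hidden_w"][j][i] += learning_rate * d * xi
        net["hidden_b"][j] += learning_rate * d
    return 0.5 * sum((t - o) ** 2 for t, o in zip(target, output))


# Hypothetical 2-2-1 network with small hand-picked starting weights.
net = {
    "hidden_w": [[0.1, -0.2], [0.3, 0.4]],
    "hidden_b": [0.0, 0.0],
    "output_w": [[0.5, -0.5]],
    "output_b": [0.0],
}

# Presenting the same pattern twice shows the effect of the first update.
error_before = train_step([1.0, 0.0], [1.0], net)
error_after = train_step([1.0, 0.0], [1.0], net)
print(error_before, error_after)
```

In practice the step is repeated over all training pairs for many passes until the error is acceptably small. Note that the hidden error terms are computed before the output weights are changed, so that they use the same weights that produced the prediction.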

Backpropagation Algorithm

Here are some code examples:

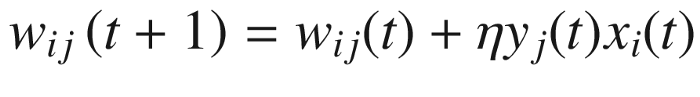

Other types of algorithms

In addition to backpropagation, there are many other techniques available for training neural networks. Backpropagation computes the gradients, and various optimization algorithms, such as plain gradient descent or the Adam optimizer, can use those gradients to update the weights. The simple perceptron method is also commonly used. One of the most popular methods is Hebb’s postulate. In Hebbian learning, the weight is corrected by the product of the input and the output rather than by the error.
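The difference between a Hebbian update and an error-driven update can be sketched as follows. Both functions are simplified textbook forms with hypothetical names; the perceptron version assumes a step activation with a threshold at zero and no bias.

```python
def hebbian_update(weights, inputs, output, learning_rate=0.1):
    """Hebbian rule: change each weight by the input-output product."""
    return [w + learning_rate * x * output for w, x in zip(weights, inputs)]


def perceptron_update(weights, inputs, target, learning_rate=0.1):
    """Perceptron rule: change each weight by the error times the input."""
    net = sum(w * x for w, x in zip(weights, inputs))
    output = 1 if net >= 0 else 0
    error = target - output
    return [w + learning_rate * error * x for w, x in zip(weights, inputs)]


# The Hebbian rule strengthens weights whenever input and output agree in sign.
hebb = hebbian_update([0.0, 0.0], [1.0, -1.0], output=1.0, learning_rate=0.5)

# The perceptron rule changes the weights only when the prediction is wrong.
wrong = perceptron_update([0.0, 0.0], [1.0, 1.0], target=0, learning_rate=0.5)
right = perceptron_update([0.0, 0.0], [1.0, 1.0], target=1, learning_rate=0.5)
print(hebb, wrong, right)
```

The Hebbian rule needs no target value at all, which is why it is often described as an unsupervised learning rule.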

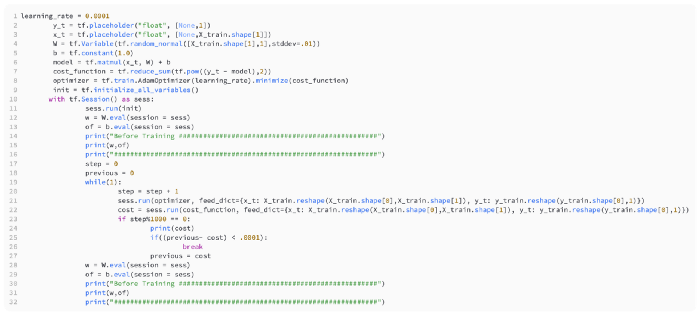

TensorFlow

TensorFlow is one of the most popular deep learning libraries for Python. Its Python API is a wrapper around a core library written in C++. It supports parallel execution on CUDA-based GPUs. With TensorFlow, we can perform simple linear regression by implementing the following code:
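A minimal sketch of simple linear regression, assuming TensorFlow 2.x with eager execution. The training data, the learning rate, and the number of steps are hypothetical values chosen so that the fit recovers y = 2x + 1; this is not necessarily the listing the article originally showed.

```python
import tensorflow as tf

# Hypothetical training data generated from y = 2x + 1.
x = tf.constant([0.0, 1.0, 2.0, 3.0, 4.0])
y = tf.constant([1.0, 3.0, 5.0, 7.0, 9.0])

# Trainable slope and intercept, both starting at zero.
w = tf.Variable(0.0)
b = tf.Variable(0.0)
learning_rate = 0.05

for _ in range(500):
    # Record the forward computation so gradients can be derived from it.
    with tf.GradientTape() as tape:
        prediction = w * x + b
        loss = tf.reduce_mean(tf.square(prediction - y))
    grad_w, grad_b = tape.gradient(loss, [w, b])
    # Plain gradient descent step on both parameters.
    w.assign_sub(learning_rate * grad_w)
    b.assign_sub(learning_rate * grad_b)

slope, intercept = float(w.numpy()), float(b.numpy())
print(slope, intercept)
```

Here `tf.GradientTape` plays the role of backpropagation: it computes the gradients of the loss with respect to `w` and `b`, and the update itself is ordinary gradient descent.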

A multilayer linear regression can be achieved with a little change, as shown here: