Deep Learning Models for Predicting Stock Prices

When first learning machine learning, you typically use linear regression to predict numeric values and logistic regression to predict binary variables.

From there, you learn several other popular methods, such as nearest neighbors, support vector machines, decision trees, random forests, and boosting.

After learning the common sklearn methods, a common next step is to learn deep learning methods. Keras is a popular library for building deep learning models. This post assumes that you are comfortable with Python and have some experience with deep learning and the sklearn library. No prior exposure to Keras is expected.

Deep learning has greatly expanded the range of problems these models can predict. Most traditional classification and regression problems can be handled by deep learning models that have no “fancy” layers.

Deep Learning with no “fancy” layers

Beyond these general-purpose predictions, convolutional neural networks have become well known for image recognition. Time-series analysis can benefit greatly from Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) models. This article focuses on these last two methods, RNNs and LSTMs, and uses the Keras library to apply them to forecasting.

Data

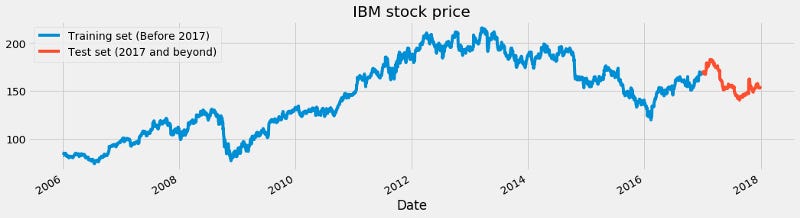

This post uses time-series data for several stocks. We train a model on stock market data from 2006 to 2016, then use that model to predict 2017 prices.

Two examples are plotted: Apple’s stock price and IBM’s stock price. Each plot uses the High of the day. The blue line shows the training data and the red line shows the test data we are trying to predict.

Training and test data for two example stocks: Apple and IBM.
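The date-based split described in this section can be sketched with pandas. This is only an illustration on synthetic data, not the dataset used in this post: the `prices` DataFrame and the random-walk values in its `High` column are assumptions made so the block runs on its own.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in for one stock's daily data (hypothetical values).
# A real dataset would be loaded from a file with a date index instead.
rng = np.random.default_rng(0)
dates = pd.bdate_range("2006-01-01", "2017-12-31")
prices = pd.DataFrame(
    {"High": 100 + rng.normal(0, 1, len(dates)).cumsum()},
    index=dates,
)

# Train on 2006 through 2016 and hold out 2017 as the test set.
train = prices.loc[:"2016", ["High"]]
test = prices.loc["2017":, ["High"]]

print(train.index.min().date(), train.index.max().date(), len(train))
print(test.index.min().date(), test.index.max().date(), len(test))
```

Splitting by date rather than at random keeps every test observation later than every training observation, which matches how a forecast would be used in practice.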

Traditional Supervised Models versus Recurrent Models

The machine learning algorithms mentioned in the introduction, as implemented in sklearn, predict values of y from the columns of X.

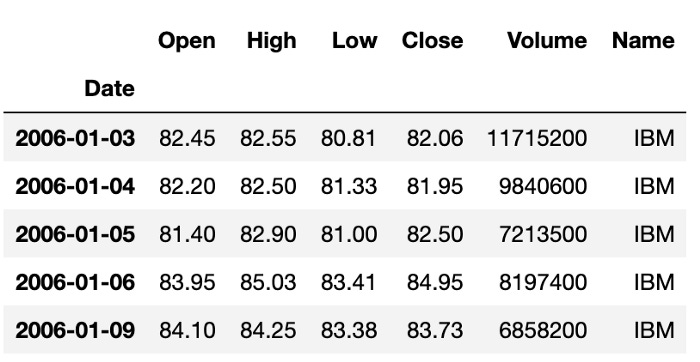

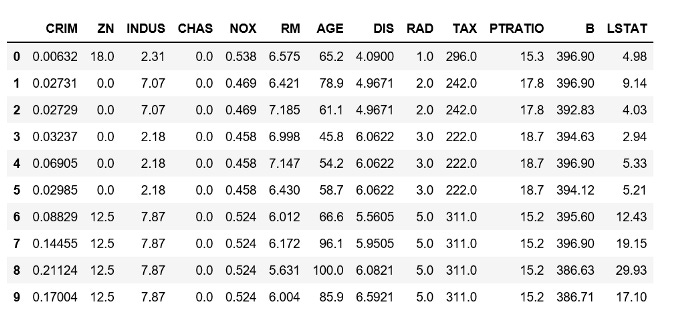

For instance, this approach can be used to predict Boston home prices. In this example, X holds the housing data and y holds the price of the corresponding home. The X data is shown below:

The home price is the y value for each row. A model is trained by pairing each row of X with its corresponding value of y. With time-series data, you usually do not have a set of X columns related to y in this way. Instead, the trend in previous y values is used to help predict future y values.

In the Boston home price data, each row represents one home, and the homes are not connected to one another. For time-series data, however, the price of the stock in the previous row is useful for predicting the price in the next row. RNNs and LSTMs can exploit this relationship between consecutive stock prices.
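One common way to feed earlier prices to a model is a sliding window: each sample holds the previous `window` values, and the target is the value that follows. The sketch below uses a hypothetical helper, `make_windows`, and a window of 3 chosen purely for illustration; this post does not specify a window length.

```python
import numpy as np

def make_windows(series, window):
    """Turn a 1-D series into (samples, window, 1) inputs and next-step targets."""
    series = np.asarray(series, dtype=float)
    X, y = [], []
    for i in range(window, len(series)):
        X.append(series[i - window:i])  # the previous `window` values
        y.append(series[i])             # the value to predict
    # Recurrent layers expect 3-D input: (samples, time steps, features).
    X = np.array(X).reshape(-1, window, 1)
    return X, np.array(y)

prices = [10.0, 11.0, 12.0, 13.0, 14.0, 15.0]
X, y = make_windows(prices, window=3)
print(X.shape, y.shape)    # (3, 3, 1) (3,)
print(X[0].ravel(), y[0])  # [10. 11. 12.] 13.0
```

Each row of `X` is now built from earlier values of the same series, so the previous prices play the role that the feature columns play in the home price example.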

In Keras, a recurrent process can be implemented using the SimpleRNN, LSTM, and GRU layers.
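As a rough sketch of how these three layers slot into a model, the block below builds the same small forecaster with each one. It assumes the Keras bundled with TensorFlow; the helper name `build_model`, the window length of 60, the 32 units, the optimizer, and the loss are illustrative assumptions rather than settings taken from this post.

```python
from tensorflow import keras
from tensorflow.keras import layers

def build_model(recurrent_layer, window=60, units=32):
    """Small one-step-ahead forecaster; sizes are illustrative, not tuned."""
    model = keras.Sequential([
        keras.Input(shape=(window, 1)),  # `window` past prices, one feature each
        recurrent_layer(units),          # SimpleRNN, LSTM, or GRU
        layers.Dense(1),                 # predicted next price
    ])
    model.compile(optimizer="adam", loss="mse")
    return model

models = {
    name: build_model(layer)
    for name, layer in [
        ("SimpleRNN", layers.SimpleRNN),
        ("LSTM", layers.LSTM),
        ("GRU", layers.GRU),
    ]
}
for name, model in models.items():
    print(name, model.output_shape)
```

Because the three layers take the same input shape, swapping one for another only requires changing the layer class, which makes it easy to compare them on the same data.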

François Chollet defines an RNN as a for loop that reuses values computed during previous iterations.
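That description can be sketched in plain NumPy. This is a toy forward pass with random, untrained weights, not the Keras implementation; the names `W`, `U`, `b`, and `state` and all of the sizes are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
timesteps, input_features, output_features = 5, 1, 4  # illustrative sizes

inputs = rng.normal(size=(timesteps, input_features))    # one value per time step
W = rng.normal(size=(output_features, input_features))   # input weights
U = rng.normal(size=(output_features, output_features))  # recurrent weights
b = np.zeros(output_features)                            # bias

state = np.zeros(output_features)  # initial state: nothing seen yet
outputs = []
for x_t in inputs:
    # The new output depends on the current input and on the previous state.
    state = np.tanh(W @ x_t + U @ state + b)
    outputs.append(state)

outputs = np.stack(outputs)
print(outputs.shape)  # (5, 4)
```

The only thing that separates this from applying a dense layer to each time step independently is the `U @ state` term, which carries information forward from one iteration to the next.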

Let's put these recurrent methods into practice.