Image Augmentation For Deep Learning With Keras

Preparing data is a necessary step when working with neural networks and deep learning models. Increasingly, more complex object recognition tasks also require data augmentation.

Here are some things you will learn after reading this post:

How to use Keras’ image augmentation API

Feature standardization: how to do it

What you need to know about ZCA whitening

Augmenting data with random rotations, shifts, and flips

Data saving for augmented images


Keras Image Augmentation API

This API is simple and powerful, just like the rest of Keras.

The ImageDataGenerator class in Keras defines the configuration for preparing and enhancing image data. Capabilities include:

Standardization at the sample level

Standardization by feature

Whitening with ZCA

Rotation, shift, shear, and flipping at random

Rearranging dimensions

Disk-based storage of augmented images

The following steps can be taken to create an augmented image generator:

from tensorflow.keras.preprocessing.image import ImageDataGenerator

datagen = ImageDataGenerator()

Rather than performing the operations on your entire image dataset in memory, the API is designed to be iterated by the deep learning model fitting process, creating augmented image data just in time. This reduces your memory overhead but adds some time cost during model training.
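The just-in-time idea can be illustrated without Keras. The sketch below is a stand-in under stated assumptions, not how ImageDataGenerator is implemented: a hypothetical `augmented_batches` generator (the name and the noise-based augmentation step are invented for illustration) yields one transformed batch at a time, so only a single augmented batch needs to exist in memory at once.

```python
import numpy as np

def augmented_batches(images, batch_size, rng):
    """Yield augmented batches lazily (hypothetical helper, not a Keras API)."""
    for start in range(0, len(images), batch_size):
        batch = images[start:start + batch_size]
        # Placeholder augmentation: add a little random noise to each pixel.
        yield batch + rng.normal(scale=0.01, size=batch.shape)

rng = np.random.default_rng(0)
images = np.zeros((10, 28, 28, 1), dtype="float32")  # toy stand-in for a dataset

# Each batch is only created when the loop asks for it.
batch_shapes = [batch.shape for batch in augmented_batches(images, 4, rng)]
print(batch_shapes)
```

The trade-off is the one described above: the augmentation work is repeated every time a batch is requested, in exchange for never holding the full augmented dataset in memory.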

If you use any of the feature-wise options (centering, standardization, or ZCA whitening), you must fit the ImageDataGenerator on your data after creating and configuring it. This calculates the statistics required to actually perform the transforms on your image data. You do this by calling fit() on the data generator and passing it your training dataset.

datagen.fit(train)

The data generator itself is an iterator, returning batches of image samples when requested. You configure the batch size and prepare the data generator by calling flow().

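X_batch, y_batch = next(datagen.flow(train, train, batch_size=32))

Finally, you can use the data generator to train a model. In older Keras releases, instead of calling fit() on your model, you call fit_generator() and pass in the data generator, the desired length of an epoch, and the total number of epochs to train on. In recent tf.keras releases, fit_generator() is deprecated and fit() accepts the generator directly, with the epoch length given as steps_per_epoch.

model.fit(datagen.flow(train, train, batch_size=32), steps_per_epoch=len(train) // 32, epochs=100)

Point of Comparison for Image Augmentation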

Let’s take a look at some examples illustrating how Keras’ image augmentation API works.

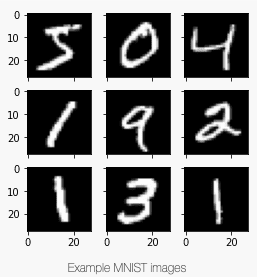

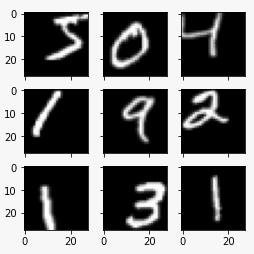

These examples use the MNIST handwritten digit recognition task. Let’s begin by looking at the first nine images from the training set.

# Plot images
from tensorflow.keras.datasets import mnist
import matplotlib.pyplot as plt

# load data
(X_train, y_train), (X_test, y_test) = mnist.load_data()

# create a grid of 3x3 images
fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))
for i in range(3):
    for j in range(3):
        ax[i][j].imshow(X_train[i*3+j], cmap=plt.get_cmap("gray"))

# show the plot
plt.show()

Running this example produces the following image, which you can use as a point of comparison for the preparation and augmentation examples below.

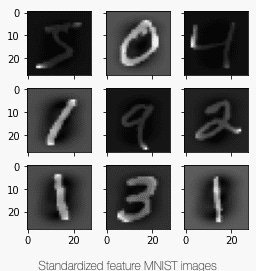

Feature Standardization

The pixel values can also be standardized across the entire dataset. A similar standardization process is often used in tabular datasets for each column, which is known as feature standardization.

You can perform feature standardization by setting the featurewise_center and featurewise_std_normalization arguments to True on the ImageDataGenerator class. Both are False by default. However, Keras' fit() function calculates a single mean and standard deviation across all pixels rather than one per pixel position, which this post treats as a bug. As a result, if you rely on fit() from the ImageDataGenerator class, you will see an image very similar to the one above:

# Standardize images across the dataset, mean=0, stdev=1
from tensorflow.keras.datasets import mnist
from tensorflow.keras.preprocessing.image import ImageDataGenerator
import matplotlib.pyplot as plt

# load data
(X_train, y_train), (X_test, y_test) = mnist.load_data()

# reshape to be [samples][height][width][channels]
X_train = X_train.reshape((X_train.shape[0], 28, 28, 1))
X_test = X_test.reshape((X_test.shape[0], 28, 28, 1))

# convert from int to float
X_train = X_train.astype('float32')
X_test = X_test.astype('float32')

# define data preparation
datagen = ImageDataGenerator(featurewise_center=True, featurewise_std_normalization=True)

# fit parameters from data
datagen.fit(X_train)

# configure batch size and retrieve one batch of images
for X_batch, y_batch in datagen.flow(X_train, y_train, batch_size=9, shuffle=False):
    print(X_batch.min(), X_batch.mean(), X_batch.max())
    # create a grid of 3x3 images
    fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))
    for i in range(3):
        for j in range(3):
            ax[i][j].imshow(X_batch[i*3+j].reshape(28,28), cmap=plt.get_cmap("gray"))
    # show the plot
    plt.show()
    break

For example, the minimum, mean, and maximum values from the batch printed above are:

-0.42407447 -0.04093817 2.8215446

The displayed image looks much like the unprocessed digits shown earlier.

As a workaround, you can calculate the feature standardization statistics manually. Each pixel position should have its own mean and standard deviation, computed across all samples and independently of the other pixels in the same sample. Replace the fit() call with your own computation:

# Standardize images across the dataset, every pixel has mean=0, stdev=1
from tensorflow.keras.datasets import mnist
from tensorflow.keras.preprocessing.image import ImageDataGenerator
import matplotlib.pyplot as plt

# load data
(X_train, y_train), (X_test, y_test) = mnist.load_data()

# reshape to be [samples][height][width][channels]
X_train = X_train.reshape((X_train.shape[0], 28, 28, 1))
X_test = X_test.reshape((X_test.shape[0], 28, 28, 1))

# convert from int to float
X_train = X_train.astype('float32')
X_test = X_test.astype('float32')

# define data preparation
datagen = ImageDataGenerator(featurewise_center=True, featurewise_std_normalization=True)

# set per-pixel statistics manually instead of calling fit()
datagen.mean = X_train.mean(axis=0)
datagen.std = X_train.std(axis=0)

# configure batch size and retrieve one batch of images
for X_batch, y_batch in datagen.flow(X_train, y_train, batch_size=9, shuffle=False):
    print(X_batch.min(), X_batch.mean(), X_batch.max())
    # create a grid of 3x3 images
    fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))
    for i in range(3):
        for j in range(3):
            ax[i][j].imshow(X_batch[i*3+j].reshape(28,28), cmap=plt.get_cmap("gray"))
    # show the plot
    plt.show()
    break

The minimum, mean, and maximum values printed now span a wider range:

-1.2742625 -0.028436039 17.46127

Running this example, you can see that the effect is different, seemingly darkening and lightening different digits.

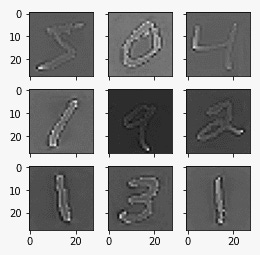
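To make the difference between the two computations concrete, here is a minimal NumPy sketch on a toy array. It is an illustration under stated assumptions, not Keras code: the random data, the 4x4 image size, and the `eps` constant are all invented for the example. It contrasts statistics pooled over every pixel with statistics kept separately for each pixel position.

```python
import numpy as np

rng = np.random.default_rng(0)
# Toy stand-in for an image dataset: 100 samples of 4x4 single-channel images.
X = rng.uniform(0, 255, size=(100, 4, 4, 1)).astype("float32")
eps = 1e-6  # assumed small constant to avoid dividing by zero

# Pooled statistics: one mean/std per channel, over all samples and pixels.
global_mean = X.mean(axis=(0, 1, 2))
global_std = X.std(axis=(0, 1, 2))
X_global = (X - global_mean) / (global_std + eps)

# Per-pixel statistics: one mean/std per pixel position, across samples only.
pixel_mean = X.mean(axis=0)
pixel_std = X.std(axis=0)
X_pixel = (X - pixel_mean) / (pixel_std + eps)

print(global_mean.shape, pixel_mean.shape)
```

Only the per-pixel version guarantees that each individual pixel position ends up with roughly zero mean and unit standard deviation across the dataset, which is what the manual workaround above aims for.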

ZCA Whitening

A whitening transform of an image is a linear algebraic operation that reduces the redundancy in the matrix of pixel values.

Less redundancy in the image is intended to better highlight the structures and features of the image to the learning algorithm.

Typically, image whitening is performed using Principal Component Analysis (PCA). More recently, an alternative called ZCA (reported in Appendix A) has shown better results: the transformed images keep all the original dimensions and, unlike with PCA, still look like their originals. Specifically, whitening converts each image into a white noise vector, that is, one whose elements have zero mean, unit standard deviation, and no correlation with one another.
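The linear algebra behind ZCA can be sketched in a few lines of NumPy. This is a minimal illustration on toy data, not the Keras implementation; the `zca_whiten` function name, the `eps` regularizer, and the mixing matrix are assumptions made for the example. The whitening matrix is built from the eigendecomposition of the covariance matrix and rotated back into the original coordinate system, which is why ZCA output keeps the original dimensions.

```python
import numpy as np

def zca_whiten(X, eps=1e-6):
    """Whiten rows of X (n_samples, n_features); returns whitened data and matrix."""
    X_centered = X - X.mean(axis=0)
    cov = X_centered.T @ X_centered / X_centered.shape[0]
    # The covariance matrix is symmetric, so its SVD gives eigenvectors U
    # and eigenvalues S.
    U, S, _ = np.linalg.svd(cov)
    # Rescale along each eigenvector, then rotate back to the original axes.
    W = U @ np.diag(1.0 / np.sqrt(S + eps)) @ U.T
    return X_centered @ W, W

rng = np.random.default_rng(0)
# Toy correlated data: independent noise mixed by a fixed matrix.
mixing = np.array([[1.0, 0.5, 0.0, 0.0],
                   [0.0, 1.0, 0.5, 0.0],
                   [0.0, 0.0, 1.0, 0.5],
                   [0.0, 0.0, 0.0, 1.0]])
X = rng.normal(size=(1000, 4)) @ mixing

X_white, W = zca_whiten(X)
cov_white = X_white.T @ X_white / X_white.shape[0]
print(np.round(cov_white, 3))
```

After whitening, the covariance matrix of the data is approximately the identity: each feature has unit variance and the features are uncorrelated. For images, each row of `X` would be one flattened image.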

The zca_whitening argument can be set to True to perform a ZCA whitening transform. However, you must first zero-center your input data separately due to the same issue as feature standardization:

# ZCA Whitening
from tensorflow.keras.datasets import mnist
from tensorflow.keras.preprocessing.image import ImageDataGenerator
import matplotlib.pyplot as plt

# load data
(X_train, y_train), (X_test, y_test) = mnist.load_data()

# reshape to be [samples][height][width][channels]
X_train = X_train.reshape((X_train.shape[0], 28, 28, 1))
X_test = X_test.reshape((X_test.shape[0], 28, 28, 1))

# convert from int to float
X_train = X_train.astype('float32')
X_test = X_test.astype('float32')

# define data preparation
datagen = ImageDataGenerator(featurewise_center=True, featurewise_std_normalization=True, zca_whitening=True)

# zero-center each pixel manually, then fit parameters from the centered data
X_mean = X_train.mean(axis=0)
datagen.fit(X_train - X_mean)

# configure batch size and retrieve one batch of images
for X_batch, y_batch in datagen.flow(X_train - X_mean, y_train, batch_size=9, shuffle=False):
    print(X_batch.min(), X_batch.mean(), X_batch.max())
    # create a grid of 3x3 images
    fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))
    for i in range(3):
        for j in range(3):
            ax[i][j].imshow(X_batch[i*3+j].reshape(28,28), cmap=plt.get_cmap("gray"))
    # show the plot
    plt.show()
    break

Running the example, you can see how the outline of each digit has been highlighted in the images.

Random Rotations

Images in your sample data may show the scene at varying rotations.

You can train your model to better handle rotations by artificially and randomly rotating images from your dataset during training.

The example below creates random rotations of the MNIST digits of up to 90 degrees by setting the rotation_range argument.

# Random Rotations

from tensorflow.keras.datasets import mnist

from tensorflow.keras.preprocessing.image import ImageDataGenerator

import matplotlib.pyplot as plt

# load data

(X_train, y_train), (X_test, y_test) = mnist.load_data()

# reshape to be [samples][width][height][channels]

X_train = X_train.reshape((X_train.shape[0], 28, 28, 1))

X_test = X_test.reshape((X_test.shape[0], 28, 28, 1))

# convert from int to float

X_train = X_train.astype('float32')

X_test = X_test.astype('float32')

# define data preparation

datagen = ImageDataGenerator(rotation_range=90)

# configure batch size and retrieve one batch of images

for X_batch, y_batch in datagen.flow(X_train, y_train, batch_size=9, shuffle=False):

# create a grid of 3x3 images

fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))

for i in range(3):

for j in range(3):

ax[i][j].imshow(X_batch[i*3+j].reshape(28,28), cmap=plt.get_cmap("gray"))

# show the plot

plt.show()

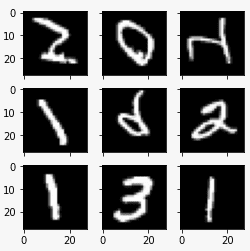

breakThe example shows how images have been rotated left and right up to a 90-degree limit. Due to MNIST’s normalized orientation of the digits, this transform is not helpful for this problem, but it might be helpful when learning from photographs where objects may have different orientations.
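For intuition about what a random rotation does to the pixel grid, here is a small NumPy-only sketch. It is a simplified illustration, not the Keras implementation: the `rotate_nearest` helper is hypothetical, it uses nearest-neighbor sampling, and it fills uncovered corners with zeros, whereas Keras offers other fill modes. The angle is drawn uniformly from the range [-rotation_range, rotation_range].

```python
import numpy as np

def rotate_nearest(image, degrees):
    """Rotate a 2D image about its center; uncovered pixels become 0."""
    h, w = image.shape
    theta = np.deg2rad(degrees)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.indices((h, w))
    # Inverse mapping: for each output pixel, find the source pixel it comes from.
    src_x = np.cos(theta) * (xs - cx) + np.sin(theta) * (ys - cy) + cx
    src_y = -np.sin(theta) * (xs - cx) + np.cos(theta) * (ys - cy) + cy
    src_x = np.rint(src_x).astype(int)
    src_y = np.rint(src_y).astype(int)
    # Keep only output pixels whose source falls inside the original image.
    valid = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    out = np.zeros_like(image)
    out[valid] = image[src_y[valid], src_x[valid]]
    return out

rng = np.random.default_rng(0)
image = np.arange(25, dtype="float32").reshape(5, 5)  # toy 5x5 "image"

rotation_range = 90
angle = rng.uniform(-rotation_range, rotation_range)
rotated = rotate_nearest(image, angle)
print(angle)
print(rotated)
```

Because a new angle is drawn for every image in every batch, the model rarely sees exactly the same rotated copy twice.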

Random Shifts

Objects in your images may not be centered in the frame. They may be off-center in a variety of different ways.

You can train your deep learning network to expect and handle off-center objects by artificially creating shifted versions of your training data. Keras supports separate horizontal and vertical random shifting of training data through the width_shift_range and height_shift_range arguments.

# Random Shifts
from tensorflow.keras.datasets import mnist
from tensorflow.keras.preprocessing.image import ImageDataGenerator
import matplotlib.pyplot as plt

# load data
(X_train, y_train), (X_test, y_test) = mnist.load_data()

# reshape to be [samples][height][width][channels]
X_train = X_train.reshape((X_train.shape[0], 28, 28, 1))
X_test = X_test.reshape((X_test.shape[0], 28, 28, 1))

# convert from int to float
X_train = X_train.astype('float32')
X_test = X_test.astype('float32')

# define data preparation
shift = 0.2
datagen = ImageDataGenerator(width_shift_range=shift, height_shift_range=shift)

# configure batch size and retrieve one batch of images
for X_batch, y_batch in datagen.flow(X_train, y_train, batch_size=9, shuffle=False):
    # create a grid of 3x3 images
    fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))
    for i in range(3):
        for j in range(3):
            ax[i][j].imshow(X_batch[i*3+j].reshape(28,28), cmap=plt.get_cmap("gray"))
    # show the plot
    plt.show()
    break

Running this example creates shifted versions of the digits. Again, this is not required for MNIST, since the handwritten digits are already centered, but you can see how it might be useful on more complex problem domains.

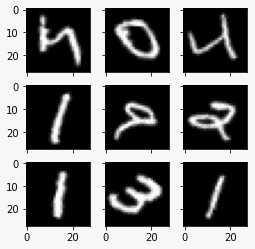
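A shift value below 1, such as the 0.2 used above, is interpreted as a fraction of the image size. The following NumPy sketch shows one way such a shift could work. It is an illustration only: the `shift_image` and `random_shift` helpers are hypothetical, offsets are rounded to whole pixels, and vacated pixels are filled with zeros, which is a simplification of what Keras does.

```python
import numpy as np

def shift_image(image, dx, dy):
    """Shift a 2D image by dx columns and dy rows, filling gaps with 0."""
    h, w = image.shape
    out = np.zeros_like(image)
    # Part of the source image that is still visible after the shift.
    src = image[max(0, -dy):h - max(0, dy), max(0, -dx):w - max(0, dx)]
    top, left = max(0, dy), max(0, dx)
    out[top:top + src.shape[0], left:left + src.shape[1]] = src
    return out

def random_shift(image, width_shift_range, height_shift_range, rng):
    """Draw random offsets as fractions of the image size, then shift."""
    h, w = image.shape
    dx = int(round(rng.uniform(-width_shift_range, width_shift_range) * w))
    dy = int(round(rng.uniform(-height_shift_range, height_shift_range) * h))
    return shift_image(image, dx, dy), (dx, dy)

rng = np.random.default_rng(0)
image = np.ones((28, 28), dtype="float32")  # toy stand-in for one digit

shifted, (dx, dy) = random_shift(image, 0.2, 0.2, rng)
print(dx, dy, shifted.sum())
```

With a range of 0.2 on a 28-pixel image, the offset in each direction is at most about 6 pixels, which matches the modest displacement visible in the shifted digits.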

Random Flips

You can also increase performance on large and complex problems by flipping images randomly in your training data.

Using the vertical_flip and horizontal_flip arguments, Keras supports random flipping along both axes.

# Random Flips
from tensorflow.keras.datasets import mnist
from tensorflow.keras.preprocessing.image import ImageDataGenerator
import matplotlib.pyplot as plt

# load data
(X_train, y_train), (X_test, y_test) = mnist.load_data()

# reshape to be [samples][height][width][channels]
X_train = X_train.reshape((X_train.shape[0], 28, 28, 1))
X_test = X_test.reshape((X_test.shape[0], 28, 28, 1))

# convert from int to float
X_train = X_train.astype('float32')
X_test = X_test.astype('float32')

# define data preparation
datagen = ImageDataGenerator(horizontal_flip=True, vertical_flip=True)

# configure batch size and retrieve one batch of images
for X_batch, y_batch in datagen.flow(X_train, y_train, batch_size=9, shuffle=False):
    # create a grid of 3x3 images
    fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))
    for i in range(3):
        for j in range(3):
            ax[i][j].imshow(X_batch[i*3+j].reshape(28,28), cmap=plt.get_cmap("gray"))
    # show the plot
    plt.show()
    break

Running this example, you can see flipped digits. Flipping digits is not useful, since they will always have the correct left and right orientation, but it may be useful for problems with photographs of objects in a scene that can have a variety of orientations.
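Conceptually, a random flip is a coin toss per axis followed by reversing the rows or columns. The NumPy sketch below illustrates this; the `random_flip` helper is hypothetical and the 50% flip probability per axis is an assumption of the sketch rather than something stated in this post.

```python
import numpy as np

def random_flip(image, horizontal, vertical, rng):
    """Flip a 2D image along each enabled axis with probability 0.5."""
    if horizontal and rng.random() < 0.5:
        image = image[:, ::-1]  # mirror left-right
    if vertical and rng.random() < 0.5:
        image = image[::-1, :]  # mirror top-bottom
    return image

rng = np.random.default_rng(0)
image = np.arange(9, dtype="float32").reshape(3, 3)  # toy 3x3 "image"

flipped = random_flip(image, horizontal=True, vertical=True, rng=rng)
unchanged = random_flip(image, horizontal=False, vertical=False, rng=rng)
print(flipped)
```

A flip only rearranges pixels, so the set of pixel values is preserved; what changes is the left-right or top-bottom orientation the model sees.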

Saving Augmented Images To File

Keras performs the data preparation and augmentation just in time.

While this is efficient in terms of memory, you may need the exact images used during training. For example, you may want to generate them once and reuse them with several different deep learning models or configurations, or use them later with a different software package.

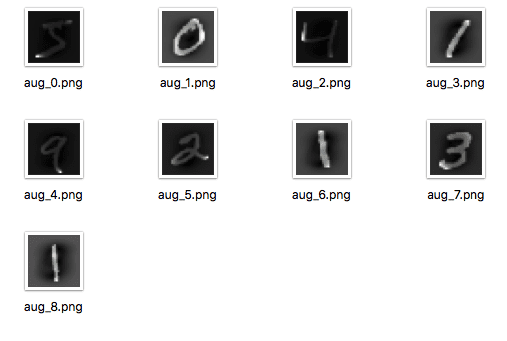

Keras allows you to save the images generated during training. The directory, filename prefix, and image file type can be passed to flow() before training. Then, during training, the generated images are written to file.

Here is an example that writes nine images with the prefix “aug” and the file type PNG to the “images” subdirectory.

# Save augmented images to file
import os
from tensorflow.keras.datasets import mnist
from tensorflow.keras.preprocessing.image import ImageDataGenerator
import matplotlib.pyplot as plt

# load data
(X_train, y_train), (X_test, y_test) = mnist.load_data()

# reshape to be [samples][height][width][channels]
X_train = X_train.reshape((X_train.shape[0], 28, 28, 1))
X_test = X_test.reshape((X_test.shape[0], 28, 28, 1))

# convert from int to float
X_train = X_train.astype('float32')
X_test = X_test.astype('float32')

# define data preparation
datagen = ImageDataGenerator(horizontal_flip=True, vertical_flip=True)

# make sure the output directory exists (harmless if it already does)
os.makedirs('images', exist_ok=True)

# configure batch size and retrieve one batch of images
for X_batch, y_batch in datagen.flow(X_train, y_train, batch_size=9, shuffle=False,
                                     save_to_dir='images', save_prefix='aug', save_format='png'):
    # create a grid of 3x3 images
    fig, ax = plt.subplots(3, 3, sharex=True, sharey=True, figsize=(4,4))
    for i in range(3):
        for j in range(3):
            ax[i][j].imshow(X_batch[i*3+j].reshape(28,28), cmap=plt.get_cmap("gray"))
    # show the plot
    plt.show()
    break

Running the example, you can see that images are only written when they are generated.

Tips for Augmenting Image Data With Keras

Image data is unusual in that you can look at both the data and transformed copies of it and quickly get an idea of how the model may perceive it.

Here are some tips on how to prepare and augment image data for deep learning.

Review Dataset. Spend some time reviewing your dataset in detail. Check out the images. Consider image preparations and augmentations that can improve your model’s training, such as handling different shifts, rotations, or flips of objects in the scene.

Review Augmentations. After the augmentation is complete, review sample images. Understanding image transformations intellectually is one thing; examining examples is quite another. Make sure you review images with both individual augmentations and the full set of augmentations you plan to use. There may be ways to simplify or further enhance the training process for your model.

Evaluate a Suite of Transforms. A variety of image data preparation and augmentation schemes should be tried. Data preparation schemes you didn’t expect to be beneficial can often surprise you with their results.
