LSTM vs. ANN vs. CNN: A Deep Dive into Stock Price Forecasting

Neural networks are machine learning models commonly used for tasks such as pattern recognition, prediction, and decision making.

This section walks through the implementation.

Colab check, Drive mount, directory change

    try:
        from google.colab import drive
        drive.mount('/content/drive')
        IN_COLAB = True
        import os.path
        # Change the directory to yours if using Colab
        os.chdir('/content/drive/MyDrive/MachineLearning/Final Project/stock_prediction/')
        !pip install nose
    except Exception:
        # Not running in Colab, or the Drive path is unavailable
        IN_COLAB = False

    if IN_COLAB:
        print("We're running Colab")

This cell tries to import google.colab and mount Google Drive, then changes into the project directory on Drive and installs the nose package. If every step succeeds, IN_COLAB stays True and the cell prints “We’re running Colab.” If any step fails, for example because the notebook is running outside Colab, IN_COLAB is set to False. This is a common way to make one notebook work both in Colab and locally.

This section explains how to load the helper module.

Download the source code from the link at the end of this article.

Activate interactive shell and autoreload, import helper module.

    from IPython.core.interactiveshell import InteractiveShell
    InteractiveShell.ast_node_interactivity = "all"

    %reload_ext autoreload
    %autoreload 1

    import helper
    %aimport helper

    helper = helper.Helper()

This cell configures the notebook to display the result of every expression in a cell, not just the last one, and enables the autoreload extension so that edits to the helper module are picked up without restarting the notebook. With %autoreload 1, only modules registered through %aimport are reloaded. The last line replaces the name helper with an instance of the module’s Helper class.

This section lists the libraries the project requires.

Import necessary libraries and modules

    import math
    import os

    import matplotlib.pyplot as plt
    import numpy as np
    import pandas as pd
    import seaborn as sns
    import sklearn
    import tensorflow as tf
    %matplotlib inline

    from sklearn.decomposition import PCA
    from sklearn.metrics import (
        accuracy_score, recall_score, precision_score, f1_score, make_scorer,
        precision_recall_curve, PrecisionRecallDisplay, confusion_matrix,
        classification_report, roc_curve, auc,
        mean_squared_error, mean_absolute_error, r2_score,
        mean_absolute_percentage_error,
    )
    from sklearn.model_selection import GridSearchCV, TimeSeriesSplit, cross_val_score
    from sklearn.pipeline import Pipeline
    from sklearn.preprocessing import StandardScaler, MinMaxScaler

    from tensorflow.keras.callbacks import EarlyStopping, ModelCheckpoint
    from tensorflow.keras.layers import (
        Dense, LSTM, Dropout, Flatten,
        Conv1D, Conv2D, MaxPooling1D, MaxPooling2D,
        AveragePooling1D, AveragePooling2D,
    )
    from tensorflow.keras.models import Sequential
    from tensorflow.keras.regularizers import l1, l2

These imports cover the whole workflow. NumPy and pandas handle the data, Matplotlib and seaborn produce the plots, and scikit-learn supplies time-series splitting, grid search, scaling, and evaluation metrics. From TensorFlow’s Keras API, the code imports the Sequential model, the Dense, LSTM, Dropout, Flatten, convolution, and pooling layers used to build the networks, the EarlyStopping and ModelCheckpoint callbacks, and the l1 and l2 regularizers.

Load stock and index data from CSV files

    ticker = "AAPL"
    index_ticker = "SPY"
    dateAttr = "Dt"

    # Training data
    DATA_DIR = os.path.join(".", "Data", "train")
    data_train = pd.read_csv(os.path.join(DATA_DIR, f"{ticker}.csv"), index_col=dateAttr)
    index_data_train = pd.read_csv(os.path.join(DATA_DIR, f"{index_ticker}.csv"), index_col=dateAttr)

    # Held-out sample data
    DATA_DIR = os.path.join(".", "Data", "sample")
    data_validate = pd.read_csv(os.path.join(DATA_DIR, f"{ticker}.csv"), index_col=dateAttr)
    index_data_validate = pd.read_csv(os.path.join(DATA_DIR, f"{index_ticker}.csv"), index_col=dateAttr)

This code sets the stock ticker (“AAPL”), the index ticker (“SPY”), and the name of the date column (“Dt”), then reads four CSV files with the date column as the index. The AAPL and SPY files under Data/train are used for training, and the files with the same names under Data/sample are held out for evaluation.
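The loading code keeps the date index as plain strings. If real dates are wanted on the time axis, one option is to let pandas parse them while reading. The sketch below is a minimal illustration that assumes a CSV with the same “Dt” column; the file content is made up for the example and is not the article's data.

```python
import io

import pandas as pd

# Hypothetical CSV content with a "Dt" date column (illustrative values only).
csv_text = "Dt,Close,Adj Close\n2000-01-03,1.0,0.9\n2000-01-04,2.0,1.8\n"

# parse_dates=True asks pandas to parse the index column as dates.
prices = pd.read_csv(io.StringIO(csv_text), index_col="Dt", parse_dates=True)

print(type(prices.index).__name__)
print(prices)
```

With a parsed index, the line plots of the price series show calendar dates instead of string labels.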

I will use SPY data to predict AAPL returns because I discovered through feature importance calculations that SPY returns have a significant impact on AAPL returns.

Plot closing prices of Apple (AAPL) and S&P 500 (SPY)

    sns.set()

    # .copy() avoids pandas chained-assignment warnings when adding columns
    apple = data_train.loc[:, ['Close', 'Adj Close']].copy()
    apple['lag1'] = apple['Close'].shift(periods=1)
    apple['returns'] = (apple['Close'] - apple['lag1']) / apple['lag1'] * 100

    spy = index_data_train.loc[:, ['Close', 'Adj Close']].copy()
    spy['lag1'] = spy['Close'].shift(periods=1)
    spy['returns'] = (spy['Close'] - spy['lag1']) / spy['lag1'] * 100

    plt.figure(figsize=(15, 8))
    apple['Close'].plot(label='Apple')
    spy['Close'].plot(label='SPY')
    plt.title('AAPL vs SPY')
    plt.xlabel('Time')
    plt.legend()

This code selects the ‘Close’ and ‘Adj Close’ columns for Apple and SPY from the training data, adds a one-day lag of the closing price, and computes the daily percentage return from it. It then plots only the closing prices of both assets on a single 15 by 8 inch figure titled ‘AAPL vs SPY’, with the x-axis labeled ‘Time’ and a legend identifying each line. The returns computed here are plotted in the next step.

When comparing the prices of AAPL and SPY, it is not immediately clear if there is a direct relationship between the returns of SPY and AAPL. Therefore, additional analysis is required to determine if there is any correlation between the two.

Plot returns of Apple (AAPL) and S&P 500 (SPY).

    plt.figure(figsize=(15, 8))
    apple['returns'].plot(label='AAPL')
    spy['returns'].plot(label='SPY')
    plt.title('AAPL vs SPY returns')
    plt.xlabel('Time')
    plt.legend()

This code draws a line plot of the daily percentage returns of Apple (‘AAPL’) and the S&P 500 ETF (‘SPY’) on one 15 by 8 inch figure titled ‘AAPL vs SPY returns’. The x-axis shows time, the y-axis shows the returns, and the legend identifies each series.

The analysis showed a correlation between AAPL and SPY returns. Because of this, I will be adding SPY close price and percentage returns to the features used to predict AAPL returns.
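One way to put a number on this relationship is the Pearson correlation between the two return series. The sketch below uses short made-up price series (not the article's data) purely to show the calculation; with the frames built earlier, `apple['returns'].corr(spy['returns'])` would be the equivalent call.

```python
import pandas as pd

# Hypothetical closing prices, for illustration only (not the article's data).
aapl_close = pd.Series([100.0, 102.0, 101.0, 105.0, 107.0, 106.0])
spy_close = pd.Series([400.0, 404.0, 402.0, 410.0, 413.0, 412.0])

# Daily percentage returns, using the same definition as the article.
aapl_ret = aapl_close.pct_change() * 100
spy_ret = spy_close.pct_change() * 100

# Pearson correlation; the first row (NaN returns) is skipped automatically.
corr = aapl_ret.corr(spy_ret)
print(f"Correlation of daily returns: {corr:.3f}")
```

A value close to 1 means the two series tend to move up and down together; a value near 0 means there is little linear relationship.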

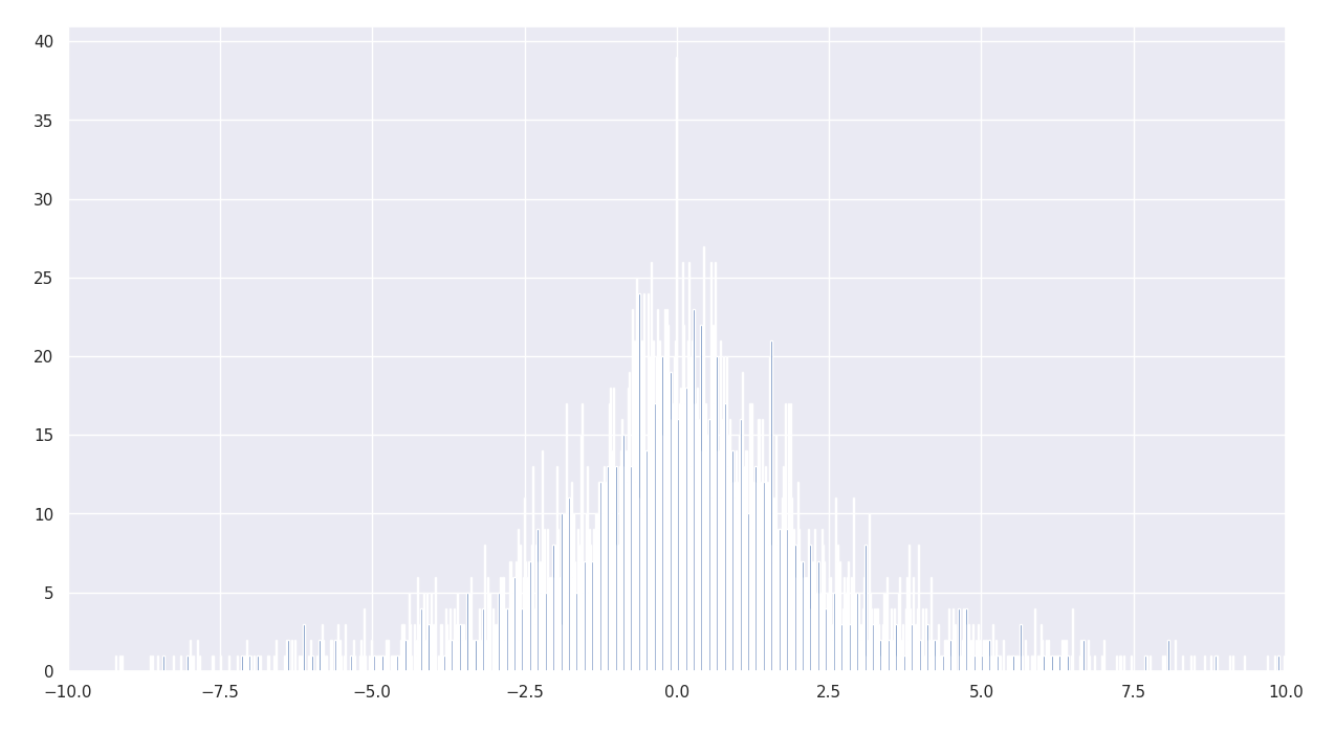

Histogram of Apple (AAPL) returns with limited x-axis range

    plt.figure(figsize=(15, 8))
    # Drop the first row, whose return is NaN because it has no previous close
    plt.hist(apple['returns'].dropna(), bins=5000)
    plt.xlim([-10, 10])
    plt.show()

This code plots a histogram of Apple’s daily returns on a 15 by 8 inch figure. The returns are divided into 5000 bins, and the x-axis is limited to the range from -10 to 10 so that the bulk of the distribution is visible. plt.show() displays the figure.

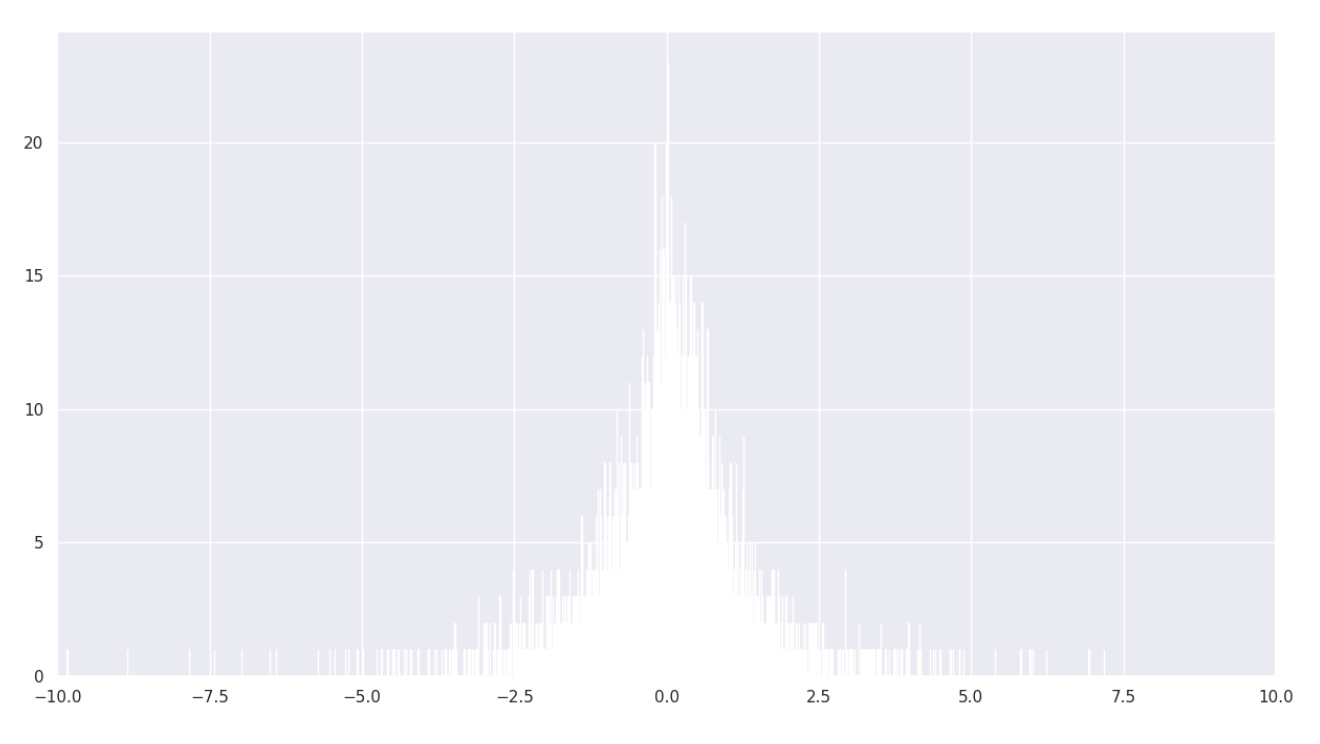

Histogram of S&P 500 (SPY) returns with limited x-axis range

    plt.figure(figsize=(15, 8))
    # Drop the first row, whose return is NaN because it has no previous close
    plt.hist(spy['returns'].dropna(), bins=5000)
    plt.xlim([-10, 10])
    plt.show()

This code plots the same kind of histogram for the SPY daily returns: 5000 equal-width bins, with the x-axis limited to the range from -10 to 10.

Since both return distributions appear to be approximately normal, we can proceed with our analysis.
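The visual check can be complemented with two summary numbers: skewness and excess kurtosis, both of which are close to 0 for a normal distribution. The sketch below is a minimal illustration on synthetic normal draws that stand in for the return series (an assumption made for the example); on the real data the same calls would be `apple['returns'].skew()` and `apple['returns'].kurt()`.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in for a return series (assumption: not the article's data).
rng = np.random.default_rng(0)
returns = pd.Series(rng.normal(loc=0.0, scale=1.5, size=5000))

skewness = returns.skew()         # about 0 for a symmetric distribution
excess_kurtosis = returns.kurt()  # about 0 for a normal distribution

print(f"skewness: {skewness:.3f}")
print(f"excess kurtosis: {excess_kurtosis:.3f}")
```

Daily stock returns often have heavier tails than a normal distribution, which would show up here as a clearly positive excess kurtosis.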

Comparison of adjusted close prices between Apple (AAPL) and S&P 500 (SPY)

    plt.figure(figsize=(15, 8))
    apple['Adj Close'].plot(label='AAPL')
    spy['Adj Close'].plot(label='SPY')
    plt.title('AAPL vs SPY Adjusted Close')
    plt.xlabel('Time')
    plt.legend()

This code plots the adjusted close prices of Apple (AAPL) and the S&P 500 ETF (SPY) on the same 15 by 8 inch figure, titled “AAPL vs SPY Adjusted Close,” with the x-axis labeled “Time” and a legend identifying each asset.

Data preprocessing refers to the initial step in data analysis where raw data is cleaned, transformed, and prepared for further analysis. Tasks commonly involved in data preprocessing include handling missing data, removing duplicates, standardizing data formats, and scaling data for better performance in machine learning models. This process ensures that the data is in a usable format before conducting any analysis or modeling.

Data preprocessing and feature engineering.

    def Preprocess(data, idx):
        # Note: this function modifies `data` in place and also returns it.
        if data.isna().any().any():
            data.dropna(inplace=True)

        # Closing price lagged by 1 to 4 periods
        for lag in range(1, 5):
            data[f'lag{lag}'] = data['Close'].shift(periods=lag)

        # Daily percentage returns, then the returns lagged by 1 to 5 periods
        data['returns'] = (data['Close'] - data['lag1']) / data['lag1'] * 100
        for lag in range(1, 6):
            data[f'Lagged Returns{lag}'] = data['returns'].shift(periods=lag)

        # Simple moving averages of the closing price
        for window in (5, 30, 45, 60):
            data[f'sma_{window}'] = data['Close'].rolling(window).mean()

        # SPY closing price (aligned on the date index) and SPY percentage returns
        data['SPY Close'] = idx['Close']
        data['SPY returns'] = data['SPY Close'].pct_change(1) * 100

        # Remove the rows left incomplete by the shifts and rolling windows
        data.dropna(inplace=True)
        return data

The Preprocess function builds the feature set from the raw price data. First, it drops any rows with missing values. It then adds the closing price lagged by 1 to 4 periods, computes the daily percentage return from the closing price, and adds that return lagged by 1 to 5 periods. Next, it adds simple moving averages (SMA) of the closing price over 5, 30, 45, and 60 periods. Finally, it adds the SPY closing price and the SPY percentage returns as features, drops the rows left incomplete by the shifts and rolling windows, and returns the result. Note that the function modifies the DataFrame passed in as data rather than working on a copy.

To keep things simple, I calculated returns from the closing prices and used closing-price lags from 1 to 4. I excluded price lags of 5 and higher because they did not significantly improve the prediction of the target variable. The Preprocess function computes the features needed for prediction from the AAPL and SPY data. After testing multiple combinations, I found that the selected lag values worked best for this problem.
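Preprocess computes the AAPL returns from an explicit lag column and the SPY returns with pct_change. Both express the same formula, (close_t - close_{t-1}) / close_{t-1} * 100. The short sketch below checks this on a made-up price series (the values are illustrative only).

```python
import numpy as np
import pandas as pd

# Hypothetical closing prices, chosen so the returns are easy to verify by hand.
close = pd.Series([100.0, 110.0, 99.0, 108.9])

# Manual formula, as used for the AAPL returns.
lag1 = close.shift(periods=1)
manual_returns = (close - lag1) / lag1 * 100

# pct_change, as used for the SPY returns.
pct_returns = close.pct_change(1) * 100

print(pd.DataFrame({"manual": manual_returns, "pct_change": pct_returns}))
```

In both columns the first row is NaN because there is no previous close, which is one reason Preprocess ends with a dropna call.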

Invokes data preprocessing function.

    data2 = Preprocess(data_train, index_data_train)
    data2

This cell calls Preprocess with the AAPL training data (data_train) and the SPY index data (index_data_train), stores the result in data2, and displays it. Because Preprocess works in place, data2 and data_train refer to the same DataFrame.

Generates statistical summary of data

    data2.describe()

data2.describe() returns summary statistics for each numeric column of data2: the count, mean, standard deviation, minimum, maximum, and the 25th, 50th, and 75th percentiles. It gives a quick overview of each feature’s distribution.

Visualizes data correlation heatmap

    correlated_matrix = data2.corr()

    plt.figure(figsize=(15, 8))
    sns.heatmap(correlated_matrix, cmap='coolwarm')
    plt.show()

This code computes the pairwise correlation matrix of the columns in data2 and draws it as a seaborn heatmap. With the ‘coolwarm’ colormap, the highest correlations in the matrix appear in red and the lowest in blue, with neutral tones in between, so strongly related pairs of features stand out at a glance.

The heatmap above displays the correlation between the features in the dataset and their relationship with the returns column. It is important to note that this analysis is based on unscaled data.

This section defines the helper functions used to split, normalize, and plot the data.

Splits data for time-series analysis.

    def train_test_split(df):
        tss = TimeSeriesSplit(n_splits=5)
        X = df.drop(labels=['returns'], axis=1)
        y = df['returns']

        # Each iteration overwrites the previous one, so only the last split is kept
        for train_index, test_index in tss.split(X):
            X_train, X_test = X.iloc[train_index, :], X.iloc[test_index, :]
            y_train, y_test = y.iloc[train_index], y.iloc[test_index]

        return X_train, y_train, X_test, y_test

This function splits a DataFrame into training and test sets while preserving time order. It separates the features X (every column except ‘returns’) from the target y (the ‘returns’ column) and creates a TimeSeriesSplit with 5 splits. The loop runs through all five splits, but each iteration overwrites the previous one, so the function returns only the last split: the largest training set and the most recent test block, as X_train, y_train, X_test, and y_test.

When performing time series analysis, it’s common to use TimeSeriesSplit instead of a regular train-test split from sklearn. This is because random splits of data can be less effective for time series data, where the order of data points matters. TimeSeriesSplit helps to split the data in a way that maintains the sequential nature of time series data, ensuring more accurate evaluation of models.
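To make the splitting behavior concrete, the sketch below runs TimeSeriesSplit on 12 toy observations (a stand-in for the real feature matrix) and prints the row indices of every fold. In each fold the training rows come strictly before the test rows.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# 12 time-ordered toy observations (illustrative stand-in for the features).
X = np.arange(12).reshape(-1, 1)
tss = TimeSeriesSplit(n_splits=5)

folds = list(tss.split(X))
for fold, (train_index, test_index) in enumerate(folds, start=1):
    print(f"fold {fold}: train={train_index.tolist()} test={test_index.tolist()}")

# The last fold has the largest training set and the most recent test block.
last_train, last_test = folds[-1]
```

With these 12 rows, the last fold trains on the first 10 rows and tests on the final 2, which corresponds to the split that the article's train_test_split function returns.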

Applies rolling window normalization

    def Normalization(df):
        window_size = 30
        scaler = StandardScaler()

        # Write results to a float copy so every window is fit on raw values only.
        # Writing scaled rows back into the array being read would mix scaled and
        # unscaled values inside later windows.
        scaled = df.astype(float)

        for i in range(df.shape[0]):
            start_index = max(0, i - window_size)
            end_index = min(i + 1, df.shape[0])
            window = df[start_index:end_index]
            scaler.fit(window)
            scaled[i:i+1] = scaler.transform(df[i:i+1])

        return scaled, scaler

This code defines a function named Normalization that standardizes a NumPy array row by row with a StandardScaler fit on a rolling window. It sets the window size to 30 and creates a StandardScaler. For each row i, it fits the scaler on that row plus up to 30 preceding rows and then transforms only row i, so no future values are used. The function returns the scaled array together with the scaler, which at that point holds the statistics of the final window only.

I employed window scaling to adjust the data over a 30-day timeframe in order to prevent look-ahead bias and consider factors like inflation and time value. This period of 30 days generally produced more effective outcomes. I opted for Standard Scaling as opposed to min-max scaling due to its resistance to outliers, capability to maintain the distribution shape, and better performance for neural networks that factor in distance.
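For a single column, the rolling standardization described above can also be sketched with pandas rolling windows. This is an illustrative alternative, not the article's implementation: the function name `rolling_zscore` and its `lookback` parameter are hypothetical, and the price series is made up. It uses the current value plus up to 30 earlier values and the population standard deviation (ddof=0), which is what StandardScaler uses.

```python
import numpy as np
import pandas as pd


def rolling_zscore(series: pd.Series, lookback: int = 30) -> pd.Series:
    """Standardize each value using itself plus up to `lookback` earlier values."""
    window = series.rolling(window=lookback + 1, min_periods=1)
    mean = window.mean()
    # A zero (or undefined) standard deviation is replaced by 1 to avoid dividing by zero.
    std = window.std(ddof=0).fillna(0.0).replace(0.0, 1.0)
    return (series - mean) / std


# Hypothetical upward-trending prices (not the article's data).
prices = pd.Series(np.linspace(100.0, 160.0, 61))
scaled = rolling_zscore(prices)

print(scaled.head())
print(scaled.tail())
```

Because only current and past values enter each window, a steadily rising price is mapped to a roughly constant positive score instead of an ever-growing number, which is the motivation for scaling over a window rather than over the full history.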

Normalize and split data for training.

    def Normalization_Split_train(df, df1):
        data_preprocessed = Preprocess(df, df1)
        X_train_processed, y_train_processed, X_validate_processed, y_validate_processed = train_test_split(data_preprocessed)

        # StandardScaler expects 2-D input, so the targets are reshaped to one column
        X_train_processed, scaler_xtrain = Normalization(X_train_processed.to_numpy())
        y_train_processed, scaler_ytrain = Normalization(y_train_processed.to_numpy().reshape(-1, 1))
        X_validate_processed, scaler_xval = Normalization(X_validate_processed.to_numpy())
        y_validate_processed, scaler_yval = Normalization(y_validate_processed.to_numpy().reshape(-1, 1))

        return (X_train_processed, y_train_processed, X_validate_processed, y_validate_processed,
                scaler_xtrain, scaler_ytrain, scaler_xval, scaler_yval)

Normalization_Split_train takes the stock DataFrame (df) and the index DataFrame (df1). It runs Preprocess on them, splits the result into training and validation sets with train_test_split, and applies Normalization separately to the training features, training target, validation features, and validation target. The targets are reshaped into single-column arrays because StandardScaler expects 2-D input. The function returns the four normalized arrays followed by the four scalers.

To prevent look-ahead bias, I split the data into training and validation sets using TimeSeriesSplit with n_splits=5 and then scaled each set separately. I also separated the returns as the y variable, the target that the neural networks will learn to predict.

Normalize and prepare test dataset

    def Normalization_Split_test(df, df1):
        data_preprocessed = Preprocess(df, df1)
        X_test_processed = data_preprocessed.drop(labels=['returns'], axis=1)
        y_test_processed = data_preprocessed['returns']

        X_test_processed, scaler_xtest = Normalization(X_test_processed.to_numpy())
        y_test_processed, scaler_ytest = Normalization(y_test_processed.to_numpy().reshape(-1, 1))

        return X_test_processed, y_test_processed, scaler_xtest, scaler_ytest

Normalization_Split_test takes the same two inputs, the stock DataFrame (df) and the index DataFrame (df1), but does not split the data. It runs Preprocess, separates the feature columns from the target column (‘returns’), and normalizes each with the Normalization function. It returns the normalized features, the normalized target, and the scaler produced for each (scaler_xtest and scaler_ytest).

Plot actual vs. predicted stock returns

    def plot(y_validate_processed, predicted_stock_price):
        plt.figure(figsize=(15, 10))
        plt.plot(y_validate_processed, color='red', label='Actual returns')
        plt.plot(predicted_stock_price, color='blue', label='Predicted returns')
        plt.title('Apple Stock Price Predictor')
        plt.xlabel('Time')
        plt.ylabel('Apple Stock Price')
        plt.legend()

The plot function takes the actual target values (y_validate_processed) and a model’s predictions (predicted_stock_price) and draws both on a 15 by 10 inch figure, with the actual returns in red and the predicted returns in blue. The title and y-axis label refer to the stock price, but the plotted values are the normalized returns. The x-axis is labeled ‘Time’, and a legend distinguishes the two lines.

The function is used to compare actual and predicted returns for all the models. It is a key step in error analysis for evaluating how well a model performs.
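A visual comparison is usually paired with numeric error metrics. The sketch below computes a few of the regression metrics imported earlier on two short made-up arrays; the values are illustrative and are not results from any model in this article.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score

# Hypothetical actual and predicted returns (illustrative values only).
y_true = np.array([0.5, -1.0, 0.2, 1.5, -0.3])
y_pred = np.array([0.4, -0.8, 0.0, 1.0, -0.1])

mse = mean_squared_error(y_true, y_pred)   # mean of squared errors
rmse = np.sqrt(mse)                        # same units as the target
mae = mean_absolute_error(y_true, y_pred)  # mean of absolute errors
r2 = r2_score(y_true, y_pred)              # 1.0 would be a perfect fit

print(f"MSE: {mse:.4f}  RMSE: {rmse:.4f}  MAE: {mae:.4f}  R2: {r2:.4f}")
```

Reporting the same metrics for every model makes the comparison between the ANN, CNN, and LSTM less dependent on how a single plot happens to look.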

Split and normalize training data

    (X_train_processed, y_train_processed,
     X_validate_processed, y_validate_processed,
     scaler_xtrain, scaler_ytrain,
     scaler_xval, scaler_yval) = Normalization_Split_train(data_train, index_data_train)

This call passes the AAPL training data and the SPY training data to Normalization_Split_train. It returns the normalized training and validation sets (X_train_processed, y_train_processed, X_validate_processed, y_validate_processed) along with the scalers used for each of them (scaler_xtrain, scaler_ytrain, scaler_xval, scaler_yval).

Split and normalize test data

    X_test_processed, y_test_processed, scaler_xtest, scaler_ytest = Normalization_Split_test(data_validate, index_data_validate)

This call prepares the test set from the held-out sample data. It returns four values: X_test_processed (normalized features), y_test_processed (normalized target), scaler_xtest (the scaler used for the features), and scaler_ytest (the scaler used for the target).

Feature importance refers to the process of identifying which features or variables in a dataset have the most influence on the target outcome. By determining the importance of different features, we can gain insights into which factors are driving the predictions made by a model. This analysis can help in understanding the underlying relationships in the data and in making informed decisions about feature selection and model improvement.

Train model, display feature importances

    from sklearn.ensemble import RandomForestRegressor
    from matplotlib import pyplot

    X, y = X_train_processed, y_train_processed

    model = RandomForestRegressor()
    model.fit(X, y.ravel())  # flatten the single-column target to 1-D
    importances = model.feature_importances_

    # data_train already holds the engineered columns because Preprocess modified it in place
    feature_names = data_train.drop(labels=['returns'], axis=1).columns
    for name, importance in zip(feature_names, importances):
        print(f'{name}: {importance:.4f}')

    pyplot.bar(range(len(importances)), importances)
    pyplot.show()

This code trains a RandomForestRegressor on the normalized training features and target, then reads the importance of each feature from the model’s feature_importances_ attribute. It takes the feature names from the preprocessed training data (every column except ‘returns’), prints each name with its importance score, and shows the scores as a bar plot.

A Random Forest regressor calculates the importance of a feature by evaluating how much it decreases impurity or variance in decision trees when used to split the data. This method can show both linear and non-linear relationships between features and the target variable. In the case of this analysis, the Adjusted Close price, Lag-1 to Lag-5, and SPY returns features display higher importance compared to other variables.
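The behavior of impurity-based importance is easier to see on a controlled example. The sketch below is a toy illustration on synthetic data (an assumption for the example, not the article's dataset): the target depends on one feature and is independent of the other, so nearly all of the importance should go to the first.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
n_samples = 500

# One feature drives the target, the other is pure noise (synthetic data).
informative = rng.normal(size=n_samples)
noise = rng.normal(size=n_samples)
X_demo = np.column_stack([informative, noise])
y_demo = 3.0 * informative + rng.normal(scale=0.1, size=n_samples)

forest = RandomForestRegressor(n_estimators=50, random_state=0)
forest.fit(X_demo, y_demo)

# Importances are normalized so that they sum to 1.
importances_demo = forest.feature_importances_
for name, importance in zip(["informative", "noise"], importances_demo):
    print(f"{name}: {importance:.4f}")
```

Because the scores sum to 1, they are best read as relative rankings among the features supplied rather than as absolute measures of predictive power.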

Compute, display mutual information scores

    from sklearn.feature_selection import mutual_info_regression

    mi_scores = mutual_info_regression(X, y.ravel())  # 1-D target expected

    feature_names = data_train.drop(labels=['returns'], axis=1).columns
    for name, score in zip(feature_names, mi_scores):
        print(f'{name}: {score:.4f}')

    pyplot.bar(range(len(mi_scores)), mi_scores)
    pyplot.show()

This code uses mutual_info_regression to estimate how informative each feature is about the target. It computes the mutual information score between every column of X and the target y, takes the feature names from the preprocessed training data (every column except ‘returns’), prints each name with its score, and shows the scores as a bar plot.

Mutual information quantifies how much information a feature provides about the target variable. It ranks highly any feature that is strongly associated with the target, even if the relationship is complex or non-linear. In this case, SPY returns are the most important feature by this measure, highlighting their strong relationship with the target variable.
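The point about non-linear relationships can be illustrated with a toy example on synthetic data (an assumption for the example, not the article's dataset). Here the target is the square of one feature, so the linear correlation is close to zero while the mutual information is clearly positive; a second, unrelated feature serves as a baseline.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_regression

rng = np.random.default_rng(0)

# The target depends on x_nonlinear through a symmetric, non-linear function.
x_nonlinear = rng.uniform(-1.0, 1.0, size=1000)
x_noise = rng.uniform(-1.0, 1.0, size=1000)
y_demo = x_nonlinear ** 2 + rng.normal(scale=0.01, size=1000)

X_demo = np.column_stack([x_nonlinear, x_noise])

# Pearson correlation misses the relationship; mutual information does not.
pearson = np.corrcoef(x_nonlinear, y_demo)[0, 1]
mi_demo = mutual_info_regression(X_demo, y_demo, random_state=0)

print(f"Pearson correlation (x_nonlinear, y): {pearson:.3f}")
print(f"Mutual information: x_nonlinear={mi_demo[0]:.3f}, x_noise={mi_demo[1]:.3f}")
```

This is why mutual information is a useful complement to the correlation heatmap: a feature can look unimportant there and still carry information about the target.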