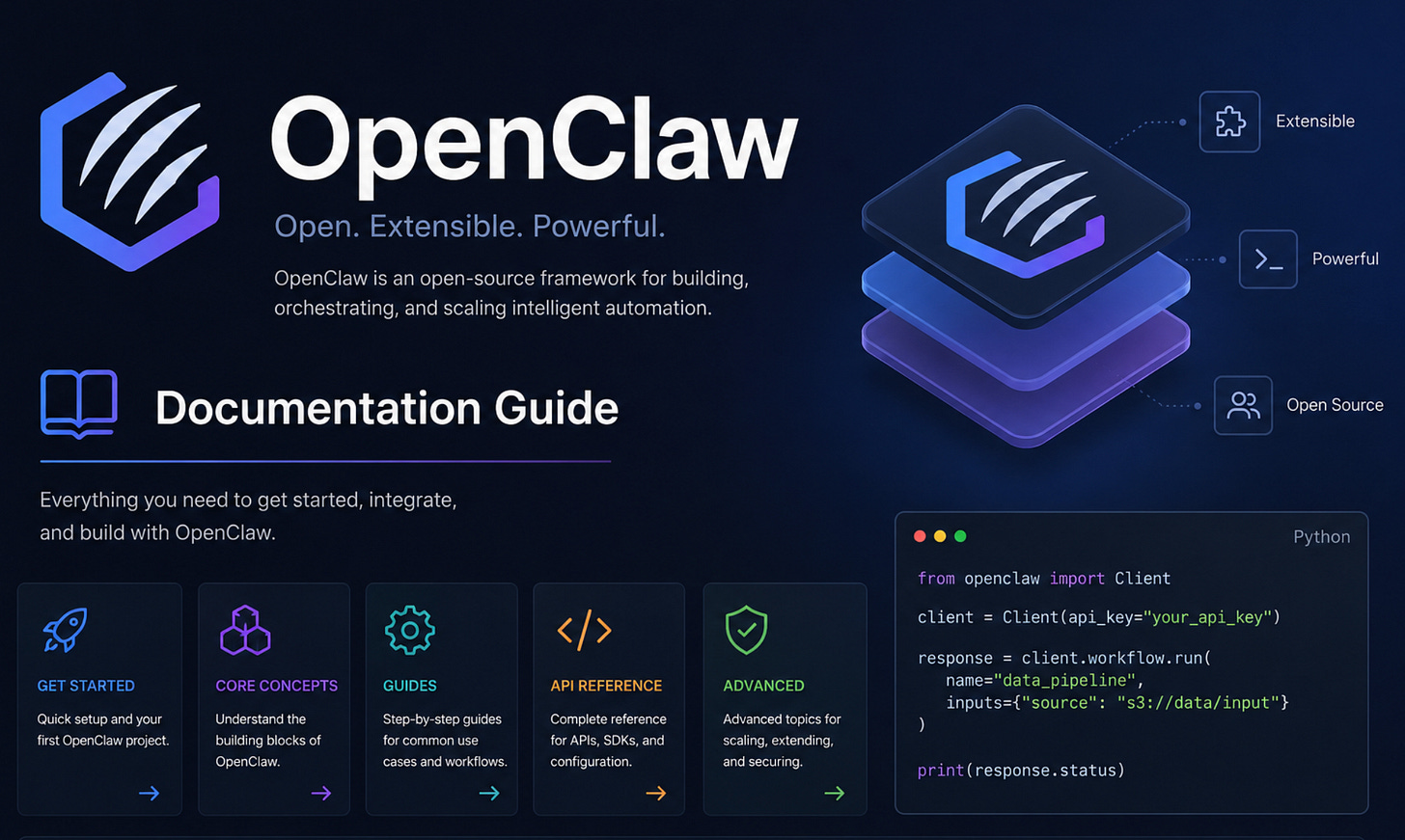

OpenClaw CLI Commands: The Complete Operator Reference

A deep-dive into every OpenClaw command — agents, sessions, inference, plugins, skills, channels, memory, gateway, configuration, and automation-ready JSON workflows.


Introduction: Agents, Sessions, and Gateway vs Embedded Execution

Agents are the Gateway-backed brains that make decisions and call tools; sessions are their conversation state, stored as append-only transcripts plus a mutable index (compaction and pruning are covered later). When you send a turn to an agent from the CLI, you first choose which session or agent receives it and whether the run goes through the Gateway process or runs locally in an embedded runtime.

By default openclaw agent sends the turn through the Gateway daemon. Use --local to force the embedded execution path (useful for quick experiments or when the Gateway is unavailable). Note that --local still preloads the plugin registry, so plugins that register tools, providers, or channels are available to embedded runs.

Routing selectors

You must pass at least one selector to choose the target for the run: --to, --session-id, or --agent. Each selector affects routing differently:

--to <address> (phone number, Matrix ID, Slack user/channel): resolves to a session via directory bindings and delivery targets; good for channel-focused workflows.

--session-id <id>: targets a specific session by its persistent id (useful for replaying or debugging).

--agent <id>: targets the named agent workspace; the Gateway will open or reuse a session according to agent defaults.

Delivery vs routing

--deliver instructs OpenClaw to send the agent’s reply back to the selected channel/account. Reply routing fields (--reply-channel, --reply-to, --reply-account) affect the delivery destination and formatting but do not change which session receives the turn. To route a run to one session while delivering the reply somewhere else, pass both the appropriate selector and the delivery overrides.

Operational notes

Always include a selector; the CLI will error if you do not specify at least one of --to, --session-id, or --agent.

Use --thinking to set the thinking level (for example, --thinking medium) and --verbose to control progress output (for example, --verbose on). Use --json for automation-friendly output.

If the command triggers regeneration of models.json, SecretRef-managed provider credentials may be persisted as non-secret markers rather than plaintext secrets; audit any credentials after such changes.

Canonical examples (runnable bash)

The following are copy-paste-ready examples demonstrating Gateway vs local runs, selectors, delivery, and JSON output:

openclaw agent --to +15555550123 --message "status update" --deliver

openclaw agent --agent ops --message "Summarize logs"

openclaw agent --session-id 1234 --message "Summarize inbox" --thinking medium

openclaw agent --to +15555550123 --message "Trace logs" --verbose on --json

openclaw agent --agent ops --message "Generate report" --deliver --reply-channel slack --reply-to "#reports"

openclaw agent --agent ops --message "Run locally" --local

Keep these patterns in mind: selectors choose session routing, --deliver controls outward delivery, and --local runs embedded but with plugin support available. Subsequent chapters show how sessions are stored and how compaction changes transcript shape.
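Because a missing selector is a hard error, scripts that assemble openclaw agent invocations dynamically can check for one up front. The sketch below is a hypothetical wrapper: oc_agent and the OC_DRY_RUN variable are illustrative names, not part of OpenClaw. It only scans for the three documented selector flags and, in dry-run mode, prints the command instead of running it.

```bash
#!/usr/bin/env bash
# Hypothetical wrapper around `openclaw agent` (not part of OpenClaw):
# refuse to run unless a routing selector is present.
oc_agent() {
  local arg has_selector=0
  # Simple scan: any argument equal to a selector flag counts.
  for arg in "$@"; do
    case "$arg" in
      --to|--session-id|--agent) has_selector=1 ;;
    esac
  done
  if [ "$has_selector" -eq 0 ]; then
    echo "oc_agent: pass at least one of --to, --session-id, --agent" >&2
    return 2
  fi
  if [ "${OC_DRY_RUN:-0}" = 1 ]; then
    # Print the command instead of running it (arguments joined by spaces).
    echo "openclaw agent $*"
  else
    openclaw agent "$@"
  fi
}
```

For example, OC_DRY_RUN=1 oc_agent --agent ops --message "Summarize logs" prints the command without contacting the Gateway, while oc_agent --message "hi" fails before anything is sent.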

Managing Agents and Bindings

An agent is the Gateway’s isolated persona: a workspace-backed runtime with its own auth, skills, and routing. The agents CLI group manages those personas and their inbound routing (bindings), identities, and lifecycle. Treat "bindings" as the pinning mechanism that directs inbound channel traffic to a particular agent.

openclaw agents with no subcommand behaves like openclaw agents list; it prints the configured agents and basic metadata.

Routing binding rules and behavior

A binding can be channel-only (e.g., telegram) or channel:accountId scoped (e.g., telegram:ops). Channel-only bindings match the channel’s default account. An explicit channel:accountId match is more specific than a channel-only binding.

accountId:"*" is a channel-wide fallback; it is less specific than an explicit account-scoped binding.

If you bind a channel-only entry first and later bind the same channel with an explicit accountId, OpenClaw upgrades the existing binding in place rather than creating a duplicate entry.

Omitting accountId on --bind lets OpenClaw resolve the accountId from channel defaults and plugin setup hooks when available.
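A binding spec is either channel or channel:accountId. The helper below is a hypothetical sketch (describe_binding is not an OpenClaw command) that splits a spec the way the rules above describe, which can help when validating binding lists in scripts before passing them to --bind. Writing the wildcard as telegram:* is an assumption that mirrors accountId:"*", and the sketch does not reproduce OpenClaw's actual matcher or default-account resolution.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not an OpenClaw command): classify a binding spec of
# the form channel[:accountId] the way the rules above describe it.
describe_binding() {
  local spec="$1" channel account
  channel="${spec%%:*}"                  # text before the first ':'
  if [ -z "$channel" ]; then
    echo "describe_binding: missing channel in '$spec'" >&2
    return 2
  fi
  if [ "$channel" = "$spec" ]; then      # no ':' at all
    echo "channel=$channel scope=channel-only"
    return 0
  fi
  account="${spec#*:}"                   # text after the first ':'
  case "$account" in
    '')  echo "describe_binding: empty accountId in '$spec'" >&2; return 2 ;;
    '*') echo "channel=$channel scope=channel-wide-fallback" ;;
    *)   echo "channel=$channel scope=account account=$account" ;;
  esac
}
```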

Creating and listing agents

Creating an agent interactively runs the CLI wizard. Supplying explicit flags moves the command into non-interactive mode: you must provide the agent id/name and --workspace.

The agent id main is reserved. You cannot create or delete an agent with id main.

Identity and avatar behavior

set-identity writes into agents.list[].identity in configuration. Avatar paths are resolved relative to the workspace root when given as a relative path; they may also be URLs or data URIs.

Deletion semantics and safety

Deleting an agent moves its workspace, state, and session transcript directories to Trash by default. This provides a safety net. Without --force, deletion requires interactive confirmation. Use --force to bypass interactive confirmation and to perform a hard delete if supported by your platform. Remember main cannot be deleted.

Canonical command examples (recipes)

The following examples show common workflows: listing, adding, binding/unbinding, setting identity, and deleting.

openclaw agents list

openclaw agents list --bindings

openclaw agents add work --workspace ~/.openclaw/workspace-work

openclaw agents add ops --workspace ~/.openclaw/workspace-ops --bind telegram:ops --non-interactive

openclaw agents bindings

openclaw agents bind --agent work --bind telegram:ops

openclaw agents unbind --agent work --bind telegram:ops

openclaw agents set-identity --workspace ~/.openclaw/workspace --from-identity

openclaw agents set-identity --agent main --avatar avatars/openclaw.png

openclaw agents delete work

Bindings inspection and JSON output

openclaw agents bindings

openclaw agents bindings --agent work

openclaw agents bindings --json

Multiple binds in one command

openclaw agents bind --agent work --bind telegram:ops --bind discord:guild-a

Channel-only → account-scoped upgrade (in-place)

# initial channel-only binding

openclaw agents bind --agent work --bind telegram

# later upgrade to account-scoped binding

openclaw agents bind --agent work --bind telegram:ops

Removing bindings

openclaw agents unbind --agent work --bind telegram:ops

openclaw agents unbind --agent work --all

Set identity from workspace file

openclaw agents set-identity --workspace ~/.openclaw/workspace --from-identity

Explicit identity fields

openclaw agents set-identity --agent main --name "OpenClaw" --emoji "🦞" --avatar avatars/openclaw.png

Example JSON shape for identity (openclaw.json configuration)

{
  "agents": {
    "list": [
      {
        "id": "main",
        "identity": {
          "name": "OpenClaw",
          "theme": "space lobster",
          "emoji": "🦞",
          "avatar": "avatars/openclaw.png"
        }
      }
    ]
  }
}

Operational checklist

Use --workspace when adding agents non-interactively.

Prefer explicit account-scoped bindings for channel routing clarity.

Verify bindings with openclaw agents bindings --json before relying on routing.

Back up workspaces before destructive deletions; default Trash semantics can save recovery time.

Hooks: Discovery, Eligibility, Enabling, and Installing Hook Packs

Hooks let you attach small, event-driven automations to Gateway events (startup, commands, cron-like triggers). Discovering, inspecting, and toggling hooks is a common operational task: you need to confirm a hook is present, verify its requirements are satisfied, opt a workspace into using it, and—when a hook comes from a plugin—manage it via the owning plugin rather than the hooks CLI.

Discovery and listing

The CLI discovers hooks in multiple locations: workspace, managed (plugin) directories, extraDirs, and bundled hooks shipped with OpenClaw. Gateway startup will not wire up internal hook handlers until at least one internal hook is configured to run. Use the simple list command to see what the CLI finds:

openclaw hooks list

Typical human-readable output looks like this (illustrative):

Hooks (4/4 ready)

Ready:

🚀 boot-md ✓ - Run BOOT.md on gateway startup

📎 bootstrap-extra-files ✓ - Inject extra workspace bootstrap files during agent bootstrap

📝 command-logger ✓ - Log all command events to a centralized audit file

💾 session-memory ✓ - Save session context to memory when /new or /reset command is issued

Filtering, JSON output, and verbosity

--eligible shows only hooks whose requirements are met.

--json produces structured output suitable for automation.

--verbose prints missing requirements for ineligible hooks so you can resolve them.

Examples:

openclaw hooks list --verbose

openclaw hooks list --json

Inspect hook details

To view a hook’s metadata, handler path, events, and requirement checks, call info:

openclaw hooks info <name>

Example:

openclaw hooks info session-memory

Illustrative info output:

💾 session-memory ✓ Ready

Save session context to memory when /new or /reset command is issued

Details:

Source: openclaw-bundled

Path: /path/to/openclaw/hooks/bundled/session-memory/HOOK.md

Handler: /path/to/openclaw/hooks/bundled/session-memory/handler.ts

Homepage: https://docs.openclaw.ai/automation/hooks#session-memory

Events: command:new, command:reset

Requirements:

Config: ✓ workspace.dir

Eligibility check

Run a quick summary of readiness:

openclaw hooks check

Sample summary:

Hooks Status

Total hooks: 4

Ready: 4

Not ready: 0

Enable and disable workspace hooks

Enabling a workspace (or bundled) hook writes the opt-in into your config at hooks.internal.entries.<name>.enabled = true and persists it to disk. The Gateway will only load workspace hook handlers after this opt-in.
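For reference, after openclaw hooks enable session-memory the relevant part of the configuration should look roughly like this. The nesting is inferred from the hooks.internal.entries.<name>.enabled key path above, other keys in the file are omitted, and it is an illustration of where the flag lives rather than a full schema.

```json
{
  "hooks": {
    "internal": {
      "entries": {
        "session-memory": {
          "enabled": true
        }
      }
    }
  }
}
```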

openclaw hooks enable session-memory

Success confirmation:

✓ Enabled hook: 💾 session-memory

To disable:

openclaw hooks disable <name>

# e.g.

openclaw hooks disable command-logger

Confirmation:

⏸ Disabled hook: 📝 command-logger

Important: restart required

After enabling or disabling hooks, restart the Gateway so the new hook state is loaded. On macOS this means restarting the menu-bar app; in other environments, restart the gateway process or system service.

Plugin-managed hooks

Hooks owned by plugins are shown with a plugin:<id> source in openclaw hooks list. These cannot be toggled with openclaw hooks enable/disable; enable or disable the owning plugin with the plugins commands instead.

Installing hook packs (recommended)

Hook packs should be installed via the plugin installer. openclaw plugins install is the canonical installer; openclaw hooks install exists only as a compatibility alias that forwards to plugins install and prints a warning.

The plugin installer accepts registry-only NPM specs (git/URL/file specs and semver ranges are rejected). Installs run dependency steps with --ignore-scripts for safety; use --pin to pin versions when needed.
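Because the installer rejects git, URL, and file specs as well as semver ranges, a CI job can catch bad specs before calling openclaw plugins install. The function below is a hypothetical pre-flight lint (check_plugin_spec is not part of OpenClaw); its patterns approximate the documented rules and are an assumption, so the installer remains the authority. Home-relative paths are expected to be expanded by the calling shell.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight lint (not part of OpenClaw) approximating the
# documented install rules. Returns 0 for local paths and registry specs with
# no version or an exact version; returns 1 for git/URL/file specs and ranges.
check_plugin_spec() {
  local spec="$1" rest version
  case "$spec" in
    '') return 1 ;;
    ./*|../*|/*) return 0 ;;                        # local directory or archive
    git+*|git:*|github:*|file:*|*://*) return 1 ;;  # non-registry specs
  esac
  rest="${spec#@}"                 # drop a scope's leading '@', if present
  case "$rest" in
    *@*) version="${rest##*@}" ;;  # text after the last '@'
    *)   return 0 ;;               # bare registry name, no version given
  esac
  case "$version" in
    ''|*'^'*|*'~'*|*'<'*|*'>'*|*'*'*|*'|'*|*' '*) return 1 ;;  # range syntax
    x|X|*.x|*.X|*.x.*|*.X.*) return 1 ;;                        # x-ranges
  esac
  return 0
}
```

In a pipeline this reads as check_plugin_spec "$spec" && openclaw plugins install "$spec", so a rejected spec never reaches the installer.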

Examples:

openclaw plugins install <package> # ClawHub first, then npm

openclaw plugins install <package> --pin # pin version

openclaw plugins install <path> # local path

# Local directory

openclaw plugins install ./my-hook-pack

# Local archive

openclaw plugins install ./my-hook-pack.zip

# NPM package

openclaw plugins install @openclaw/my-hook-pack

# Link a local directory without copying

openclaw plugins install -l ./my-hook-pack

Updating plugins

openclaw plugins update <id>

openclaw plugins update --all

Inspecting command logs

Command-related hooks (e.g., command-logger) write their events to ~/.openclaw/logs/commands.log. Use standard tools to inspect or filter that file:

# Recent commands

tail -n 20 ~/.openclaw/logs/commands.log

# Pretty-print

jq . ~/.openclaw/logs/commands.log

# Filter by action

grep '"action":"new"' ~/.openclaw/logs/commands.log | jq .

Safety notes

Plugin-managed hooks must be controlled via the plugin lifecycle; attempting to toggle them with the hooks CLI will fail.

Installers run with --ignore-scripts to limit arbitrary lifecycle scripts; you cannot install via Git/URL/file specs or open semver ranges—use registry or local archives/directories and pin when you require reproducible installs.
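If jq is not installed, the command log can still be summarized with standard text tools. This sketch assumes each line of commands.log is a JSON object containing a compact "action":"<name>" field, as the grep filter above implies; count_command_actions is a hypothetical helper, not an OpenClaw command.

```bash
#!/usr/bin/env bash
# Hypothetical helper: count command events per action in commands.log.
# Assumes the compact "action":"<name>" form used by the grep example above.
count_command_actions() {
  local log="${1:-$HOME/.openclaw/logs/commands.log}"
  grep -o '"action":"[^"]*"' "$log" \
    | sed 's/^"action":"\(.*\)"$/\1/' \
    | sort \
    | uniq -c \
    | awk '{ print $2, $1 }'   # "<action> <count>", sorted by action name
}
```

Called with no argument it reads the default log path; pass a file to summarize a rotated or copied log instead.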

The Infer CLI: Capability-Oriented Provider Workflows

OpenClaw exposes a single, capability-oriented CLI for provider-backed tasks: openclaw infer. Treat it as the canonical, headless surface for model runs, image/video generation and description, audio transcription, TTS, web search/fetch, and embedding creation. The infer CLI groups operations by capability (model, image, audio, tts, video, web, embedding) rather than by raw RPC names or provider tool IDs. That makes scripting and skill routing predictable: map user intents to an infer subcommand and pass provider/model overrides only when necessary.

Top-level command vocabulary

The following tree lists the infer subcommands grouped by capability family. Use it as a quick reference when authoring scripts or agent skills that call openclaw infer.

openclaw infer
  list
  inspect
  model
    run
    list
    inspect
    providers
    auth login
    auth logout
    auth status
  image
    generate
    edit
    describe
    describe-many
    providers
  audio
    transcribe
    providers
  tts
    convert
    voices
    providers
    status
    enable
    disable
    set-provider
  video
    generate
    describe
    providers
  web
    search
    fetch
    providers
  embedding
    create
    providers

Why use --json

Prefer --json whenever a command's output will be consumed by another command, script, or an agent skill. That flag produces a stable, machine-readable response shape that commonly includes ok, capability, transport, provider, model, attempts, and outputs. Example JSON output:

{
  "ok": true,
  "capability": "image.generate",
  "transport": "local",
  "provider": "openai",
  "model": "gpt-image-1",
  "attempts": [],
  "outputs": []
}

Transport and targeting rules

Stateless inference commands (single-run model calls, image/video generate, embeddings) default to the local transport. Use --transport gateway if you need Gateway routing or logging.

Commands that interact with Gateway-managed state default to the gateway transport.

When you must target a particular backend, use --provider or --model with the provider/model form (for example openai/whisper-1). Some capabilities require explicit provider qualification when specifying a model: image describe, audio transcribe, and video describe are examples.

Concrete examples

Model runs and inspection:

openclaw infer model run --prompt "Reply with exactly: smoke-ok" --json

openclaw infer model run --prompt "Summarize this changelog entry" --provider openai --json

openclaw infer model providers --json

openclaw infer model inspect --name gpt-5.4 --json

Image generation and description:

openclaw infer image generate --prompt "friendly lobster illustration" --json

openclaw infer image generate --prompt "cinematic product photo of headphones" --json

openclaw infer image describe --file ./photo.jpg --json

openclaw infer image describe --file ./ui-screenshot.png --model openai/gpt-4.1-mini --json

Audio transcription:

openclaw infer audio transcribe --file ./memo.m4a --json

openclaw infer audio transcribe --file ./team-sync.m4a --language en --prompt "Focus on names and action items" --json

openclaw infer audio transcribe --file ./memo.m4a --model openai/whisper-1 --json

TTS:

openclaw infer tts convert --text "hello from openclaw" --output ./hello.mp3 --json

openclaw infer tts convert --text "Your build is complete" --output ./build-complete.mp3 --json

openclaw infer tts providers --json

openclaw infer tts status --json

Video and web:

openclaw infer video generate --prompt "cinematic sunset over the ocean" --json

openclaw infer video describe --file ./clip.mp4 --json

openclaw infer web search --query "OpenClaw docs" --json

openclaw infer web fetch --url https://docs.openclaw.ai/cli/infer --json

Embeddings:

openclaw infer embedding create --text "friendly lobster" --json

openclaw infer embedding create --text "customer support ticket: delayed shipment" --model openai/text-embedding-3-large --json

openclaw infer embedding providers --json

When to qualify model names

Always use the provider/model form when the model requires an explicit provider. This avoids ambiguous failures.

# Bad

openclaw infer audio transcribe --file ./memo.m4a --model whisper-1 --json

# Good

openclaw infer audio transcribe --file ./memo.m4a --model openai/whisper-1 --json

Small usability warning

Some describe/transcribe operations will fail or select an unexpected provider if you omit the provider prefix. If you depend on a specific model behavior (transcription quality, image-describe format), set it explicitly with --model openai/whisper-1 or --provider openai.
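The qualification rule carries over directly to batch jobs. The sketch below transcribes every .m4a file in a directory with an explicit provider/model and writes one JSON result next to each input. transcribe_dir and the OPENCLAW_BIN override (used to substitute a stub such as echo when testing) are hypothetical; the openclaw infer audio transcribe flags are the ones shown above.

```bash
#!/usr/bin/env bash
# Hypothetical batch helper around `openclaw infer audio transcribe`.
# Usage: transcribe_dir DIR [PROVIDER/MODEL]
transcribe_dir() {
  local dir="$1" model="${2:-openai/whisper-1}" bin="${OPENCLAW_BIN:-openclaw}" f
  case "$model" in
    ?*/?*) ;;   # provider/model form, as recommended above
    *) echo "transcribe_dir: use provider/model, e.g. openai/whisper-1" >&2; return 2 ;;
  esac
  for f in "$dir"/*.m4a; do
    [ -e "$f" ] || continue   # no matches: the glob stays literal, so skip it
    "$bin" infer audio transcribe --file "$f" --model "$model" --json \
      > "${f%.m4a}.json" || echo "transcribe_dir: failed on $f" >&2
  done
}
```

Rejecting an unqualified model before the loop means a typo fails once, up front, instead of once per file.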

Routing infer from an agent skill

When authoring a skill that routes to infer, map intents to the relevant subcommands (model run, image generate, audio transcribe, tts convert, web search, embedding create) and prefer --json for downstream parsing. A minimal prompt to bootstrap such a skill:

Read https://docs.openclaw.ai/cli/infer, then create a skill that routes my common workflows to `openclaw infer`.

Focus on model runs, image generation, video generation, audio transcription, TTS, web search, and embeddings.

Summary

Use openclaw infer as the single, consistent entrypoint for provider-backed capabilities. Prefer the capability families it defines, use --json for automation, and qualify models with provider/model when precision matters.

Memory: Status, Indexing, Promotion, and Dreaming

Memory in OpenClaw is provided by the active memory plugin. By default that plugin is memory-core. If you prefer to disable semantic memory entirely, set plugins.slots.memory = "none" in your configuration; otherwise memory commands act against the active plugin. Many memory operations can be targeted to a single agent with --agent <id>; without --agent they run for each configured agent or fall back to a default agent.

Run these commands to inspect health, reindex, search, preview promotions, apply promotions, and preview REM-stage reflections:

openclaw memory status

openclaw memory status --deep

openclaw memory status --fix

openclaw memory index --force

openclaw memory search "meeting notes"

openclaw memory search --query "deployment" --max-results 20

openclaw memory promote --limit 10 --min-score 0.75

openclaw memory promote --apply

openclaw memory promote --json --min-recall-count 0 --min-unique-queries 0

openclaw memory promote-explain "router vlan"

openclaw memory promote-explain "router vlan" --json

openclaw memory rem-harness

openclaw memory rem-harness --json

openclaw memory status --json

openclaw memory status --deep --index

openclaw memory status --deep --index --verbose

openclaw memory status --agent main

openclaw memory index --agent main --verbose

Key behaviors and rules

Plugin ownership: openclaw memory commands call the active memory plugin. The default is memory-core. Disable by setting plugins.slots.memory = "none".

Status probes: memory status reports plugin health. Use --deep to probe vector store and embedding availability. --index implies --deep and triggers reindexing when the store is marked dirty.

Reindexing: memory index re-creates vector indexes. Use --force to bypass store-dirty checks and rebuild from source documents.

Search requirement: memory search requires either a positional query string or --query. If neither is provided, the command exits with an error.

Agent scoping: add --agent <id> to run operations for a single agent; otherwise commands iterate agents or run against the default.

Promotion and REM

Memory promote ranks short-term promotion candidates using multiple weighted signals: frequency, relevance, query diversity, recency, consolidation score, and conceptual richness. By default promote runs in preview mode—no writes—so you can inspect candidates before changing state. Apply promotions by adding --apply. Use --json for machine-friendly output in automation.

To understand why a candidate is promoted, use memory promote-explain <selector> which returns a score breakdown and reasoning. For deeper consolidation previews (the REM stage), run memory rem-harness; it simulates REM reflections without committing changes.
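Because promote previews by default, a review gate is easy to script: save the --json preview, let a person inspect it, and only then re-run with --apply. The function below is a hypothetical sketch (promote_with_review and OPENCLAW_BIN are illustrative names); it uses only the promote flags documented in this section and passes any extra flags to both runs.

```bash
#!/usr/bin/env bash
# Hypothetical review gate for `openclaw memory promote` (not part of OpenClaw).
# Usage: promote_with_review [PREVIEW_FILE] [extra promote flags...]
promote_with_review() {
  local out="${1:-promote-preview.json}" bin="${OPENCLAW_BIN:-openclaw}" answer=""
  if [ "$#" -gt 0 ]; then shift; fi
  # 1. Preview: without --apply, promote only ranks candidates.
  "$bin" memory promote --json "$@" > "$out" || return
  echo "Preview saved to $out; review it before applying." >&2
  # 2. Confirm on stdin (bash shows the prompt only when stdin is a terminal).
  read -r -p "Apply promotions? [y/N] " answer || true
  case "$answer" in
    y|Y|yes) "$bin" memory promote --apply "$@" ;;
    *) echo "Skipped; no promotions applied." >&2 ;;
  esac
}
```

Passing the same flags (for example --limit 10 --min-score 0.75) to both runs keeps the thresholds consistent, although candidates can still shift if memory changes between the preview and the apply.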

Dreaming and background consolidation

Dreaming is an optional background consolidation pipeline consisting of light, deep, and REM phases. Enable it in the memory-core plugin config; when enabled, memory-core auto-manages a background cron for consolidation. Beware: enabling dreaming causes periodic background work (CPU, embedding calls, storage writes) and may increase provider usage and disk I/O.

Enable dreaming with this configuration (strict JSON for your config file):

{
  "plugins": {
    "entries": {
      "memory-core": {
        "config": {
          "dreaming": {
            "enabled": true
          }
        }
      }
    }
  }
}

Quick command templates

openclaw memory promote [--apply] [--limit <n>] [--include-promoted]

openclaw memory promote-explain <selector> [--agent <id>] [--include-promoted] [--json]

openclaw memory rem-harness [--agent <id>] [--include-promoted] [--json]

Warning: run promote --apply and enable dreaming only after reviewing previews and estimating cost. Background consolidation can consume embedding API credits and produce write-heavy workloads on large stores.

Messaging Commands: send, poll, react, and Provider-specific Targets

The Gateway CLI can perform full message-level actions across channels: sending text and media, creating polls, adding reactions, editing or deleting messages, and pin/unpin operations. Use the message subcommands to perform these actions from scripts or the command line; the general invocation pattern is:

openclaw message <subcommand> [flags]

Channel selection and target formats

If your installation has more than one configured channel, you must pass --channel; when only one channel exists, it becomes the default. Targets are provider-specific strings: WhatsApp uses E.164 numbers or group JIDs, Discord accepts channel:<id> or user:<id>, Google Chat uses spaces/<spaceId> or users/<userId>, and Slack accepts channel:<id> or user:<id>. OpenClaw also caches a directory of names and will attempt a live lookup on a cache miss for providers that support it.
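Because target strings differ per provider, scripts that fan a message out across channels often normalize IDs first. The helper below is a hypothetical sketch covering only the Discord, Slack, and Google Chat formats listed above; msg_target and its KIND argument are illustrative, and anything else is rejected rather than guessed.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not an OpenClaw command): build a --target value
# for the formats listed above. Usage: msg_target CHANNEL KIND ID
msg_target() {
  local channel="$1" kind="$2" id="$3"
  if [ -z "$id" ]; then
    echo "msg_target: missing id" >&2
    return 2
  fi
  case "$channel:$kind" in
    discord:channel|slack:channel) echo "channel:$id" ;;
    discord:user|slack:user)       echo "user:$id" ;;
    googlechat:space)              echo "spaces/$id" ;;
    googlechat:user)               echo "users/$id" ;;
    *)
      echo "msg_target: no known format for $channel/$kind; pass an explicit target" >&2
      return 2
      ;;
  esac
}
```

A call such as openclaw message send --channel googlechat --target "$(msg_target googlechat space AAA)" --message "hi" then receives the spaces/ form without hand-built strings.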

SecretRef resolution and failure semantics

Before running the action, openclaw message resolves required channel/account SecretRefs. Resolution scope depends on flags: --channel makes channel-scoped SecretRefs apply; --account targets account-scoped credentials. If the selected channel or account SecretRef is unresolved, the command fails closed and the action is not attempted. Unresolved SecretRefs for unrelated channels do not block a targeted action.

Send and rich payloads

Most send payloads accept provider-native rich fields. Some examples:

openclaw message send --channel discord \
  --target channel:123 --message "hi" --reply-to 456

To pass interactive components or blocks, you can supply provider JSON. Discord uses --components:

openclaw message send --channel discord \
  --target channel:123 --message "Choose:" \
  --components '{"text":"Choose a path","blocks":[{"type":"actions","buttons":[{"label":"Approve","style":"success"},{"label":"Decline","style":"danger"}]}]}'

Google Chat uses a separate --interactive payload:

openclaw message send --channel googlechat --target spaces/AAA... \
  --message "Choose:" \
  --interactive '{"text":"Choose a path","blocks":[{"type":"actions","buttons":[{"label":"Approve"},{"label":"Decline"}]}]}'

Provider-specific flags and media

Many providers expose unique flags. Telegram supports --buttons, --force-document, and --thread-id; Teams accepts --card with Adaptive Card JSON; Discord and Slack use --components. Example Adaptive Card for Teams:

openclaw message send --channel msteams \
  --target conversation:19:abc@thread.tacv2 \
  --card '{"type":"AdaptiveCard","version":"1.5","body":[{"type":"TextBlock","text":"Status update"}]}'

Send media (Telegram example with --force-document):

openclaw message send --channel telegram --target @mychat \
  --media ./diagram.png --force-document

Polls

Polls require --target, --poll-question, and one or more --poll-option. Providers expose extra controls: Discord supports multi-select and --poll-duration-hours; Telegram supports --poll-duration-seconds and --silent. Examples:

openclaw message poll --channel discord \
  --target channel:123 \
  --poll-question "Snack?" \
  --poll-option Pizza --poll-option Sushi \
  --poll-multi --poll-duration-hours 48

openclaw message poll --channel telegram \
  --target @mychat \
  --poll-question "Lunch?" \
  --poll-option Pizza --poll-option Sushi \
  --poll-duration-seconds 120 --silent

Reactions, pins and edits

Reaction actions typically need --message-id and --target. Some providers require extra attribution fields (Signal uses --target-author-uuid; WhatsApp may need --participant or --from-me). Slack example:

openclaw message react --channel slack \
  --target C123 --message-id 456 --emoji "✅"

Signal example with author UUID:

openclaw message react --channel signal \
  --target signal:group:abc123 --message-id 1737630212345 \
  --emoji "✅" --target-author-uuid 123e4567-e89b-12d3-a456-426614174000

Operational notes and gotchas

Sending components or cards to a provider that doesn't support them will be rejected or ignored; check provider capability before automation.

Live lookups may fail for some providers — if name resolution fails, prefer explicit target IDs.

Because the CLI resolves SecretRefs before actions, ensure gateway secrets and auth-profiles are present when scripting; missing credentials cause a closed failure rather than a silent fallback.

These commands are intended for automation-friendly usage; combine them with --json output when scripting or capturing responses.
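When poll options come from data rather than being typed by hand, building the argument list in a bash array keeps each option a single argument even if it contains spaces. send_poll and OPENCLAW_BIN are hypothetical names; the flags are the documented --channel, --target, --poll-question, and repeated --poll-option, and provider extras such as --poll-multi are left out to keep the sketch short.

```bash
#!/usr/bin/env bash
# Hypothetical wrapper: send a poll with one --poll-option per remaining argument.
# Usage: send_poll CHANNEL TARGET QUESTION OPTION [OPTION...]
send_poll() {
  if [ "$#" -lt 4 ]; then
    echo "send_poll: need channel, target, question, and at least one option" >&2
    return 2
  fi
  local channel="$1" target="$2" question="$3" opt
  shift 3
  local -a args=(message poll --channel "$channel" --target "$target" --poll-question "$question")
  for opt in "$@"; do
    args+=(--poll-option "$opt")   # each option stays one argument, spaces included
  done
  "${OPENCLAW_BIN:-openclaw}" "${args[@]}"
}
```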

Models and Provider Auth: Discovery, Status, and Probing

OpenClaw resolves which provider and model an agent will use before sending any inference requests. Use the models commands to inspect the resolved default, fallbacks, and the authentication state that OpenClaw will attempt when a run is executed.

The quick command set for discovery, inspection, and configuration:

openclaw models status

openclaw models list

openclaw models set <model-or-alias>

openclaw models scan

openclaw models status shows the current resolved default model, the fallback chain, and an auth overview. When providers expose usage windows or quota snapshots, status will surface that information.

openclaw models list enumerates discovered models across configured providers (local caches plus any scanned results).

openclaw models scan performs provider discovery; pair this with status to verify what will be chosen at runtime.

Be cautious with live probes. The status and scan commands accept a --probe mode which issues real requests to providers to validate credentials and availability. These are real network calls that can consume quotas or trigger rate limits; do not run --probe in bulk or from automated loops without rate‑limit controls.

Model reference parsing rules

Model refs are parsed by splitting on the first '/'. The prefix before the first slash is treated as the provider identifier and the remainder as the model id.

If a model name itself contains additional slashes (for example, OpenRouter-style refs), include the provider prefix to avoid parsing ambiguity; otherwise only the first slash is considered.
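The first-slash rule is the same split that shell parameter expansion produces with %%/* and #*/. The function below is a hypothetical illustration of the parsing rule, not OpenClaw's code, and the OpenRouter-style ref used in testing (openrouter/vendor/some-model) is made up.

```bash
#!/usr/bin/env bash
# Illustration of the documented rule: split a model ref on the FIRST '/'.
split_model_ref() {
  local ref="$1"
  case "$ref" in
    */*)
      printf 'provider=%s\n' "${ref%%/*}"   # everything before the first '/'
      printf 'model=%s\n' "${ref#*/}"       # everything after it, slashes kept
      ;;
    *)
      printf 'provider=\n'                  # no prefix: provider must be resolved
      printf 'model=%s\n' "$ref"
      ;;
  esac
}
```

Note that a multi-slash id without a provider prefix, such as vendor/some-model, would be split into provider vendor, which is exactly the ambiguity the rule above warns about.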

Omitting a provider when setting a model

When you run openclaw models set with a value that omits the provider, OpenClaw resolves it in this order:

Treat the value as an alias (agents.defaults or models.aliases). If an alias matches, that mapped provider/model is used.

If not an alias, check whether the string uniquely matches a model across all configured providers. If unique, select that provider.

If still ambiguous or no match, fall back to the configured default provider. This final fallback path emits a deprecation warning; to avoid ambiguity, always include the provider prefix when setting models programmatically.
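The three-step lookup can be modelled in a few lines of bash. This is a simplified, hypothetical sketch of the documented order, not OpenClaw's implementation: ALIASES (an associative array of alias to provider/model) and KNOWN_MODELS (an array of provider/model refs) stand in for your configured aliases and discovered models, and the model names in the test are placeholders. It requires bash 4 or later for associative arrays.

```bash
#!/usr/bin/env bash
# Simplified model of the documented resolution order for a value without
# a provider prefix. Callers populate these hypothetical globals:
declare -A ALIASES=()     # alias -> provider/model
KNOWN_MODELS=()           # provider/model refs across configured providers

resolve_model() {
  local value="$1" default_provider="$2" ref
  local -a matches=()
  if [ -z "$value" ]; then
    echo "resolve_model: empty value" >&2
    return 2
  fi
  # 1. An alias match wins outright.
  if [ -n "${ALIASES[$value]+set}" ]; then
    printf '%s\n' "${ALIASES[$value]}"
    return 0
  fi
  # 2. A model id that exists under exactly one provider.
  for ref in "${KNOWN_MODELS[@]}"; do
    if [ "${ref#*/}" = "$value" ]; then
      matches+=("$ref")
    fi
  done
  if [ "${#matches[@]}" -eq 1 ]; then
    printf '%s\n' "${matches[0]}"
    return 0
  fi
  # 3. Ambiguous or unknown: default provider, with a deprecation warning.
  echo "warning: '$value' resolved via default provider (deprecated)" >&2
  printf '%s/%s\n' "$default_provider" "$value"
}
```

Only step 3 prints a warning, which matches the advice to pass provider/model refs in scripts so the fallback path is never taken.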

Auth flows and token setup

openclaw models aliases list

openclaw models fallbacks list

openclaw models auth add

openclaw models auth login --provider <id>

openclaw models auth setup-token --provider <id>

openclaw models auth paste-token

openclaw models auth add is an interactive helper that can start provider OAuth flows or guide manual token entry.

setup-token and login require an interactive TTY; setup-token in particular will prompt you to paste a long-lived token.

Example: run an interactive provider login and make that auth profile the default:

openclaw models auth login --provider openai-codex --set-default

Recommended practice: prefer fully qualified provider/model refs in scripts and CI. Use --probe deliberately and sparingly to verify credential health before production runs.

Sessions: Listing, Scoping, and Cleanup

Sessions are stored as per-agent session stores on disk (sessions.json index plus per-session JSONL transcripts). Before running any destructive maintenance, locate the stores you intend to operate on and preview the changes: use the sessions listing and the cleanup dry-run modes so you can script safely.

What scope openclaw sessions uses

By default openclaw sessions looks at the configured default agent store (the agent store selected by your active workspace or gateway config).

Override the default store with --agent <agentId> to target one agent, --all-agents to aggregate every configured agent store the Gateway knows about, or --store <path> to point at an explicit sessions.json file.

When you run --all-agents, OpenClaw discovers configured agent stores and reports the sessions.json path for each regular store it finds. Discovery intentionally skips symlinks and out-of-root paths; those are not reported in the aggregated scan.

Listing examples

The following CLI examples show common listing and output modes. Use --json for machine-friendly output and --verbose to include more detail when debugging.

openclaw sessions

openclaw sessions --agent work

openclaw sessions --all-agents

openclaw sessions --active 120

openclaw sessions --verbose

openclaw sessions --json

Example JSON output

This is representative output from openclaw sessions --all-agents --json. Parse the top-level fields to automate tasks: path is the scoped store path (null when scanning multiple stores), stores lists the resolved sessions.json paths, allAgents indicates aggregated mode, count is the total number of sessions found, activeMinutes echoes any --active window, and sessions is an array of session metadata entries you can inspect or feed into other tooling.

{
  "path": null,
  "stores": [
    { "agentId": "main", "path": "/home/user/.openclaw/agents/main/sessions/sessions.json" },
    { "agentId": "work", "path": "/home/user/.openclaw/agents/work/sessions/sessions.json" }
  ],
  "allAgents": true,
  "count": 2,
  "activeMinutes": null,
  "sessions": [
    { "agentId": "main", "key": "agent:main:main", "model": "gpt-5" },
    { "agentId": "work", "key": "agent:work:main", "model": "claude-opus-4-6" }
  ]
}

Running cleanup safely

Session cleanup applies the session.maintenance policy from your config. Always preview with --dry-run first; that shows what would be pruned or capped without making changes. If the configured mode is "warn", you can force cleanup by passing --enforce. Protect currently active sessions by passing an active key or a short active window.

Key flags and behaviors

--dry-run: Preview pruning/capping; no writes. Combine with --json for automation.

--enforce: Apply cleanup even if session.maintenance.mode is "warn".

--fix-missing: Remove index entries whose transcript files are missing on disk — useful to repair stores with incomplete file operations; this removes entries even if they wouldn't otherwise age out.

--all-agents / --agent / --store: Scope the cleanup to all discovered agent stores, a single agent store, or an explicit sessions.json path.

--active-key or --active <minutes>: Protect a session by key or protect sessions active within the last N minutes (useful to avoid pruning live conversations).

Cleanup command examples

These commands illustrate preview and enforcement workflows. Prefer --dry-run --json in CI or automation to capture structured output for audits.

openclaw sessions cleanup --dry-run

openclaw sessions cleanup --agent work --dry-run

openclaw sessions cleanup --all-agents --dry-run

openclaw sessions cleanup --enforce

openclaw sessions cleanup --enforce --active-key "agent:main:telegram:direct:123"

openclaw sessions cleanup --json

Cleanup preview JSON

This is a sample preview response from openclaw sessions cleanup --all-agents --dry-run --json. Each store entry reports beforeCount/afterCount and the number pruned or capped, so scripts can summarize impact.

{
  "allAgents": true,
  "mode": "warn",
  "dryRun": true,
  "stores": [
    {
      "agentId": "main",
      "storePath": "/home/user/.openclaw/agents/main/sessions/sessions.json",
      "beforeCount": 120,
      "afterCount": 80,
      "pruned": 40,
      "capped": 0
    },
    {
      "agentId": "work",
      "storePath": "/home/user/.openclaw/agents/work/sessions/sessions.json",
      "beforeCount": 18,
      "afterCount": 18,
      "pruned": 0,
      "capped": 0
    }
  ]
}

Operational cautions

Back up session state before applying destructive cleanup operations. Sessions combine an index (sessions.json) with per-session JSONL transcripts, and losing them is often irreversible.

Use --dry-run --json for any automated retention job and review the JSON summary before running with --enforce.

When using --all-agents in multi-Gateway or shared storage setups, confirm the paths reported are the intended physical files (discovery skips symlinks/out-of-root paths to reduce accidental cross-store operations).
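The retention-job advice above can be sketched in Python. The script reads a --dry-run --json preview, totals its impact, and only then builds an --enforce command. The 50% threshold in should_enforce is a hypothetical policy, and the helper names are illustrative. Only the JSON fields and the flags come from this section; combining --all-agents, --enforce and --json in one run is assumed rather than verified.

```python
import json
from typing import List, Optional

# Copy of this section's preview sample; in a job, capture the stdout of
# `openclaw sessions cleanup --all-agents --dry-run --json` instead.
PREVIEW = """
{
  "allAgents": true, "mode": "warn", "dryRun": true,
  "stores": [
    {"agentId": "main", "storePath": "/home/user/.openclaw/agents/main/sessions/sessions.json",
     "beforeCount": 120, "afterCount": 80, "pruned": 40, "capped": 0},
    {"agentId": "work", "storePath": "/home/user/.openclaw/agents/work/sessions/sessions.json",
     "beforeCount": 18, "afterCount": 18, "pruned": 0, "capped": 0}
  ]
}
"""


def cleanup_impact(raw: str) -> dict:
    """Total the per-store counts from a --dry-run --json preview."""
    data = json.loads(raw)
    if data.get("dryRun") is not True:
        raise ValueError("expected a --dry-run preview, not a live cleanup result")
    stores = data.get("stores", [])
    return {
        "mode": data.get("mode"),
        "before": sum(s["beforeCount"] for s in stores),
        # before - after avoids double counting entries both pruned and capped
        "removed": sum(s["beforeCount"] - s["afterCount"] for s in stores),
        "pruned": sum(s["pruned"] for s in stores),
        "capped": sum(s["capped"] for s in stores),
    }


def should_enforce(impact: dict, max_fraction: float = 0.5) -> bool:
    """Hypothetical policy: enforce only if something would be removed and the
    removal stays within max_fraction of all indexed sessions."""
    return 0 < impact["removed"] <= max_fraction * impact["before"]


def enforce_argv(active_key: Optional[str] = None) -> List[str]:
    """Build the enforcing run from flags documented in this section."""
    argv = ["openclaw", "sessions", "cleanup", "--all-agents", "--enforce", "--json"]
    if active_key:
        argv += ["--active-key", active_key]  # protect a live conversation
    return argv
```

Review the impact summary (or log it for audit) before running the command that enforce_argv returns.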

System Helpers: Events, Heartbeats, and Presence (Gateway RPC)

System helpers let you send short-lived control signals to a running Gateway: enqueue ephemeral events, toggle or inspect heartbeat behavior, and query presence. These commands are RPCs to the Gateway — they do not modify persisted session transcripts or configuration and they require a reachable Gateway instance.

All system subcommands speak Gateway RPC and accept the same shared flags to target a local or remote Gateway:

--url: WebSocket or HTTP address of the Gateway (for example ws://127.0.0.1:18789).

--token: gateway token for authentication (useful for remote Gateways).

--timeout: RPC timeout in seconds for the request.

--expect-final: make the CLI wait for the Gateway to return a final response (useful for event acknowledgement).

Be explicit about the target Gateway. The examples below show both the default local invocation and one that names the Gateway explicitly with --url and --token.

Runnable CLI examples (enqueue an event, control heartbeats, view presence)

openclaw system event --text "Check for urgent follow-ups" --mode now

openclaw system event --text "Check for urgent follow-ups" --url ws://127.0.0.1:18789 --token "$OPENCLAW_GATEWAY_TOKEN"

openclaw system heartbeat enable

openclaw system heartbeat last

openclaw system presence

What these do and operational notes

system event: enqueues an ephemeral system event. Use --text to provide the event payload and --mode to control scheduling semantics (now in the examples). Events are transient — they are sent into the Gateway runtime and are not written to on-disk session transcripts. They do not survive Gateway restarts.

system heartbeat enable / disable (or pause): toggle heartbeat emission state. enable resumes or turns on heartbeat reporting; a disable/pause command suspends it. Use heartbeat last to inspect the most recent heartbeat timestamp/state reported by the Gateway.

system presence: lists presence entries known to the Gateway (nodes, clients, sessions with recent activity).

Requirements and warnings

A running, reachable Gateway is required. If the CLI cannot reach the Gateway, RPC calls will time out or fail; check with openclaw gateway status, or use --url/--token to point at the correct instance.

Do not rely on system events for durable state changes. If you need persistence, use configuration or agent-level APIs that update stored state.

When issuing commands remotely, keep tokens secret and prefer SSH/Tailscale tunnels or other secure transport for --url to avoid exposing the Gateway control plane.

Checklist before issuing system RPCs

Confirm the Gateway is running: openclaw gateway status or a local health probe.

If remote, confirm reachability and authentication: --url and --token set correctly.

Expect ephemeral behavior: events are runtime-only and cleared on restart.
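The checklist can be folded into a helper that builds system RPC command lines, as in this minimal Python sketch. It reads the token from OPENCLAW_GATEWAY_TOKEN (the variable used in the example above) and refuses to build a --url invocation without one. That refusal assumes the --url credential rule stated later for channel and device commands also applies to system RPCs. The helper name is hypothetical.

```python
import os
from typing import List, Optional


def system_event_argv(text: str, mode: str = "now", url: Optional[str] = None,
                      token_env: str = "OPENCLAW_GATEWAY_TOKEN") -> List[str]:
    """Build an `openclaw system event` command, naming the Gateway
    explicitly when a URL is given."""
    argv = ["openclaw", "system", "event", "--text", text, "--mode", mode]
    if url is not None:
        token = os.environ.get(token_env)
        if not token:
            # Assumed: with --url there is no config/env credential fallback,
            # so fail here instead of sending an unauthenticated RPC.
            raise RuntimeError(f"{token_env} must be set when passing --url")
        argv += ["--url", url, "--token", token]
    return argv
```

As with the shell example, a token passed on the command line can be visible in process listings on shared hosts.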

Practical Reference and Automation Notes

Automation-first CLI runs should emit stable, machine-readable output and avoid side effects that consume provider quota. Prefer --json when you plan to parse openclaw command output in scripts or pipelines — that flag is the contract for stable fields and simple success/failure checks.

The infer command returns a predictable JSON shape suitable as an automation contract. Treat the block below as strict JSON you can parse directly from CI, a webhook, or another agent:

{
  "ok": true,
  "capability": "image.generate",
  "transport": "local",
  "provider": "openai",
  "model": "gpt-image-1",
  "attempts": [],
  "outputs": []
}

Key operational rules to encode in automation:

Use --json for any output you consume. Scripts should fail fast if JSON parsing fails.

Stateless execution commands (for example infer) default to the local transport. Commands that manage Gateway state (agents list, sessions, hooks, gateway install) default to the gateway transport and may require Gateway access or a running daemon.

openclaw message resolves any channel SecretRefs before it executes. The scope of SecretRef resolution depends on flags:

--channel limits resolution to a channel-scoped SecretRef.

--account limits to an account-scoped SecretRef.

Be explicit in automation to avoid accidental resolution against an unexpected credential.

The --probe flag performs live authentication probes. These are real network requests: they may consume provider tokens, count against quotas or billing, and trigger provider-side auth rate limits. Treat probes as potentially costly and avoid running them in tight test loops.
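Failing fast on the infer contract can look like this minimal Python sketch. It parses captured stdout and checks the fields from the example above. Whether every field is always present is an assumption to verify against your OpenClaw version. The exception class and helper name are hypothetical.

```python
import json

# Field names taken from the infer example above.
REQUIRED_FIELDS = ("ok", "capability", "transport", "provider", "model", "attempts", "outputs")


class InferContractError(RuntimeError):
    """Raised when infer output is not the JSON shape a pipeline expects."""


def parse_infer_result(raw: str) -> dict:
    """Parse `infer ... --json` stdout and stop the pipeline on any deviation."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InferContractError(f"output is not JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise InferContractError("expected a JSON object")
    missing = [f for f in REQUIRED_FIELDS if f not in data]
    if missing:
        raise InferContractError(f"missing fields: {missing}")
    if data["ok"] is not True:
        raise InferContractError(f"{data['capability']} failed via {data['provider']}")
    return data
```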

Practical automation checklist

Always run destructive or cleanup commands with --dry-run first; combine with --json to programmatically inspect the planned changes.

When scripts interact with Gateway-managed items (hooks, agents, sessions), confirm the Gateway is reachable (openclaw gateway status) and back up ~/.openclaw state before mass deletes.

Interactive flows (provider auth-token interactive setup, some onboarding flows) require a TTY. Detect non-interactive CI environments and use pre-seeded SecretRefs/auth-profiles instead.

When changing models or auth, remember that models.set or models configuration can be ambiguous; prefer explicit auth-profile + provider/model references in automation.

Follow these patterns and your automation will be auditable, safe against accidental token consumption, and robust across local vs gateway transports.
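Detecting a non-interactive run can be as simple as the Python sketch below. The CI environment-variable check is a widespread convention among CI providers, not an OpenClaw setting. The helper name is hypothetical.

```python
import os
import sys
from typing import Mapping, Optional


def is_interactive(stream=None, env: Optional[Mapping[str, str]] = None) -> bool:
    """Return True only when an interactive prompt could plausibly be answered."""
    stream = sys.stdin if stream is None else stream
    env = os.environ if env is None else env
    # Many CI providers export CI=true; a convention, not an OpenClaw rule.
    if env.get("CI", "").strip().lower() in ("1", "true", "yes"):
        return False
    return stream is not None and stream.isatty()
```

When is_interactive() returns False, a script should skip interactive auth setup and rely on pre-seeded SecretRefs or auth profiles instead.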

Managing Channels, Devices, and Pairing via CLI

What this chapter covers and how to use the CLI

Most channel and device CLI commands follow the same operational pattern: they either read configuration and print a summary, or they perform a live probe/action against the running Gateway (and, through it, the provider). Understanding which mode a command uses, how auth is resolved, and who is permitted to do what will save time and prevent accidental exposure of secrets or destructive changes.

First, a small example showing how to read the configured gateway auth token from the CLI configuration (useful when scripting or debugging auth resolution):

openclaw config get gateway.auth.token

Live probing versus config-only summaries

Commands that accept --probe (for example openclaw channels status --probe) invoke per-account probeAccount routines and optional auditAccount checks. This produces realtime transport state and probe results from the Gateway and its adapters.

If the Gateway is unreachable the CLI falls back to printing a config-only summary. You will see account entries and declared settings but not live probe results. Use openclaw doctor --fix to repair mixed states where named accounts and legacy top-level single-account values coexist; doctor will suggest or apply safe rewrites so the config and accounts map are consistent.

Interactive vs non-interactive account flows

openclaw channels add supports both interactive and non-interactive modes. The interactive flow can bind the created account to agents during the add; the non-interactive path does not create or rewrite bindings automatically. Per-provider flags vary widely (token, private-key, app-token, webhook URL, signal-cli paths, Matrix homeserver fields, Nostr relays, etc.); consult openclaw channels add --help for provider-specific options.

When you add a non-default account for channels that historically used top-level single-account settings, OpenClaw will often promote those top-level values into channels.<name>.accounts.default. Doctor --fix helps sort out these migrations safely.

Auth precedence and CLI overrides

CLI flags such as --token and --password override configuration and environment secrets. When you pass --url, the CLI will not fall back to config or environment credentials; you must provide explicit credentials (--token or --password) or the command will error.

For remote QR/setup operations (--remote), OpenClaw requires gateway.remote.url, or gateway.tailscale.mode set to serve or funnel. If the active remote credentials are SecretRefs and no CLI override was given, the command attempts to resolve them from the active gateway snapshot and fails fast if the Gateway is unreachable.
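Scripts that add --url dynamically can enforce the credential rule before the CLI does. The following is a minimal Python sketch. It assumes flags are passed as separate argv items (--url VALUE), as in this chapter's examples. The helper name is hypothetical.

```python
from typing import Sequence


def check_url_credentials(argv: Sequence[str]) -> None:
    """Reject commands that pass --url without explicit credentials.

    With --url, the CLI does not fall back to config or environment
    credentials, so --token or --password must be present.
    """
    if "--url" in argv and "--token" not in argv and "--password" not in argv:
        raise ValueError("--url requires an explicit --token or --password")
```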

Devices, pairing, and permissions

Device pairing operations are gated: openclaw devices approve requires an explicit requestId to mint a token. Passing --latest or omitting requestId merely prints the chosen pending request and exits.

Non-admin callers may remove, rotate, or revoke only their own paired devices. Cross-device management needs operator.admin privileges. Token rotation cannot elevate scopes: the rotated token will carry only the scopes already approved for that device; rotating returns the token payload as JSON — treat it as sensitive.

Bulk destructive commands (for example openclaw devices clear) are guarded by confirmation flags like --yes; do not run these without a backup or explicit intent.

Directory and pairing UX

Directory lookups are intended to produce identifiers you paste into subsequent commands (for example openclaw message send --target...). Default directory output is tab-separated with the id in the first field; use --json when scripting. Many channel directory results are config-backed (allowlists and configured groups) rather than live provider directories, so expect differences between what you see here and what the provider API would return.

If multiple pairing-capable channels are configured you must supply a channel positionally or via --channel for pairing list/approve. pairing approve supports --account for multi-account channels and --notify to send a confirmation back to the requester.

QR and remote setup tokens

The QR/setup-code payload contains a short-lived opaque bootstrapToken (not the shared gateway token/password). For openclaw qr, --token and --password are mutually exclusive. Mobile pairing is intentionally conservative: it fails closed for public ws:// gateway URLs — prefer wss:// or Tailscale Serve/Funnel for mobile pairing.

Voice-call plugin

Voice-call commands appear only if the voice-call plugin is installed and enabled on the Gateway. Exposing webhook endpoints via openclaw voicecall expose should be limited to trusted networks; prefer Tailscale Serve to Funnel when possible.

Follow these patterns and checks when scripting or operating channels: prefer --probe for diagnostics, use explicit CLI auth overrides for remote actions, run openclaw doctor --fix for mixed config anomalies, and treat rotated tokens and printed token JSON as high-sensitivity secrets.

Manage channel accounts and runtime status

Channels are the Gateway’s adapters to external messaging systems. You’ll use the channels CLI to inspect which transports are configured, probe per-account health, add or remove accounts, and resolve human-friendly names into canonical channel targets. These commands are the first line of operational checks when a channel behaves oddly or an account needs onboarding.

Quick command cheat-sheet

Use this compact set to discover and inspect channel state. These examples are CLI snippets you can run directly.

openclaw channels list

openclaw channels status

openclaw channels capabilities

openclaw channels capabilities --channel discord --target channel:123

openclaw channels resolve --channel slack "#general" "@jane"

openclaw channels logs --channel all

What each command does and what to expect

openclaw channels list prints configured channel plugins and any named accounts. Expect a short table of channels and counts. If a channel seems missing, check plugins and gateway logs.

openclaw channels status shows per-account state. By default this reads the Gateway’s current runtime view; run with --probe to perform live per-account probeAccount checks (see warning below).

openclaw channels capabilities shows general capability profiles (for example: text, media, buttons). Supplying --channel narrows to that adapter; adding --target asks the adapter whether the specified target supports extra features (file uploads, threading, ephemeral messages).

openclaw channels resolve turns human names or paths into canonical identifiers (useful before scripting sends or bindings).

openclaw channels logs streams channel-adapter logs; use --channel all or a specific channel id.

Status probing and Gateway reachability

The --probe flag triggers live probeAccount calls for each configured account and may run optional auditAccount checks. probeAccount exercises transport-level connectivity and permission checks, so you'll see auth errors (expired token, insufficient scopes) or network failures. If the Gateway is unreachable (daemon stopped, firewall blocking, or wrong socket address), the status command falls back to a config-only summary and will not surface live probe results. If you expect live probes but see only config summaries, verify gateway reachability (openclaw gateway status / gateway probe) before further investigation.

Adding accounts: flags, interactive flows, and binding behavior

openclaw channels add accepts many provider-specific flags: token, private-key, app-token, webhook URL, Matrix homeserver/user fields, Nostr relays, signal-cli path, etc. Use --help for per-channel options; flags differ per adapter. Example patterns:

openclaw channels add --channel telegram --token <bot-token>

openclaw channels add --channel nostr --private-key "$NOSTR_PRIVATE_KEY"

openclaw channels remove --channel telegram --delete

Interactive add flows will often prompt to bind the new account to an agent during onboarding. Non-interactive adds (scripting/automation) do not create or rewrite agent bindings; you must explicitly bind afterward with openclaw agents bind. A common pitfall: some channels historically supported a single top-level account value in config. When you add a named non-default account, OpenClaw will promote existing top-level single-account values into channels.<channel>.accounts.default to avoid losing configuration. Think of this promotion like adding a new user to a multi-tenant system: the global "single-user" settings become a named user to preserve behavior, which can surprise scripted configs.

Remediation for mixed-state configs

If your configuration ends up with both top-level single-account entries and named accounts, causing confusion, run openclaw doctor --fix. Doctor can normalize and migrate top-level values into the accounts map and report the final layout.
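To make the promotion concrete, here is a toy Python model of the layout change. It is not OpenClaw's migration code. The botToken key and the rule that an existing accounts.default value wins are hypothetical. openclaw doctor --fix remains the supported way to apply the change.

```python
import copy
from typing import Any, Dict, Iterable


def promote_single_account(channel_cfg: Dict[str, Any],
                           account_keys: Iterable[str]) -> Dict[str, Any]:
    """Toy model: move top-level per-account keys into accounts["default"].

    Returns a new dict and leaves the input untouched. Values already present
    under accounts.default are kept (an assumed conflict rule).
    """
    cfg = copy.deepcopy(channel_cfg)
    keys = set(account_keys)
    moved = {k: cfg.pop(k) for k in list(cfg) if k in keys}
    if moved:
        default = cfg.setdefault("accounts", {}).setdefault("default", {})
        for key, value in moved.items():
            default.setdefault(key, value)
    return cfg
```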

Interactive login/logout

For channels that require interactive pairing or OAuth flows, use:

openclaw channels login --channel whatsapp

openclaw channels logout --channel whatsapp

If only one login-capable channel exists, the CLI may infer the channel and let you omit --channel.

Inspecting capabilities with and without a target

Querying capabilities without a --target returns the adapter's general feature set. Supplying --target asks the adapter to evaluate the specific destination (channel:123, room name, matrix room id) and returns target-scoped capabilities and constraints; this is helpful to detect per-room restrictions or upload limits before sending large media.

Operational warnings

probeAccount is live and can trigger rate limits or ephemeral locks on provider APIs—avoid running --probe in tight loops.

Channel credentials and private keys are sensitive; never log or store them in public places.

Removing accounts with --delete is destructive. Back up your config before bulk removals.
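One way to honor the no-tight-loops rule in a monitoring script is a small throttle around probe runs, sketched below in Python. The class name and the 60-second interval in the usage comment are hypothetical. Pick an interval that fits your providers' rate limits.

```python
import time
from typing import Callable, Dict


class ProbeThrottle:
    """Allow at most one live probe per key every `min_interval` seconds."""

    def __init__(self, min_interval: float, clock: Callable[[], float] = time.monotonic):
        self.min_interval = min_interval
        self.clock = clock  # injectable so tests can control time
        self._last: Dict[str, float] = {}

    def allow(self, key: str) -> bool:
        now = self.clock()
        last = self._last.get(key)
        if last is not None and now - last < self.min_interval:
            return False  # too soon: skip this probe
        self._last[key] = now
        return True


# Usage sketch (not executed here):
#   throttle = ProbeThrottle(min_interval=60)
#   if throttle.allow("channels-status"):
#       subprocess.run(["openclaw", "channels", "status", "--probe"])
```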

Use these commands as your daily toolbox for channel health, onboarding, and troubleshooting; they are the starting point before digging into adapter logs, gateway probes, or device pairing flows.

Device pairing: list, approve, rotate, revoke, and safe bulk actions

Always inspect paired devices and pending requests before mutating anything. The list command is your safe read-only starting point; use --json for machine-parsable output you can pipe into scripts or audits.

openclaw devices list

openclaw devices list --json

The list shows active paired devices and any pending pairing requests (requestId, requested roles/scopes, originating client metadata). Record the requestId for approvals or rejections; it is the canonical reference used by mutating commands.

Approving and rejecting pairing requests

Approvals must reference an explicit requestId. Running approve without an ID does not create a token. Instead, openclaw devices approve with no arguments will print the selected pending request and exit so you can inspect it first. The --latest flag behaves as a preview helper: it prints the most recent pending request and exits — it does not perform approval.

To mint a token you must run approve with the exact requestId.

Preview and approve examples:

openclaw devices approve

openclaw devices approve <requestId>

openclaw devices approve --latest

Important rule: openclaw devices approve requires an explicit requestId to mint a token; using --latest or omitting requestId only prints the selected pending request and exits.

Device token rotation and revocation

Rotation issues a new token for an existing device/role. Rotation cannot expand the device's privileges: you may not rotate to mint a token with a broader role or scopes than were originally approved for that device. The rotation command returns the new token payload as JSON on success; treat that output as a secret. Persist it to a secure store immediately (secret manager, vault) and rotate any consumers that used the old token.

Example: rotate for operator role with explicit scopes:

openclaw devices rotate --device <deviceId> --role operator --scope operator.read --scope operator.write

You can also rotate with a minimal command when no scope change is needed:

openclaw devices rotate --device <deviceId> --role operator

To revoke an issued token for a specific role:

openclaw devices revoke --device <deviceId> --role node

Permission boundaries

Non-admin callers can only manage their own device entries. remove, rotate, and revoke operations invoked by a non-admin are restricted to the caller's deviceId. Cross-device management requires operator.admin privileges. Always verify your caller privileges before attempting cross-device actions to avoid confusing "permission denied" failures.
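The permission rules above can be modeled client-side to pre-check a request before sending it. The Gateway is still the authority. This Python sketch assumes scopes arrive as plain strings such as operator.read and operator.admin. The helper names are hypothetical.

```python
from typing import Iterable

ADMIN_SCOPE = "operator.admin"


def may_manage_device(caller_device_id: str, caller_scopes: Iterable[str],
                      target_device_id: str) -> bool:
    """remove/rotate/revoke: own device always, other devices only as admin."""
    return target_device_id == caller_device_id or ADMIN_SCOPE in set(caller_scopes)


def rotation_scopes_ok(approved: Iterable[str], requested: Iterable[str]) -> bool:
    """Rotation cannot elevate: requested scopes must be a subset of approved ones."""
    return set(requested) <= set(approved)
```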

Removing devices and bulk clears

Remove deletes a single paired device entry:

openclaw devices remove <deviceId>

openclaw devices remove <deviceId> --json

Bulk removal is gated by an explicit confirmation flag to prevent accidents. Use --pending to target only outstanding pairing requests. This command is destructive and irreversible; take a backup of ~/.openclaw or your device store if you need a recoverable snapshot.

openclaw devices clear --yes

openclaw devices clear --yes --pending

openclaw devices clear --yes --pending --json

Warning: clear requires --yes. Without it the command will refuse to run. Also, when you pass --url to any devices command, the CLI will not fall back to config or environment credentials; you must supply --token or --password explicitly, and omitting credentials in that case is an error.

Audit and operational notes

Token rotation prints the new token as sensitive JSON output; treat it like any secret: copy it to a vault and switch dependent clients to the new token immediately.

Approvals and revocations should be logged and correlated with requestId and deviceId for auditing. Expect your system logs or gateway audit trail to include these operations if auditing is enabled.

Common safe workflow: list → preview pending with --latest → approve by requestId → store returned token securely.
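The safe workflow can be encoded so a script never approves without an explicit requestId. The Python sketch below only builds command lines from the forms shown in this section. The helper names are hypothetical. Running each step, with a human reviewing the preview, is left to the caller.

```python
from typing import List


def approve_argv(request_id: str) -> List[str]:
    """Build an approve command; refuse anything that is not an explicit requestId."""
    rid = request_id.strip()
    if not rid or rid.startswith("-"):
        raise ValueError("pass the exact requestId; --latest and a bare approve only preview")
    return ["openclaw", "devices", "approve", rid]


def safe_approval_plan(request_id: str) -> List[List[str]]:
    """list -> preview the latest pending request -> approve by requestId."""
    return [
        ["openclaw", "devices", "list", "--json"],
        ["openclaw", "devices", "approve", "--latest"],
        approve_argv(request_id),
    ]
```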

Directory lookups and constructing message targets

You need a canonical, machine-safe identifier before sending a message. The directory commands return those identifiers so you can paste or pipe them into openclaw message send. By default directory output prints a human-oriented line with the identifier as the first field (tab-separated), which is convenient to copy by hand. For automation or scripts always use --json so you parse a stable structure instead of relying on visual output.

The directory is often a local/config-backed index rather than a live provider lookup. Channels frequently expose an allowlist or workspace-configured group membership; results may not reflect external provider-side changes until you refresh pairing or re-sync the channel. Treat directory lookups as authoritative for Gateway-aware routing, but validate freshness if you need up-to-the-second provider state.

When your host has multiple channels configured, include --channel to disambiguate. If only one channel is configured, the CLI may infer the channel; do not rely on inference in scripts or automation — opt for explicit --channel to avoid accidental delivery to the wrong account.

Example: find a peer and then send a message using the returned canonical ID. The first command is illustrative input; copy the returned ID into the second command or pipe it in a script.

openclaw directory peers list --channel slack --query "U0"

openclaw message send --channel slack --target user:U012ABCDEF --message "hello"

To discover which local account the Gateway is using for a given channel, query the special self entry. This returns the configured identity you should use for system messages or self-targeted flows.

openclaw directory self --channel zalouser

Large directories can be filtered and limited. Use --query to search by name or partial id, and --limit to bound results when paging or scanning large directories.

openclaw directory peers list --channel zalouser

openclaw directory peers list --channel zalouser --query "name"

openclaw directory peers list --channel zalouser --limit 50

Group workflows: list groups to find the group id, then fetch members by group id before messaging the group target.

openclaw directory groups list --channel zalouser

openclaw directory groups list --channel zalouser --query "work"

openclaw directory groups members --channel zalouser --group-id <id>

Quick scripting checklist

Use --json for machine parsing.

Always pass --channel in scripts when more than one channel exists.

Validate identifier format before send (e.g., user:U..., group:G..., channel-specific prefixes).

Remember directory may be config-backed; re-sync or re-pair if results seem stale.
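The explicit-channel and identifier-format items in the checklist can be combined into a small pre-send check. The prefixes accepted here (user:, group:, channel:) are only those that appear in this chapter's examples. Real channels may use others, so treat the pattern and the helper name as assumptions.

```python
import re
from typing import List

# Prefixes seen in this chapter's examples; extend per channel as needed.
TARGET_RE = re.compile(r"(user|group|channel):[A-Za-z0-9._@+-]+")


def send_argv(channel: str, target: str, message: str) -> List[str]:
    """Build `openclaw message send` with an explicit channel and a validated target."""
    if not channel:
        raise ValueError("always pass --channel in scripts")
    if not TARGET_RE.fullmatch(target):
        raise ValueError(f"unexpected target format: {target!r}")
    return ["openclaw", "message", "send", "--channel", channel,
            "--target", target, "--message", message]
```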

Pairing approvals and generating QR/setup codes

Pairing is the operator gate for allowing mobile or companion devices to send messages into a Gateway-managed account. Treat pairing requests like access requests: inspect them, scope approvals to the correct channel/account, and send a confirmation to the requester when appropriate.

When you list pending pairing requests use openclaw pairing list. If your installation has more than one pairing-capable channel configured you must supply which channel to inspect either positionally or with --channel; otherwise the CLI will refuse to proceed. Example queries for a Telegram workspace look like this:

openclaw pairing list telegram

openclaw pairing list --channel telegram --account work

openclaw pairing list telegram --json

The --account flag filters requests for multi-account channels (Telegram, WhatsApp, etc.). Use --json for machine-readable output you can feed into automation or approval UIs.

Approving a pairing code follows the same scoping rules: provide a channel when multiple pairing-capable channels exist, and pass --account if the request is tied to a specific account. You can optionally notify the requesting device; --notify sends a confirmation message back to that device after approval. Typical approval flows:

openclaw pairing approve <code>

openclaw pairing approve telegram <code>

openclaw pairing approve --channel telegram --account work <code> --notify

--notify is useful when the requester expects an in-channel acknowledgment or a short "paired" message; it does not change the permissions the pairing grants.

Generating a mobile pairing QR or setup code uses openclaw qr. The setup payload contains a short-lived, opaque bootstrapToken rather than the shared gateway token or password; this token is scoped for onboarding and expires quickly. You can request just the setup code, render a QR image in your UI, or emit JSON for tooling:

openclaw qr

openclaw qr --setup-code-only

openclaw qr --json

openclaw qr --remote

openclaw qr --url wss://gateway.example/ws

Key rules and remote-mode behavior

--token and --password are mutually exclusive for openclaw qr. Do not pass both.

When you use --remote, the command requires either gateway.remote.url to be configured or gateway.tailscale.mode set to serve or funnel. openclaw qr --remote will attempt to resolve active remote credentials from the Gateway snapshot. If those credentials are stored as SecretRefs and the active Gateway supports secrets.resolve, the CLI asks the Gateway to resolve them; if the Gateway is unreachable or does not support secrets.resolve, the command fails fast. This prevents exposing secret material in the local environment unintentionally.

The setup payload uses a bootstrapToken unique to the setup operation. It is not the persistent gateway token/password; treat it as short-lived and single-use.
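These rules can be checked before invoking the CLI, as in the following Python sketch. It represents the dotted config keys (gateway.remote.url, gateway.tailscale.mode) as a nested dict, which is an assumption about how your script loads config. The helper name is hypothetical, the Gateway-side SecretRef resolution is not modeled, and combining --json with --remote is assumed rather than verified.

```python
from typing import Any, Dict, List, Optional


def qr_argv(remote: bool = False, url: Optional[str] = None,
            token: Optional[str] = None, password: Optional[str] = None,
            config: Optional[Dict[str, Any]] = None) -> List[str]:
    """Build an `openclaw qr --json` command after checking the documented rules."""
    if token and password:
        raise ValueError("--token and --password are mutually exclusive for openclaw qr")
    if remote:
        gateway = (config or {}).get("gateway", {})
        remote_url = gateway.get("remote", {}).get("url")
        ts_mode = gateway.get("tailscale", {}).get("mode")
        if not remote_url and ts_mode not in ("serve", "funnel"):
            raise ValueError("--remote needs gateway.remote.url or gateway.tailscale.mode serve|funnel")
    argv = ["openclaw", "qr", "--json"]
    if remote:
        argv.append("--remote")
    if url:
        argv += ["--url", url]
    if token:
        argv += ["--token", token]
    if password:
        argv += ["--password", password]
    return argv
```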

Operational warnings

Mobile pairing is intentionally conservative: public, non-TLS ws:// endpoints and Tailscale public ws:// URLs may fail closed. Prefer Tailscale Serve or Funnel or expose a wss:// endpoint for reliable mobile pairing.

Treat pairing codes and bootstrap payloads as sensitive. Do not publish them in public logs.
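A script that hands out pairing payloads can screen the URL first. This Python sketch only checks the scheme. It is a conservative pre-check that treats anything other than wss:// as needing review, not a reproduction of OpenClaw's own fail-closed logic. The helper name is hypothetical.

```python
from urllib.parse import urlparse


def pairing_url_warning(url: str) -> str:
    """Return '' if the URL looks suitable for mobile pairing, else a reason."""
    parsed = urlparse(url)
    if parsed.scheme == "wss":
        return "" if parsed.hostname else "wss:// URL has no host"
    if parsed.scheme == "ws":
        return "plain ws:// is likely to fail closed for mobile pairing; use wss:// or Tailscale Serve/Funnel"
    return f"unsupported scheme {parsed.scheme!r}; expected wss://"
```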

Realistic sequence

Run openclaw pairing list telegram to find the pending code and identify account context.

Approve it with openclaw pairing approve --channel telegram --account work <code> --notify to both grant access and inform the requester.

If onboarding a phone remotely, run openclaw qr --remote --url wss://gateway.example/ws (or ensure gateway.tailscale.mode=serve). If the Gateway delegates secret resolution, ensure it is reachable so the command can return a valid setup payload.

These steps let you validate, scope, approve, and distribute pairing tokens safely while respecting remote-secret resolution and transport constraints.

Voice-call plugin: call lifecycle and safe webhook exposure

Voice calling is controlled by a gateway-side plugin; the CLI surface appears only after you install and enable that plugin. If the voice-call plugin is not enabled, openclaw voicecall will not be present—verify installation before troubleshooting call failures.

The voicecall commands implement a simple call lifecycle: initiate a call (call), send follow-up content into an ongoing call (continue), terminate a call (end), and probe call state (status). Use status to check delivery, current media/webhook events, or a provider-assigned call identifier. These examples are runnable CLI commands you can use from an operator shell once the plugin is active:

openclaw voicecall status --call-id <id>

openclaw voicecall call --to "+15555550123" --message "Hello" --mode notify

openclaw voicecall continue --call-id <id> --message "Any questions?"

openclaw voicecall end --call-id <id>

Exposing a webhook endpoint for inbound provider events (call progress, DTMF, recordings) is a separate operational decision. The plugin supports three exposure modes: serve, funnel, and off. Serve publishes the endpoint through Tailscale Serve, which makes it reachable only from your tailnet (recommended when you run Tailscale); Funnel publishes it to the public internet through Tailscale Funnel, which may be easier in some deployments but increases the attack surface; off disables external exposure, leaving only loopback/websocket delivery.

Switch exposure modes with the CLI:

openclaw voicecall expose --mode serve

openclaw voicecall expose --mode funnel

openclaw voicecall expose --mode off

Security checklist before opening webhooks:

Verify the voice-call plugin is enabled and up-to-date.

Prefer --mode serve (Tailscale Serve) to restrict traffic to your Tailscale network and audit who can hit the endpoint.

If you must use funnel or public endpoints, restrict by IP, require HMAC/replay protection from providers, and keep tokens secret.

Monitor gateway logs for unexpected inbound webhook spikes immediately after enabling exposure.

Warning: only expose webhooks to trusted networks. Funnel or public endpoints can surface provider-facing attack vectors—audit access and rotate provider keys after testing.
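If you do expose a webhook, the HMAC and replay advice in the checklist amounts to something like this Python sketch. The signing scheme (HMAC-SHA256 over the timestamp, a dot, and the raw body) and the five-minute window are hypothetical. Each telephony provider documents its own signature format, and this does not describe what the voice-call plugin itself verifies.

```python
import hashlib
import hmac
import time
from typing import Optional


def verify_webhook(secret: bytes, body: bytes, timestamp: str, signature_hex: str,
                   max_skew_s: int = 300, now: Optional[float] = None) -> bool:
    """Verify a hypothetical HMAC-SHA256 signature over b"<timestamp>.<body>".

    Rejects malformed timestamps and requests outside the replay window.
    """
    now = time.time() if now is None else now
    try:
        sent_at = int(timestamp)
    except ValueError:
        return False
    if abs(now - sent_at) > max_skew_s:
        return False  # too old or too far in the future: possible replay
    expected = hmac.new(secret, timestamp.encode() + b"." + body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), signature_hex.encode())
```

A time window alone does not stop a replay inside the window. If the provider sends a unique event id, also record seen ids and reject duplicates.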

Commands cheatsheet and scripting tips

When you automate channel tasks or need a quick lookup at the shell, favor small, copy-pasteable commands and predictable machine-readable outputs. The two canonical command groups below (channel probes and pairing/QR generation) cover the most common day-to-day operations: discovering channel capabilities and producing pairing artifacts for mobile or companion nodes.

Channel probes — quick checks and discovery

openclaw channels list

openclaw channels status

openclaw channels capabilities

openclaw channels capabilities --channel discord --target channel:123

openclaw channels resolve --channel slack "#general" "@jane"

openclaw channels logs --channel all

openclaw channels list: enumerate configured channel adapters and their IDs.

openclaw channels status: health and connection state for each adapter.

openclaw channels capabilities: what features (messages, attachments, threads) the Gateway sees; use --channel and --target to scope checks to a specific channel adapter or target.

openclaw channels resolve: translate human identifiers to canonical target refs (useful before sending messages or approving pairings).

openclaw channels logs: fetch channel adapter logs; --channel all is useful for broad troubleshooting.

Pairing and QR generation — produce the payloads clients consume

openclaw qr

openclaw qr --setup-code-only

openclaw qr --json

openclaw qr --remote

openclaw qr --url wss://gateway.example/ws

openclaw qr: prints a pairing QR and setup code for local clients; suitable for interactive pairing flows.

--setup-code-only: emit the textual setup code without a QR image wrapper (handy for voice or keyboard entry).

--json: machine-readable artifact — required for scripts that pass the payload to other processes.

--remote: request a QR/setup payload that is valid for remote onboarding (requires gateway.remote.url, or gateway.tailscale.mode set to serve or funnel).

--url: override the gateway URL encoded into the payload for non-default network topologies.
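Because a setup code or QR payload is credential material, a script that captures openclaw qr --json should never leave it world-readable. The sketch below assumes only that qr --json writes its payload to stdout and that --remote or --url can follow it. The helper name save_pairing_payload is hypothetical, and the JSON is saved verbatim rather than parsed because its field names are not documented here.

```shell
# save_pairing_payload (hypothetical): write `openclaw qr --json` output to a
# file readable only by the current user. Extra arguments (for example
# --remote or --url wss://...) are passed through to `openclaw qr`.
save_pairing_payload() {
  local out
  if [ -z "$1" ]; then
    echo "usage: save_pairing_payload <outfile> [qr flags...]" >&2
    return 2
  fi
  out=$1
  shift
  # Remove any old file so the new one is created fresh under the umask below.
  rm -f -- "$out"
  # umask 077 inside a subshell: the file is created with mode 0600.
  if ! ( umask 077 && openclaw qr --json "$@" >"$out" ); then
    rm -f -- "$out"   # never leave a partial or empty payload behind
    echo "error: openclaw qr --json failed; no payload saved" >&2
    return 1
  fi
}

# Example: save_pairing_payload ./pairing.json --remote
```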

Three scripting tips

Always use --json for automation. Human-friendly text is fragile; JSON is stable for parsing and plumbing into CI or companion daemons.

When your instance has multiple channel adapters, pass --channel explicitly (or resolve a target first) so scripts operate on the intended account.

Before running probes or pairing flows in scripts, assert gateway connectivity (openclaw gateway status or a small /status probe). Failing fast avoids generating invalid pairing tokens.
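The connectivity check in the third tip can be written as a small retry loop, which also helps right after openclaw gateway start, when the daemon may need a moment to come up. This is a sketch under one assumption: that openclaw gateway status exits nonzero when the Gateway is unreachable. The function name and the defaults (five attempts, two seconds apart) are arbitrary choices, not OpenClaw defaults.

```shell
# wait_for_gateway (hypothetical): poll `openclaw gateway status` until it
# succeeds or the attempts run out.
# ASSUMPTION: `gateway status` exits nonzero when the Gateway is unreachable.
# Usage: wait_for_gateway [attempts] [delay-seconds]
wait_for_gateway() {
  local attempts delay i
  attempts=${1:-5}
  delay=${2:-2}
  i=1
  while [ "$i" -le "$attempts" ]; do
    if openclaw gateway status >/dev/null 2>&1; then
      return 0
    fi
    # Sleep between attempts, but not after the last one.
    if [ "$i" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    i=$((i + 1))
  done
  echo "error: gateway not reachable after $attempts attempts" >&2
  return 1
}

# Example: gate a probe so it never runs against a dead Gateway.
# wait_for_gateway 5 2 || exit 1
# openclaw channels capabilities --channel discord --target channel:123
```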

Safety notes

Rotated tokens and pairing secrets are sensitive. Treat any setup code or QR payload as credential material; store them like API keys.

Avoid --yes on destructive device or channel removal commands in unattended scripts unless you intentionally want irreversible deletes.

OpenClaw CLI: Flags, Output, and Workflows

What this CLI chapter covers

OpenClaw’s CLI is both the operator’s control surface and the automation entry point. You’ll use it interactively for troubleshooting and day‑to‑day tasks, and programmatically from scripts and CI. This chapter organizes the surface so you can quickly find a human‑friendly example or a machine‑friendly flag depending on the task.

Start here for two quick facts you’ll reuse throughout the book: global flags control state isolation and output format, and TTY presence changes behavior and coloring. Use --dev or --profile to isolate runtime state under alternate workspaces; this avoids accidental cross‑workspace operations. For automation and parsers prefer --json or --plain; --json yields machine‑parseable objects, --plain suppresses ANSI styling while preserving readable text.

The chapter is laid out for both discovery and execution:

Global flags and the state‑isolation model, including --dev, --profile, and container/targeting flags.

Output and TTY behavior: --json, --plain, --no-color, and when the CLI emits progress/interactive prompts vs non‑interactive summaries.

A single canonical command‑tree reference (a flattened sheet, one entry per line) for fast lookups. Treat the command‑tree as a reference map only; it’s not a runnable command.

Practical, copy‑paste examples grouped by common operator tasks: channels (add/login/status), logs and probes (gateway status, gateway probe, openclaw doctor), and pairing/approval flows.

Examples in this chapter are runnable shell snippets unless marked otherwise. When scripting, always pass --json and check exit codes rather than relying on colored output. When a command can be destructive (reset, uninstall, gateway uninstall), the examples explicitly show backup and confirm steps. Use this chapter as a quick reference: consult the command‑tree to find the command name, then run the nearby example block for the exact flags and expected machine output.

Global flags, profiles, and state isolation

The global flags that change which state directory OpenClaw reads and writes let you create reproducible, isolated testing environments and target containerized runtimes without touching your primary installation. Use --dev to create a developer-local state area and --profile <name> to create named, separate state directories; both flags move all persisted CLI-visible state (configuration, sessions, plugins, approvals, device pairings, workspace files) into their own directory.

When you run with --dev the runtime stores state under ~/.openclaw-dev. When you run with --profile staging the runtime stores state under ~/.openclaw-staging. This isolation is persistent: any command you run with the same flag will read and write the same profile directory until you remove it.
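Scripts that back up or inspect a profile need to know which directory a given flag maps to. The helper below encodes the mapping described above: no flag uses ~/.openclaw, --dev uses ~/.openclaw-dev, and --profile <name> uses ~/.openclaw-<name>. That last rule is generalized from the single staging example, so treat it as an assumption and confirm it against your installation. openclaw_state_dir is a hypothetical name.

```shell
# openclaw_state_dir (hypothetical): print the state directory a flag maps to.
# ASSUMPTION: --profile <name> always maps to ~/.openclaw-<name>, generalized
# from the documented staging example.
openclaw_state_dir() {
  case "${1:-}" in
    "")
      printf '%s\n' "$HOME/.openclaw" ;;
    --dev)
      printf '%s\n' "$HOME/.openclaw-dev" ;;
    --profile)
      if [ -z "${2:-}" ]; then
        echo "usage: openclaw_state_dir [--dev | --profile <name>]" >&2
        return 2
      fi
      printf '%s\n' "$HOME/.openclaw-$2" ;;
    *)
      echo "usage: openclaw_state_dir [--dev | --profile <name>]" >&2
      return 2 ;;
  esac
}

# Example: ls "$(openclaw_state_dir --profile staging)"
```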

One-line examples:

Quick developer sandbox:

openclaw --dev plugins install ./local-plugin

openclaw --dev gateway start

Separate Gateway instance for staging:

openclaw --profile staging onboard --non-interactive

openclaw --profile staging gateway install

Warning: these flags are not ephemeral overrides. They affect live data (sessions, approvals, installed plugins, and agent state), so use them deliberately. Back up or snapshot the profile directory before destructive actions like openclaw --profile staging reset or uninstall.
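One way to follow that warning is to archive the profile directory with tar before any reset or uninstall. This is a minimal sketch: the function name and the archive naming scheme are illustrative, and openclaw backup create (listed in the command tree later in this chapter) is an alternative whose exact scope and flags are not covered here. The archive gets owner-only permissions because the directory holds pairing secrets and tokens.

```shell
# snapshot_state_dir (hypothetical): archive a state directory such as
# ~/.openclaw-staging into <dest>/<name>-<timestamp>.tar.gz and print the path.
snapshot_state_dir() {
  local dir dest name stamp archive
  dir=$1
  dest=${2:-.}
  if [ ! -d "$dir" ]; then
    echo "error: '$dir' is not a directory" >&2
    return 1
  fi
  name=$(basename "$dir")
  stamp=$(date +%Y%m%d-%H%M%S)
  archive="$dest/$name-$stamp.tar.gz"
  # umask 077: the archive contains sessions, approvals, and pairing secrets.
  ( umask 077 && tar -czf "$archive" -C "$(dirname "$dir")" "$name" ) || return 1
  printf '%s\n' "$archive"
}

# Example, before a destructive command:
# snapshot_state_dir "$HOME/.openclaw-staging" "$HOME/backups" &&
#   openclaw --profile staging reset
```

Archiving relative to the parent directory (tar -C) keeps paths inside the tarball short, so restoring is a single tar -xzf in your home directory.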

Targeting container runtimes: use --container <name> to direct the CLI at a named container target. This lets the same CLI command execute against a containerized Gateway rather than the local daemon. Example:

openclaw --container gateway-container status

Typical workflows:

Develop a plugin locally under --dev so you can iterate without polluting your primary ~/.openclaw.

Run a second, isolated Gateway using --profile staging for integration testing, CI, or blue/green experiments.

Combine --container with a profile to point at a containerized Gateway that uses a separate profile-backed volume.

Keep these flags in your operational playbook for safe experimentation and predictable, reproducible CLI-driven workflows.

Output formatting, colors, and TTY behavior

Interactive runs show richer, human-friendly output; scripts and CI need stable, machine-friendly output. OpenClaw adapts automatically based on whether it detects a TTY, and provides explicit flags and an environment variable for operators to force the mode they need.

When OpenClaw detects a TTY it emits ANSI colors, OSC-8 hyperlinks, and progress indicators. Long-running commands display a progress indicator that uses the OSC 9;4 escape sequence when the terminal supports it. In non-TTY contexts (pipes, CI, logs) OpenClaw falls back to plain URLs, no progress styling, and no animated indicators.

You can override detection and control formatting:

--no-color disables ANSI colors. The NO_COLOR=1 environment variable is also honored and has the same effect.

--json emits structured JSON output where supported; it suppresses styling regardless of TTY and is the preferred mode for scripts and automation.

--plain (where supported) requests text output without styling or extra decorations; it similarly disables colors and rich links but preserves human-readable plaintext.

Examples:

openclaw agents list --json

openclaw gateway status --no-color

openclaw logs tail --plain

Note the difference: --no-color only removes ANSI color sequences; it does not change the output format to JSON. Use --json when you need parseable output. Use NO_COLOR=1 to force colorless output for entire CI jobs without changing every command.
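In a CI job the two ideas combine: export NO_COLOR=1 once for the whole job, then have scripts request --json and trust the exit code rather than the text. The sketch below shows that pattern. capture_json is a hypothetical helper, and it leaves --json to the caller because not every command supports it.

```shell
# CI prelude: one export disables ANSI colors for every openclaw call in the job.
export NO_COLOR=1

# capture_json (hypothetical): run an openclaw command (include --json in the
# arguments), save stdout to <outfile>, and return the CLI's own exit code.
# On failure the output file is removed so later steps cannot parse it.
capture_json() {
  local out rc
  out=$1
  shift
  openclaw "$@" >"$out"
  rc=$?
  if [ "$rc" -ne 0 ]; then
    echo "openclaw $* failed (exit $rc)" >&2
    rm -f -- "$out"
  fi
  return "$rc"
}

# Example: capture_json agents.json agents list --json || exit 1
```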

OpenClaw’s visual identity (the lobster color palette) is defined in the repository at src/terminal/palette.ts; that file is the source of truth for CLI colors. Think of TTY vs non‑TTY like a GUI that hides animations and hyperlinks when running headless: OpenClaw preserves semantics but removes transient/terminal-dependent flourishes so logs and CI remain stable and machine-consumable.

Flattened command‑tree reference (compact)

Warning: the block below is a flattened, human-readable reference of the openclaw command tree. It is not a single runnable command; treat each token as a separate subcommand or namespace to call (for example: openclaw channels add...). Use this listing to discover namespaces and aliases, then consult the runnable examples later in this chapter.

openclaw [--dev] [--profile <name>] <command>

setup

onboard

configure

config

get

set

unset

file

schema

validate

completion

doctor

dashboard

backup

create

verify

security

audit

secrets

reload

audit

configure

apply

reset

uninstall

update

wizard

status

channels

list

status

capabilities

resolve

logs

add

remove

login

logout

directory

self

peers list

groups list|members

skills

search

install

update

list

info

check

plugins

list

inspect

install

uninstall

update

enable

disable

doctor

marketplace list

memory

status

index

search

wiki

status

doctor

init

ingest

compile

lint

search

get

apply

bridge import

unsafe-local import

obsidian status|search|open|command|daily

message

send

broadcast

poll

react

reactions

read

edit

delete

pin

unpin

pins

permissions

search

thread create|list|reply

emoji list|upload

sticker send|upload

role info|add|remove

channel info|list

member info

voice status

event list|create

timeout

kick

ban

agent

agents

list

add

delete

bindings

bind

unbind

set-identity

acp

mcp

serve

list

show

set

unset

status

health

sessions

cleanup

tasks

list

audit

maintenance

show

notify

cancel

flow list|show|cancel

gateway

call

usage-cost

health

status

probe

discover

install

uninstall

start

stop

restart

run

daemon

status

install

uninstall

start

stop

restart

logs

system

event

heartbeat last|enable|disable

presence

models

list

status

set

set-image

aliases list|add|remove

fallbacks list|add|remove|clear

image-fallbacks list|add|remove|clear

scan

infer (alias: capability)

list

inspect

model run|list|inspect|providers|auth login|logout|status

image generate|edit|describe|describe-many|providers

audio transcribe|providers

tts convert|voices|providers|status|enable|disable|set-provider

video generate|describe|providers

web search|fetch|providers

embedding create|providers

auth add|login|login-github-copilot|setup-token|paste-token

auth order get|set|clear

sandbox

list

recreate

explain

cron

status

list

add

edit

rm

enable

disable

runs

run

nodes

status

describe

list

pending

approve

reject

rename

invoke

notify

push

canvas snapshot|present|hide|navigate|eval

canvas a2ui push|reset

camera list|snap|clip

screen record

location get

devices

list

remove

clear

approve

reject

rotate

revoke

node

run

status

install

uninstall

stop

restart

approvals

get

set

allowlist add|remove

browser

status

start

stop

reset-profile

tabs

open

focus

close

profiles

create-profile

delete-profile

screenshot

snapshot

navigate

resize

click

type

press

hover

drag

select

upload

fill

dialog

wait

evaluate

console

pdf

hooks

list

info

check

enable

disable

install

update

webhooks

gmail setup|run

pairing

list

approve

qr

clawbot

qr

docs

dns

setup

tui

Use this listing as an index: search for high-level namespaces (channels, plugins, agents, gateway, nodes, models, auth, logs, cron, sandbox). The first line is the CLI root with its global flags; each namespace is followed by its subcommands. Aliases are indicated inline (for example: infer (alias: capability)). Global flags you’ll commonly use are --dev (developer isolation) and --profile <name> (workspace/profile isolation); many commands accept --json or --plain for machine-friendly output (see the output-formatting section earlier in this chapter).

When you need a runnable example, jump to the practical examples that follow in this chapter (channels add/login, gateway probe/health, streaming logs). If a listing entry looks destructive (reset, uninstall, delete), stop and run openclaw backup create first. The flattened block is intentionally exhaustive; use it to surface the right namespace and then invoke that specific subcommand with its arguments.
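The backup-first rule can be enforced in scripts with a small wrapper that runs openclaw backup create and proceeds to the destructive command only if the backup succeeded. This is a sketch that assumes backup create needs no extra arguments and honors the same --dev or --profile global flag as the command it protects. with_backup is a hypothetical name.

```shell
# with_backup (hypothetical): run `openclaw backup create`, then the given
# command, forwarding an optional leading --dev or --profile <name> to both.
# ASSUMPTION: `backup create` takes no required arguments and respects the
# global state-isolation flags.
# Usage: with_backup [--dev | --profile <name>] <command...>
with_backup() {
  local name
  case "$1" in
    --dev)
      shift
      if ! openclaw --dev backup create; then
        echo "backup failed; not running: openclaw --dev $*" >&2
        return 1
      fi
      openclaw --dev "$@"
      ;;
    --profile)
      if [ "$#" -lt 3 ]; then
        echo "usage: with_backup [--dev | --profile <name>] <command...>" >&2
        return 2
      fi
      name=$2
      shift 2
      if ! openclaw --profile "$name" backup create; then
        echo "backup failed; not running: openclaw --profile $name $*" >&2
        return 1
      fi
      openclaw --profile "$name" "$@"
      ;;
    *)
      if ! openclaw backup create; then
        echo "backup failed; not running: openclaw $*" >&2
        return 1
      fi
      openclaw "$@"
      ;;
  esac
}

# Example: with_backup --profile staging reset
```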

Channels: add, remove, and status — practical examples