Prompt Repetition Improves Non-Reasoning LLMs

A zero-latency, hardware-free trick to fix your AI’s attention span.

Explaining a paper that is trending right now: https://arxiv.org/pdf/2512.14982

Result — repeat the question out loud, and the model suddenly listens better

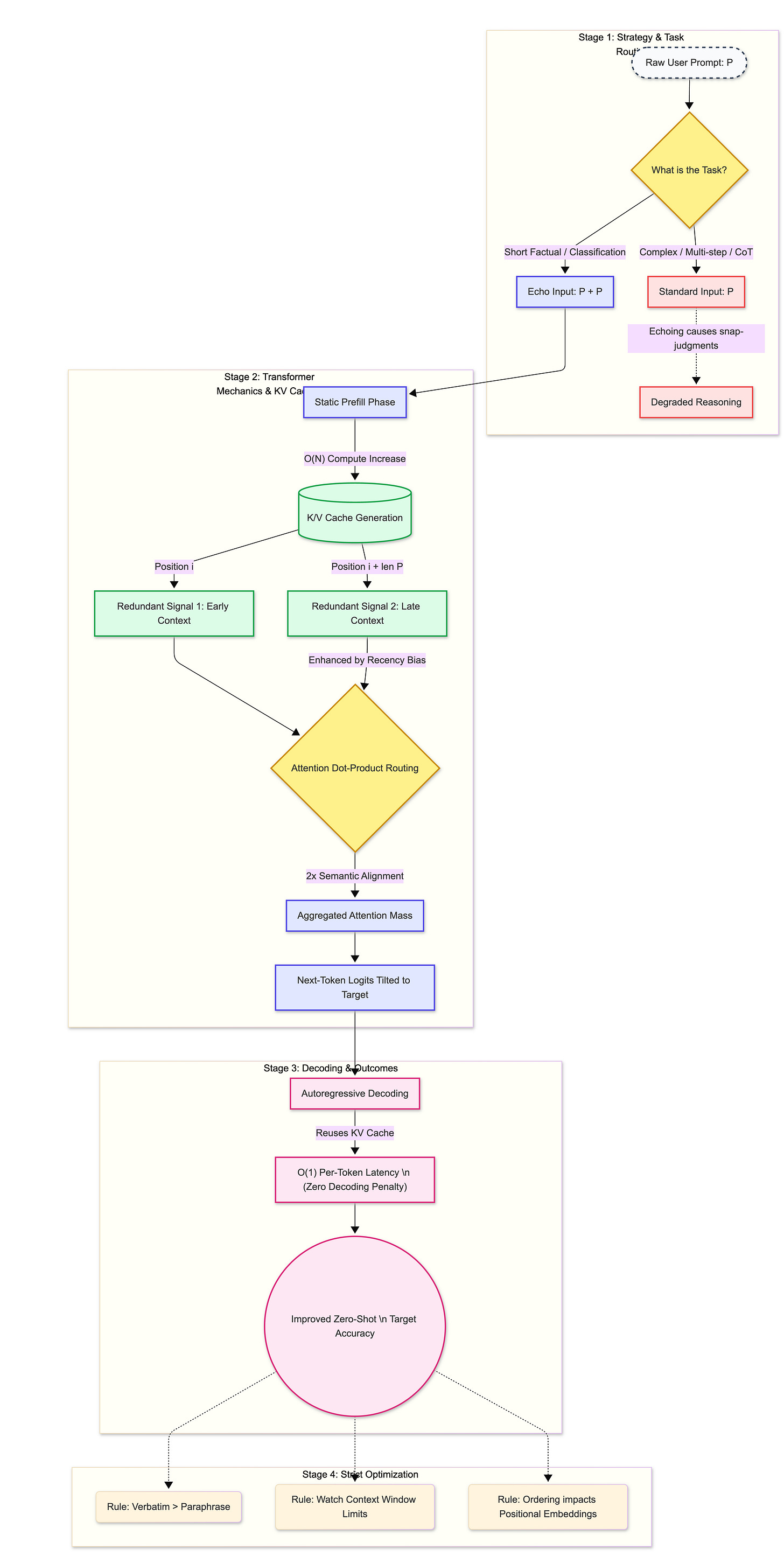

Here’s the simple, jaw-dropping takeaway: by verbatim-duplicating the user prompt inside the model’s context — an “echo” — off‑the‑shelf LLMs like Gemini, GPT, Claude and others get measurably better at short factual and classification tasks. The magic: you don’t ask the model to generate extra tokens, you don’t retrain it, and you don’t slow down decoding. You just put the same words in the input twice, and the model’s next-token probabilities tilt toward the right answers. Isn’t that delightfully tiny and surprisingly powerful?
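To make the "echo" concrete, here is a minimal sketch of the duplication step in Python. The helper names (`repeat_prompt`, `build_messages`), the blank-line separator, and the generic chat-message shape are assumptions for illustration; they are not taken from the paper or from any particular vendor's API.

```python
def repeat_prompt(prompt: str, times: int = 2, separator: str = "\n\n") -> str:
    """Return the prompt duplicated verbatim `times` times.

    The separator is an assumption; a blank line is used here only
    to keep the two copies visually distinct.
    """
    if times < 1:
        raise ValueError("times must be at least 1")
    return separator.join([prompt] * times)


def build_messages(prompt: str, times: int = 2) -> list[dict[str, str]]:
    """Wrap the echoed prompt in a generic chat-style message list.

    The {"role": ..., "content": ...} shape is a common convention,
    used here hypothetically rather than tied to a specific client.
    """
    return [{"role": "user", "content": repeat_prompt(prompt, times)}]


question = "Which planet is closest to the Sun? (A) Venus (B) Mercury (C) Mars"
echoed = repeat_prompt(question)
print(echoed)  # the same question, twice, separated by a blank line
```

Only the input changes: the echoed string is sent in place of the original prompt, and the model is asked for exactly the same output as before.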

Now let’s unpack why this works, when it helps, how to do it in practice, and why this little trick deserves a place in your prompt-engineering toolbox.

The pain: big, slow, or brittle — the existing ways of fixing LLM mistakes

Why should a tiny trick like repeating the prompt even matter? Because large language models are excellent, but annoyingly brittle.

They miss or underweight important bits of short prompts. Ask a multiple‑choice question or a short factual query and sometimes the model simply “forgets” the crucial phrase.

The common remedies are expensive: scale up the model (bigger weights = more cost), ensemble multiple models (more forward passes), or fine‑tune for the task (retraining and maintenance). Respectively, that is like turning up the volume, hiring more listeners, or rewiring the listener: effective but costly.

Some clever prompting tricks (chain-of-thought, few-shot examples) also help, but they either rely on the model’s reasoning behavior or add decoding overhead.