Quant Trading with Python: A Guide to Limit Order Book Analysis EP-2/365

High-Frequency Quant Trading Strategies: Modeling Market Microstructure and Momentum Ratios in Python

Use the button at the end of this article to download the source code!

Machine learning has revolutionized many industries, yet applying it to quant trading remains one of the most difficult tasks due to the low signal-to-noise ratio of financial time series. Success in this field requires more than just a powerful algorithm; it requires a deep understanding of how market orders interact to form prices. To build a winning quant trading model, one must master the art of data transformation — turning irregular exchange messages into a structured, feature-rich environment.

This guide provides a comprehensive walkthrough of a professional-grade quant trading workflow. We begin by implementing a custom order book reconstruction engine, followed by advanced feature engineering techniques like 10-minute rise ratios and forward-looking minima/maxima. Finally, we implement a rolling-window model selection pipeline that dynamically chooses the best-performing classifier for each market regime, ensuring our backtest results are as close to reality as possible.

Download the source code from here:

Let’s start coding now

%pylab inline

import numpy as np

import pandas as pd

import matplotlib.pyplot as pltThis small block is about preparing an interactive analysis environment for quantitative trading work rather than implementing any trading logic itself. The notebook magic at the top configures plotting to render inline in the notebook so charts show up next to the code and results; this is a convenience for exploratory analysis and rapid iteration when you are visually inspecting time series, performance charts, or diagnostics. Historically %pylab inline also injects a lot of names into the global namespace for convenience, but that behavior can cause subtle name collisions and make scripts less explicit — so in reusable code or shared projects prefer %matplotlib inline and explicit imports instead.

Numpy is the numerical foundation: it provides compact, contiguous multi-dimensional arrays and fast, vectorized arithmetic that you rely on for bulk operations (returns, normalizations, matrix algebra, covariance and correlation computations, linear algebra used in factor models, PCA, and optimization inner loops). Using numpy arrays and broadcasting is a performance imperative in quant work because it avoids Python-level loops and enables BLAS/LAPACK-backed operations that are orders of magnitude faster for large datasets. Be mindful of dtype (float64 is the default and usually appropriate for market-level analytics) and of NaN handling — many numpy routines will propagate NaNs, so cleaning or masking missing data is often required before aggregations.

Pandas sits on top of numpy and provides the time-series semantics and metadata that make market data manageable. DataFrame and Series give indexed access (DateTimeIndex, business-day calendars), automatic alignment on joins, groupby/resample/rolling window primitives, and convenient I/O for common market data formats. In practice you’ll use pandas to align multiple instruments across different timestamps, forward-fill or backfill intraday/overnight gaps as your strategy requires, compute rolling statistics (volatility, moving averages), and to preserve timestamps when plotting or aggregating — these behaviors are why pandas is central to feature engineering and backtest input pipelines.

Matplotlib.pyplot is the low-level plotting API used to render price paths, cumulative returns, drawdown curves, scatter plots of factor exposures, and heatmaps of correlation matrices. In a typical workflow you’ll query and process data in pandas/numpy, then call plt to produce charts that help diagnose model behavior (e.g., look for regime shifts, nonstationarity, outliers, or data leaks). For more polished visuals consider layering seaborn or plotly on matplotlib, but matplotlib gives you full programmatic control which is useful for reproducible reporting.

Operationally, the interplay matters: prefer to do as much as possible with pandas methods so you retain timestamp alignment and metadata; when a hot inner loop needs speed, extract numpy arrays (.values or .to_numpy()) and operate on them, then rewrap results into a Series/DataFrame to keep index alignment. Also adopt best practices: avoid %pylab in production code, set a plotting style for consistency, manage random seeds for reproducibility of stochastic simulations, and be careful with very large datasets (downsample for visualization or use tools like datashader).

All of these pieces are the standard toolkit you’ll use repeatedly in quant trading for data ingestion, feature engineering, backtest construction, statistical analysis, and visualization. The imports in this snippet simply prepare that toolkit so you can move quickly from raw time-series ticks and bars to the numerical transforms and charts needed to develop and validate strategies.

def order_book(month,day):

data1 = []

datapath = ‘stat_order_book/CNF14_0’+str(month)+’_’+str(day)+’_order_book_final.csv’

data1 = pd.read_csv(datapath,sep=’\t’,encoding = ‘utf-8’)

data_book = data1[[’0’,’1’,’2’,’3’]]

return data_bookThis small function is responsible for locating and loading a specific day’s order-book snapshot file and returning the four columns the downstream trading logic needs. The data flow is straightforward: given month and day inputs, the code concatenates them into a hard-coded file path that follows the naming pattern “CNF14_0{month}_{day}_order_book_final.csv”, opens that file as a tab-separated CSV (explicitly using UTF-8), and then slices out the columns labeled ‘0’, ‘1’, ‘2’, and ‘3’ before returning that subset as a DataFrame. In a quant-trading context this is typically done because the model or signal generator only needs the top N order-book fields (e.g., top 4 price/size levels or four precomputed features) rather than the entire file; selecting them immediately reduces downstream noise and (to some extent) memory pressure and clarifies what features are fed into the strategy.

There are several implicit design choices and failure modes to be aware of. First, the filename construction is brittle: it always prepends a literal ‘0’ before the month string and leaves the day untouched, so single- versus double-digit months/days will produce inconsistent filenames unless the stored files follow that exact convention; using zero-padding (e.g., str(month).zfill(2)) or a formatted string would make intent explicit and robust. Second, the path is hard-coded and absolute, which makes the function less portable and harder to test; switching to a configurable base path or using pathlib/os.path.join is advisable. Third, reading the entire CSV into memory each call can become expensive for tick-level order-book data; if files are large, usecols in pd.read_csv, chunked reads, or caching recently used DataFrames will reduce I/O and memory churn. Fourth, selecting columns by string labels ‘0’..’3’ assumes those exact column names exist and represent the features you expect; it’s safer for maintainability to either rename those columns to descriptive names (e.g., top_bid_price, top_ask_price, bid_size_0, ask_size_0) or to validate the columns and types before returning the DataFrame.

Operationally, also consider adding explicit error handling and logging around the file read so missing or corrupted files are handled gracefully (and to surface which date failed during backtests or live runs). Finally, confirm that the separator and encoding match the actual files; mismatches here can silently corrupt data parsing and produce subtle signal errors. Taken together, the current function does the core job — build path, read tab-separated file, return a 4-column slice — but hard-coded path/formatting, lack of validation, and no performance guards are the main things to address to make this robust for production quant workflows.

def day_time(month,day):

data = []

datapath = ‘CN_Futures_2014.0’+str(month)+’.’+str(day)+’.csv’

data = pd.read_csv(datapath)

data_CNF14 = data[data.Series == ‘CNF14’]

data = data_CNF14

market_open_time = data[data[’TimeStamp’].str.contains(’2014-0’+str(month)+’-’+str(day)+’D09:00’)].index.tolist()[0]

market_close_time = data[data[’TimeStamp’].str.contains(’2014-0’+str(month)+’-’+str(day)+’D16:00’)].index.tolist()[0]

data_open = data[market_open_time:market_close_time + 1]

timestamp_ = data_open.TimeStamp.unique()

return timestamp_This function extracts the unique timestamps for a single trading session (market open through market close) for one futures contract from a daily CSV. It builds a file path for the requested month and day, reads the CSV into a DataFrame, then immediately filters to the instrument of interest (Series == ‘CNF14’) so that further indexing operates only on that contract’s tick stream rather than interleaved instruments. Constraining to a single contract is important in quant trading because every downstream calculation (VWAP, orderbook reconstruction, feature windows) must operate on a consistent symbol; mixing symbols would corrupt time-series alignment and signals.

To define the session boundaries the code searches the TimeStamp string column for the first occurrence of the date/time at 09:00 and the first occurrence at 16:00 and takes those rows’ integer positions as market_open_time and market_close_time. Using index positions it then slices the contract DataFrame from the open row through the close row (notice the inclusive +1 on the close index) so the slice contains every tick between the two boundary rows in original row order. Finally it extracts the unique TimeStamp values from that sliced view and returns them; downstream consumers will typically need those distinct timestamps to align bars, compute per-tick features, or drive event-based strategies across the session.

A few important behavioral assumptions and reasons behind the implementation choices: the code relies on string matching because the raw TimeStamp appears stored in a single string field that encodes date and time with a specific format, and finding the first exact 09:00/16:00 occurrence is a cheap way to identify session boundaries without parsing every value to datetime. Slicing by integer index preserves the original tick order and implicitly handles possible repeated timestamps (you remove duplicates only at the end with .unique(), which is often what you want when building per-timepoint aggregates). Returning TimeStamp.unique() provides a compact list of time points instead of the full tick rows, which is useful when you only need the timeline for resampling or synchronizing other data feeds.

However, the implementation is brittle in several ways worth noting for robustness: the filename and TimeStamp patterns are built with hardcoded string prefixes like ‘2014–0’+str(month) which assume single-digit month/day formatting and the exact same delimiter conventions; the .str.contains(…).index.tolist()[0] calls will raise an IndexError if the match does not exist (e.g., holiday, early close, file naming differences), and string-matching ignores timezones and any minor timestamp format drift. For production quant workflows it’s typically safer to parse TimeStamp into pandas datetime once, then use boolean masks or between(start, end) with .first_valid_index() to locate boundaries, and to construct file paths and date strings with zero-padding (or better, use datetime.strftime) and error handling so the routine is stable across multi-digit months/days and unexpected data issues. These changes reduce the risk of silent failures and make the session extraction reliable for downstream feature engineering and backtesting.

def time_transform(timestamp_time):

time_second = []

for i in range(0,len(timestamp_time),1):

second = float(timestamp_time[i][11])*36000 + float(timestamp_time[i][12])*3600 \

+float(timestamp_time[i][14])*600 + float(timestamp_time[i][15])*60\

+float(timestamp_time[i][17])*10 + float(timestamp_time[i][18])

time_second.append(second - 32400.0)

return time_secondThis small function’s goal is to convert a list of timestamp strings into a numeric time axis expressed in seconds relative to a fixed baseline. Conceptually it walks each timestamp, computes the number of seconds that have elapsed since midnight (by reconstructing hours, minutes and seconds from the character digits), then subtracts a constant offset (32400 seconds, i.e. 9 hours) so that the returned values are anchored to a reference time (for example, a market session start or a timezone offset).

Concretely, for each timestamp string the code takes the characters at positions 11–12, 14–15 and 17–18 (which correspond to the hour, minute and second digits in a timestamp formatted like “YYYY-MM-DD HH:MM:SS”), treats each character as a digit and multiplies them by the appropriate place values to rebuild seconds since midnight: hour tens * 36000 + hour units * 3600 + minute tens * 600 + minute units * 60 + second tens * 10 + second units * 1. That gives the total seconds past midnight for that timestamp; the function then subtracts 32400.0 (9 hours) and appends the result to the output list. The net effect is a list of floats representing time in seconds relative to 09:00:00 (or equivalently shifted by a 9‑hour timezone offset).

Why this is done in quant trading: models and aggregation logic are much easier to implement when time is numeric and anchored to a consistent reference — you can compute time deltas, bin ticks into equal-length intervals, align trades to market open, or compute time‑based features (time-to-close, elapsed session seconds) directly. Subtracting the 9‑hour constant is an explicit normalization step so all timestamps share the same baseline used elsewhere in the pipeline (e.g., features that assume zero at session start or a particular timezone).

A few important caveats and suggestions: the implementation relies on a strict fixed-format timestamp string and extracts individual digit characters, which is brittle (it breaks if timestamps are ISO with ‘T’, include milliseconds, or have different spacing). Converting each digit to float and manually multiplying is unnecessarily error-prone and inefficient — parsing the hour/minute/second substrings with int() or using datetime.strptime (with explicit timezone handling) is clearer and safer. The hard-coded 32400 is a magic number; make its intent explicit with a named constant (e.g., MARKET_OPEN_OFFSET = 9 * 3600) or derive it from a timezone or exchange calendar so the code is self-documenting and robust to different markets. Finally, the function returns negative values for timestamps earlier than 09:00:00 (which may be intended for pre-market data but should be handled explicitly), and it’s not vectorized — for large tick datasets prefer numpy/pandas datetime facilities to get both performance and correctness.

def bid123_ask123_Q(data_book_28_open):

Bid1 = []

Bid2 = []

Bid3 = []

Bid1_Quantity = []

Bid2_Quantity = []

Bid3_Quantity = []

Ask1 = []

Ask2 = []

Ask3 = []

Ask1_Quantity = []

Ask2_Quantity = []

Ask3_Quantity = []

TimeStamp = []

for i in range(1,len(data_book_28_open),4):

#print data_book_28_open.iloc[i][’0’]

#print data_book_28_open.iloc[i][’2’]

Bid1.append(float(data_book_28_open.iloc[i][’0’])/100.0)

Bid1_Quantity.append(float(data_book_28_open.iloc[i][’1’]))

Bid2.append(float(data_book_28_open.iloc[i + 1][’0’])/100.0)

Bid2_Quantity.append(float(data_book_28_open.iloc[i + 1][’1’]))

Bid3.append(float(data_book_28_open.iloc[i + 2][’0’])/100.0)

Bid3_Quantity.append(float(data_book_28_open.iloc[i + 2][’1’]))

Ask1.append(float(data_book_28_open.iloc[i][’2’])/100.0)

Ask1_Quantity.append(float(data_book_28_open.iloc[i][’3’]))

Ask2.append(float(data_book_28_open.iloc[i + 1][’2’])/100.0)

Ask2_Quantity.append(float(data_book_28_open.iloc[i + 1][’3’]))

Ask3.append(float(data_book_28_open.iloc[i + 2][’2’])/100.0)

Ask3_Quantity.append(float(data_book_28_open.iloc[i + 2][’3’]))

TimeStamp.append(data_book_28_open.iloc[i-1][1])

return Bid1,Bid1_Quantity,Bid2,Bid2_Quantity,Bid3,Bid3_Quantity,Ask1,Ask1_Quantity,Ask2,Ask2_Quantity,Ask3,Ask3_Quantity This function’s goal is to walk a raw order-book DataFrame arranged in 4-row blocks and produce the top three bid and ask price levels and their quantities for each snapshot — data commonly used to compute liquidity, imbalance and microstructure features in quant trading. The code assumes each snapshot occupies four consecutive rows: the first row of the block (i-1 in the loop) holds the timestamp, and the next three rows (i, i+1, i+2) hold level-1, level-2 and level-3 entries. The loop starts at 1 and advances by 4 so each iteration processes one snapshot (rows i-1 through i+2). Within each snapshot the function reads four columns labeled ‘0’..’3’ where ‘0’ is the bid price, ‘1’ the bid quantity, ‘2’ the ask price and ‘3’ the ask quantity. Prices are cast to float and divided by 100.0 — a normalization step that converts stored integer price ticks (e.g., cents or tick units) into a more natural price scale and keeps magnitude consistent for downstream models or signals. Quantities are converted to floats but not scaled, because volume is meaningful in its native units for liquidity calculations.

As data flows: for each snapshot the code appends Bid1/Bid2/Bid3 and corresponding Bid*_Quantity from rows i, i+1, i+2 respectively; similarly it appends Ask1..Ask3 and Ask*_Quantity from the same rows. The timestamp for that snapshot is taken from the preceding row (i-1) column index 1 and appended to a TimeStamp list. Finally the function returns the twelve lists for bid/ask prices and quantities.

def rise_ask(Ask1,timestamp_time_second):

rise_ratio = []

index = np.where(np.array(timestamp_time_second) >= 600)[0][0]

for i in range(0,index):

rise_ratio_ = round((Ask1[i] - Ask1[0])*(1.0)/Ask1[0]*100,5)

rise_ratio.append(rise_ratio_)

for i in range(index,len(Ask1),1):

#print timestamp_time_second[:i]

#print timestamp_time_second[i] - 600

#print np.where(np.array(timestamp_time_second[:i]) >= timestamp_time_second[i] - 600)[0][0]

index_start = np.where(np.array(timestamp_time_second[:i]) >= timestamp_time_second[i] - 600)[0][0]

rise_ratio_ = round((Ask1[i] - Ask1[index_start])*(1.0)/Ask1[index_start]*100,5)

rise_ratio.append(rise_ratio_)

return rise_ratioThis small function computes a short-term percentage “rise” feature for the best ask price (Ask1) using a 10-minute lookback window (600 seconds). The overall goal in a quant trading context is to produce, for each tick, a measure of how much the current ask has moved relative to a recent baseline price so that downstream models or signals can detect momentum, abrupt moves, or regime changes.

Execution flow and decisions: first the code finds the earliest sample whose timestamp is at least 600 seconds from the start (index). For every tick before that index (i.e., the initial period where fewer than 10 minutes of history exist) the function uses the very first observed ask price as the baseline and computes the percent change from that first price to each early ask. This is an explicit design choice to avoid undefined lookbacks when you don’t yet have a full 10-minute history — it gives a consistent baseline for the “warm-up” period. Once the data reaches and surpasses 600 seconds, the function switches to a sliding 10-minute window: for each tick i it searches backwards among the earlier timestamps to find the first timestamp that is >= (current_timestamp − 600). That index_start points to the earliest tick that falls inside the 10-minute window, and the code computes percent change from Ask1[index_start] to Ask1[i], rounds it to five decimal places, and appends it to the output list. In other words, for each tick the feature is the percentage change from the earliest available price inside the last 10 minutes (not from the last price before the window), which is a deliberate choice to anchor the change to the start of the windowed interval.

Why this matters: using a fixed-duration lookback (10 minutes here) standardizes the temporal context of the feature so signals across time are comparable and not biased by variable sampling density. Choosing the earliest sample inside the window (instead of the most recent sample prior to the window, or an interpolated price) ensures the baseline is an actual observed price and provides a consistent directionality for trend detection — it measures growth from the window’s beginning to now. Rounding to five decimal places is likely to keep numeric stability and reduce noise in downstream storage or models, though it also slightly coarsens the signal.

Important assumptions and edge cases you should be aware of: timestamps must be monotonically increasing and represented in seconds; otherwise the window selection logic is incorrect. The code assumes there is at least one timestamp >= 600 so that the initial np.where(…) call yields a valid index; if that’s not true it will raise an exception. There is also a subtle bug if the very first timestamp is already >= 600: index becomes 0, the initial loop is skipped and the subsequent window computation will attempt to look inside timestamp_time_second[:0] (an empty slice) and fail. The implementation also repeatedly converts lists to numpy arrays and performs linear searches inside the loop, so performance is O(n²) in the worst case for long tick series.

def rise_bid(Bid1,timestamp_time_second):

rise_ratio = []

index = np.where(np.array(timestamp_time_second) >= 600)[0][0]

for i in range(0,index):

rise_ratio_ = round((Bid1[i] - Bid1[0])*(1.0)/Bid1[0]*100,5)

rise_ratio.append(rise_ratio_)

for i in range(index,len(Bid1),1):

index_start = np.where(np.array(timestamp_time_second[:i]) >= timestamp_time_second[i] - 600)[0][0]

rise_ratio_ = round((Bid1[i] - Bid1[index_start])*(1.0)/Bid1[index_start]*100,5)

rise_ratio.append(rise_ratio_)

return rise_ratioThis function constructs a time-series of percentage “rise” values for each bid quote by comparing the current bid to a baseline bid from up to 600 seconds earlier, producing a feature you can use for momentum or mean-reversion signals in a quant trading pipeline.

First, the function assumes timestamp_time_second is a monotonically increasing sequence of timestamps (seconds) aligned one-to-one with Bid1. It finds the first index whose timestamp is at least 600 seconds (index = first timestamp >= 600). That split determines two regimes: early data points that do not yet have a full 600-second history, and later points that do.

For early observations (i from 0 to index-1), it uses the very first bid (Bid1[0]) as the baseline because there is no 600-second lookback window available; the rise is computed as (current_bid — initial_bid) / initial_bid * 100 and rounded to five decimal places. For later observations (i >= index), it finds the earliest timestamp within the trailing 600-second window — specifically, the first position j in the history before i such that timestamp[j] >= timestamp[i] — 600 — and uses Bid1[j] as the baseline. The function then computes the percent change from that baseline to the current bid, again multiplied by 100 and rounded to five decimals. The results are appended sequentially, so the output rise_ratio is aligned with the input Bid1 and has the same length.

Why this approach: using the earliest timestamp within the last 600 seconds gives a consistent definition of a “10-minute rise” anchored to the start of the 10-minute interval. For early timestamps without a full 10-minute lookback, using the first observation prevents dropping those rows and still gives a monotonic bootstrap of the feature. The rounding to five decimals is a cosmetic/precision choice likely intended to keep numeric stability and reduce downstream storage/variance in features.

Important assumptions and limitations to be aware of: if no timestamp >= 600 exists the code will throw an exception when computing index; similarly, the inner np.where(…) call will error if no timestamp in the prefix meets the condition, so the function assumes dense, monotonic timestamps that cover at least a 10-minute range. The implementation also repeatedly constructs arrays and performs a search inside the main loop, giving a worst-case O(n²) behavior; for high-frequency data this can be a performance bottleneck. More robust and efficient implementations would use searchsorted (taking advantage of monotonic timestamps), a two-pointer sliding window, or vectorized/pandas rolling techniques to achieve O(n) or faster performance and to handle missing or irregular timestamps explicitly. Finally, be sure the bid array and timestamps are aligned and that you’ve considered how to treat equal timestamps, microstructure noise, and whether you want the baseline to be the earliest point within the window (as done here) versus, say, the most recent point exactly 600 seconds earlier or an interpolated value — each choice has different implications for signal behavior in a trading model.

data_book = order_book(1,16)

data_book_open = data_book[1380:285495+1] # 9:00 ~ 16:00

data_book_open = data_book_open.reset_index(drop = True)

timestamp_time = day_time(1,16)

timestamp_time_second = time_transform(timestamp_time)This block is preparing a contiguous, time-aligned slice of the order-book data that corresponds to regular trading hours, and then creating a numeric time axis that lines up with those rows so downstream models and features can be built without accidental misalignment.

First, order_book(1,16) returns the full raw order-book table for the scope you asked for (the exact semantics of the arguments are defined elsewhere in the codebase — e.g., day or instrument range). The next line takes a contiguous chunk of that table using hard-coded row boundaries (1380 through 285495 inclusive) because those row indices represent the market’s regular session (9:00–16:00) in this dataset. The +1 ensures the final index is included, so the slice covers the complete open-to-close interval. We then call reset_index(drop=True) so the resulting DataFrame has a clean, zero-based integer index; this is important because later operations will treat row position as a time-sync key and you don’t want the original (noncontiguous or multi-index) index to cause misalignment when joining or iterating.

Parallel to extracting the book, day_time(1,16) generates the timestamp sequence for the same overall scope. time_transform(timestamp_time) converts those timestamps into a numeric, second-level representation (for example seconds since midnight or seconds since market open), which is far easier and safer to use inside quantitative pipelines: numeric seconds are efficient for interpolation, window calculations, feature binning, and supervised label alignment, and they avoid timezone or string-parsing pitfalls during vectorized operations.

The why behind these steps is alignment and determinism: by slicing the book to regular trading hours and producing a matching numeric time axis you guarantee that each row in data_book_open corresponds to exactly one time value in timestamp_time_second. That prevents label leakage or off-by-one errors in model training and simplifies time-based feature engineering (e.g., elapsed time since event, time-to-close, or fixed-interval aggregation).

import time

start = time.time()

Bid1_16,Bid1_Quantity_16,Bid2_16,Bid2_Quantity_16,Bid3_16,Bid3_Quantity_16,Ask1_16,Ask1_Quantity_16,Ask2_16,Ask2_Quantity_16,Ask3_16,Ask3_Quantity_16 = bid123_ask123_Q(data_book_open)

end = time.time()

print “Total time = %f”%(end - start)This block is doing two things in sequence: it snapshots a market-data-derived order-book extraction and measures how long that extraction took. The code records the current time, calls a helper function named bid123_ask123_Q with the current book snapshot (data_book_open), unpacks the function’s twelve outputs into variables that represent the top three bid prices and quantities and the top three ask prices and quantities, then captures the time again and prints the elapsed interval. Conceptually, the function is performing the domain work — pulling the best three price levels and their available sizes from whatever internal representation of the order book you maintain — and the surrounding timing code is there to quantify how long that work takes.

Why we do this matters for trading: the top-of-book and near-book levels (Bid1/Bid2/Bid3 and Ask1/Ask2/Ask3 plus their quantities) are the immediate inputs for most short-horizon quant strategies — spread checks, mid-price calculation, crossing-detection, liquidity checks, order sizing and smart-order-routing decisions — so extracting these values correctly and quickly is central to decision correctness and execution latency. The unpacking into separate, descriptive variables makes subsequent logic clearer (you can compute spread = Ask1_16 — Bid1_16, assess available liquidity at Bid2/Ask2, etc.) and avoids repeatedly indexing into a more complex structure during hot-path logic.

Why measure the elapsed time here: in a latency-sensitive system every millisecond (or microsecond) can change P&L, so profiling the cost of converting raw book data into easily consumable primitives is essential. The timing call gives a quick single-run measurement so you can identify obvious bottlenecks in the extraction function (parsing, validation, conversions, memory allocation). However, a single wall-clock measurement is noisy and subject to system scheduling, cold caches, and I/O/printing overhead. For reliable profiling you should run many iterations, discard warm-up runs, collect distributions (mean, p50, p95), and use higher-resolution monotonic timers available in newer Python versions (e.g., perf_counter or monotonic_ns) to avoid clock adjustments and improve granularity. Also be aware that printing to stdout is itself a blocking operation that can perturb timing; use non-blocking logging or aggregate measurements off the latency-critical path if you need accurate numbers in production.

import time

start = time.time()

rise_ratio_ask_16 = rise_ask(Ask1_16,timestamp_time_second)

end = time.time()

print “Total time = %f”%(end - start) This block is a very small benchmarking wrapper around a single computation — it records the wall-clock time immediately before calling rise_ask(…), invokes that function with the ask-price series and a timestamp array, then records the wall-clock time immediately after and prints the elapsed seconds. Conceptually, data flows into rise_ask via Ask1_16 (presumably the top-of-book ask series for the 16th instrument, a 16-sample window, or a similarly named feed) and timestamp_time_second (the corresponding second-resolution timestamps). rise_ask consumes those inputs, performs whatever domain logic it implements (most likely computing a rise ratio, slope, or percent change of the ask price over the given timestamps), and returns the result into rise_ratio_ask_16 for downstream use in signal generation or further analytics.

We capture start and end timestamps to measure latency because in quantitative trading the timeliness of a signal matters: if a preprocessing or feature computation takes too long, the derived signal can miss execution opportunities or violate latency SLAs. Measuring the elapsed wall time here gives an operational sense of how long this one call costs in the live pipeline. The printed value uses a %f format, so you get the elapsed seconds as a floating-point value (default six decimal places) which is easy to read when profiling single calls. If you need a more robust measurement for optimization work, don’t rely on a single call. Warm up any caches, run multiple iterations and compute mean/percentiles, and use profilers (cProfile, pyinstrument) to see where time is actually spent inside rise_ask. Finally, handle errors: if rise_ask raises an exception you’ll never capture the end time or a metric for that attempt — wrap the call in try/finally or instrument exception paths so you can track failed latencies. Also keep in mind the code shown uses the Python 2 print statement; for modern codebases migrate to print() or, better, structured logging so timing data integrates with your observability stack.

import time

start = time.time()

rise_ratio_bid_16 = rise_bid(Bid1_16,timestamp_time_second)

end = time.time()

print “Total time = %f”%(end - start) This snippet measures how long a single call to rise_bid takes and preserves the function’s output for downstream trading logic. Execution begins by capturing a wall-clock timestamp immediately before invoking rise_bid with two inputs (Bid1_16 and timestamp_time_second) and captures another timestamp right after the call completes; the difference printed as “Total time” represents the elapsed wall-clock time spent inside rise_bid. The result of the computation, assigned to rise_ratio_bid_16, is presumably a numeric metric (for example, a rise ratio or momentum indicator derived from the top-of-book bids or a 16-element bid series) that will feed subsequent signal generation, risk checks, or order-sizing decisions in the quant strategy.

We measure elapsed time because latency and determinism matter in quant trading: slow or variable execution in a calculation path that contributes to signal generation or order submission can degrade P&L or create missed opportunities. By timing only the call (start immediately before and end immediately after), the snippet isolates rise_bid’s runtime from other code, allowing you to detect regressions, spot occasional stalls, or determine whether the function is fast enough to run at the desired cadence (tick-by-tick, per second, etc.). Storing the function’s return separately also keeps the measurement side-effect-free with respect to later logic that consumes rise_ratio_bid_16.

Finally, remember that a single elapsed-time print is useful for ad-hoc profiling, but consistent performance validation benefits from repeated, controlled measurements (timeit-style runs, disabling GC during microbenchmarks, and running under production-like loads). If rise_bid touches I/O, network, or shared resources (order book updates, database reads), you’ll want to separate deterministic computational cost from external latency and ensure thread-safety and immutability of inputs like Bid1_16 and timestamp_time_second when this code runs in a multi-threaded or low-latency execution path.

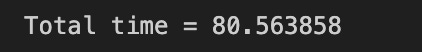

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Bid1_Quantity_16_,label = ‘Bid1 Quantity’,color = ‘r’)

plot(Bid2_Quantity_16_,label = ‘Bid2 Quantity’,color = ‘g’)

plot(Bid3_Quantity_16_,label = ‘Bid3 Quantity’,color = ‘b’)

plt.xlim(0.0,25200)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

plt.legend(loc = 1)This block builds a single, annotated time-series plot of the top three bid‑side quantities so you can visually inspect intraday liquidity dynamics and identify regime changes relevant to execution and microstructure analysis. It starts by allocating a wide plotting canvas (16x7) and turning on a grid to make small fluctuations and relative levels easier to read; the three series — Bid1_Quantity_16_, Bid2_Quantity_16_, Bid3_Quantity_16_ — are then drawn on the same axes with distinct colors and labels so you can directly compare the immediate bid size at level 1, 2 and 3 over the session. Plot order matters for visibility when lines overlap, and labeling each series allows the legend to map colors back to queue depth.

The x-axis is explicitly constrained from 0 to 25,200, which in this context is being used as the trading session window (25,200 seconds = 7 hours). The hard limits focus the view on a single trading day and avoid distracting pre/post‑session noise. Three vertical lines (at x=900, 9000, 14,400) are drawn and annotated as 09:15, 11:30 and 13:00; these are anchors that segment the session into meaningful intraday periods (first 15 minutes, mid‑morning to lunch boundaries, and post‑lunch), which are commonly relevant for feature engineering, regime detection, or gating execution strategies because order flow and volatility often change at those times.

Why this matters for quant trading: visualizing the three top bid quantities together helps you spot liquidity depletion, sudden replenishment events, and persistent imbalances that would affect fill probability, market impact estimates, or smart‑order‑routing decisions. The vertical markers help correlate those microstructure events with canonical intraday phases that you may use as cut points for model training, evaluation windows, or different execution parameter sets.

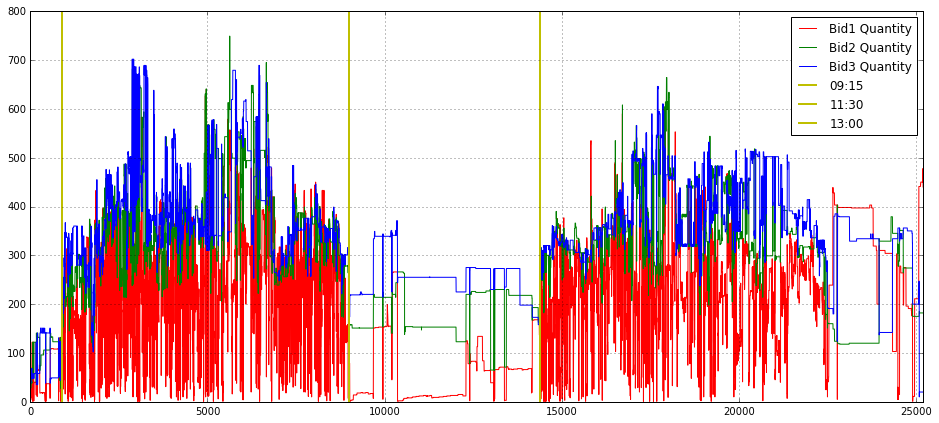

import matplotlib.pyplot as plt

plt.figure(figsize = (16,9))

plt.subplot(221)

plt.grid()

plot(Bid1_Quantity_16_,label = ‘Bid1 Quantity’,color = ‘r’)

plot(Bid2_Quantity_16_,label = ‘Bid2 Quantity’,color = ‘g’)

plot(Bid3_Quantity_16_,label = ‘Bid3 Quantity’,color = ‘b’)

plt.xlim(0.0,25200)

plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

#plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

#plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

#plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)

plt.subplot(222)

plt.grid()

plot(Ask1_Quantity_16_,label = ‘Ask1 Quantity’,color = ‘r’)

plot(Ask2_Quantity_16_,label = ‘Ask2 Quantity’,color = ‘g’)

plot(Ask3_Quantity_16_,label = ‘Ask3 Quantity’,color = ‘b’)

plt.xlabel(”Second”)

#plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

#plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

#plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

plt.xlim(0.0,25200)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)This block is constructing a two-panel visualization of order-book quantities over a trading day so you can inspect intra-day liquidity dynamics and spot imbalances or structural shifts. It opens a wide 16:9 canvas and allocates the top-left and top-right slots of a 2×2 grid for the bid and ask quantity series respectively; leaving the bottom row free implies the author intended to add more diagnostics (e.g., price, spread, or imbalance) below. The overall intent is exploratory — to make temporal patterns and sudden volume events in the top-of-book visible at second resolution.

In the first (top-left) panel the code plots the first three bid-level quantities (Bid1, Bid2, Bid3) as separate colored lines, turns on a grid for easier visual alignment, and labels axes with “Second” and “Quantity”. The x-axis is clipped to 0–25,200 seconds, which equals 7 hours: this ties the horizontal range to the full trading session so that each second index maps to a time-of-day. Plotting the top 3 bid levels lets you observe how available buying interest is stacked at the best prices and immediately behind them — useful for detecting liquidity suction or replenishment that matters for execution algorithms and short-term market-making strategies.

The second (top-right) panel mirrors the first but for the top three ask-level quantities (Ask1, Ask2, Ask3). Keeping bids and asks on separate subplots reduces visual clutter and makes asymmetries easier to read; if you want direct pointwise comparisons you could overlay them or compute a bid/ask imbalance series and plot that as a separate diagnostic. Both subplots use small legend padding and consistent coloring so you can quickly identify which depth-level is moving and whether changes are persistent or transient.

There are three commented vertical lines (axvline) at 900, 9,000 and 14,400 seconds that are left disabled — these are clearly intended to mark schedule landmarks like the opening micro-period and mid-session break points (for example, if your session starts at 09:00 then 900 s = 09:15, 9000 s ≈ 11:30, 14,400 s = 13:00). Turning those on helps correlate liquidity shifts with known market structure events (open/close auctions, lunch breaks, or known news windows). Finally, note that the plots do not fix y-limits or apply smoothing/normalization: that preserves raw quantity magnitudes which is good for execution sizing, but for comparative analyses you might want consistent y-scaling across panels, log-scaling to handle spikes, or a rolling-average overlay to reduce tick noise depending on the hypothesis you’re testing in your quant strategy.

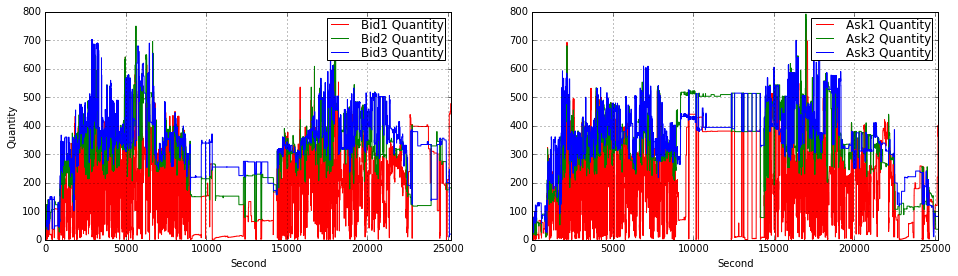

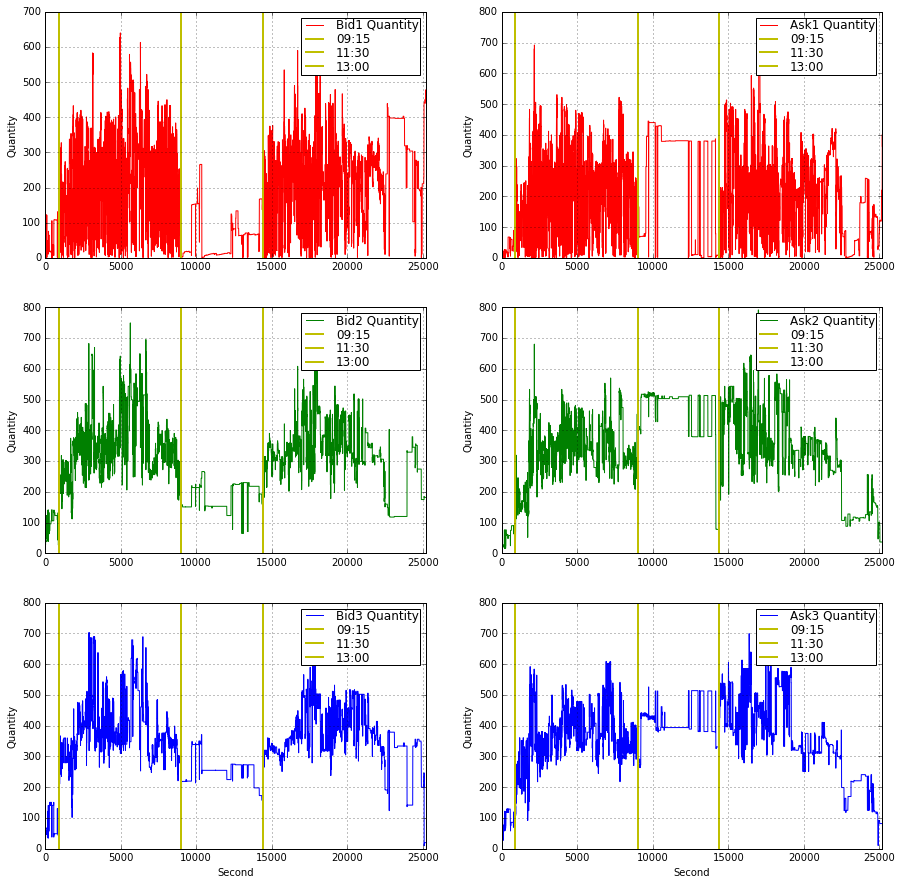

plt.figure(figsize = (15,15))

plt.subplot(321)

plt.grid()

plot(Bid1_Quantity_16_,label = ‘Bid1 Quantity’,color = ‘r’)

plt.xlim(0.0,25200)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

#plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)

plt.subplot(323)

plt.grid()

plot(Bid2_Quantity_16_,label = ‘Bid2 Quantity’,color = ‘g’)

plt.xlim(0.0,25200)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

#plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)

plt.subplot(325)

plt.grid()

plot(Bid3_Quantity_16_,label = ‘Bid3 Quantity’,color = ‘b’)

plt.xlim(0.0,25200)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)

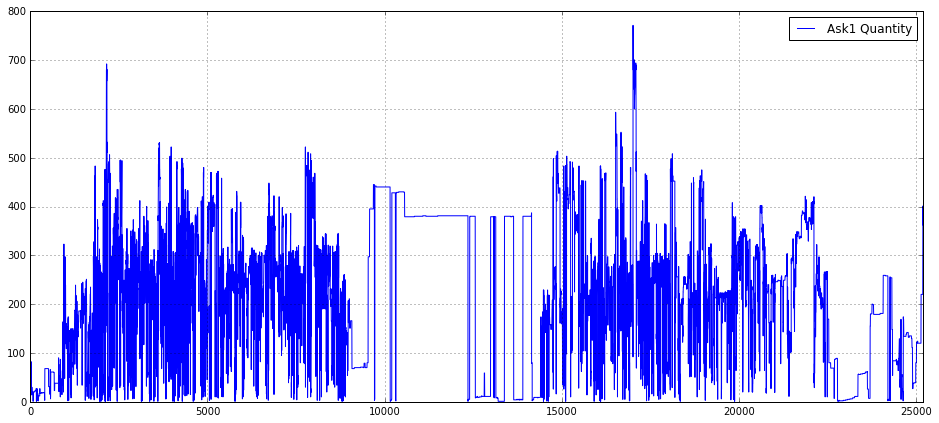

plt.subplot(322)

plt.grid()

plot(Ask1_Quantity_16_,label = ‘Ask1 Quantity’,color = ‘r’)

plt.xlim(0.0,25200)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

#plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)

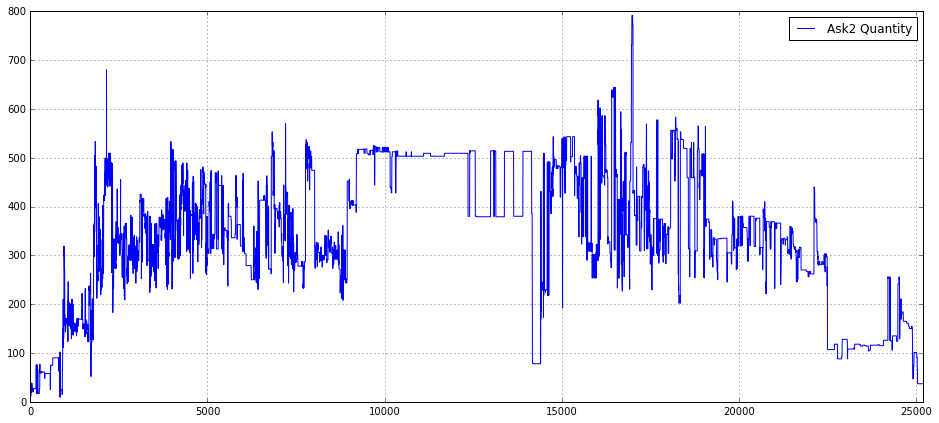

plt.subplot(324)

plt.grid()

plot(Ask2_Quantity_16_,label = ‘Ask2 Quantity’,color = ‘g’)

plt.xlim(0.0,25200)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

#plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)

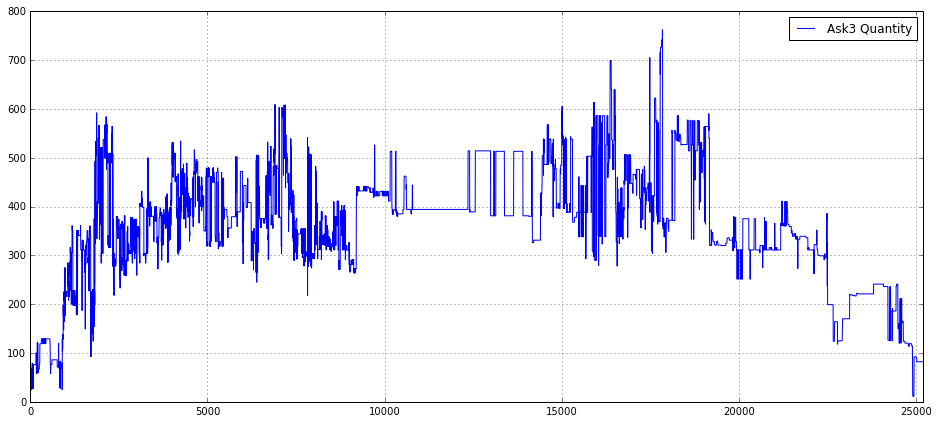

plt.subplot(326)

plt.grid()

plot(Ask3_Quantity_16_,label = ‘Ask3 Quantity’,color = ‘b’)

plt.xlim(0.0,25200)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

plt.legend(loc = 1,borderpad = 0.08,labelspacing = 0.08)This code builds a 3×2 visualization of order‑book quantities so you can inspect intraday depth dynamics at the first three bid and ask levels. It starts by creating a large square canvas (figsize = 15×15) to give each small subplot enough room; the layout uses explicit subplot indices so the left column (321, 323, 325) is reserved for Bid1–Bid3 and the right column (322, 324, 326) for Ask1–Ask3. That arrangement intentionally separates side (bid vs ask) and level (1–3), which reduces overplotting and makes it easy to compare how liquidity evolves across price levels and across sides.

Each subplot draws a single time series of quantities (e.g., Bid1_Quantity_16_) with a consistent color scheme (red/green/blue for levels 1/2/3) and turns on a grid to make short‑term structure easier to read. The x axis is clipped to 0…25200 seconds; this enforces a common time window for all panels (the full trading interval of interest) so events line up vertically between plots. The clipping also prevents extreme timestamps or gaps from stretching the axis and hiding intraday patterns.

Every panel adds three vertical reference lines via axvline at x = 900, 9000, and 14400 and labels them as ‘09:15’, ‘11:30’, and ‘13:00’. These are deliberate intraday anchors — likely mapping seconds since market open to meaningful clock times — and they make it straightforward to correlate liquidity changes (spikes, thinning, or regime shifts) with known session boundaries or scheduled events. Placing identical markers on every subplot ensures you can visually compare how each depth level responds at the same moments.

The code also pays attention to readability: only the bottom row includes an x‑axis label (“Second”) to avoid cluttering every panel, while every subplot uses a y label “Quantity” so vertical scale meaning is explicit. Legends are positioned consistently at loc=1 (upper right) with small paddings to keep them out of the main data area but still informative; consistent labeling across panels lets you quickly identify the series and its color without scanning for a caption.

From a quant‑trading perspective this visualization is serving two main goals: diagnosing microstructure behavior (e.g., where liquidity concentrates, when depth collapses, asymmetric reactions between bid and ask) and generating features or hypotheses for models (e.g., intraday seasonality, level‑specific volatility, or event‑driven liquidity shifts). Practically, because the code repeats the same annotation and styling for each subplot, it would be straightforward to refactor into a small loop or helper to reduce duplication and to generalize the time markers if you need different session breakpoints or a different sampling frequency.

Bid1_Quantity_16_ = []

for i in range(0,25200,1):

index = np.where(array(timestamp_time_second) <= i)[0][-1]

Bid1_Quantity_16_.append(Bid1_Quantity_16[index])At a high level this loop is taking an irregular, tick-level time series of the best bid quantity (Bid1_Quantity_16) and producing a fixed-length, per-second sequence for a 7‑hour window (25200 seconds). The outer loop walks second-by-second from 0 up to 25199; for each second i it looks up the most recent tick whose timestamp is at or before that second and copies that tick’s bid quantity into the output list. In other words, you are resampling the tick stream to a uniform one-second cadence using a “last known value” or forward‑fill rule so that the quoted bid quantity persists until the next tick updates it.

Mechanically, the code finds the index by selecting all indices where timestamp_time_second <= i and then taking the last of those indices ([0][-1]), and then it appends Bid1_Quantity_16 at that index into Bid1_Quantity_16_. The decision to use the last index <= i is deliberate: for market microstructure and backtesting we usually want the most recent known state at each evaluation timestamp (we do not extrapolate or interpolate between ticks because quotes are stepwise and you typically want right-continuous values). Using <= also means that if there is a tick exactly at second i, that tick is used; otherwise the previous tick is carried forward.

A few important caveats and reasons to revisit the implementation for production use in a quant setting. First, if there are seconds before the first tick (no timestamp <= i), the np.where (…) [0][-1] pattern will raise an IndexError — you need an explicit boundary check or an initial fill value to avoid crashes. Second, this loop repeatedly calls the search operation for every second which is O(T * N) in the worst case and will be slow for long windows or large tick arrays. If the timestamp array is sorted (which it should be), a much faster and clearer approach is to use np.searchsorted once (vectorized) or pandas’ reindex/resample with forward-fill; these give the same right-continuous semantics at far better performance. Finally, make sure timestamp_time_second is aligned to the same origin as i (e.g., seconds since session open), and that types and monotonicity are guaranteed; otherwise alignment errors will silently produce incorrect series.

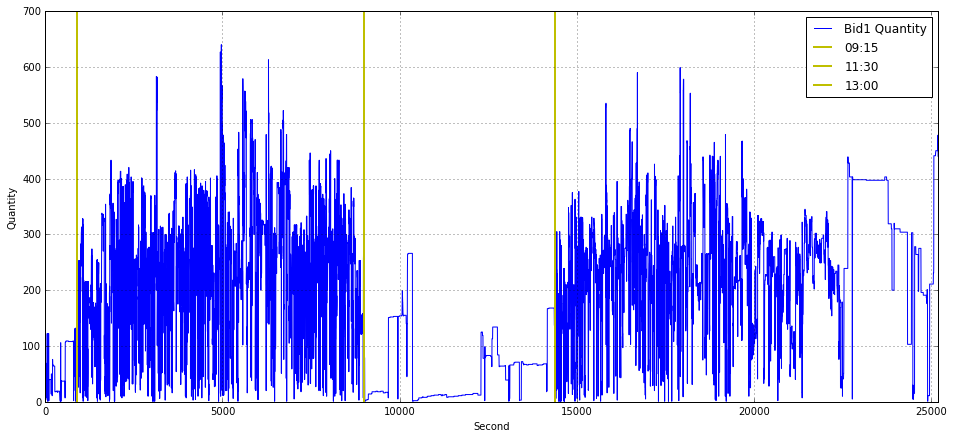

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Bid1_Quantity_16_,label = ‘Bid1 Quantity’)

plt.xlabel(”Second”)

plt.ylabel(”Quantity”)

plt.axvline(x = 900 ,color = ‘y’,linestyle = ‘-’,label = ‘09:15’, linewidth = 2)

plt.axvline(x = 9000 ,color = ‘y’,linestyle = ‘-’,label = ‘11:30’, linewidth = 2)

plt.axvline(x = 14400 ,color = ‘y’,linestyle = ‘-’,label = ‘13:00’, linewidth = 2)

plt.xlim(0.0,25200)

plt.legend(loc = 1)This block builds a simple time-series visualization of the best-bid quantity (Bid1_Quantity_16_) across a trading day so you can visually relate liquidity dynamics to fixed calendar times. It treats the series as an array indexed by elapsed seconds from a day-start (the x-axis label is “Second”), so the call to plot uses the series’ positional index as the time axis. The figure size and grid are set up first to make the chart legible and to help the eye follow level changes and short-lived spikes — useful when scanning for transient liquidity events or algorithmic activity.

Three vertical reference lines are drawn at x = 900, 9000 and 14400, labeled 09:15, 11:30 and 13:00 respectively. These numbers are in seconds: 900s = 15 minutes, 9000s = 2.5 hours, 14400s = 4 hours, so the code assumes the day starts at 09:00 (or otherwise that those second offsets correspond to the named clock times). Placing these markers directly on the chart lets you quickly correlate changes in Bid1 quantity with specific market schedule points (for example auction windows, known micro-structure shifts, or a lunch/auction break), which is crucial for understanding intraday liquidity patterns and for engineering time-dependent features in quant models.

The x-axis is clipped to the window 0.0–25200 seconds (7 hours), which enforces a consistent trading-hours view even if the underlying data contain extra timestamps or gaps; this keeps comparisons across days or instruments aligned. The legend and labels identify the series and the annotated times so the plot is immediately interpretable when reviewing many days or when presenting to stakeholders. In practice, this code implicitly assumes the data are sampled or reindexed to per-second resolution and aligned to the day-start — if that assumption doesn’t hold you’ll get misleading alignment between the plotted index and the labeled vertical lines. For robustness in a quant workflow you often want to explicitly convert timestamps to elapsed seconds, forward-fill or mark gaps, and consider smoothing or aggregating noisy per-second ticks before plotting so the visual highlights true liquidity structure rather than measurement noise.

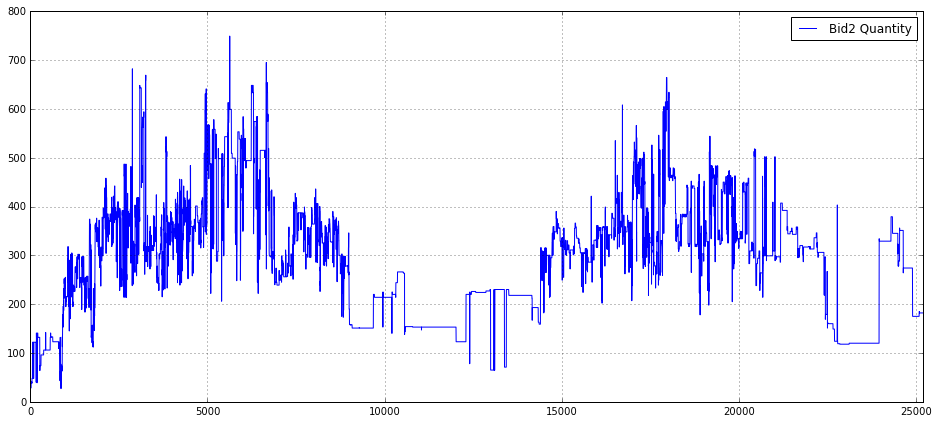

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Bid2_Quantity_16_,label = ‘Bid2 Quantity’)

plt.xlim(0.0,25200)

plt.legend(loc = 1)This snippet builds a focused time‑series visualization of a single order‑book quantity series so you can inspect liquidity at the second bid level over a trading session. First, it creates a wide plotting canvas (16×7 inches) so long horizontal time series are displayed with enough horizontal resolution to reveal intra‑session structure; the explicit figure size is chosen to make patterns and short‑lived events visible when you share or analyze a full‑day view. Enabling the grid provides regular visual reference lines, which helps when eyeballing level changes, jumps, or cyclic patterns in quantity.

The core drawing call plots the array/series Bid2_Quantity_16_ and attaches the label “Bid2 Quantity”. Depending on the object type, matplotlib will use either the Series’ datetime index (if it’s a pandas Series) or a numeric index (if it’s a numpy array); the plotted values represent the volume (or order count) at the second best bid level over time, which you inspect to detect liquidity shifts, microstructure events, or features useful for execution and alpha modeling. After plotting, the x‑axis is explicitly bounded to [0.0, 25200]; that constraint fixes the visible time window (likely representing a fixed session length or a fixed number of seconds/ticks), which prevents autoscaling to outliers and ensures consistency when comparing multiple plots or backtests. Using a hard limit like 25200 enforces a common frame of reference across figures, but it’s worth documenting or computing that limit from session metadata rather than leaving it as a “magic number.”

Finally, the legend is placed at location 1 (upper right) so the plotted series is identified on the figure; this is important whenever you overlay multiple series or want quick clarity on what each line means. In the context of quant trading, this plot is a quick diagnostic: it helps you validate feature behavior, spot anomalous order‑book activity, and decide whether further preprocessing (resampling, smoothing, outlier clipping, or alignment to timestamps) is needed before feeding the series into models or execution logic. Note that for production‑grade inspection you should add explicit axis labels and a title and prefer semantic legend/location strings (e.g., ‘upper right’) and timestamped x‑ticks if the index represents real times.

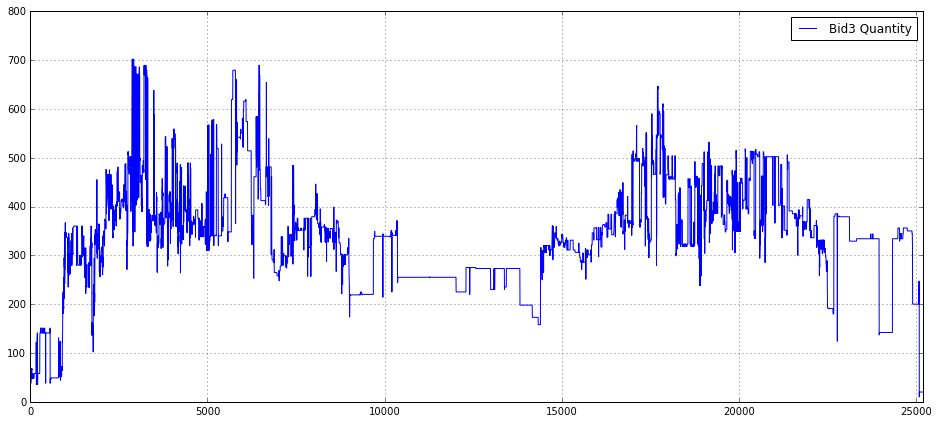

Bid3_Quantity_16_ = []

for i in range(0,25200,1):

index = np.where(array(timestamp_time_second) <= i)[0][-1]

Bid3_Quantity_16_.append(Bid3_Quantity_16[index])This snippet is taking an irregular, tick-level sequence of bid sizes (Bid3_Quantity_16) and producing a regular, one-second resolved series of the same length (25200 seconds, i.e., 7 hours). The outer loop iterates over each second i in the target sampling grid. For each second it finds the last index in timestamp_time_second whose timestamp is <= i (the np.where(…)[0][-1] expression) and appends the corresponding bid size to the output list. In short, for every integer second it carries forward the most recent observed bid quantity — a last-observation-carried-forward (LOCF) resampling that produces a stepwise, time-aligned series.

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Bid3_Quantity_16_,label = ‘Bid3 Quantity’)

plt.xlim(0.0,25200)

plt.legend(loc = 1)This block constructs a simple, focused visualization of the Bid3 quantity time series so you can inspect liquidity behavior at the third-best bid level over a trading interval. First it creates a wide figure (16x7 inches) to give horizontal space for high-frequency or long-duration time series — this reduces overplotting and makes short-lived spikes or microstructure patterns easier to see. Enabling the grid improves visual alignment so you can judge magnitudes and timing of moves at a glance, which is important when comparing small changes in order size across many observations.

The core action is plotting the array Bid3_Quantity_16_. That variable represents the quantity available at the third bid level (likely sampled at a regular interval), and plotting it directly surfaces liquidity dynamics such as step changes when orders are placed/cancelled, predictable intra-day patterns, or outliers that could indicate fat-finger events or data issues. The label provided on the series allows the legend to identify this trace when multiple series are overlaid.

Setting x-axis limits to (0.0, 25200) intentionally crops the view to the initial window of interest — 25200 is the number of seconds in 7 hours — so this is probably meant to focus the chart on a single trading session or a fixed analysis window rather than the entire dataset. That helps avoid displaying irrelevant pre/post-market data or very long histories that would compress the structure of a single session and hide microstructure effects. Finally, placing the legend at location 1 (upper-right) ensures the label is visible without covering the main body of the series in typical layouts; if you later add more series or annotations you may need to reposition or use an automated placement to avoid overlap.

Ask1_Quantity_16_ = []

for i in range(0,25200,1):

index = np.where(array(timestamp_time_second) <= i)[0][-1]

Ask1_Quantity_16_.append(Ask1_Quantity_16[index])This loop is taking irregular, tick-level order-book updates and turning them into a fixed, one-second cadence series for the top-of-book ask quantity (Ask1). Conceptually you start with two parallel arrays: a timestamp array in seconds (timestamp_time_second) and an Ask1 quantity array sampled at those timestamps (Ask1_Quantity_16). The goal is to produce Ask1_Quantity_16_ — a length-25200 time series where each element is the most recent Ask1 value as of that whole-second timestamp.

Step by step: for each second i from 0 up to 25,199 the code finds the last index in the timestamp array whose timestamp is <= i, and then uses that index to read the corresponding Ask1 quantity and append it to the per-second output. Because the code uses the last index <= i, if there were multiple tick updates within the same second it picks the most recent one in that second; if there were no updates exactly at i it carries forward the last known value (last-observation-carried-forward). That design is intentional for quant workflows: many downstream features and risk calculations require regularly sampled inputs (returns, realized variance, microstructure features), so you need to align irregular updates onto a uniform grid.

A few important behavioral and robustness points to keep in mind. First, this is a forward-fill strategy: long gaps between ticks will produce repeated stale values for many consecutive seconds, which is appropriate when you want a snapshot series but can distort measures that assume frequent updates. Second, the current code relies on there being at least one timestamp <= i for every i; if the timestamp series starts after 0 the np.where(…)[0][-1] lookup will error. You should explicitly handle initial-empty-periods (for example, by pre-filling with NaN or the first observation). Third, the implementation is inefficient: calling np.where inside a Python loop is O(T*N) in practice and will be slow for large timestamp arrays and long time horizons. A much faster, more robust approach is to use a vectorized search on a sorted timestamp array (e.g., a searchsorted-style lookup for all integer seconds followed by a single index-based selection) or simply use a time-indexed Series/DataFrame and reindex with forward-fill. Finally, beware of variable-name confusion: Ask1_Quantity_16_ is the new list, while Ask1_Quantity_16 (without the trailing underscore) must be the original source array — ensure you don’t accidentally overwrite the source before this loop.

In short: the code produces a per-second snapshot series by carrying the last known ask quantity forward to every whole second, which is a common preprocessing step in quant trading to synchronize irregular order-book data onto a regular time grid. Make the edge-case handling explicit and switch to a vectorized method for scalability.

Ask2_Quantity_16_ = []

for i in range(0,25200,1):

index = np.where(array(timestamp_time_second) <= i)[0][-1]

Ask2_Quantity_16_.append(Ask2_Quantity_16[index])This code is building a second-by-second version of an order-book quantity series so downstream quant models or backtests can consume a uniformly sampled feature. Conceptually, the original data consists of two parallel arrays: timestamp_time_second (irregular or event-driven times in whole seconds) and Ask2_Quantity_16 (the quantity observed at those event times). The loop walks through every second from 0 up to 25,199 and, for each second i, finds the most recent event whose timestamp is less than or equal to i, then copies that event’s Ask2_Quantity_16 into the per-second output array Ask2_Quantity_16_. The effect is “last observation carried forward” (LOCF): you hold the most recently observed quantity constant until an update arrives.

How the logic flows: for each i the code uses np.where(array(timestamp_time_second) <= i)[0][-1] to locate the index of the last timestamp that does not exceed i. That index is then used to pick the corresponding Ask2_Quantity_16 value and append it to the output list. Choosing the last matching index (the [-1]) enforces the forward-fill semantics and the <= ensures that an event occurring exactly at second i is used for that second’s value.

Why this is done in quant trading: many models and backtests require fixed-frequency inputs (per-second, per-minute, etc.) even though market messages arrive asynchronously. By converting irregular event data into a regular time grid and using LOCF, you preserve the most recent market state at every evaluation time — which is usually the correct semantic for features like displayed quantity that persist until changed. That alignment is critical for feature engineering, aggregation, and ensuring model inputs and target computations are synchronized.

Important assumptions and pitfalls to watch for: the code assumes timestamp_time_second is sorted ascending and that there is at least one timestamp <= i for every i in the loop; otherwise np.where(…)[0][-1] will raise an IndexError (this often occurs if the first timestamp is greater than 0). It also assumes timestamps are integer seconds and that Ask2_Quantity_16 is indexed the same way as timestamp_time_second. Performance is another concern: this is O(T * log? or O(T * N) depending on np.where internals) because each second performs a search over the timestamps; for long time ranges or large event counts this will be slow.

Ask3_Quantity_16_ = []

for i in range(0,25200,1):

index = np.where(array(timestamp_time_second) <= i)[0][-1]

Ask3_Quantity_16_.append(Ask3_Quantity_16[index])This loop is building a regular, per-second time series of the level-3 ask quantity by mapping each integer second to the most recent observed market value at or before that second. Concretely, for every second i from 0 up to 25,199 the code finds the last index in the timestamp_time_second array whose value is <= i, then appends the Ask3_Quantity_16 value at that index to the output list. In other words, it implements a last-observation-carried-forward (forward-fill / “as-of”) resampling: every second gets assigned the latest known Ask3 quantity available up to that time.

Why this is done: many quant workflows and backtests require features sampled on a fixed time grid (per-second here) so that models, metrics, or simulators see regularly spaced inputs. Orderbook updates arrive irregularly; mapping them onto a uniform timeline with the most recent observation preserves the intraday state without inventing values between updates. Using the condition <= i ensures we pick the latest observation at or before each second, which is appropriate when you want market state “as of” that second.

Important assumptions and edge cases to be aware of: this presumes timestamp_time_second is monotonic (sorted). If it is not sorted, the “last index where <= i” does not reliably represent chronological last observation and will produce incorrect mapping. If there are seconds before the first recorded timestamp, np.where(…)[0][-1] will raise an IndexError — you should decide whether to fill those leading seconds with NaN, zeros, or a defined default. Also, if many updates occur inside the same second, the code picks the last one within that second, which is usually the desired behavior for an “as-of” view.

Performance and robustness concerns: the code calls np.where on a (re)constructed array(timestamp_time_second) every iteration, so it repeats the same scan thousands of times. That makes the routine O(T * N) in the worst case (T = 25,200 seconds, N = number of timestamps) and will be slow for typical tick-level volumes. It also continuously converts timestamp_time_second to an array if that conversion happens inside the loop. A more robust approach is to use a vectorized search (np.searchsorted on a precomputed numpy array) or to use pandas’ reindex/resample with asof/ffill functionality, both of which are O(N + T) and handle edge cases more cleanly. Finally, consider making the target time range dynamic (based on trading hours or data span) and explicitly handling leading missing values and any timezone/epoch alignment issues so downstream models and backtests see consistent, well-defined inputs.

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Ask1_Quantity_16_,label = ‘Ask1 Quantity’)

plt.xlim(0.0,25200)

plt.legend(loc = 1)This block creates a wide, readable time-series plot of the top-of-book ask volume for the instrument labeled “16” so you can visually inspect liquidity dynamics. The code first allocates a rectangular canvas (16×7 inches) and turns on a grid to give you horizontal/vertical reference lines, which makes it easier to judge the magnitude and timing of changes in the series. It then draws the series Ask1_Quantity_16_ as a single line and tags it with the label “Ask1 Quantity” so the plotted trace is self-describing in the legend.

From a data-flow perspective, matplotlib uses the series index as the x-axis by default, so the visualization shows Ask1 quantities over whatever implicit time or sample index your array carries. The explicit x-axis limit xlim(0.0, 25200) zooms the view to the integer range 0 → 25,200, which is deliberate: 25,200 seconds equals seven hours, so this constrains the plot to a single trading-session window (or any intended 7-hour slice) and ensures consistent alignment when you compare multiple sessions or instruments.

Finally, placing the legend at location 1 (upper-right) is a simple, pragmatic choice to keep the annotation out of the main plot area in most cases and to future-proof the code when you add more series. The net business purpose is to enable quick visual detection of liquidity events — spikes, dry-ups, or regime shifts at the best ask — which are critical signals for execution algorithms, market-impact estimation, and feature engineering in quant strategies. If you plan to do repeated visual QA, consider ensuring the x-axis truly represents the intended time base (seconds vs. datetimes) and, for high-frequency noisy traces, think about downsampling or smoothing to reveal mid- to larger-scale patterns.

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Ask2_Quantity_16_,label = ‘Ask2 Quantity’)

plt.xlim(0.0,25200)

plt.legend(loc = 1)This small block is a focused visualization step whose goal is to make the time evolution of the second-level ask-side quantity for a particular instrument (Ask2_Quantity_16_) immediately interpretable for a quant workflow. First, we create a wide, single-panel figure (16x7) so the intraday structure is visible with enough horizontal resolution to display many time steps; choosing a large aspect ratio is deliberate for time-series where patterns and transient spikes matter. Enabling the grid improves visual readibility of horizontal and vertical reference lines so you can quickly judge magnitudes and timing of liquidity events.

The central action is plotting Ask2_Quantity_16_ as a labeled series; the label ensures the plotted line can be identified when multiple series are shown or when saving figures for later review. The explicit x-axis limit of (0.0, 25200) constrains the view to a fixed intraday window — in practice this maps the array index to a trading-day span (25200 is likely an integer number of seconds or ticks chosen to correspond with the period of interest). Limiting the x-range focuses analysis on the main session, removes far-out indices or tails that would compress the main structure, and makes it easier to compare this plot across days or instruments on a consistent time grid.

Finally, the legend placed in the upper-right (loc=1) keeps the label unobtrusive while remaining visible, and the overall arrangement is intended to serve two operational purposes in quant trading: quick human validation of the raw order-book quantity series (spotting data quality issues, gaps, or timestamp misalignment) and exploratory detection of microstructure phenomena — e.g., liquidity depletion, periodicity, or spikes around known events — that can inform feature engineering, intraday regime classification, or trigger rules for execution algorithms.

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Ask3_Quantity_16_,label = ‘Ask3 Quantity’)

plt.xlim(0.0,25200)

plt.legend(loc = 1)This block is setting up a visual inspection of a single time series — the Ask3_Quantity_16_ — with the intention of making patterns in that order-book variable visible across a full trading session. The code first creates a wide plotting canvas (16x7) so that time-dependent structure and transient spikes are not compressed; for quant trading work you often need horizontal space to see intraday seasonality, clusters of liquidity events, and the fine structure of order-flow spikes that could become features. Turning on the grid is a deliberate readability choice: horizontal and vertical gridlines make it easier to estimate magnitudes and align events in time when you’re eyeballing relationships between order-book quantities and price moves.

Next, the series itself is rendered with a label of ‘Ask3 Quantity’, so it will appear in the legend and be identifiable when multiple series are overlaid. Plotting the Ask3 quantity (level-3 ask size) is a common diagnostic for liquidity dynamics — you’re looking for changes in resting size that precede price moves, consolidations, or episodes of liquidity withdrawal. The hard x-axis limit of 0.0 to 25200 is meaningful: it forces a consistent timescale across charts (25200 seconds = 7 hours), which is useful when you want to compare multiple days or multiple instruments on the same horizontal span. That consistency helps detect intraday patterns and align events across runs, but it also has two trade-offs: it will clip data outside that window and will show empty space if the series is shorter than 25200 indices, so you should ensure your index-to-time mapping matches this scale.

Finally, the legend is placed in location 1 (upper-right) so the plotted label is visible without overlapping typical mid-chart activity. In summary, this snippet is about turning raw Ask3 quantity data into a consistently-scaled, readable diagnostic plot to support feature discovery and event inspection in a quant trading workflow; be mindful that using integer x-limits and not converting indices to human-friendly timestamps can obscure interpretation, so consider aligning the x-axis with actual timestamps or annotating known market events when you move from exploration to production diagnostics.

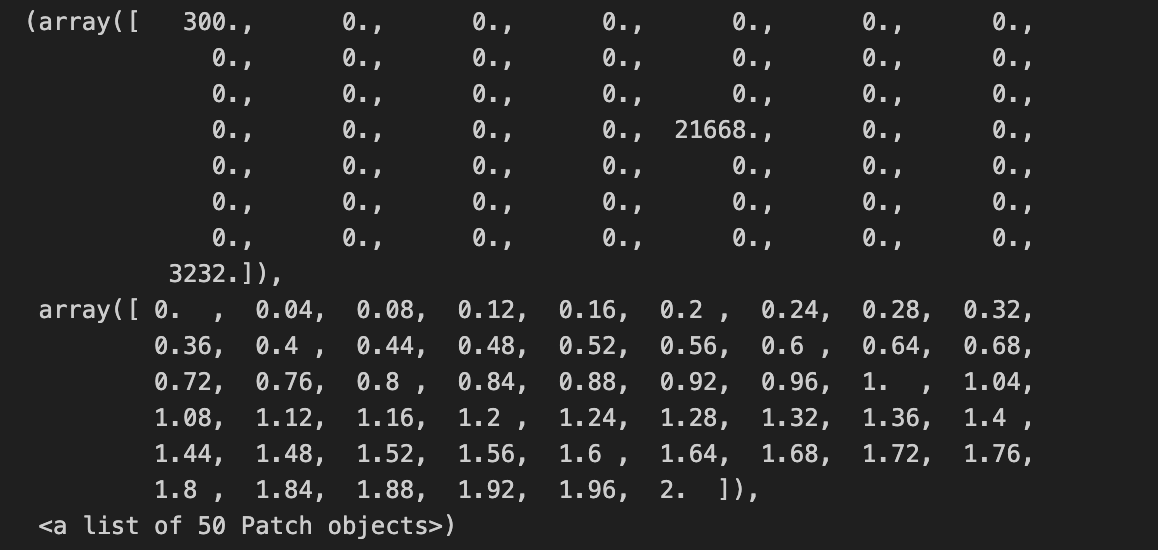

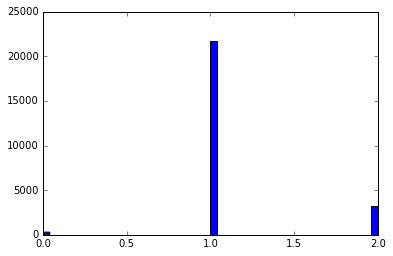

hist(array(bas_16_one_second)/5.0,bins = 50)This line takes your raw per-second series (bas_16_one_second), ensures it’s treated as a numeric array, rescales every element by dividing by 5.0, and then builds a 50-bin histogram to visualize the empirical distribution. Converting to an array first guarantees we get elementwise arithmetic (so the division produces a vector of scaled values rather than a single scalar or object-level operation), which is important if bas_16_one_second can be a list-like or other iterable. The division by 5.0 is a deliberate rescaling step — in quant trading this is usually done to convert the raw metric into a consistent unit (for example, from ticks to price change per 5 seconds, from total volume to volume per standard lot, or to normalize amplitudes so different instruments are comparable). Doing the scaling up front keeps the histogram’s bins interpretable in that target unit rather than the original raw measurement.

Choosing bins=50 is a pragmatic trade-off between resolution and noise: with 50 bins you get reasonably fine-grained detail on the distribution shape (peaks, shoulders, and tail behavior) without making each bin so narrow that sampling noise dominates. From a quant perspective the resulting histogram is used to inspect distributional properties relevant for modeling and risk — spotting heavy tails, skew, clustering or outliers that might invalidate Gaussian assumptions, influence volatility estimates, or require robust preprocessing.

min_Ask1_16_time_series = []

min_Ask1_16_time_series.append(0)

for i in range(1,len(Ask1_16),1):

min_Ask1_16_time_series.append(min(Ask1_16[i:]))This small block is building a time series of suffix minima from an Ask price sequence (Ask1_16) — in other words, for each time index it records the minimum ask price observed from that index forward. The code starts by creating an output list and appending a 0 as the first element; then it iterates i from 1 to the last index and appends min(Ask1_16[i:]) for each i. Concretely, the element at position i in min_Ask1_16_time_series (for i >= 1) is the minimum of Ask1_16 over indices [i, i+1, …, end]. The explicit 0 at position 0 is a placeholder so the output list ends up the same length as Ask1_16 — the author has chosen not to compute a suffix minimum for index 0 (or to represent it with a sentinel).

Why you would compute this in a quant context: suffix minima give you the best (lowest) ask price you could have achieved by waiting from each observation forward, which is useful for labeling supervised problems (e.g., “how low will the ask go in the future?”), estimating potential slippage or execution opportunity, or constructing event-based targets for backtests. Whether the minimum should include the current price matters for the interpretation: the current code includes Ask1_16[i] in the minimum (because it takes Ask1_16[i:]), so the suffix minimum can be equal to the current price — if you want to measure future improvement strictly after the current tick, you should use Ask1_16[i+1:].

max_Bid1_16_time_series = []

max_Bid1_16_time_series.append(0)

for i in range(1,len(Bid1_16),1):

max_Bid1_16_time_series.append(max(Bid1_16[i:]))This snippet is building a forward-looking “peak” series from an existing Bid1_16 price series: for each time index i it records the maximum bid seen from i (inclusive) to the end of the array, and it prepends a single zero to keep the output the same length as the input. Stepping through the flow: the list is created and seeded with 0 as the zeroth element; the loop starts at index 1 and for each i computes max(Bid1_16[i:]) — that is, the highest bid that occurs at or after time i — and appends that value. By the loop’s final iteration (i == len(Bid1_16) — 1) it appends the last element of Bid1_16, so the resulting list has one entry per original timestamp.

Why you would do this in quant trading is straightforward: you often need a label or feature that captures the best possible future price movement from a given entry point (for example to estimate potential profit, the peak reachable after entry, or to determine whether a price will ever exceed a threshold after a given time). This code produces that “future-peak” signal for each time point (except the zeroth index, which this code currently represents with a zero placeholder).

There are a couple of important behavioral and correctness details to be aware of. First, the choice to seed the series with 0 is a design decision that can materially affect downstream decisions or model training: zero may be an inappropriate placeholder if bids are strictly positive (it will artificially lower metrics) or if you expect NaN/missing to indicate absence of future information. Second, the loop uses max(Bid1_16[i:]) which includes the current index i in the future window; if your intent is to measure the maximum strictly after the current timestamp, you should shift the slice to i+1. Third, this implementation is O(n²) in time and allocates many intermediate slices, because each iteration computes a new max over an increasingly large suffix; for long tick series that will be slow and memory inefficient.

To address performance and robustness, compute the forward maximum in a single O(n) pass from right to left: maintain a running max, update it with each element as you traverse backwards, and store the running max values (then reverse if needed). That avoids repeated slicing and is linear time with constant extra memory overhead beyond the output. Also consider whether the initial placeholder should be NaN, the full-array max, or omitted, and be explicit about whether the window is inclusive or exclusive of the current timestamp — align that choice with how you will use this series in labeling, backtesting, or feature engineering so you do not leak future information or bias model targets.

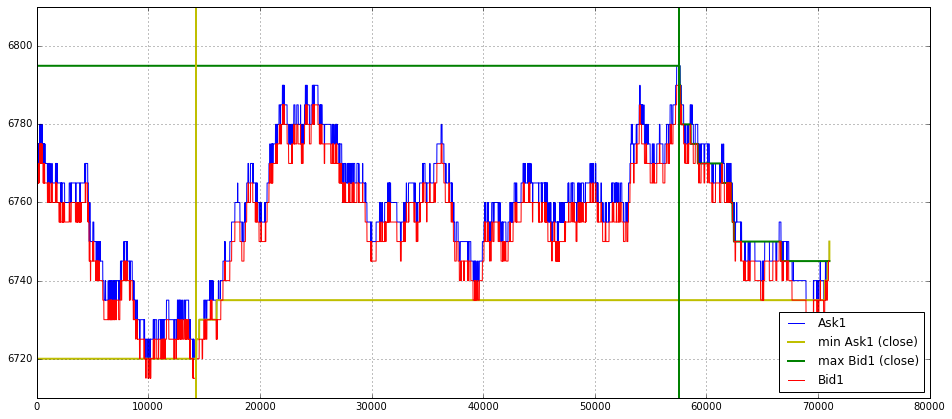

import matplotlib.pyplot as plt

plt.figure(figsize = (16,7))

plt.grid()

plot(Ask1_16[0:len(Ask1_16)],label = ‘Ask1’,color = ‘b’)

#plot(Ask2[0:data_trade_time_series_0900_0930],label = ‘Ask2’)

#plot(Ask3[0:data_trade_time_series_0900_0930],label = ‘Ask3’)

plot(min_Ask1_16_time_series[0:len(Ask1_16)],label = ‘min Ask1 (close)’, linewidth = 2,color = ‘y’)

plot(max_Bid1_16_time_series[0:len(Ask1_16)],label = ‘max Bid1 (close)’, linewidth = 2,color = ‘g’)

plot(Bid1_16[0:len(Ask1_16)],label = ‘Bid1’,color = ‘r’)

#plot(Bid2[0:data_trade_time_series_0900_0930],label = ‘Bid2’)

#plot(Bid3[0:data_trade_time_series_0900_0930],label = ‘Bid3’)

plt.ylim(6710,6810)

plt.legend(loc = 4)