Recurrent Neural Networks With Custom Attention Layers in Keras

In the past few years, deep learning networks have become increasingly popular.

Integrating an attention mechanism is one way to improve these networks. Attention components have led to better results in machine translation, image recognition, text summarization, and similar tasks.

This tutorial shows how to add a custom attention layer to a network built with a recurrent neural network. Using a very simple dataset, we will demonstrate the end-to-end process of time series forecasting. The same approach of adding user-defined layers to a deep learning network can then be carried over to more complex applications.

After completing this tutorial, you will know:

Which methods are required to create a custom attention layer in Keras

How to incorporate the new layer in a network built with SimpleRNN

Now let’s get started.

The Dataset

Our goal in this article is to understand how a custom attention layer can be added to a deep learning network. To keep the data simple, we use the Fibonacci sequence, in which each number is the sum of the two numbers before it. The first ten numbers of the sequence are:

0, 1, 1, 2, 3, 5, 8, 13, 21, 34, …

Given the previous t numbers of the sequence, can a machine correctly reconstruct the next number? Doing so means discarding all but the last two inputs and adding those two numbers together.

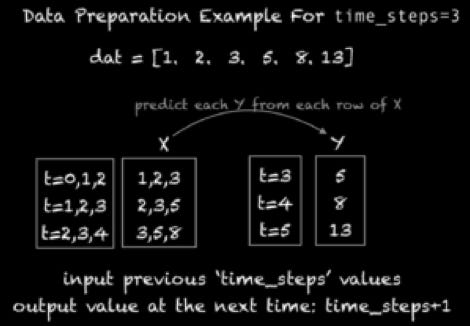

In this tutorial, we will construct each training example from t consecutive time steps and use the value at time step t+1 as the target. For example, if t=3, each training example consists of three consecutive numbers of the sequence, and its target is the number that follows them.
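The windowing described above can be illustrated with a small sketch. It uses plain Python only, and the helper names `fibonacci` and `make_windows` are hypothetical names chosen for this illustration rather than functions from the tutorial's code.

```python
def fibonacci(n):
    """Return the first n Fibonacci numbers, starting from 0, 1."""
    seq = [0, 1]
    while len(seq) < n:
        seq.append(seq[-1] + seq[-2])
    return seq[:n]


def make_windows(seq, t):
    """Pair each run of t consecutive values with the value that follows it."""
    return [(seq[i:i + t], seq[i + t]) for i in range(len(seq) - t)]


# Build the (training example, target) pairs for t=3 from the first ten numbers.
examples = make_windows(fibonacci(10), t=3)
for window, target in examples:
    print(window, "->", target)
```

With the ten numbers listed earlier, the first pair is [0, 1, 1] with target 2 and the last is [8, 13, 21] with target 34.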

The Simple RNN Network

In this section, we will write the basic code to generate the dataset and use a SimpleRNN network to predict the next number of the Fibonacci sequence.

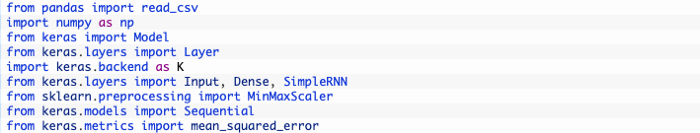

The Import Section

Let's first write the import section:

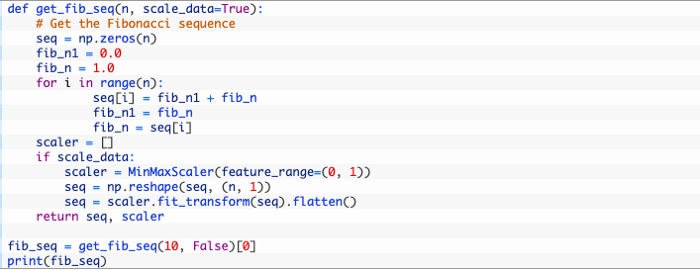

Preparing The Dataset

The following function generates a sequence of n Fibonacci numbers (not counting the first two values, 0 and 1). If the scale_data parameter is set to True, it also scales the values between 0 and 1 using the MinMaxScaler class from scikit-learn. Here is what it produces with n=10.

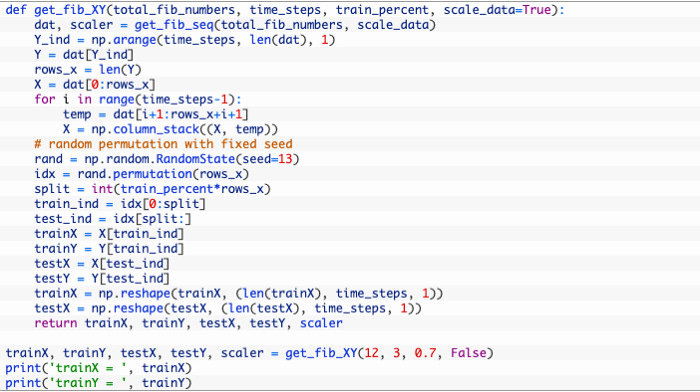
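For readers who cannot see the listing, here is a minimal sketch of such a generator. The function name `get_fib_seq`, its signature, and the choice to return `None` when no scaling is requested are assumptions made for this illustration; only the behavior described in the text above is taken from the tutorial.

```python
import numpy as np


def get_fib_seq(n, scale_data=True):
    """Return n Fibonacci numbers (skipping the leading 0 and 1) and a scaler.

    The name and return convention are assumptions for this sketch.
    """
    seq = np.zeros(n)
    prev, curr = 0.0, 1.0
    for i in range(n):
        seq[i] = prev + curr          # next Fibonacci number
        prev, curr = curr, seq[i]
    scaler = None
    if scale_data:
        # Imported here so that the unscaled path needs only NumPy.
        from sklearn.preprocessing import MinMaxScaler
        scaler = MinMaxScaler(feature_range=(0, 1))
        # MinMaxScaler expects a 2-D array of shape (n_samples, n_features).
        seq = scaler.fit_transform(seq.reshape(-1, 1)).flatten()
    return seq, scaler


fib_sequence, _ = get_fib_seq(10, scale_data=False)
print(fib_sequence)
```

Called with n=10 and no scaling, this sketch yields the values 1, 2, 3, 5, 8, 13, 21, 34, 55, 89 as floats.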

Our next step is to create a function get_fib_XY() that reformats the sequence into training examples and target values for Keras. Given the time_steps parameter, get_fib_XY() constructs each row of the dataset with time_steps columns. Besides building the training and test sets from the Fibonacci sequence, the function shuffles the training examples and reshapes them into the three-dimensional format that Keras recurrent layers expect: number of samples x number of time steps x number of features. In addition, if scale_data is set to True, the function returns the scaler object that was used to scale the values.

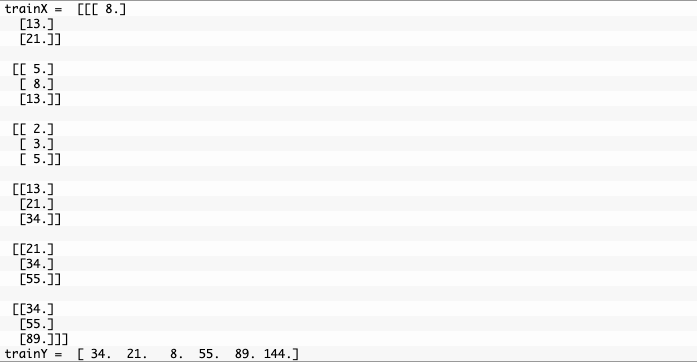
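The steps described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the tutorial's exact listing: the parameter names `total_fib_numbers`, `train_percent`, and `seed`, the helper `fib_seq`, and the order of the returned values are all assumed here.

```python
import numpy as np


def fib_seq(n):
    """n Fibonacci numbers, skipping the leading 0 and 1 (hypothetical helper)."""
    seq = np.zeros(n)
    prev, curr = 0.0, 1.0
    for i in range(n):
        seq[i] = prev + curr
        prev, curr = curr, seq[i]
    return seq


def get_fib_XY(total_fib_numbers, time_steps, train_percent,
               scale_data=True, seed=13):
    """Sketch only: signature and return order are assumptions."""
    dat = fib_seq(total_fib_numbers)
    scaler = None
    if scale_data:
        # Imported here so that the unscaled path needs only NumPy.
        from sklearn.preprocessing import MinMaxScaler
        scaler = MinMaxScaler(feature_range=(0, 1))
        dat = scaler.fit_transform(dat.reshape(-1, 1)).flatten()

    # Row i holds dat[i : i + time_steps]; its target is dat[i + time_steps].
    n_rows = len(dat) - time_steps
    X = np.stack([dat[i:i + time_steps] for i in range(n_rows)])
    Y = dat[time_steps:]

    # Shuffle the row indices with a fixed seed, then split into train and test.
    idx = np.random.RandomState(seed).permutation(n_rows)
    split = int(train_percent * n_rows)
    train_idx, test_idx = idx[:split], idx[split:]

    # Keras recurrent layers expect input of shape (samples, time_steps, features).
    trainX = X[train_idx].reshape(len(train_idx), time_steps, 1)
    testX = X[test_idx].reshape(len(test_idx), time_steps, 1)
    return trainX, Y[train_idx], testX, Y[test_idx], scaler


trainX, trainY, testX, testY, _ = get_fib_XY(12, 3, 0.7, scale_data=False)
print(trainX.shape, testX.shape)
```

Because every target is the sum of the last two values in its row, a quick sanity check on the unscaled output is that `trainX[:, -1, 0] + trainX[:, -2, 0]` equals `trainY`.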

Let's test this function on a small set of Fibonacci numbers to see what the training set looks like. Here time_steps is set to 3 and total_fib_numbers is set to 12, with approximately 70% of the examples going to the training set. The permutation() function has shuffled both the training and test examples.

Setting Up The Network

Let's now set up a small network with two layers: a SimpleRNN layer followed by a Dense layer. The model is summarized below.
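A two-layer network of this kind could be set up along the following lines. The function name `create_rnn`, the layer sizes (2 recurrent units, 1 output unit), the 20 time steps, the tanh activations, and the mean-squared-error loss with the Adam optimizer are all assumptions made for this sketch, since the text does not state them.

```python
from tensorflow.keras import Input
from tensorflow.keras.layers import Dense, SimpleRNN
from tensorflow.keras.models import Sequential


def create_rnn(hidden_units, dense_units, time_steps, activation="tanh"):
    """Build a SimpleRNN layer followed by a Dense layer (sketch only)."""
    model = Sequential([
        Input(shape=(time_steps, 1)),            # one feature per time step
        SimpleRNN(hidden_units, activation=activation),
        Dense(dense_units, activation=activation),
    ])
    # Assumed training setup: mean squared error with the Adam optimizer.
    model.compile(loss="mse", optimizer="adam")
    return model


# Assumed sizes: 2 recurrent units, 1 output unit, 20 time steps.
model = create_rnn(hidden_units=2, dense_units=1, time_steps=20)
model.summary()
```

With these assumed sizes, the SimpleRNN layer has 8 trainable parameters (2 input weights, 4 recurrent weights, 2 biases) and the Dense layer has 3, for a total of 11.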