TV Script Generation Using Machine Learning

In this project, we will generate your own simpsons TV script using RNNs. You will be using the part of Simpsons dataset of script from 27 seasons.

The Neural Network you will build will generate a new TV script for a scene at Moe’s Town.

Get Data

The data is already there, which you can download. You will be using a subsset of the original dataset. It contains of only the scenes in Moe’s Town. This doesn’t include other versions of the tavern, like Moe’s Cavern.

"""

DON'T MODIFY ANYTHING IN THIS CELL

"""

import helper

data_dir = './data/simpsons/moes_tavern_lines.txt'

text = helper.load_data(data_dir)

# Ignore notice, since we don't use it for analysing the data

text = text[81:]First, the code imports a helper library that will be used in the rest of the code. Then, it sets the data directory to the file path of the tv script data. This data is then read and loaded into the variable text using the helper library function load_data. A comment states that a notice is being ignored, as it is not needed for analysis. Finally, the last line of the code removes the first 81 characters from the text. This may be used to remove any unnecessary information or titles from the data, leaving only the actual script lines.

Explore The Dataset

Let’s play around with some view_sentance_range to view different parts of the data.

view_sentence_range = (0, 10)

"""

DON'T MODIFY ANYTHING IN THIS CELL

"""

import numpy as np

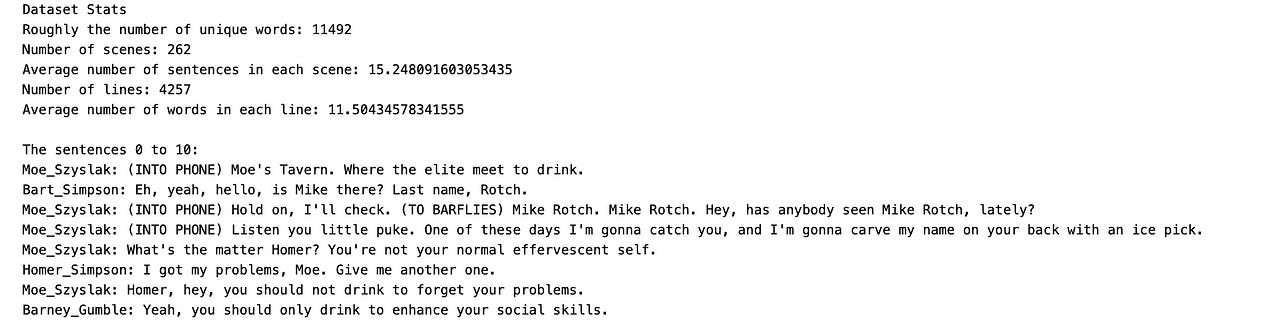

print('Dataset Stats')

print('Roughly the number of unique words: {}'.format(len({word: None for word in text.split()})))

scenes = text.split('\n\n')

print('Number of scenes: {}'.format(len(scenes)))

sentence_count_scene = [scene.count('\n') for scene in scenes]

print('Average number of sentences in each scene: {}'.format(np.average(sentence_count_scene)))

sentences = [sentence for scene in scenes for sentence in scene.split('\n')]

print('Number of lines: {}'.format(len(sentences)))

word_count_sentence = [len(sentence.split()) for sentence in sentences]

print('Average number of words in each line: {}'.format(np.average(word_count_sentence)))

print()

print('The sentences {} to {}:'.format(*view_sentence_range))

print('\n'.join(text.split('\n')[view_sentence_range[0]:view_sentence_range[1]]))This block of code sets a range for viewing sentences, imports the numpy library, prints the stats of the dataset including the number of unique words, the number of scenes, the average number of sentences in each scene, and the number of lines. It also calculates the average number of words in each line. The view_sentence_range variable is used to view a specific set of sentences, and the code prints out those sentences.

Implement Preprocessing Functions

The first thing to do to any dataset is preprocessing. Implement the following preprocessing functions below:

Lookup Table

Tokenize Punctuation

import numpy as np

import problem_unittests as tests

from collections import Counter

def create_lookup_tables(text):

"""

Create lookup tables for vocabulary

:param text: The text of tv scripts split into words

:return: A tuple of dicts (vocab_to_int, int_to_vocab)

"""

# TODO: Implement Function

word_counts = Counter(text)

sorted_vocab = sorted(word_counts, key=word_counts.get, reverse=True)

int_to_vocab = {ii: word for ii, word in enumerate(sorted_vocab)}

vocab_to_int = {word: ii for ii, word in int_to_vocab.items()}

return vocab_to_int, int_to_vocab

"""

DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE

"""

tests.test_create_lookup_tables(create_lookup_tables)This code first imports the numpy library and the problem_unittests module. It also imports the Counter class from the collections library. Next, there is a function called create_lookup_tables which takes a parameter called text. This function is used to create lookup tables for vocabulary. The first step in the function is to create a Counter object called word_counts which counts the frequency of each word in the input text. Then, the function sorts the vocabulary in descending order based on the frequency of each word, using the sorted function and passing in the key and reverse parameters. The result is stored in a list called sorted_vocab. Next, two dictionaries are created — int_to_vocab and vocab_to_int. These dictionaries serve as the lookup tables for translating between words and their corresponding integer values. The keys in int_to_vocab are integers, while the keys in vocab_to_int are words. The values in both dictionaries correspond to each other, with integers representing the words and vice versa. Finally, the function returns a tuple containing the two dictionaries — vocab_to_int and int_to_vocab. The last part of the code is just a comment reminding the user not to modify the code below it, followed by a test to ensure that the function is working as expected.